THEWHITEBOX

TL;DR

Welcome back! This week, we cover a wide range of news: Will Smith’s unexpected AI controversy, OpenAI’s new model, a deep analysis of Meta’s upcoming Manhattan-sized data center, and NVIDIA’s earnings, among other stories.

Finally, we examine Google’s new model’s impressive capabilities, which lead us to question whether text-based AIs are the focus we should be paying attention to, as a new revolution in image and video technology might be around the corner.

Enjoy!

Things You’ve Missed By Not Being Premium

On Tuesday, we took a look at the new hot product in Silicon Valley: browser agents. We also discussed Cohere’s great reasoning model, Google’s SOTA image generation model, and the growing concern that AI price reductions are stagnating, among other cool stuff.

MODELS

ChatGPT RealTime API

Literally minutes ago, OpenAI announced a new speech-to-speech model and the Realtime API, offering a low-latency audio service that is truly impressive.

It’s important to note that this isn’t an amalgam of models but a single, end-to-end model that takes in speech (and, notably, images, which was very impressive to see) and returns speech.

Unsurprisingly, the model is RL-trained, meaning it has been optimized to achieve specific goals. In particular, one of these goals seems to be steerability, allowing the user to steer the model’s prosody, tone, excitement, character, and so on.

TheWhiteBox’s takeaway:

Is this the final piece in the customer support puzzle?

The live demo included a demonstration with T-Mobile, showing how they are utilizing these models to handle customer requests for device upgrades. I was particularly surprised by how effective the interruption system is; you can stop the model with ease, and it complies immediately, something that was not the case beforehand.

The model also excels at following instructions, a crucial requirement for enterprise-grade applications, and it’s considerably resistant to nefarious user intent to deviate from system prompt instructions. That is, if you’re deploying a customer support agent, you can define clear instructions that the model will not deviate from.

The model is also ‘agent-ready’, meaning that it will not only communicate effectively but also execute tools (both OpenAI-native connectors, such as Gmail, and the broader MCP ecosystem).

So, are we finally ready to automate the entire support process? Perhaps, but as someone fortunate enough to speak two languages, English and Spanish, I can assure you that the quality in Spanish is not even close to the quality in English, which makes me believe that this is probably the case for every language other than English.

VIDEO

Will Smith Accused of Using AI

Will Smith has been accused of using AI in a promotional video for his tour. The video features several crowd shots, including some highly suspicious faces.

TheWhiteBox’s takeaway:

It’s clearly AI; there is no possible debate. The faces appear distorted, clearly not those of humans. It’s a terrible look for Will Smith, but it says more about the future that awaits us:

We are about to be bombarded with AI content.

Will got caught because video-generation models are still not quite there, but in just a few months, video generation will be basically impossible to detect without watermarks.

I believe AI-generated content should be disclosed when used; we must remain capable of differentiating between what’s real and what isn’t. Otherwise, many people would lose trust in social media content. Who would want to see Instagram if we know that 90% of it is not even real? I wouldn’t.

RESEARCH

Prime Intellect Launches Environment Hub

Prime Intellect, an AI cloud company, has released an Environment Hub for Reinforcement Learning training. As we have discussed many times, models today are trained using a method that utilizes a reward system to reinforce good model actions and penalize bad ones, thereby helping the model learn what works.

The hub also doubles as an evaluation hub to test models on certain evals, making it a one-stop shop to train and test models.

TheWhiteBox’s takeaway:

This is an important release because environments are severely underexplored.

The issue is that most mindshare is focused on either models or reward systems, but little on the environments where these models are trained, which are equally important (the more closely the training environment resembles the real one, the better the results; this is particularly important in areas like robotics).

Releasing an open-source environment hub where people can access environments or publish new ones is a great way to incentivize more research in this area. Without the proper environment, models and reward systems are ineffective; they won’t learn the necessary skills to work effectively.
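
To make the model, reward, and environment split concrete, here is a minimal sketch of what an environment is. It is a toy illustration, not Prime Intellect's API: `LineWorld`, `run_episode`, and the reward values are all hypothetical names and numbers chosen for this example. The environment decides what the agent observes and which actions get reinforced or penalized.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class LineWorld:
    """Hypothetical toy environment: start at position 0, try to reach `goal`."""
    goal: int = 3
    max_steps: int = 10
    position: int = 0
    steps: int = 0

    def reset(self) -> int:
        """Start a new episode and return the first observation."""
        self.position = 0
        self.steps = 0
        return self.position

    def step(self, action: int) -> Tuple[int, float, bool]:
        """Apply an action (+1 = right, -1 = left).

        Returns (observation, reward, done).
        """
        if action not in (-1, 1):
            raise ValueError("action must be -1 or +1")
        self.position += action
        self.steps += 1
        if self.position == self.goal:
            # Reinforce the good outcome: reaching the goal ends the episode.
            return self.position, 1.0, True
        # Penalize every step that has not reached the goal yet.
        done = self.steps >= self.max_steps
        return self.position, -0.1, done


def run_episode(env: LineWorld, policy: Callable[[int], int]) -> float:
    """Run one episode with `policy` (observation -> action); return total reward."""
    obs = env.reset()
    total, done = 0.0, False
    while not done:
        obs, reward, done = env.step(policy(obs))
        total += reward
    return total


if __name__ == "__main__":
    print("always right:", run_episode(LineWorld(), lambda obs: 1))
    print("always left: ", run_episode(LineWorld(), lambda obs: -1))
```

A policy that walks toward the goal ends with a positive total reward, while one that walks away only accumulates penalties. If the environment's rules do not resemble the real task, the behavior it reinforces will not transfer, however good the model or the reward design.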

JOBS

Google has cut 35% of Small-Team Manager Jobs

In a town‑hall gathering held last week (late August 2025), Brian Welle, Google’s Vice President of People Analytics and Performance, revealed that the company has reduced the number of managers overseeing tiny teams by approximately one‑third, or 35%, compared to a year ago. These managers typically led teams of fewer than three individuals. Rather than exiting the company, many of those affected transitioned into individual contributor roles.

CEO Sundar Pichai explained that this restructuring is part of a broader effort to drive efficiency and agility as Google scales. In layman’s terms, they are saying not all problems are solved by hiring. This initiative builds upon previous cuts: in December, managerial, director, and VP roles had already been reduced by around 10%.

Complementing the management cuts, Google has rolled out voluntary exit programs (VEPs) across 10 different product areas, including search, marketing, hardware, and people operations.

Between 3% and 5% of employees within those divisions have opted for the buyouts so far. Company leadership positioned these programs as a more compassionate alternative to mass layoffs, offering employees control and autonomy in their career decisions.

TheWhiteBox’s takeaway:

It’s clear Google has been very careful about the PR side of this news. They emphasize role changes and voluntary buyouts rather than handling the issue the old way: mass layoffs.

But perhaps more importantly, it points to the job I’ve long believed is most exposed to current AI progress: corporate managers.

This is a very broad spectrum of people, but within this cohort, there’s a decent number whose job is measured by the number of meetings they have that day. In some cases, they aren’t even accountable for specific projects; they are merely spectators, if not outright pretending to be useful.

More than a year ago, I wrote the “Am I getting steamrolled by AI” framework, which holds up to this day. In it, I argued that the probability of being substituted was proportional to how hard it was to define your job’s outcomes and, crucially, measure them.

Put simply, if your boss struggles to explain to their boss why you’re essential, falling back on things like “they are a great teammate” or “hard worker” instead of hard statistics and demonstrable measurements, you will be first in line to be substituted by an AI.

In that framework, which I recommend you take a look at, I mentioned that if you were in that position, you should be talking to your boss to redefine how you’re evaluated, making sure you can be “hard measured,” instead of “vibe measured”.

To be clear, I’m not saying these people aren’t valuable; I’m saying their job is to enhance others (help them get the job done), which sounds eerily close to what AI promises. And even if the reality isn’t quite there, that’s not the point; the crux of the issue is whether your boss's boss thinks AI is there.

Every job has its own particularities, but if I were a CEO being pushed by the board to make some cuts, here is where I would be looking first. Sorry if that sounds harsh to you, but I’m not here to make you feel better; I'm here to help you become more aware of your strengths and weaknesses.

TRUMP ADMINISTRATION

Trump Makes $50 Billion Claim about Meta’s Data Center

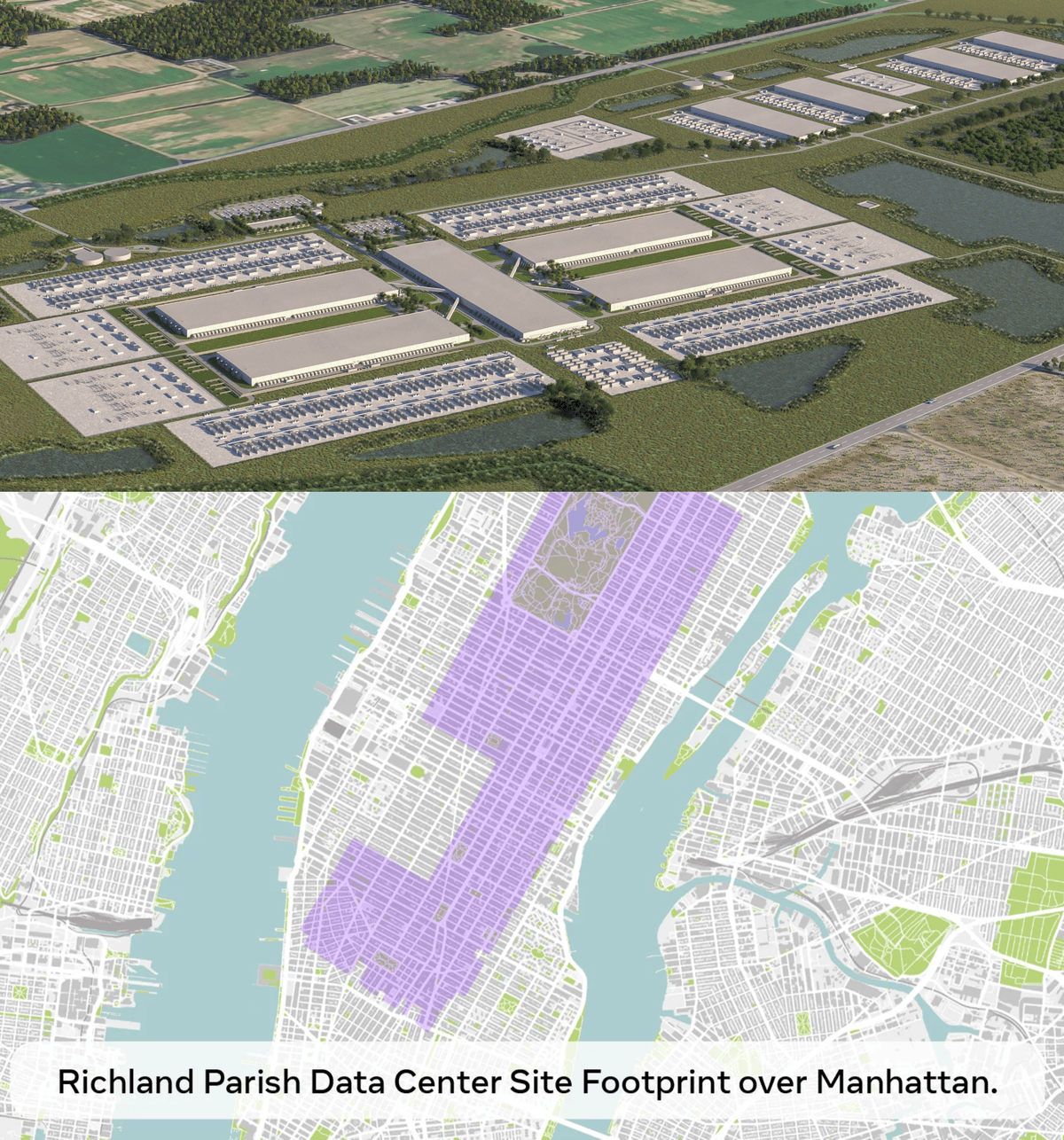

President Donald Trump on Tuesday claimed Meta Platforms’ massive data center project in Richland Parish, Louisiana, will carry a $50 billion price tag.

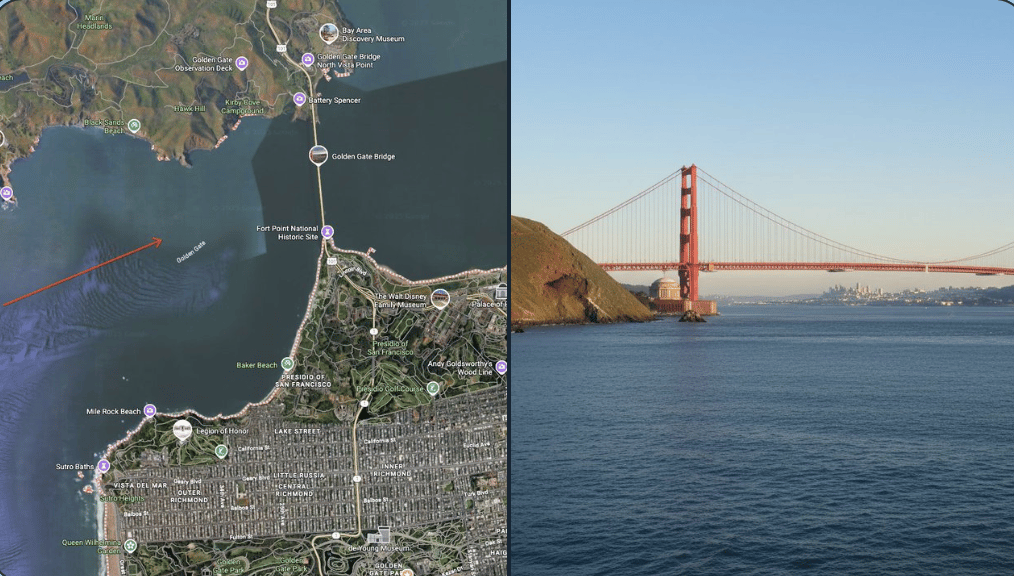

“When they said $50 billion for a plant, I said, ‘What the hell kind of plant is that?’” Trump remarked, before adding that the scale made sense once he saw a rendering of the facility. He said the image (shared with him by CEO Mark Zuckerberg) showed Meta’s Louisiana campus superimposed over Manhattan (shown above).

Meta originally announced the project in December, pledging more than $10 billion in investment and projecting it could reach 2 gigawatts of capacity by 2030. By July, the company suggested the campus might scale as high as 5 gigawatts, though it did not update its cost estimate.

Meta is not financing the buildout on its own. Bloomberg has reported the company secured nearly $30 billion in funding for the site, primarily through debt. They did not respond to a request for comment.

TheWhiteBox’s takeaway:

With CAPEX estimates for 2026 nearing $400 billion, considering all Hyperscalers and the inclusion of Oracle, this number is not particularly surprising, even if the investment, if it were to materialize, is a long-term commitment extending up to 2030.

However, we must take these numbers with a pinch of salt. These aren’t fully committed values, just estimates. In fact, only $30 billion appears to have been secured, and even that amount is likely heavily contingent upon factors such as letters of intent (LOIs), which reserve Meta's right to hold back if necessary (a tactic Microsoft has employed in the past).

The numbers serve the President well, enabling him to score media victories in his ongoing campaign to encourage major companies to invest heavily in the US.

However, I remain skeptical about the veracity of these values, as numerous factors could be at play.

Without a doubt, energy constraints are the most significant risk. As Casey Handmer explained in the Dwarkesh Podcast, there’s no way these data centers are going to be plugged into Louisiana’s electricity grid. Instead, it’s almost certain that Meta will have to build a literal power plant on-site to handle the up to 5 Gigawatts this data center is expected to require.

Casey bets on solar as the key enabler due to its significantly lower lead times compared to other options, such as nuclear. I agree a potential explosion in solar interest might be approaching.

To provide some context on the size of this project, every gigawatt of continuous load is equivalent to the consumption of roughly 834,000 homes, assuming the average US home consumes 10,500 kWh per year. Assuming 3.6 persons per home (two parents plus the current US fertility rate of 1.6 children per woman), a 5-gigawatt data center would then consume as much energy as 15 million people, more than LA’s entire metropolitan population (12.9 million).
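
The arithmetic behind those figures can be reproduced with a short sketch. It assumes the data center draws its full capacity around the clock (a simplification) and reuses the figures from the text: 10,500 kWh per home per year and 3.6 persons per home. The function names are made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def homes_powered(gigawatts: float, kwh_per_home_per_year: float = 10_500) -> float:
    """Homes whose yearly consumption equals a constant load of `gigawatts`."""
    kwh_per_year = gigawatts * 1_000_000 * HOURS_PER_YEAR  # 1 GW = 1,000,000 kW
    return kwh_per_year / kwh_per_home_per_year


def people_equivalent(gigawatts: float, persons_per_home: float = 3.6) -> float:
    """People living in the homes that `gigawatts` of constant load could supply."""
    return homes_powered(gigawatts) * persons_per_home


if __name__ == "__main__":
    print(f"{homes_powered(1):,.0f} homes per gigawatt")
    print(f"{people_equivalent(5) / 1e6:.1f} million people for 5 GW")
```

One gigawatt running all year is 8.76 billion kWh, which is where the roughly 834,000 homes come from; multiplying by 5 gigawatts and 3.6 persons per home gives the 15 million figure.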

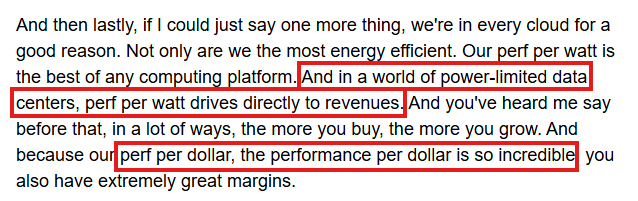

If you read between the lines, this is precisely what Jensen is alluding to in the passage below. Performance per watt, where NVIDIA excels, is crucial in energy-constrained places like the US (not so much in China, as we have discussed multiple times).

Even if edge AI (AI workloads running on local environments, rather than cloud data centers) becomes prominent in the overall scheme of things, these local environments are still connected to the grid, so decreasing the cost per watt remains the ultimate determining factor regarding AI’s viability.

Build. More. Power.

PUBLIC COMPANIES

NVIDIA somehow disappoints

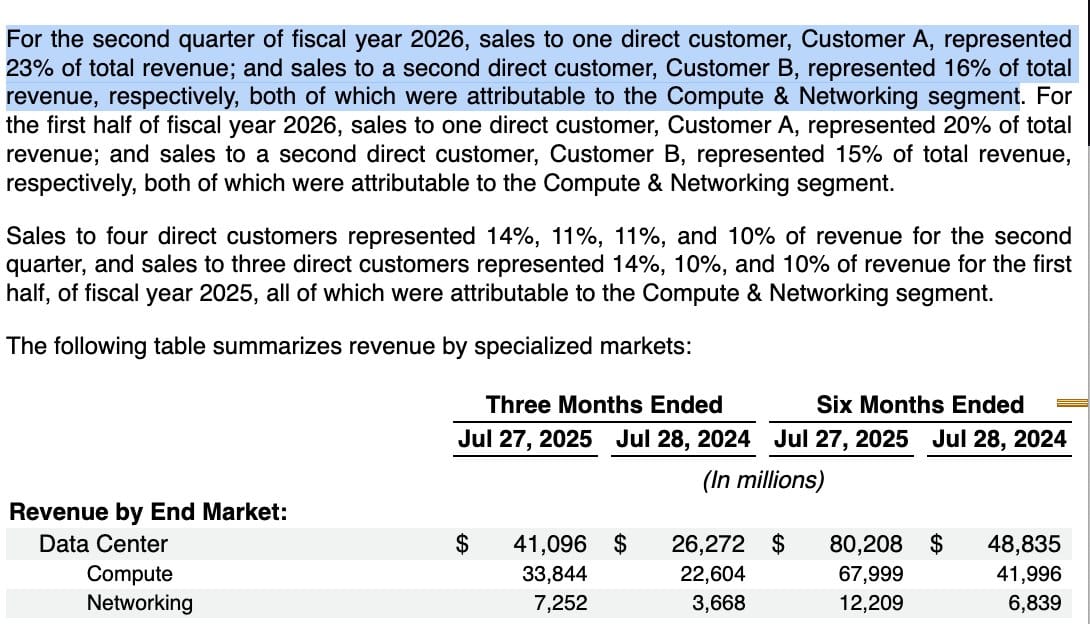

NVIDIA has presented its latest earnings, and it somewhat disappointed. Its fiscal Q2 data center revenue hit $41 billion, up 56% year over year.

While this marks its slowest growth rate in over two years, the figure still dwarfs the performance of other tech majors. The slight revenue miss versus Wall Street expectations triggered a modest stock dip.

Looking ahead, NVIDIA projects a record $7.3 billion quarter-over-quarter jump in revenue, fueled by strong demand for its new Blackwell chips. The next generation is expected to solidify NVIDIA’s lead in AI infrastructure, further extending its dominance over rivals. Gross margins remain exceptionally strong at 72%, far above pre-AI norms, and the company expects them to hold in the mid-70s through year-end.

TheWhiteBox’s takeaway:

NVIDIA still seems to be enjoying strong pricing power. I’m particularly impressed by how resilient their gross margins are, despite the growing pressure to deploy more HBM chips into each GPU, a costly component (upwards of $110 per GB) that should erode margins (I assume they are passing the cost on to customers).

The takeaway for me here is the absurdly high standards Wall Street is using to measure NVIDIA, clearly reflecting extreme skepticism, or at the very least, strong uneasiness among public investors regarding AI and its lackluster enterprise adoption.

Maybe the fact that the company is extremely customer-concentrated, with two customers accounting for 44.4% of NVIDIA’s total revenue from the data center business in Q2, doesn’t help.

Furthermore, MIT’s recent “95% of AI implementations fail” study, which is, as we discussed, nothing but textbook sensationalism, isn’t helping either.

With that said, there are objective reasons to doubt NVIDIA’s stock growth potential. For one, even while downsizing their revenue projections, rivals like Groq or Cerebras are offering unique value propositions that, in some instances, are objectively superior to NVIDIA’s GPUs, and this competitive pressure will only get worse for NVIDIA.

Another tension for NVIDIA comes from China. Despite Trump allowing the sale of H20s to Chinese companies, the CCP (Chinese Communist Party) rejected them after Trump demanded that NVIDIA place ‘trackers’ in the servers to prevent (or identify) GPU smuggling, and the orders were canceled. Therefore, the future of NVIDIA’s China business, which once accounted for nearly a quarter of sales, remains as unclear as ever.

But the biggest risk is AMD. Coming in 2026, AMD should be ready to offer its first NVIDIA-scale server, which will naturally reduce NVIDIA’s market share, as AMD is far cheaper despite very similar specs (making it better on performance per dollar). NVIDIA is extensively scrutinized by investors on its gross margins, so I’m not sure they are very willing to enter a price battle with AMD.

Instead, they will rely on their brand and, as of today, superior software as justification for being more expensive. That is a valid justification (GPU software is key, despite being a hardware market), but let’s not forget what we analyzed in AMD’s deep dive: AMD is heavily relying on partners like Tinybox’s genius leader, George Hotz, to improve its software.

If they deliver, and if AMD’s upcoming MI400x server, which is expected to be competitive with NVIDIA’s next-generation GPU platform, Rubin (coming after Blackwell), also lives up to its promises, there’s really no moat for NVIDIA at that point (even Sam Altman praised the potential release). That is why I’m so bullish on AMD.

It’s essential to understand why this upcoming server is crucial for AMD (and NVIDIA). In AI, there are two types of AI workloads: training and inference.

Training is not latency sensitive (how long a token takes to be outputted isn’t that relevant, if at all). Instead, what matters is how many sequences we can process in parallel, given the vast amounts of text data the model must go through.

Therefore, training workloads tend to encompass a large set of GPU servers (a server is a group of tightly-connected GPUs) communicating with each other, reaching up to 200,000 concurrent GPUs in training models like Grok-4. Increasing the number of connected servers is called, in industry parlance, “scaling out”, which becomes essential for training.

On the other hand, inference is latency sensitive, so you want to keep workloads intra-server (because server-to-server communication is prohibitively slow). Increasing the number of GPUs in a server is called “scaling up,” and, as you can guess, it is the key metric in inference workloads.

Current AMD servers have a serious scale-up limitation, supporting only up to 8 GPUs per server compared to NVIDIA’s 72, which makes them unsuitable for large-scale inference workloads, the predominant workload since the arrival of reasoning models. Hopefully, AMD’s MI400x server will match the 144 tightly connected GPUs expected for NVIDIA’s Rubin server, correcting this critical flaw and potentially calling NVIDIA’s dominance into question.
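
A rough sketch shows why the scale-up size matters. It uses the server sizes mentioned above (8, 72, and 144 GPUs) and the 200,000-GPU training figure; the 64-GPU inference deployment is a made-up example, and the function names are hypothetical.

```python
import math


def servers_needed(total_gpus: int, gpus_per_server: int) -> int:
    """Number of servers (scale-up domains) required to host `total_gpus`."""
    return math.ceil(total_gpus / gpus_per_server)


def fits_in_one_server(gpus_required: int, gpus_per_server: int) -> bool:
    """True if a workload can stay intra-server, avoiding slow server-to-server hops."""
    return gpus_required <= gpus_per_server


if __name__ == "__main__":
    inference_gpus = 64        # hypothetical deployment size, for illustration only
    training_gpus = 200_000    # cluster size cited in the text
    for size in (8, 72, 144):
        print(
            f"{size:>3} GPUs/server | "
            f"inference fits in one server: {fits_in_one_server(inference_gpus, size)} | "
            f"servers for training: {servers_needed(training_gpus, size):,}"
        )
```

With 8 GPUs per server, the hypothetical 64-GPU deployment must span 8 servers and pay the server-to-server latency cost, while a 72-GPU or 144-GPU server keeps it in a single scale-up domain. For training, a larger server simply reduces the number of servers that have to be scaled out.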

Overall, NVIDIA continues to perform well, with strong guidance of up to $54 billion, despite underwriting all potential H2 sales to China at zero. But issues are mounting, with an unpredictable Chinese business and strong geopolitical exposure, rivals catching up, and a growing concentration in its customer pool.

A confirmation of a cutoff to the Chinese region, AMD fulfilling its promises around servers and software, a key customer catching a cold, or all of them combined, could lead to significant price action, especially considering that its current beta value, a measure of volatility, is significantly higher than that of the average stock.

IMAGE MODELS

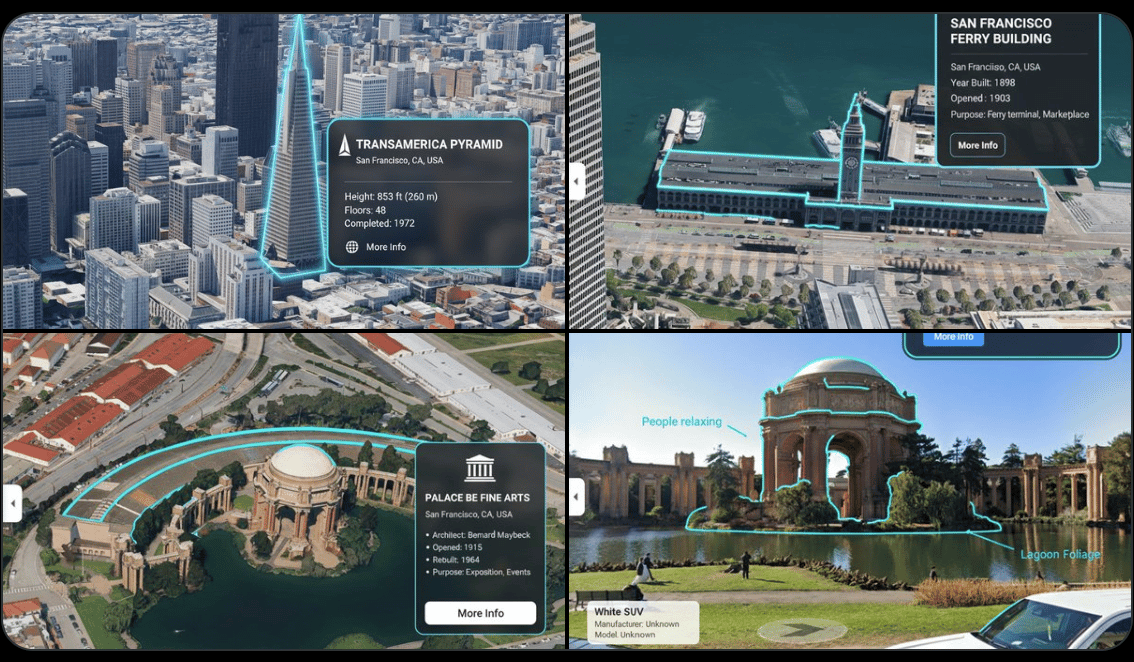

Gemini 2.5 Flash Image Continues to Impress

Days after the release of what is now considered the best image-generation and editing model on the planet, ahead of OpenAI’s GPT-4o, it continues to show impressive capabilities.

Thanks to the fact that the model possesses Gemini’s world knowledge, it can immediately recognize famous landmarks and annotate them, as shown in the image above.

But it gets crazier; the model seems to have ‘spatial intuition’, meaning you can draw an arrow on a map, asking what a person would see from that point of view, and it delivers:

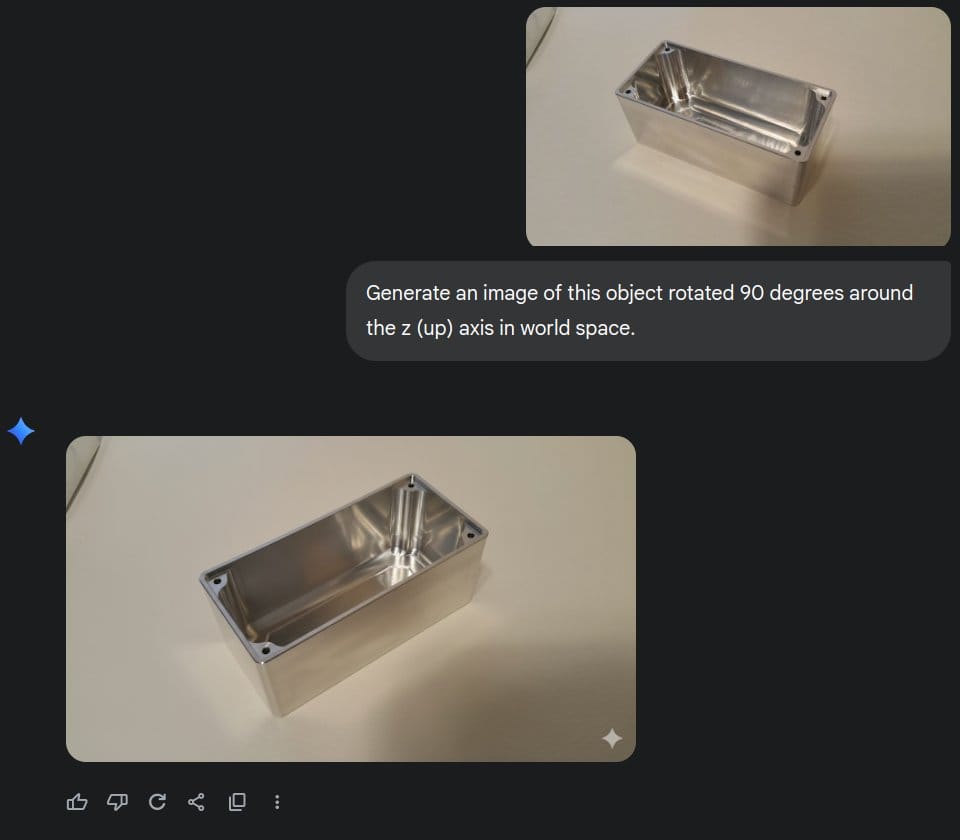

Perhaps even more striking, the model can rotate objects in images.

These previously unseen features have led some to speculate that, in reality, it’s not an image model per se, but a video model disguised as an image model, generating a single frame. This makes sense considering that video models have spatial awareness, which allows for the incredible images you see above.

And if you recall where Google excels most, in video generation models, everything falls into place, and one understands Google’s dominance.

Moreover, the model is also absurdly competitive in terms of pricing, being 79% cheaper than OpenAI’s model, and it is considerably faster.

If there’s one thing I consider this model to be worse at, it is text; at least in my personal experience, it makes far more mistakes than OpenAI’s model.

TheWhiteBox’s takeaway:

Google’s strategy should be to consistently undercut OpenAI’s prices. Google isn’t as pressured by venture capitalists to generate profits as OpenAI, it doesn’t have to raise money thanks to its cash-printing businesses, and it can design models to maximize performance on its own TPU chips (or vice versa). Together, this gives Google a significant advantage over OpenAI (and everyone else, for that matter; no other company is in this position right now).

In layman’s terms, being vertically integrated (designing both hardware and software) and not being in a rush to make money is a significant advantage in this space.

At risk of sounding like a broken record, it’s really, really hard to bet against Google at this moment in time.

I must disclose that I’m a significant Google shareholder (in relation to my own net worth), so I’m biased; please don’t take this as financial advice, as it’s not intended to be.

Closing Thoughts

This week has had it all: lying celebrities using AI in very deceiving ways, intense price action in the stock market thanks to NVIDIA’s earnings, confirmation that corporate managers are really strong candidates to be one of the first casualties in the AI era, and OpenAI delivering a new model, this time fully focused on audio.

Importantly, money seems to continue pouring in, with Meta’s potential $50 billion data center being almost the size of Manhattan. However, perhaps the most interesting news of the week is that Google’s new model boasts previously unseen skills, leading many to wonder whether we are entering a new era of progress in AI, one that is less focused on text models and more on video models.

Spatial understanding is considered by experts to be a crucial element of AI models, potentially leading to all-around smarter models in areas like robotics. Spatial understanding is highly underrated by the masses, but conquering it could be an even larger milestone than ChatGPT.

It is quite possible that, by the end of the year, video models will be at the center of most of the hype. And if that happens, there’s really little debate on who’s ahead.

Join Premium Today!

If you like this content, by joining Premium, you will receive four times as much content weekly without saturating your inbox. You will even be able to ask the questions you need answers to.

Until next time!

For business inquiries, reach out to me at [email protected]