THEWHITEBOX

This Will Change Your View on Agents

Rarely, if ever in my long writing career, have I been more excited about something than the thing I’m writing about today.

Because, finally, I get it. I get agents, and I get software (its future, to be more exact). And I can assure you that, after reading, you will see things the same way I do.

That is, different from the rest.

Last week, we took a look at an agent’s first leg: how to provide the agent with the right context. This week, we’re looking at the other side, how to enable action.

But I’ve decided to take it a step further and show you, quite literally, what the future of software looks like.

I’m building a finance app for myself, but I’ve also made it ‘agentic’, meaning my personal agent is a first-class citizen of this app, once again proving that only when you get your hands dirty do you truly understand how things really are.

The app has a little bit of everything I’ve learned in AI over the years. Fully built with AI, context-engineering enabled, fully programmable by the user, using embeddings search for pretty cool use cases, AI-powered with a fully AI backend, and more. The app adapts to my constraints and evolves with me, creating a fully customized experience.

It’s too early to understand what I mean, but let me put it this way: software as we know it is dead.

At this point, I’m comfortable telling you that what you’re about to see is nothing you’ve ever seen before.

So the goal of today is two-fold:

Clarify. Explain why most agent limitations today are not the AI’s fault.

Open your eyes. Settle for good the concept of ‘agentic software’ and what it actually means, because guess what, even top AI Labs are thinking about this wrong.

What we’re seeing today has many implications for markets, for startups, for the AI industry as a whole, and, of course, most importantly, for you. I’m going to make you a builder, and that journey starts today.

The Tools side, more than it seems

Recall our definition of an agent: an AI model that has some knowledge of the world plus the context you provide and, based on all of that, takes action on our behalf.

It’s literally a math equation: f(knowledge + context) = action.

And while today the knowledge component is largely out of our control because it’s dependent on AIs we don’t train (in the future, everyone will retrain their agents), the context component is definitely under our control, which is why context engineering has become such a popular term and why I dedicated an entire article last week to it.

In fact, agents are not so different in spirit from a standard chatbot; the difference between ChatGPT and your personal secretary is really nothing beyond the fact that the latter can take action via the use of “tools.”

But what is an agent tool? No, but seriously, what is an agent tool really, not what people tell you?

The key concept known as tool calling

What do we actually mean when we say an agent executes an action? In reality, agents don’t execute anything. The picture is more nuanced: an agent just declares what it wants to use.

This may sound like something with zero implications, but it’s actually a $100 billion factor.

At the risk of scaring you, you can actually think of an agent as the Marvel villain “Supreme Intelligence,” a massive disembodied head. I know this makes no sense right now, but bear with me.

Why? Well, because AI agents (at least the popular ones based on Large Language Models) don’t have a body or a way to execute actions themselves; they just declare what needs to be done.

In practice, they receive a set of context and instructions, combine it with their own knowledge, and predict one of two things: either a token (e.g., a new word, image, etc.) or a ‘tool call’.

But what is a tool call?

The meaning is in the name. A ‘tool call’ is the agent saying ‘I want to use tool x or tool y.’ This means tools are, ironically, tokens too, so the LLM predicts a tool name rather than a word.

That is why I was drawing an analogy with the big-headed villain: an agent declares what needs to be executed, but lacks the body to do it.

For example, say we ask our agent what the weather is like in Madrid, Spain, today. You do this because you know your agent has access to a ‘get_weather’ tool.

The model then predicts something like:

tool_name = "get_weather"

arguments = {"city": "Madrid"}

Then the harness (the system around the AI) maps get_weather to real code, and that code may internally call an API endpoint like GET /weather?city=Madrid. In that case, the model does not even know the raw HTTP details. It only knows the abstract tool interface.
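To make that mapping concrete, here is a minimal sketch of what the harness side could look like. Everything in it is an assumption for illustration: the `TOOLS` registry, the `dispatch` helper, and the canned forecast are hypothetical, and no real weather API is called.

```python
from urllib.parse import urlencode

def get_weather(city: str) -> dict:
    """Hypothetical tool body; the HTTP detail lives here, hidden from the model."""
    path = "/weather?" + urlencode({"city": city})
    # A real tool would send GET <path> to a weather API.
    # This sketch returns a canned reply so it runs offline.
    return {"requested": f"GET {path}", "forecast": "placeholder"}

# Registry mapping the tool names the model may emit to real code.
TOOLS = {"get_weather": get_weather}

def dispatch(tool_call: dict) -> dict:
    """Look up the tool the model named and run it with the model's arguments."""
    name = tool_call["tool_name"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**tool_call["arguments"])

# What the model "predicted" in the Madrid example.
model_output = {"tool_name": "get_weather", "arguments": {"city": "Madrid"}}
tool_result = dispatch(model_output)
print(tool_result)
```

Note that the model only ever produced a name and a dictionary of arguments; the URL was assembled by ordinary code it never sees.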

Alternatively, you can expose the API more directly and let the model produce something closer to:

endpoint: "/users/123"

method: "PATCH"

body: {"status": "active"}

But even there, the model is still usually not making the network call itself. It is generating the request specification, and your runtime executes it.
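A rough sketch of that second pattern, assuming a hypothetical host (`api.example.com`) and a hypothetical allowlist: the runtime checks the model's request specification and turns it into a real request object. The sketch stops short of sending anything over the network.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com"  # hypothetical host
# Hypothetical allowlist of (method, endpoint) pairs the model may request.
ALLOWED = {("GET", "/users/123"), ("PATCH", "/users/123")}

def build_request(spec: dict) -> urllib.request.Request:
    """Validate a model-generated request spec and build the actual request."""
    method, endpoint = spec["method"].upper(), spec["endpoint"]
    if (method, endpoint) not in ALLOWED:
        raise PermissionError(f"{method} {endpoint} is not allowed")
    body = spec.get("body")
    data = json.dumps(body).encode("utf-8") if body is not None else None
    return urllib.request.Request(
        BASE_URL + endpoint,
        data=data,
        method=method,
        headers={"Content-Type": "application/json"},
    )

# The spec the model generated in the example above.
spec = {"endpoint": "/users/123", "method": "PATCH", "body": {"status": "active"}}
request = build_request(spec)
# The runtime, not the model, would now send it,
# e.g. with urllib.request.urlopen(request).
print(request.get_method(), request.full_url)
```

The allowlist is one possible design choice: since the model is writing the request itself, the runtime is the only place left to say no.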

This is important for two reasons: it helps us understand what agents actually look like under the fancy naming, and it beautifully explains why CPUs are suddenly fancy again. Yes, that’s the $100 billion/year decision I was alluding to.

On the former, what this means is that an agent, by itself, can’t do anything; it requires a harness on top that identifies which tool the agent calls, executes it, and returns the response to the agent for it to interpret.
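Here is a minimal sketch of that loop. The model is replaced by a scripted stand-in (`fake_model`) so the code runs without any AI provider; the message format, the tool, and its output are all hypothetical.

```python
from typing import Callable

def get_weather(city: str) -> str:
    """Hypothetical tool returning canned text instead of real weather."""
    return f"(placeholder forecast for {city})"

TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}

def fake_model(messages: list[dict]) -> dict:
    """Stand-in for the LLM: asks for a tool first, then answers in text."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "text", "content": f"Here is the weather: {last['content']}"}
    return {"type": "tool_call", "tool_name": "get_weather",
            "arguments": {"city": "Madrid"}}

def run_agent(user_message: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        output = fake_model(messages)
        if output["type"] == "text":
            return output["content"]            # the model is done
        tool = TOOLS[output["tool_name"]]       # 1. identify the tool
        result = tool(**output["arguments"])    # 2. execute it
        messages.append({"role": "tool", "content": result})  # 3. hand it back
    raise RuntimeError("agent did not finish within max_steps")

answer = run_agent("What is the weather like in Madrid today?")
print(answer)
```

Strip away the stand-in and what remains of the "agent" is a `for` loop and a dictionary lookup, which is the point.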

Robotic AIs are agents too, and arguably truer ones. The AIs acting as the brains of humanoids are VLAs (Vision-Language-Action models), which are “similar” to LLMs, but unlike an LLM, which can only make tool calls, a VLA actually outputs actions for the robot. This means that, unlike with LLM agents, the entire agent trajectory runs on GPUs in a humanoid.

The CPU Renaissance

Before we continue with agents, I can’t help but remind you that agents are a blessing for CPUs precisely because of this. I talked about it at length here, so I’ll cut to the chase: the tool world is a CPU world, the AI world is a GPU world.

Therefore, if agents aren’t the ones actually running the tools but just declare them, those declarations are sent to CPUs, which process the tools and send the tool responses back to the GPU.

This is why CPUs are so important: they are the bottleneck in agentic workloads. Without powerful CPUs, you can have the most powerful accelerator the world has ever seen, but the overall agent response will still be slow.
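One way to put numbers on this is Amdahl's law, which says that speeding up one part of a job only helps in proportion to that part's share of the total time. The sketch below is my own illustration, and the 60/40 split between GPU time and CPU tool time is made up, not a measurement.

```python
def overall_speedup(gpu_fraction: float, gpu_speedup: float) -> float:
    """Amdahl's law: only the GPU share of the run gets faster."""
    cpu_fraction = 1.0 - gpu_fraction
    return 1.0 / (cpu_fraction + gpu_fraction / gpu_speedup)

# Hypothetical split: 60% of wall-clock time on the GPU, 40% in tools on CPUs.
for s in (2, 10, 1000):
    print(f"GPU {s}x faster -> agent {overall_speedup(0.6, s):.2f}x faster")
```

With that split, no accelerator can make the agent more than 1 / 0.4 = 2.5x faster end to end; the rest of the time is spent waiting on tools.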

And now, back to tools: how do we make AIs use tools effectively?

Skills and MCP

As agents do nothing but predict tool calls based on their knowledge, the provided context, tool definitions, and instructions, it’s vital that tools are thoroughly explained.

Because how is an agent supposed to choose a tool to be called if the agent doesn’t understand what the tool does?

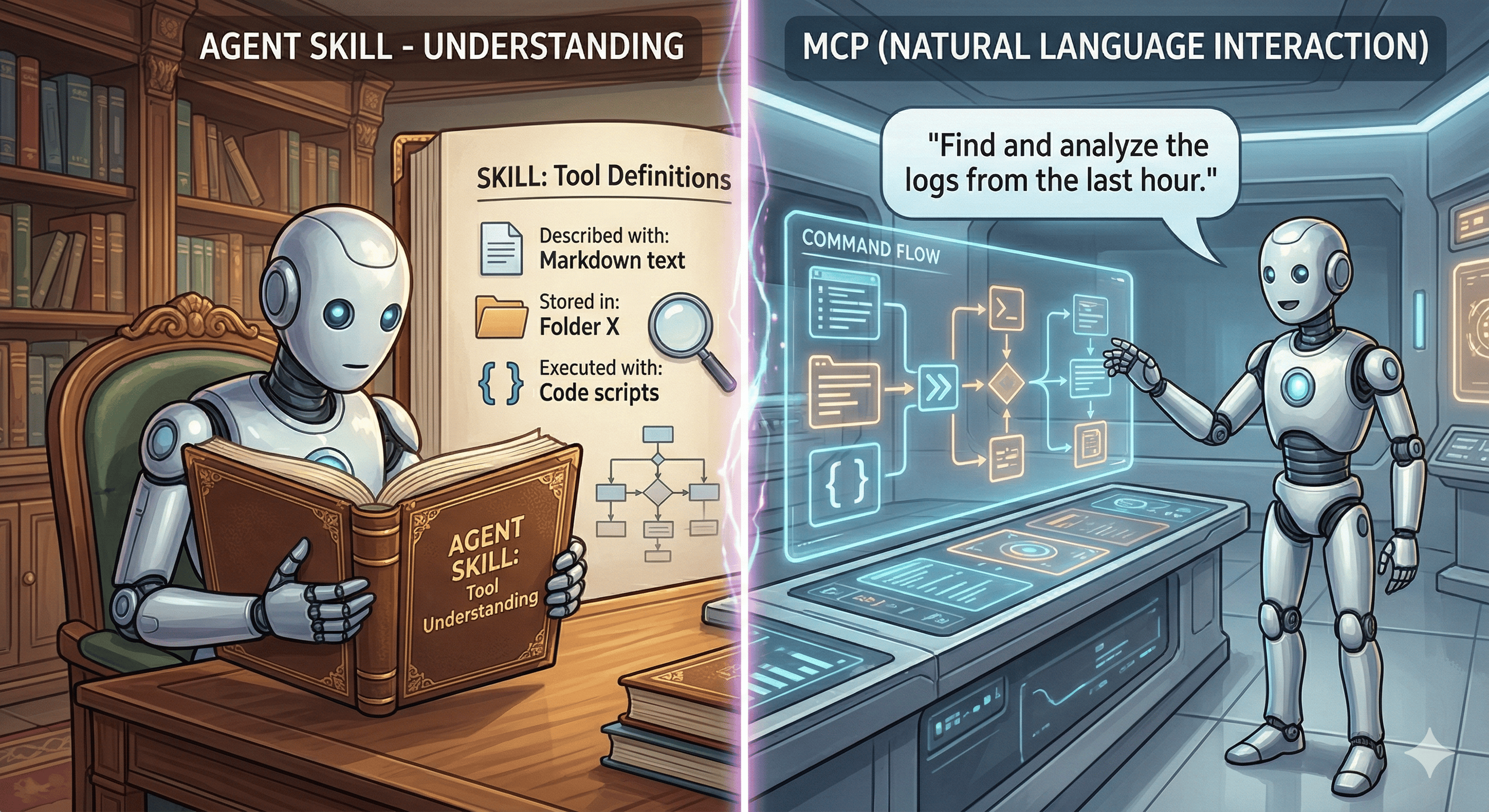

This is where skills come in. This agent primitive, popularized by Anthropic, is again marketing on steroids (in pure agentic fashion) for something really, really simple: folders and text.

That is, an “agent skill” is nothing but a markdown file (i.e., text) and code scripts (the execution harness) that help the agent understand what a tool does and, importantly, how to use it.

This is materially different from MCPs (Model Context Protocol servers), long the hottest way to integrate tools into agent settings, which allow agents to use tools via natural language.

The difference is that an MCP lets the agent use a tool without knowing how, while a skill explains how to use it at runtime, like an instruction manual for your dishwasher while you're using it.
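To show how little there is to the "folders and text" idea, here is a sketch that builds a skill folder in a temporary directory and loads its instructions into the agent's context. The layout (a `SKILL.md` file next to a `scripts/` folder), the skill's wording, and the `load_skill` and `build_context` helpers are assumptions for illustration, not a reference implementation of any vendor's format.

```python
import tempfile
from pathlib import Path

# Hypothetical skill instructions: plain markdown, nothing more.
SKILL_TEXT = """# get_weather

Use this skill when the user asks about current weather.
Run scripts/get_weather.py with a --city argument and report the result.
"""

def load_skill(folder: Path) -> str:
    """Read the skill's instructions so they can be added to the context."""
    return (folder / "SKILL.md").read_text(encoding="utf-8")

def build_context(user_message: str, skills: list[str]) -> str:
    """Put the skill text in front of the user's request."""
    return "\n\n".join(["Available skills:", *skills, "User request:", user_message])

with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "get_weather"
    (skill_dir / "scripts").mkdir(parents=True)
    (skill_dir / "SKILL.md").write_text(SKILL_TEXT, encoding="utf-8")
    (skill_dir / "scripts" / "get_weather.py").write_text(
        "# tool code goes here\n", encoding="utf-8"
    )
    context = build_context("What is the weather in Madrid?", [load_skill(skill_dir)])

print(context)
```

The manual ends up sitting in the context right next to the request, which is the dishwasher analogy in code form.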

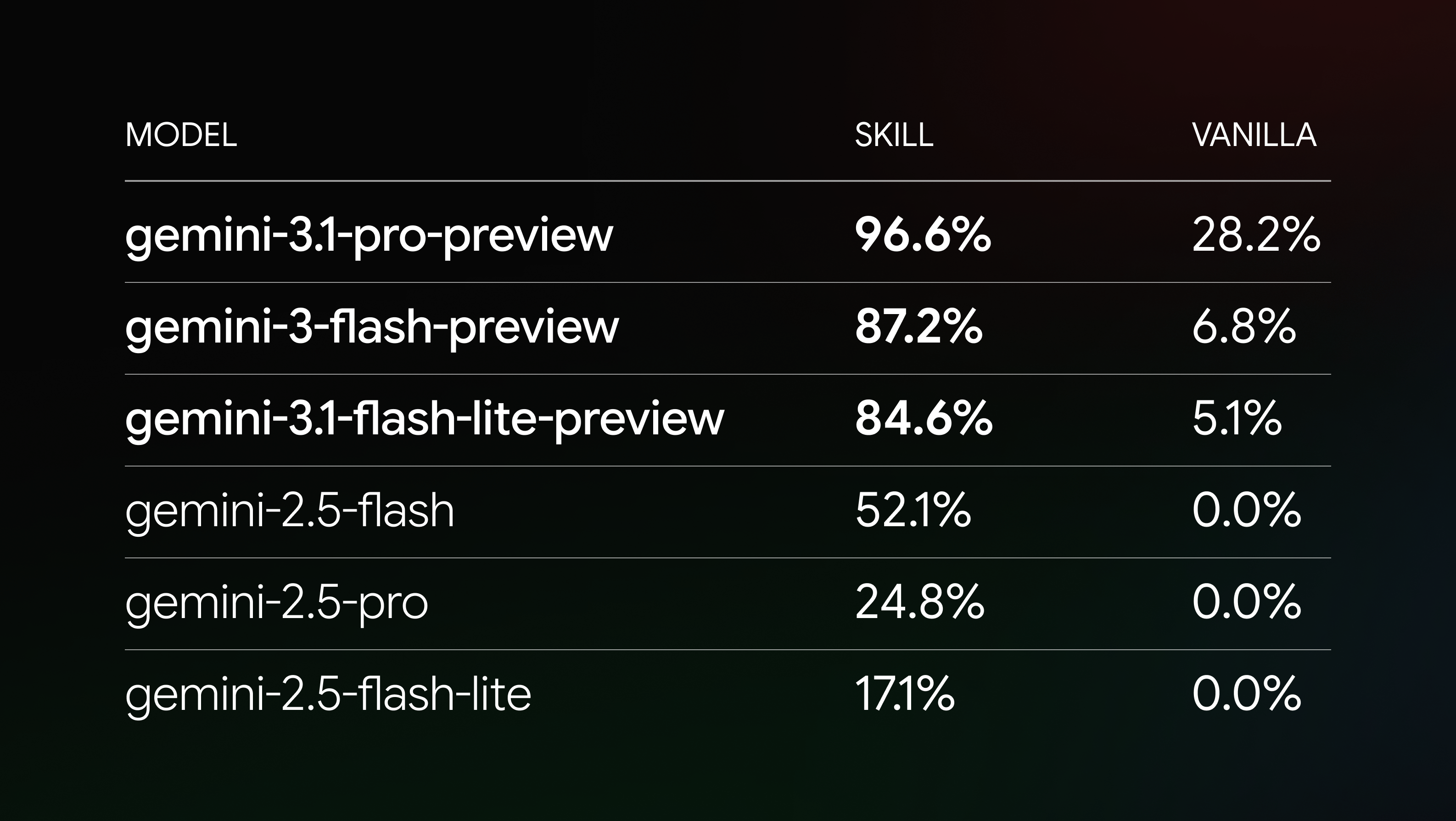

And the reason skills draw so much interest these days is that, well, they are actually pretty powerful, more than MCPs even, as they provide the necessary explanations for the agent to get the most out of the tool, as Google proved.

In an interesting blog post, Google DeepMind engineers show that adding a simple skill that explains how to build apps with Gemini libraries dramatically improves performance, in some cases going from literally 0% to 52%, and taking the most powerful Gemini model from an 'okay-ish' 28% to basically perfection.

And it’s the realization that skills matter that takes us to the big insight about agents: their issues are not so much about AI as about deploying agents in a world not meant for them.

But before we get into the weeds of today’s message, let’s quickly cover what is definitely to blame on AI.

AI Limitations

As I’ve said multiple times, we are usually setting up agents for failure, but that doesn’t mean all blame is on us. In fact, agents do have some unsolved problems:

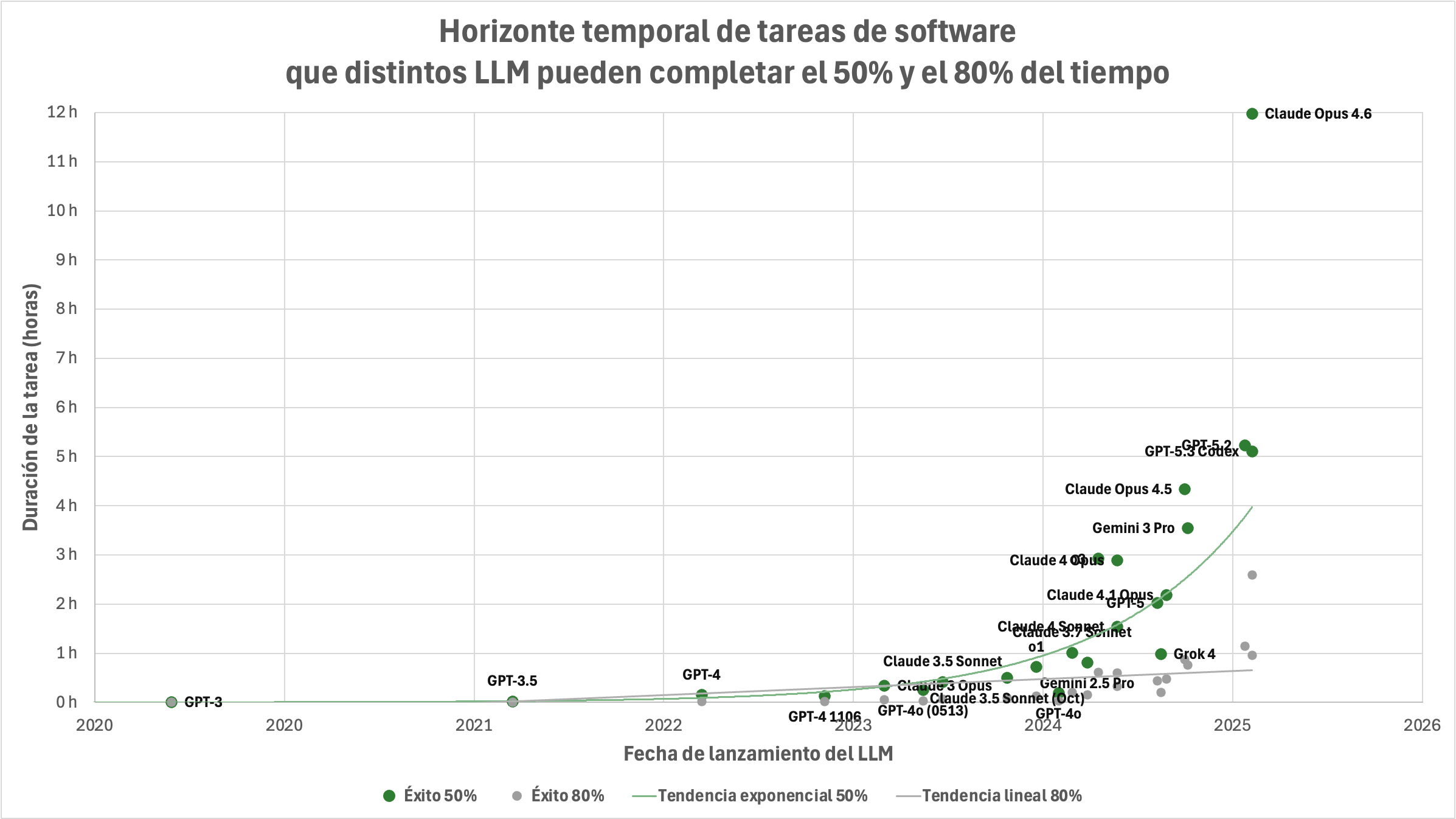

Reliability. We’ve talked far and wide about this. As you can see below, the “long horizon” nature they’ve been attributed is more an illusion than anything else, and they struggle to execute a task consistently.

If you increase the accuracy requirements from 50% to 80%, model performance collapses, and 80% is still unacceptable (you want at least 99.99% accuracy).

Context rot. We feed them more context than they can meaningfully handle. We are promised 1-million-token context windows, but in reality, performance falls off a cliff well before that.

Cost. Probably the most important one of them all, serving AIs is much more expensive than we realize. And the worst thing is that we are being subsidized by investor money. Once AI Labs start charging for AI’s true costs… oh boy.

No better proof that we are getting subsidized than Anthropic prohibiting the use of its subscriptions for OpenClaw-esque agents (you can pay for the API, but that’s where you’re the one getting bled dry), which shows they were, indeed, getting bled dry; they wouldn’t be destroying demand if they were getting paid their fair share.
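On the reliability point, a bit of arithmetic shows why I ask for something like 99.99%. The sketch assumes a task made of 20 steps that fail independently, which is a simplification of mine and not what any benchmark measures; the step count is hypothetical.

```python
def task_success(step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    return step_accuracy ** steps

# Hypothetical 20-step task at three per-step accuracy levels.
for accuracy in (0.80, 0.99, 0.9999):
    print(f"{accuracy:.2%} per step -> {task_success(accuracy, 20):.1%} per task")
```

Under that assumption, 80% per step leaves roughly a 1% chance of getting through all 20 steps cleanly, while 99.99% per step keeps the whole task near 99.8%.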

But I’m not here to tell you that agents are young, because you already know that.

The overarching point I’m making today is that the ecosystem around them is younger, and I’m going to show you today how I think it will look in a few months.

The Act: How do I use Agents?

Before I explain the app I’m building to put an end to my agent’s financial context and action issues, let me recap how I use agents.

As you may have guessed from last week’s skepticism, I’m not fully agent-pilled yet with respect to action, that is, what the agent can do for me. Interestingly, though, it’s not the agent’s fault.

What agents do for me

First, I use them for context and search gathering, and for generating reports that get me up to speed on what’s going on in the world without me having a backlog of 100 sources every day.

My agent reviews my key sources (mostly my email accounts) and generates a report that lands in my inbox. Just two things I want to say on this:

My sourcing has become vetted, meaning that, in a world where most digital content is horseshit, I deliberately choose the sources I trust and want to use. Most applications of agents generating reports for users are toys and not really useful, not because the agents are bad, but because the sources are terrible.

If your agent has to go through a lot of context, as in my case, subagents are a good option. I do not recommend subagents in collaborative settings (models tend to fight with each other and collaborate way less than you would imagine, because they were not trained for it), but here each agent works alone, so it’s not collaboration, it’s parallelization, and it works great.
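The parallelization pattern can be sketched in a few lines. The `subagent_summarize` function is a stand-in for a real model call, and the source names are hypothetical; the only point is that each subagent gets its own slice of context and never talks to the others.

```python
from concurrent.futures import ThreadPoolExecutor

def subagent_summarize(source: str) -> str:
    """Stand-in for one subagent; a real one would send the source to a model."""
    return f"summary of {source}"

def build_report(sources: list[str]) -> str:
    # Each subagent sees only its own source, so there is nothing to coordinate.
    with ThreadPoolExecutor(max_workers=4) as pool:
        summaries = list(pool.map(subagent_summarize, sources))  # keeps input order
    return "\n".join(f"- {s}" for s in summaries)

report = build_report(["newsletter A", "newsletter B", "newsletter C"])
print(report)
```

The main agent only sees the short summaries, so its own context stays small no matter how long the sources are.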

My other big use of agents is in Excel. This is particularly interesting because it has made me the perfect example of why AIs, regularly seen as job destroyers, can actually be demand unlockers.

I rarely used Excel before agents, at least not at the rate I do now. My work is more about getting a handle on trends and deep-diving into very hard concepts that people with decision-making power need to understand in order to respond to progress.

I would love to do more analytical work (modeling scenarios, picturing futures) because I have the statistical knowledge for it. However, keeping up with everything that is going on was already a full-time job (more than full-time, actually), so Excel modeling wasn’t on the menu.

With ChatGPT for Excel (there are other options, too, of course), I can actually elevate my work by testing my own hypotheses. For instance, my study on the war in Iran and how it affects AI was possible thanks to ChatGPT.

To be very clear on what I’m implying, this is an expansion of what I can do, not an improvement of what I was already doing.

I didn’t have the time to set up an entire predictive cost model in a few hours. Now, I can chat with ChatGPT, explain what I want, discuss how we’re gonna do it, and it takes care of the doing; I do the thinking.

To me, this is undeniable proof against the usual approach to predicting AI's future: we forget that the Jevons paradox exists. We picture a future where everyone is suddenly so much more productive while, for magical reasons, demand stays constant.

Yet, history tells us again and again that demand is not constant; it’s a function of price, which in turn is driven by increases in productivity. Productivity goes up, costs go down, demand goes up, meaning the idea that AI is just going to make everything so much more productive and that new demand won’t be created is a vision at odds with history.

Of course, demand growth is not infinite, and “too much productivity” eventually leads to much more supply than there is demand, and prices fall off a cliff (e.g., agriculture).
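A toy model makes the mechanism easier to see. It assumes a constant-elasticity demand curve, a textbook simplification I am choosing for illustration, and every number in it is hypothetical. When demand is elastic enough (elasticity above 1), halving the price raises total spending; when it is not, spending falls.

```python
def quantity_demanded(price: float, elasticity: float, scale: float = 100.0) -> float:
    """Constant-elasticity demand curve: Q = scale * price ** (-elasticity)."""
    return scale * price ** (-elasticity)

def total_spend(price: float, elasticity: float) -> float:
    """Total spending at a given price: price times quantity demanded."""
    return price * quantity_demanded(price, elasticity)

# Hypothetical: productivity gains cut the price from 1.0 to 0.5.
for e in (0.5, 1.0, 2.0):
    before, after = total_spend(1.0, e), total_spend(0.5, e)
    print(f"elasticity {e}: spend {before:.1f} -> {after:.1f}")
```

The elasticity-above-1 case is the Jevons side of the story, and the low-elasticity case is closer to the agriculture one.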

But I digress. With all that said, my personal experience is that most (if not all) use cases where I’ve come to the conclusion that AI is really worth it are iterative, not fully automated.

Financial modeling. Coding. Creative work. Maths theorem proving. Consulting due diligence.

All those areas where AI is already making a huge impact are iterative workflows, areas where AI and humans work side by side on the problem.

By contrast, in automation workflows, areas where AIs handle everything and humans are out of the loop, its impact is negligible. Case in point:

Every six months, radiologists are six months away from disappearing, and there they are.

Customer Service should have been automated 2 years ago, allegedly, according to your average AI enthusiast prognosticator. And yet, India’s exports of customer reps continue to grow, unchallenged, quarter after quarter.

Everyone thinks of AI as an automation tool. But currently, it has shown zero evidence of that. Thus, I hope I haven’t ruined your day by telling you that, from my experience, this idea that agents are automating everything is a barefaced lie.

At this point, you may be thinking I’m a hypocrite, considering I’ve been predicting the arrival of agents for months now. But, for what it’s worth, they are here; it’s we who aren’t ready for them.

This has led me to realize that the agentic revolution doesn’t reach everyone equally. To make agents work, you need the right sources and tools. So you can either wait until the entire digital world has repurposed itself toward agents… or just build it yourself.

I’m in the latter group. Thus, today, I’m going to show you what I think the agentic future will actually look like, or, as I call it, software 3.0.

Behind the paywall, I’ll explain my approach to vibe coding, including the tools and strategies I use.

I will show you the finance app I’m building for my agent and explain in full detail why it is not an ordinary finance app and why it’s actually built for agents first, not for humans. I’ll explain its structure, what makes it materially different from every single piece of software you’ve ever seen, and why English is the most powerful tool in anyone’s pocket (yes, no lines of code were written here; like most “aggressive” drunk men you run into every once in a while, I was “all talk”, and the app was fully programmed through the power of Shakespeare’s language).

Most software will be built this way. So, if you’re curious about how I pitch that future to executives, this is it.
