THEWHITEBOX

The Time of the CPU has Come

Finally, the market is coming around to what we started hinting at in this newsletter two months ago: the time of the CPU has come. Gothmog from The Lord of the Rings trilogy already said it (kind of):

Whether you’re a LOTR fan or not, I deeply apologize for the terrible meme

But if you’re a regular of this newsletter, you already saw this coming. In my 2026 predictions, I explained my belief that CPUs were set to return to vogue due to agents.

And the reason why has nothing to do with the AI itself.

It’s not even March, and we already have pretty much full visibility that my prediction was right: CPUs are definitely back in vogue as a fully-fledged AI trade, after years on the sidelines while everyone and their grandmother simplified AI to “AI = GPUs.”

And what does that mean for you? How should you navigate this newfound opportunity?

By the end of this article, you’ll intuitively understand why CPUs are suddenly so important, and how the key companies in this market (NVIDIA, AMD, Intel, ARM, and the Hyperscalers) are positioned to benefit from this huge new market need, with one clear short-term victor that could soon see a revenue explosion that I don’t believe is remotely priced in by markets.

Let’s dive in.

The CPU, Back in Vogue

In the latest NVIDIA deal, in this case with Meta, something “strange” was announced: Meta would be buying standalone CPU servers. That is, not just GPUs with some CPUs on the side, literally CPU servers. Like the old times in cloud computing.

But to understand why this changes everything, we need to do a quick rundown on AI hardware, even if we’re only focusing on the key high-level stuff.

AI workloads from the CPU side

While GPUs have captured most of the narrative over the last few years, catapulting NVIDIA to the most valuable company in the world, GPUs aren’t standalone computers. Actually, they are external components to a CPU.

Often forgotten, AI GPU servers have CPUs inside, too.

But what is a computer? Computers are just that, machines that compute numbers. Each machine has both compute cores and memory: the latter feeds the data to the former and stores the results.

As you might guess, the compute core’s sole purpose is to perform mathematical operations (it has a bit of memory too, but it’s very small). And the more operations a chip (a bunch of cores) can execute per second, the better it is (throughput-wise).

Some companies build cores to optimize for energy consumption because more throughput means more power requirements and more heat, which leads to more cooling, which leads to more power requirements… You get the point. This will become important later.

The types of computations in a standard computer vary widely, so usually, these compute cores have to be somewhat general-purpose. This means chip designers have to allocate different parts of the chip’s surface area to different ends.

NVIDIA’s Vera CPU

Naturally, this means that being general-purpose is a trade-off: the more general a chip is, the worse its performance on any given specialized task.

CPUs are the perfect example of this; they are the essential chip in any computer workload, capable of managing and orchestrating everything that needs to be executed in a laptop or in AI workloads, but this comes at the cost of being suboptimal for certain tasks compared to other chips.

Importantly for today’s topic, CPUs are designed for sequential workloads, meaning they are conceived to execute many very complex tasks one after another.

In practice, however, modern CPUs, especially the data center CPUs we are talking about today, have more than one core, reaching hundreds of cores in top-of-the-line parts. This means that CPUs are already somewhat parallel, in the sense that they can execute several operations simultaneously (usually as many as there are cores).

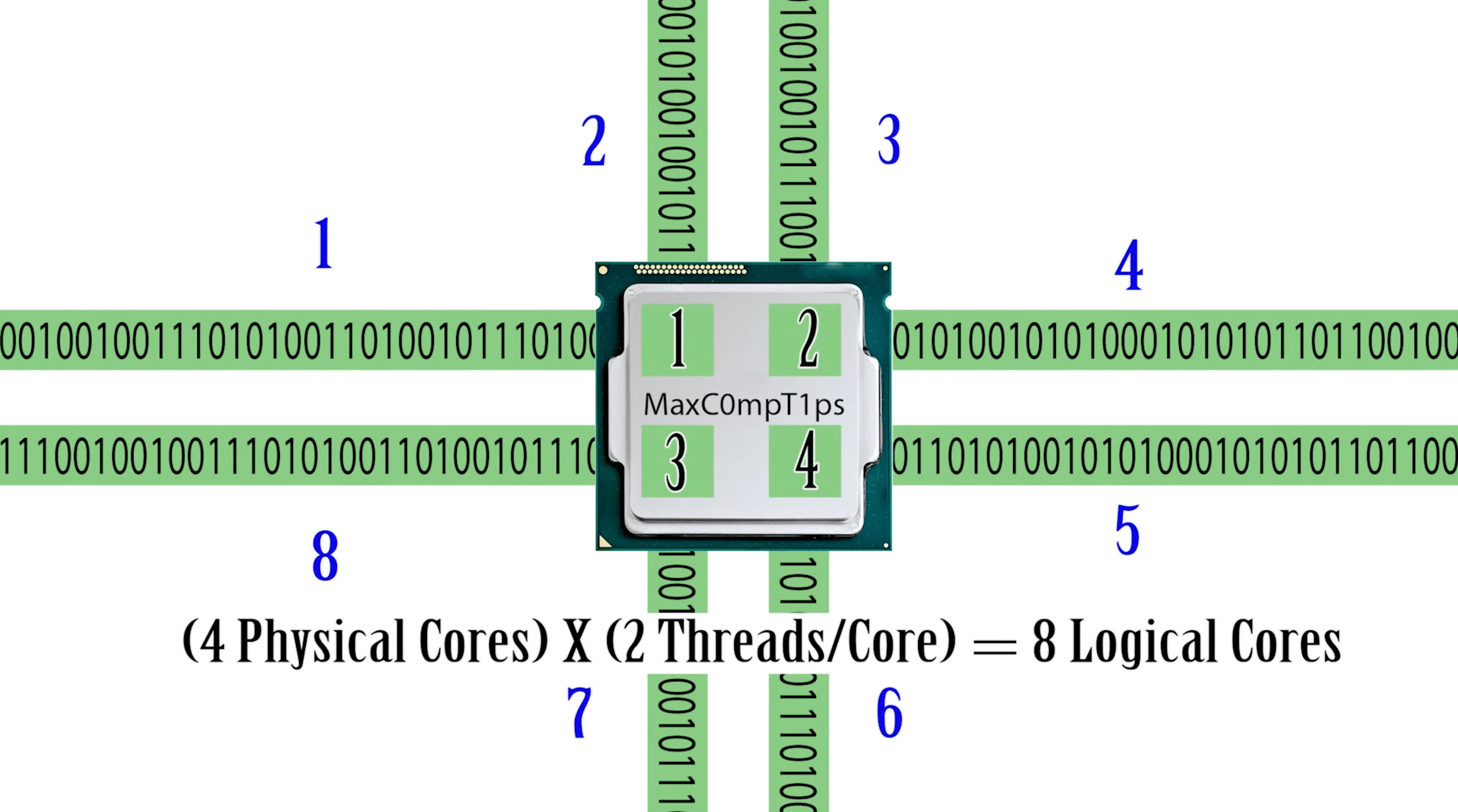

Furthermore, some CPUs have threading capabilities, meaning each core can effectively parallelize multiple workloads while sharing the same hardware.

As you can see in this visual example, each physical core has two threads, which can be thought of as each “worker” (a single core) working on two simultaneous “assembly lines” (the threads).

A logical core is just an abstraction to explain how many “parallel” jobs we are seeing. But to be clear, “logical cores” do not imply perfect parallelism; what threading enables is very fast switching between workloads (i.e., if one thread has to wait for something from memory, the core doesn’t remain idle and continues working on another thread).
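As a rough software analogy for the latency-hiding idea above (not a model of how hardware threading is actually implemented), the sketch below runs the same "wait, then finish" jobs first one after another and then on overlapping threads. The function names and the 50 ms wait are illustrative assumptions.

```python
import os
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_then_compute(wait_s: float) -> float:
    """Pretend to stall on memory or IO, then return."""
    time.sleep(wait_s)  # stand-in for a stall; this thread is idle here
    return wait_s


def run_serial(n_jobs: int, wait_s: float) -> float:
    """Run the jobs one after another and return elapsed seconds."""
    start = time.perf_counter()
    for _ in range(n_jobs):
        fetch_then_compute(wait_s)
    return time.perf_counter() - start


def run_threaded(n_jobs: int, wait_s: float) -> float:
    """Run the jobs on separate threads so their waits overlap."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=n_jobs) as pool:
        list(pool.map(fetch_then_compute, [wait_s] * n_jobs))
    return time.perf_counter() - start


if __name__ == "__main__":
    # os.cpu_count() reports logical CPUs, not physical cores (may be None).
    print("logical CPUs:", os.cpu_count())
    print("serial  :", round(run_serial(4, 0.05), 3), "s")
    print("threaded:", round(run_threaded(4, 0.05), 3), "s")
```

The threaded run finishes sooner only because the waits overlap; no single job gets faster, which is the same caveat that applies to logical cores.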

This is nice, but these chips are still not ideal for highly parallelizable workloads. Therefore, the role of GPUs becomes apparent: they are just a whole bunch of additional cores (two or even three orders of magnitude more) that the CPU can use to offload workloads that require extreme parallelization.

And AI is the prime example, which is what led us to the age of the GPU.

This sets the stage perfectly to understand why the CPU's age is back.

The Classical AI Workload

You wouldn’t be wrong if you said that all AI today, or, more specifically, every neural network, is a bunch of matrix multiplications.

And in case you don’t know, all modern AI takes the form of neural networks. Below, you find the simplest neural network one can define.

Coarsely imitating two particularities of human-brain neurons, the synapses that connect different neurons, and their ‘firing nature’ (meaning neurons either fire or they don’t), AIs like ChatGPT today are just a bunch of these so-called neurons interconnected with one another.

These aren’t remotely perfect representations of human neurons, which can also adapt their firing rates in response to feedback, among other important distinctions that make the idea of calling them neurons a bit of a stretch, to be honest.

The key is that these connections and neuron firings are simulated using matrix multiplications, since these neural nets are distributed in layers where the output of one layer depends on the outputs of the previous layer(s).

This works wonderfully well, but it implies that every neural network requires a huge amount of parallel computation (e.g., determining whether a1 fires depends only on the inputs from the previous layer, but this can be computed in parallel with determining whether a2 fires).
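To make the layer-as-matrix-multiplication idea concrete, here is a minimal pure-Python sketch of one layer. The weights, biases, and the hard "fire or don't" step function are illustrative assumptions (production models use smoother activations and run this on optimized hardware kernels).

```python
def step(pre_activation: float) -> float:
    """Toy 'fire or don't fire' activation."""
    return 1.0 if pre_activation > 0 else 0.0


def layer_forward(weights, biases, inputs):
    """Compute one layer: a matrix-vector product followed by the activation.

    Each row of `weights` belongs to one neuron. No row depends on any other
    row's result, so every neuron in the layer could be computed in parallel.
    """
    outputs = []
    for row, bias in zip(weights, biases):
        pre_activation = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(step(pre_activation))
    return outputs


# Outputs of the previous layer (hypothetical values).
previous_layer = [1.0, 0.0, 1.0]

# Two neurons, a1 and a2, each with three incoming connections.
weights = [
    [0.5, -1.0, 0.25],   # a1: 0.5 + 0.0 + 0.25 = 0.75, so it fires
    [-1.0, 2.0, 0.5],    # a2: -1.0 + 0.0 + 0.5 = -0.5, so it does not
]
biases = [0.0, 0.0]

activations = layer_forward(weights, biases, previous_layer)
```

The loop over rows is sequential here only for readability; that loop is exactly the part a GPU spreads across its many cores.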

And as today’s frontier models have trillions of parameters (the weights on the connections between these artificial neurons), we are talking about a lot of parallel computation. But how much? Well, let me open your eyes to the reality of AI.

When thinking about Transformers, the quintessential architecture in AI these days, a good rule of thumb is that, to make a single forward pass (a single prediction), the model has to perform ‘2*N’ operations, with ‘N’ being the number of parameters.

With mixture-of-expert networks (most networks today), ‘N’ is in reality ‘A’, the number of activated parameters. But let’s ignore this for the sake of simplicity.

Today's models are trillions of parameters large, so we are basically saying that each prediction potentially requires trillions of mathematical operations.

Furthermore, chatbots outputting fewer than ~50 words/second are considered too slow, so if we assume each prediction outputs a single word, the systems serving us models like ChatGPT have to compute on the order of 100 trillion operations per second for a 1-trillion-parameter model (2 × 1 trillion × 50), and more for larger models.

And that’s for a single user!
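The back-of-the-envelope arithmetic above can be written out as a small sketch. It assumes the 2*N rule of thumb from the text, one word per prediction, and a hypothetical 1-trillion-parameter model; for a mixture-of-experts model you would pass the activated parameter count instead.

```python
def ops_per_second(active_params: int, words_per_second: int) -> int:
    """Estimate operations per second for one user.

    Uses the rule of thumb of 2 operations per (active) parameter per
    prediction, with one prediction per output word.
    """
    ops_per_prediction = 2 * active_params
    return ops_per_prediction * words_per_second


ONE_TRILLION = 10**12

# Dense 1-trillion-parameter model at 50 words/second.
single_user = ops_per_second(ONE_TRILLION, 50)
print(f"{single_user:.1e} operations per second")
```

Multiplying the result by the number of concurrent users gives a sense of why serving fleets need so much parallel compute.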

Such an abnormal amount of computing can only be provided by GPUs, solidifying their vital role in today’s “AI economy.”

Therefore, and this is a clear distinction to have in mind, when the AI workload is “AI heavy”, GPUs steal the spotlight completely.

But here’s the point I’m trying to get to: times are changing, as agents flip the picture.

The New Workload: Agents

GPUs are used mainly for one thing: highly parallelizable workloads. But, funnily enough, agents don’t necessarily fit that workload structure.

To clarify, an agent is just an AI that uses tools and external memory to carry out actions on our behalf. This won’t mean anything to you right now, but it matters a lot.

And to understand this, let’s first imagine a standard agent workflow, and then we’ll see how it fits into hardware:

The agentic workload

Let’s envision a simple example of an order service customer support agent:

Reception. The order service system receives a request from a user. First, the agent’s prompt needs to be built, including important context from the user in question, the agent’s scaffold (instructions on how it must behave), the tool catalog (the tools at its disposal), etc.

Memory retrieval. The orchestrator loads session context (recent messages) plus long-term user profile (e.g., saved “office address” and preferred delivery name) from a memory store, and builds the initial prompt state.

Agent inference (decide next action). The model is called with the user request, retrieved memory, and the tool catalog, and returns a structured tool call request, e.g., lookup_recent_orders. In other words, the AI identifies the need to call a look-up tool to search for orders.

Tool call 1 (read). Execute lookup_recent_orders against the Order Service and return a list of candidate orders.

Agent inference (branch + next tool). The model is called again with the tool result and decides the next action, which is another tool, e.g., get_shipment_status.

Tool call 2 (read/write). Execute get_shipment_status; if not shipped, execute update_delivery_address tool. If shipped, skip the write and proceed.

Final agent inference + memory write. The model is called to produce the user-facing response (status + tracking or address-change confirmation) and, if policy allows, writes a small episodic memory update like “confirmed office address label” or “prefers office delivery when possible.”
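The workflow above can be sketched as a simple orchestration loop. Everything here is a toy under stated assumptions: the in-memory ORDERS and MEMORY dictionaries stand in for the Order Service and memory store, the IDs and addresses are made up, and `scripted_model` is a hard-coded stand-in for the real model call. The branch on shipment status is decided by the scripted model, which is only one of several ways to wire it.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    name: str
    args: dict


# Hypothetical in-memory stand-ins for the Order Service and the memory store.
ORDERS = {"o-1": {"shipped": False, "address": "Home St 1"}}
MEMORY = {"u-1": {"office_address": "Office Ave 9", "notes": []}}


def lookup_recent_orders(user_id: str) -> list:
    return list(ORDERS)  # toy: every order belongs to the single user


def get_shipment_status(order_id: str) -> bool:
    return ORDERS[order_id]["shipped"]


def update_delivery_address(order_id: str, address: str) -> bool:
    ORDERS[order_id]["address"] = address
    return True


TOOLS = {
    "lookup_recent_orders": lookup_recent_orders,
    "get_shipment_status": get_shipment_status,
    "update_delivery_address": update_delivery_address,
}


def scripted_model(state: dict):
    """Stand-in for the LLM: returns a ToolCall or a final reply string."""
    done = state["tool_results"]
    if "lookup_recent_orders" not in done:
        return ToolCall("lookup_recent_orders", {"user_id": state["user_id"]})
    order_id = done["lookup_recent_orders"][0]
    if "get_shipment_status" not in done:
        return ToolCall("get_shipment_status", {"order_id": order_id})
    if not done["get_shipment_status"] and "update_delivery_address" not in done:
        address = state["memory"]["office_address"]
        return ToolCall("update_delivery_address",
                        {"order_id": order_id, "address": address})
    if done["get_shipment_status"]:
        return "Your order has already shipped."
    return "Delivery address changed to your office."


def run_agent(user_id: str, request: str):
    # Reception + memory retrieval: build the initial prompt state.
    state = {"user_id": user_id, "request": request,
             "memory": MEMORY[user_id], "tool_results": {}}
    trace = []
    while True:
        action = scripted_model(state)        # agent inference
        if isinstance(action, str):           # final user-facing response
            # Episodic memory write (the toy policy always allows it).
            MEMORY[user_id]["notes"].append("prefers office delivery when possible")
            return action, trace
        result = TOOLS[action.name](**action.args)  # tool call
        state["tool_results"][action.name] = result
        trace.append(action.name)
```

Calling `run_agent("u-1", "Please send my order to the office")` returns the reply plus the ordered list of tools that were invoked, which makes it easy to see how many non-model steps surround each model call.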

The world is moving toward these workloads, as agents are poised to be the trillion-dollar use case. But here’s the thing: if you look carefully… something is different.

The key player: the CPU.

Now let’s look at where each step runs, hardware-wise.

Receive user request, runs on the gateway/orchestrator CPU because it’s networking, auth, routing, and initializing run state; no GPU work yet.

Fetch profile context, runs on CPU because it’s storage/network IO to memory systems (KV store/vector DB) plus prompt assembly in host RAM.

Infer next action using an AI model, runs on model-serving GPU(s). CPU only dispatches and collects output.

The order lookup call runs on CPU and network because it’s an RPC/HTTP call to the Order Service (CPU-backed service + database); results are returned to host RAM.

Infer branch decision, runs on GPU(s) again because the model conditions on the tool output to choose the next step; CPU packages the updated prompt.

Execute status update, runs on CPU + network because shipment-status and address change have to be executed over a remote service connected to the system (like the orders database); orchestrator coordinates side effects and stores results in host RAM.

Respond and persist. Response generation runs on GPU(s); streaming and formatting run on CPU; episodic memory writes are CPU-driven IO to a memory store and are persisted off-GPU.
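As a quick tally of the mapping above, the sketch below records the primary hardware for each step and counts them. The labels are a simplification taken from the descriptions (the CPU also dispatches and packages prompts around every GPU step), and counting steps says nothing about how long each one takes.

```python
from collections import Counter

# (step, primary hardware) pairs, summarizing the walkthrough above.
STEPS = [
    ("Receive user request", "CPU"),
    ("Fetch profile context", "CPU"),
    ("Infer next action", "GPU"),
    ("Order lookup call", "CPU"),
    ("Infer branch decision", "GPU"),
    ("Execute status update", "CPU"),
    ("Respond and persist", "GPU+CPU"),
]


def tally_by_hardware(steps) -> Counter:
    """Count how many steps run primarily on each kind of hardware."""
    return Counter(hardware for _, hardware in steps)


counts = tally_by_hardware(STEPS)
for hardware, n_steps in counts.most_common():
    print(f"{hardware}: {n_steps} step(s)")
```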

Do you realize now what is going on? Suddenly, the workload is mostly CPU-bound!

This sudden shift toward the CPU is probably far more real than we, as an industry, had realized just a few months ago. But you don’t have to trust this small toy example: we already have research proof. A paper from Intel showed exactly this.

But how bad is it? Well, pretty bad. Basically, CPUs are the bottleneck in agent workloads, and by a long shot.

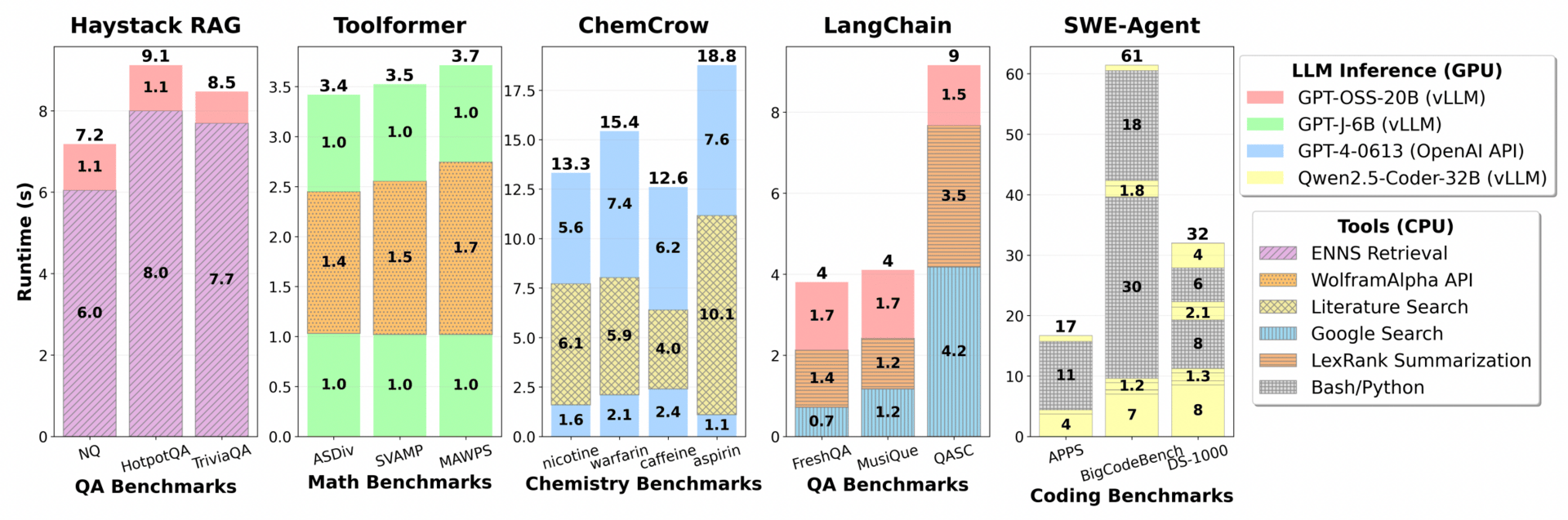

To prove this, researchers ran several LLMs using different agent scaffolds and tools and found that CPU work accounted for the overwhelming majority of overall agent latency, especially in pervasive patterns like RAG (Retrieval Augmented Generation).

Mind you, most of the workloads they tested aren’t even fully agentic workloads, where the CPU penalty is even larger.

And just like that, suddenly everyone cares about CPUs as much as they care about GPUs, or more. This has prompted NVIDIA to launch an aggressive strategy to become a ‘CPU supplier’ and leave the ‘GPU-only’ tag behind, starting with the announcement of standalone CPU servers: entire racks filled with CPUs.

But here’s the thing: for the first time in the AI trade, NVIDIA is now playing catch-up.

However, make no mistake; there are many other players to watch, and we will compare them all to determine the most likely winner.
