THEWHITEBOX

TLDR;

This week, we examine new dubious valuations, startup drama, and another telling event about NVIDIA’s future. We then move to the world’s first known attempt at leveraging inference-time compute for video generation and research showing how AIs learn similarly to humans.

Finally, we take a look at Amazon’s new feature and how OpenAI is conceding and adopting its biggest competitor’s communication protocol.

Enjoy!

THEWHITEBOX

Things You’ve Missed By Not Being Premium

On Tuesday, we sent our weekly Premium news rundown packed with additional insights on AI labs, including how OpenAI, Google, and DeepSeek are accelerating the release of new models. There is also a new AGI benchmark, a study of AI and loneliness, and more.

AGENTS

The Future of Agent Payments

Manny Medina, founder and former CEO of Outreach, has launched a new startup called Paid, aiming to address the unique billing challenges faced by companies developing AI agents.

The company argues that traditional software billing models, such as per-user or usage-based pricing, are inadequate for AI agents that often operate autonomously and perform entire roles.

Paid offers a platform that enables agentic startups to create flexible pricing strategies—both fixed and variable—focused on profitable margins. The platform also tracks agents’ output to validate return on investment, effectively blending billing and HR management for the AI agent era.

Paid has secured €10 million (approximately $11 million) in pre-seed funding from investors including EQT Ventures, Sequoia, and GTMFund.

TheWhiteBox’s takeaway:

If you’re a regular reader of this newsletter, you’ll know that we have been proclaiming the death of seat-based pricing. With agentic software, it really doesn’t make sense.

Instead, we have been proposing usage-based pricing, where customers pay only for what they consume. This makes much more sense in a world where most software will act on our behalf based on what we instruct.

But Paid takes it a step further, and I think they are actually onto something. The idea would be outcome-based pricing: getting billed only for successful agent inferences (the whole point of this platform is to measure agents’ success and clearly separate irrelevant inferences from those that matter).

Since agents are probabilistic software, picturing a world with 100% agent accuracy is pointless. Worse, if we factor in multi-agent scenarios, the customer will feel hopeless trying to measure return on investment, let alone agent accuracy. Consequently, this idea of getting paid per successful outcome makes a lot of sense but feels incredibly hard to measure.
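To get a feel for why multi-agent setups make accuracy so hard to reason about, here is a back-of-the-envelope sketch in Python. It assumes every agent in a chain succeeds independently with the same probability, and all prices are made-up numbers for illustration, not anything Paid has published.

```python
def chain_success(per_agent_accuracy: float, n_agents: int) -> float:
    """Probability that every agent in a sequential chain succeeds,
    assuming independent failures (a simplifying assumption)."""
    return per_agent_accuracy ** n_agents


def usage_bill(runs: int, price_per_run: float) -> float:
    """Usage-based pricing: every run is billed, successful or not."""
    return runs * price_per_run


def outcome_bill(runs: int, success_rate: float, price_per_success: float) -> float:
    """Outcome-based pricing: only successful runs are billed (in expectation)."""
    return runs * success_rate * price_per_success


# Five chained agents, each right 95% of the time (hypothetical figures).
end_to_end = chain_success(0.95, 5)
print(f"end-to-end success rate: {end_to_end:.1%}")
print(f"usage-based bill for 1,000 runs:   ${usage_bill(1_000, 0.10):,.2f}")
print(f"outcome-based bill for 1,000 runs: ${outcome_bill(1_000, end_to_end, 0.15):,.2f}")
```

Even with agents that are individually quite reliable, the end-to-end success rate drops quickly, which is why billing per successful outcome depends so heavily on measuring that rate well.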

If Paid pulls it off, this is a billion-dollar startup in the making.

It’s also interesting to see how we are witnessing the creation of a new software stack around agents: new client-server communication protocols (MCP), new metering software (Paid), and auth for agents (Okta), among many others.

PRIVATE MARKETS

Perplexity, an $18 Billion Startup?

Perplexity AI, an artificial intelligence search startup, is reportedly in early discussions to raise up to $1 billion in a new funding round that would value the company at $18 billion.

This valuation represents a significant increase from its $9 billion valuation in December 2024. The startup offers an AI-powered search engine that provides real-time answers by searching the internet, positioning itself as a competitor to Google’s Gemini and OpenAI’s ChatGPT.

Furthermore, Perplexity introduced Comet, a web browser that leverages AI to perform complex searches and tasks. They plan to release it soon to fully enter the consumer market (currently, their only entry point for consumers is their website).

TheWhiteBox’s takeaway:

Personally, I’m having a tough time understanding Perplexity’s valuations. Taken in isolation, the number doesn’t seem ridiculous. The market they are trying to win, search, is a $200 billion market (primarily dominated by Google) that is not only huge but growing incredibly fast, at a projected 10% CAGR (Compound Annual Growth Rate), which, if true, would mean a $400+ billion market by 2032. On top of that, they have just crossed $100 million in ARR (Annual Recurring Revenue).

My problem with their valuation comes when you perform relative comparisons. Anthropic recently raised at a $60-ish billion valuation while having already crossed the $1 billion revenue mark, roughly ten times Perplexity’s. That means Anthropic trades at about 60 times revenue (a price-to-sales multiple rather than a true P/E, since none of these companies has earnings to speak of), while Perplexity sits at a jaw-dropping 180, three times more.

Could it be that Anthropic is undervalued?

It doesn’t seem that way if you compare it to OpenAI’s most recent valuation (a multiple of 39 times revenue, with a $156 billion valuation and $4 billion in revenues). Let’s not forget they are all trading at a significant premium compared to Big Tech. In Perplexity’s case, we must also factor in that their most important competitor is Google, which has a P/E ratio of 20, is a profitable cash cow, and is fully verticalized (they own both the AI and the underlying hardware).
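For anyone who wants to check the arithmetic, here is a small Python sketch using only the figures quoted above. Note that the ratios divide valuation by revenue, so strictly speaking they are revenue multiples. The base year for the market projection is not stated in the text, so the sketch simply tries both seven and eight years of compounding as an assumption.

```python
def revenue_multiple(valuation: float, annual_revenue: float) -> float:
    """Valuation divided by annual revenue (a price-to-sales style multiple)."""
    return valuation / annual_revenue


def project(market_size: float, cagr: float, years: int) -> float:
    """Compound a market size forward at a constant annual growth rate."""
    return market_size * (1 + cagr) ** years


# (valuation, annual revenue) in dollars, as quoted in the text.
companies = {
    "Perplexity": (18e9, 100e6),
    "Anthropic": (60e9, 1e9),
    "OpenAI": (156e9, 4e9),
}
for name, (valuation, revenue) in companies.items():
    print(f"{name}: {revenue_multiple(valuation, revenue):.0f}x revenue")

# A $200B market at 10% CAGR; the number of years is an assumption.
for years in (7, 8):
    print(f"after {years} years: ${project(200e9, 0.10, years) / 1e9:.0f}B")
```

Seven years of compounding lands just under $400 billion and eight years lands above it, so the "$400+ billion by 2032" figure is in the right ballpark either way.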

Private companies tend to have a premium over public ones, but let’s not forget that all these companies are stupidly unprofitable. So at some point we must ask: bubble much?

As for the browser play, it could be a home run. The idea they are pitching is a deep-research-type product at scale, making all your Internet searches ones where the AI “reasons” over your request to provide not only valuable links, but also additional insights.

That sounds incredible to me, but also sounds incredibly expensive to serve, which means it’s a sign of many future investment rounds. We must also remain cautious with their claims because their Deep Research tool is miles behind ChatGPT's, for instance.

One great thing about Comet is that it seems very RAM efficient compared to Chrome (Chrome eats your RAM like crazy, a deal breaker for anyone running local AIs like me; I currently use Brave).

By the way, if you’re curious about which tool I use for search (both standard and deep research), it’s ChatGPT. They are head and shoulders above the rest, especially on the latter (even when factoring in Grok 3).

PRIVATE MARKETS

AI Illusions & Lies

11x, an AI-powered sales automation startup, has faced scrutiny over its business practices. Despite reporting rapid growth and securing significant investments—a $24 million Series A led by Benchmark and a $50 million Series B led by Andreessen Horowitz—the company has been accused of misrepresenting its customer base, with misrepresenting being a euphemism for ‘lying about’.

Multiple companies, including ZoomInfo and Airtable, have stated that they were inaccurately listed as clients on 11x’s website without consent. ZoomInfo, for instance, conducted only a brief trial and did not proceed further due to unsatisfactory performance. Despite this, 11x continued to present them as a customer, leading ZoomInfo to consider legal action for deceptive practices and trademark infringement.

Additionally, concerns have been raised about 11x’s calculation of annual recurring revenue (ARR). The company reportedly included full-year projections from clients who had the option to terminate contracts after a short trial period, potentially inflating ARR figures. High customer turnover was also noted, with reports indicating that 70-80% of clients discontinued use after initial engagement.

To make matters worse, internally, 11x has been described as having a demanding work environment, with expectations of long hours and constant availability. This has reportedly resulted in significant employee turnover, with only the CEO remaining from the original team.

TheWhiteBox’s takeaway:

The fugazi we saw during the dot-com bubble, where many companies were ridiculous ideas raising millions by adding ‘.com’ to their name, will happen in AI too.

As I discussed on Sunday, I’m highly skeptical of the hype surrounding many application-layer AI startups with impressive initial traction. Partly, one must ask to what extent that traction is real, but mainly I believe it will be short-lived, as the model-layer companies cannibalize their businesses as models get stronger.

I see most of these businesses as ‘oasis in a desert’ illusions for VCs, who see what appears to be the oasis they are so desperately looking for to save their funds. The hardware and infrastructure layers are either public companies or multibillion-dollar CAPEX moonshots, and the model layer is not only considerably well capitalized but also rapidly commoditizing. Unless you’re Benchmark, Sequoia, or A16Z, the application layer is the only place for VCs to hope to make money.

However, this is, by far, the hardest layer to win, because model-layer companies are incentivized to verticalize upward and will do so naturally through model improvement.

If you want to understand better how software startups can protect themselves from this, read my recent Leaders segment.

So, where will most of the money be made, then? Simple: buying companies and making them more efficient using AI.

It’s not about creating AI products, but about being the best at using them.

M&A

NVIDIA Acquires Gretel

NVIDIA has bought Gretel, a synthetic data generation platform, for a nine-figure undisclosed value. Gretel’s software helps you create synthetic data for AI training at scale.

Synthetic data, meaning data for AI training created by other AIs, has become a crucial aspect of AI model training, both because companies struggle to find new data to scrape and, above all, for use cases where data is simply non-existent, as in reasoning.

For instance, a prevalent approach these days is to use open-source DeepSeek R1 to create synthetic reasoning chains (the process the model follows to answer a task) and use these chains to fine-tune other models.
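As a rough illustration of that workflow, the Python sketch below collects reasoning chains from a "teacher" and turns them into fine-tuning records. The `teacher_generate` function is a hypothetical stand-in that returns canned text instead of calling a real model, the `prompt`/`completion` record format is an assumption, and the step that discards chains with a wrong final answer is an extra added for the sketch, not something described above.

```python
import json


def teacher_generate(question: str) -> str:
    """Hypothetical stand-in for a reasoning model: returns a canned
    reasoning chain followed by a final answer line."""
    canned = {
        "What is 12 * 7?": "12 * 7 = 12 * 5 + 12 * 2 = 60 + 24.\nAnswer: 84",
        # Deliberately wrong chain, to show the filter at work.
        "What is 9 + 16?": "9 + 16 = 9 + 10 + 6 = 26.\nAnswer: 26",
    }
    return canned[question]


def final_answer(completion: str) -> str:
    """Extract whatever follows the last 'Answer:' marker."""
    return completion.rsplit("Answer:", 1)[-1].strip()


def build_dataset(problems):
    """Keep only the chains whose final answer matches the known answer."""
    records = []
    for question, expected in problems:
        completion = teacher_generate(question)
        if final_answer(completion) == expected:
            records.append({"prompt": question, "completion": completion})
    return records


problems = [("What is 12 * 7?", "84"), ("What is 9 + 16?", "25")]
dataset = build_dataset(problems)

# One JSON object per line, a common layout for fine-tuning files.
jsonl = "\n".join(json.dumps(record) for record in dataset)
print(jsonl)
```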

TheWhiteBox’s takeaway:

NVIDIA understands better than anyone that having all your eggs in one basket is a risk. Currently, roughly 90% of their business is selling AI GPUs; I believe it’s by far the least diversified Big Tech company.

As we’ve covered in the past, NVIDIA is positioning itself as a company with strong plays in other areas of AI besides hardware, mainly data (see above), robotics (in all facets), and autonomous driving.

As for this particular purchase, based on recent model releases like Nemotron, I believe NVIDIA is seriously pondering the idea of getting into the AI model training game.

The reasons, I believe, are two:

The more popular open-source AI gets, the more their GPUs get used.

They are trying to become an accelerated Hyperscaler (an infrastructure company for accelerated compute, aka GPU clouds) and have mostly failed so far. Creating leading open-source models and offering them through their NIM (NVIDIA Inference Microservices) cloud service is a great way to incentivize adoption.

As for the Lepton acquisition, it’s clear that they are all-in on their cloud computing business; investors should pay close attention in future earnings calls to see if Colette or Jensen publish cloud computing revenues (which would be a signal that they are growing and massively bullish for them, and a bearish signal otherwise).

You have probably heard of OpenAI’s recent image generation mode. This news is so relevant that it will occupy this Sunday’s entire Leaders segment. Stay tuned.

FRONTIER RESEARCH

Unlocking Inference-time Compute for Video Generation

A team of researchers at Tencent—yet again, China—has published what seems to be the first real attempt at unlocking inference-time compute on video models.

This paradigm, which is all the rage right now for assistants like ChatGPT or Claude, refers to unlocking more compute for the model during inference. In other words, it lets models “think for longer on a task.”

In video generation, the idea would be to sample several generative trajectories (several different parallel generations of the video) combined with internal verifiers (surrogate models that check the intermediate results and critique them to guide the generator), but using a search technique they’ve called ‘Tree of Frames’.

Think of this method as follows: Instead of generating 10 entirely different ways of solving a math equation, a tree search will combine the various generations, expanding on promising solutions and rejecting ones that lead to dead ends.

For instance, one candidate might have a better start, but another may have better final execution. Thus, we cut the last part of the first candidate short and continue with the progress of the other candidate, ending with a result that requires 1.5x compute instead of 2x.
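To make the "expand the promising branches, prune the dead ends" idea concrete, here is a toy beam search in Python. It is not the paper’s Tree of Frames algorithm: the "frames" are just numbers, `propose_next_frames` is a hypothetical stand-in for the video generator, and `verifier_score` is a made-up verifier that simply prefers smooth sequences.

```python
import random


def propose_next_frames(video, k, rng):
    """Toy generator: propose k candidate next frames (here, plain numbers)."""
    last = video[-1]
    return [last + rng.uniform(-1.0, 1.0) for _ in range(k)]


def verifier_score(video):
    """Toy verifier: higher is better, penalizing frame-to-frame jumps."""
    return -sum(abs(b - a) for a, b in zip(video, video[1:]))


def tree_search(n_frames, branch, beam, seed=0):
    """Grow partial videos frame by frame, keeping only the `beam` best."""
    rng = random.Random(seed)
    beams = [[0.0]]  # every candidate starts from the same first frame
    for _ in range(n_frames - 1):
        expanded = [
            video + [frame]
            for video in beams
            for frame in propose_next_frames(video, branch, rng)
        ]
        # Keep the most promising partial videos and prune the rest.
        beams = sorted(expanded, key=verifier_score, reverse=True)[:beam]
    return beams[0]


best = tree_search(n_frames=8, branch=4, beam=3)
print(best, verifier_score(best))
```

The compute saving comes from the pruning step: weak partial videos are dropped early, so no compute is spent finishing them.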

Very, very promising research.

TheWhiteBox’s takeaway:

The logic of this research is obvious: Inference-time compute, which has allowed LLMs to continue to push the frontier of what they can achieve, should and will transfer to other modalities.

Allowing a model to deploy more compute to work on a task is obviously going to improve its results. However, the key takeaway is that the first company we know to be actively doing this is not from the US but from China.

This means one of two things:

US companies like OpenAI are doing this too, which means they are becoming less and less open about their work.

US companies aren’t doing this, which means China is leading in innovation.

Honestly, I don’t know which one’s worse for the US’s aim for AI supremacy.

HEALTHCARE

Mapping the Brain with AI

A recent study by Google Research, in collaboration with Princeton University, NYU, and HUJI, investigated the parallels between human brain activity and the internal representations of Large Language Models (LLMs) during natural language processing.

The researchers analyzed neural activity recorded via intracranial electrodes from individuals engaged in spontaneous conversations. In layman’s terms, they measured brain activity while people were having conversations.

Then, they compared this neural data with the contextual embeddings generated by a Transformer-based speech-to-text model, specifically OpenAI’s Whisper. The findings revealed a notable linear alignment between the brain’s neural patterns and the model’s embeddings: neural activity in speech-related brain regions corresponded with the model’s speech embeddings, while activity in language-associated areas aligned with the model’s language embeddings.
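A "linear alignment" of this kind is usually tested by fitting a linear map from the model’s embeddings to the recorded activity on some words and checking how well it predicts held-out words. The Python sketch below shows that recipe on entirely synthetic data: the embeddings, the "neural" signal, and the hidden linear map are all made up, and nothing here uses Whisper or real recordings.

```python
import random


def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def fit_linear(xs, ys, lr=0.05, steps=500):
    """Least-squares fit of y ~ w.x by full-batch gradient descent."""
    w = [0.0] * len(xs[0])
    n = len(xs)
    for _ in range(steps):
        errors = [dot(w, x) - y for x, y in zip(xs, ys)]
        for j in range(len(w)):
            grad = 2.0 / n * sum(e * x[j] for e, x in zip(errors, xs))
            w[j] -= lr * grad
    return w


def pearson(a, b):
    """Pearson correlation between two equally long sequences."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


rng = random.Random(0)
hidden_map = [0.8, -0.5, 0.3]  # synthetic ground truth, invented for the demo
embeddings = [[rng.gauss(0, 1) for _ in range(3)] for _ in range(200)]
neural = [dot(hidden_map, e) + rng.gauss(0, 0.1) for e in embeddings]

# Fit on the first 150 "words", evaluate on the remaining 50.
weights = fit_linear(embeddings[:150], neural[:150])
predicted = [dot(weights, e) for e in embeddings[150:]]
score = pearson(predicted, neural[150:])
print(f"held-out correlation: {score:.3f}")
```

A high held-out correlation is what "the embeddings linearly align with neural activity" means in practice; with real data the correlations are of course far noisier than in this toy setup.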

This study suggests that LLMs, despite their distinct computational frameworks, can serve as practical tools for understanding the neural mechanisms underlying human language comprehension.

TheWhiteBox’s takeaway:

In summary, this fascinating research shows that AIs seem to be learning similar representations about the world to humans.

When exposed to text, the internal model representations align pretty closely to the patterns we observe in the human brain in the areas dedicated to language, and the same occurs for speech.

But wait, what is a representation? AIs compress the knowledge they are exposed to during training. In other words, they capture knowledge in some shape or form. This knowledge is expressed internally as ‘representations’ that tell us whether the models grasp the key underlying patterns behind concepts.

For example, the representations of ‘dog’ and ‘cat’ are much more similar to each other than those of ‘dog’ and ‘trousers’, because dogs share many more similarities with cats than they do with trousers.
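In practice, a representation is a vector of numbers, and "similar" usually means the vectors point in nearly the same direction. Here is a minimal Python sketch with hand-made three-dimensional vectors; real models use hundreds or thousands of dimensions, and these particular values are invented purely for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity: close to 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Invented vectors; the dimensions loosely mean "animal", "pet", "clothing".
vectors = {
    "dog": [0.9, 0.8, 0.1],
    "cat": [0.8, 0.9, 0.1],
    "trousers": [0.1, 0.1, 0.9],
}

print(f"dog vs cat:      {cosine(vectors['dog'], vectors['cat']):.2f}")
print(f"dog vs trousers: {cosine(vectors['dog'], vectors['trousers']):.2f}")
```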

However, there’s a big question about the quality of these representations. Many experts, like Meta’s Chief AI Scientist Yann LeCun, are notoriously against the idea that LLMs build an accurate understanding of the world. We mustn’t forget that these models learn from the world mainly through text, which is nowhere near the quality signal we humans receive through our senses, so Yann has a strong argument in his favor.

However, the fact that these models seem to be capturing similar patterns as humans inevitably forces us to ask ourselves whether LLM enthusiasts might be on to something here. With the rise of multimodal models, models that no longer learn principally from text but from other formats like images or video, one has to question whether skeptics, including myself, could soon be proven totally wrong.

AGENTS

OpenAI Embraces MCP

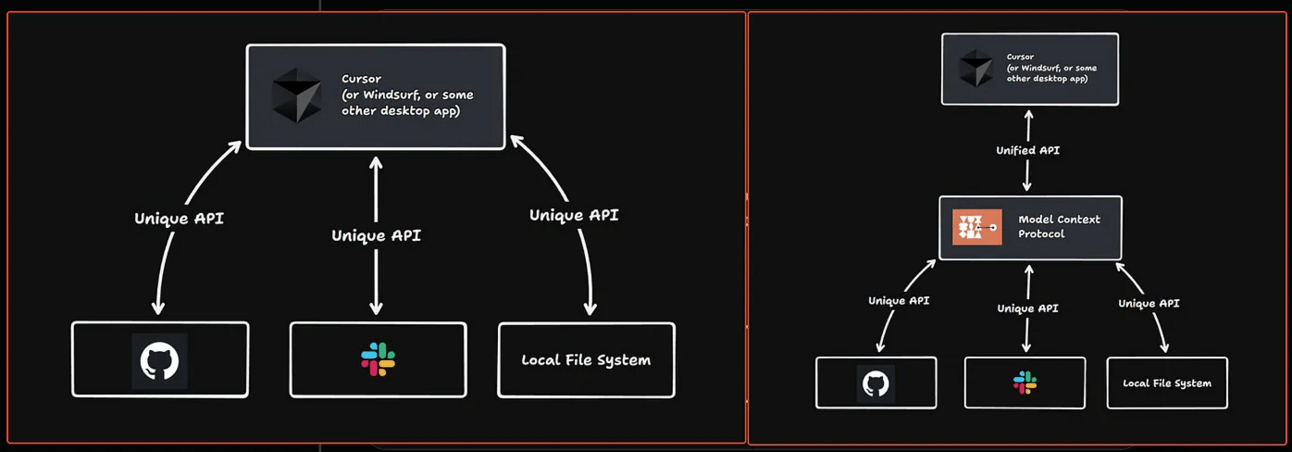

If there were doubts about MCP, Anthropic’s open-source client-server protocol for agents, they have pretty much been put to rest now that OpenAI has officially added support.

But what is MCP? The Model Context Protocol is a recently presented standard for agent-tool communication.

In layman’s terms, it creates a language-based interface between your agent and the tools it needs to use: each tool describes itself in plain language the model can read. In other words, once an agent identifies the need to use a tool (like browsing the web), it can connect to Brave’s MCP server and, in effect, ask it, “Give me results on Austin’s weather for today.” The Brave MCP server receives the request and returns a response, typically text, with all the information.

Think of MCP this way: it’s a standard that lets agentic software use tools through a largely English-based interface, sparing you from having to build each integration yourself. But how does that differ from APIs?

Well, standardization. To an agent using MCP, all tools and resources are accessed in the same way.
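To make "accessed in the same way" concrete: on the wire, the MCP specification frames messages as JSON-RPC 2.0, with `tools/list` to discover tools and `tools/call` to invoke one, while the natural language lives mostly in the tool descriptions and in the text that comes back. The Python sketch below only builds the request payloads and does not talk to any server. The tool names and arguments (`web_search`, `find_account`) are hypothetical.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def list_tools_request():
    """Ask a server which tools it offers."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}


def call_tool_request(tool_name, arguments):
    """Invoke any tool on any server with the same message shape."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Two unrelated (hypothetical) tools, one identical envelope.
search = call_tool_request("web_search", {"query": "Austin weather today"})
crm = call_tool_request("find_account", {"company": "Acme"})
print(json.dumps(search, indent=2))
print(json.dumps(crm, indent=2))
```

The only things that change between a search engine and a CRM are the tool name and its arguments, which is exactly what lets one agent work with any compliant server.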

To me, this is literally the future of software, no doubt about it.

So what has OpenAI done? Well, they now allow their agents to serve as MCP clients (software agents that can now use MCP).

This also helps a lot in visualizing what software will look like in a year:

An agent-based MCP client, the agent that receives user instructions and orchestrates the task.

MCP Servers acting as tools for agents. In other words, the Salesforces and Workdays of our time will evolve into tools for agents; while you will still be able to log into your Salesforce UI, most of Salesforce’s traffic will come from agents.

All the necessary layers (like auth, IAM, cyber, metering, payments, and so on) will also be agents interacting with yours.

Welcome to the future!

PRODUCT

Amazon’s New ‘Interests’ Button

Amazon has shipped a new ‘Interests’ button that allows users to describe in natural language the types of products they might be interested in so that the recommendation engine knows what products to push to them.

TheWhiteBox’s takeaway:

An interesting idea that builds on our intuition of what software is becoming: an English-based interface. But importantly, one that learns from you. I expect most software to strive to learn from you over time and adapt to your renewed interests, and it feels like Amazon shares this idea.

Under the hood, I believe this feature is a system where the text the user writes is sent to an LLM that parses the interests and computes their semantic similarity with products that could meet them.

For example, if the user writes, “I’m a big fan of cycling, pottery, and electronics,” the parsing LLM might turn that into a series of sentences that describe each interest better, such as “he/she likes bikes,” so that the word bike is used to find semantically similar products (bikes, naturally).
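Here is a toy Python sketch of that guessed pipeline, so it is a guess on top of a guess and says nothing about how Amazon actually built the feature. The `EXPANSIONS` table is a hypothetical stand-in for the parsing LLM, the catalog is invented, and word-count vectors stand in for real semantic embeddings.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for the parsing LLM: richer phrases per raw interest.
EXPANSIONS = {
    "cycling": "likes bikes bike helmets cycling gear",
    "pottery": "likes pottery clay ceramics pottery wheel",
    "electronics": "likes electronics gadgets headphones chargers",
}

# Invented product catalog.
CATALOG = [
    "mountain bike with disc brakes",
    "air-dry clay for ceramics",
    "wireless headphones",
    "garden hose 20 m",
]


def embed(text):
    """Crude stand-in for an embedding: a bag of lowercase words."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def recommend(user_text, top_k=3):
    """Expand the stated interests, then rank products by similarity."""
    interests = [word for word in embed(user_text) if word in EXPANSIONS]
    profile = embed(" ".join(EXPANSIONS[i] for i in interests))
    ranked = sorted(CATALOG, key=lambda p: cosine(profile, embed(p)), reverse=True)
    return ranked[:top_k]


picks = recommend("I'm a big fan of cycling, pottery, and electronics")
print(picks)
```

Notice that the raw word "cycling" shares nothing with "mountain bike with disc brakes"; it is the expansion step that introduces "bike" and makes the match possible, which is the role the parsing LLM plays in the description above.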

If you plan on trying out this feature, I recommend being particularly specific with your interests, so that the potential semantic matches reflect what you want.

Remember: LLMs' performance is only as good as the context you send them.

THEWHITEBOX

Join Premium Today!

If you like this content, by joining Premium, you will receive four times as much content weekly without saturating your inbox. You will even be able to ask the questions you need answers to.

Until next time!

For business inquiries, reach out to me at [email protected]