FUTURE

Clarifying the Future of Software

After months of thinking about it, and after dozens of articles, papers, and keynotes, I think I finally understand the future of software. And I’m now fully convinced that most AI and software startups are doomed.

In fact, almost everyone, including the AI startups building so-called “agents for X,” is doing it wrong. And I’m not the only one saying this; OpenAI and Anthropic have expressed the same sentiment.

The funny thing? I was doing it wrong too!

By the end of today’s piece, I guarantee you will be able to evaluate any software product and determine whether it will survive AI.

This is an important read for:

Business owners using or planning to use AI software

Investors in software (public and private), Big Tech, and index funds in general

Business leaders whose daily work, and that of their employees, runs on software, because it will help them deal with crappy salesmen and make better business decisions.

Here we go!

What Agents Actually Are

We’ve discussed agents extensively in the past, even offering a technical definition that goes further than most.

However, my definition lacked one crucial piece, which an Anthropic researcher helped me identify.

An End-to-End Goal Achiever

For most people, agents are AI models that interact with an environment and have a goal they must achieve under a set of constraints. A great example is AlphaGo, an AI agent that played the board game Go to a superhuman level.

In that case, we had the following variables:

Environment: the board game.

State: the state of the game being played. (In reality, Go is fully observable, but most environments aren’t, so we would normally speak not of the state itself but of an observation of it, a partial representation.)

Goal: win.

Constraints: the rules of Go.

The AI model then learns an action policy that maximizes the likelihood of achieving the goal within that environment and under those constraints. This is a definition most people, even experts, will be okay with.
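To make the environment, state, goal, constraints, and policy vocabulary concrete, here is a minimal Python sketch. It is a toy of our own, not AlphaGo: a six-cell corridor stands in for the board, clamping at the walls stands in for the rules, and plain tabular Q-learning stands in for the far more elaborate training AlphaGo used. All names (`step`, `learn_policy`, `N_CELLS`, `GOAL`) are hypothetical.

```python
import random

# Toy illustration of the agent framing (hypothetical, far simpler than Go):
#   Environment: a corridor of cells 0..N_CELLS-1; the agent starts at cell 0.
#   State: the agent's cell (fully observable here, like a Go board).
#   Goal: reach the last cell.
#   Constraints: only "left"/"right" moves, and walls stop the agent at the ends.
N_CELLS = 6
ACTIONS = ("left", "right")
GOAL = N_CELLS - 1


def step(state: int, action: str) -> int:
    """Apply an action under the constraints; walls clamp the position."""
    delta = -1 if action == "left" else 1
    return min(max(state + delta, 0), GOAL)


def learn_policy(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
                 epsilon: float = 0.2, seed: int = 0) -> dict[int, str]:
    """Tabular Q-learning: estimate action values, then read off a greedy policy."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}

    def best_action(s: int) -> str:
        top = max(q[(s, a)] for a in ACTIONS)
        return rng.choice([a for a in ACTIONS if q[(s, a)] == top])  # random tie-break

    for _ in range(episodes):
        state = 0
        while state != GOAL:
            # Explore with probability epsilon, otherwise exploit current estimates.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else best_action(state)
            nxt = step(state, action)
            r = 1.0 if nxt == GOAL else 0.0  # reward only for achieving the goal
            target = r + gamma * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = nxt

    # The learned policy: the highest-valued action in each non-goal cell.
    return {s: best_action(s) for s in range(GOAL)}


print(learn_policy())  # every cell should map to "right"
```

Exploration (`epsilon`) is what lets the agent stumble onto the goal before it has any value estimates; after that, the learned values steer it straight there.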

But it’s missing a crucial piece: the engineering mindset. With agents, how the AI achieves the goal is as important as achieving it… and I totally missed that part.

Crucially, while nearly everyone else misses that part too, it’s precisely the one that will determine your software product’s odds of survival.

Today, we are fixing the definition for good (and actually visualizing it). But what do I mean by this?

Workflow vs Agentic

Cutting to the chase, most software thought to be agentic is, in fact, not. I realized this while watching how the Head of Applied AI at the startup Ramp used agents internally.

Ramp is a company that helps other companies, mainly startups, by offering corporate charge cards, expense management, and bill-payment software.

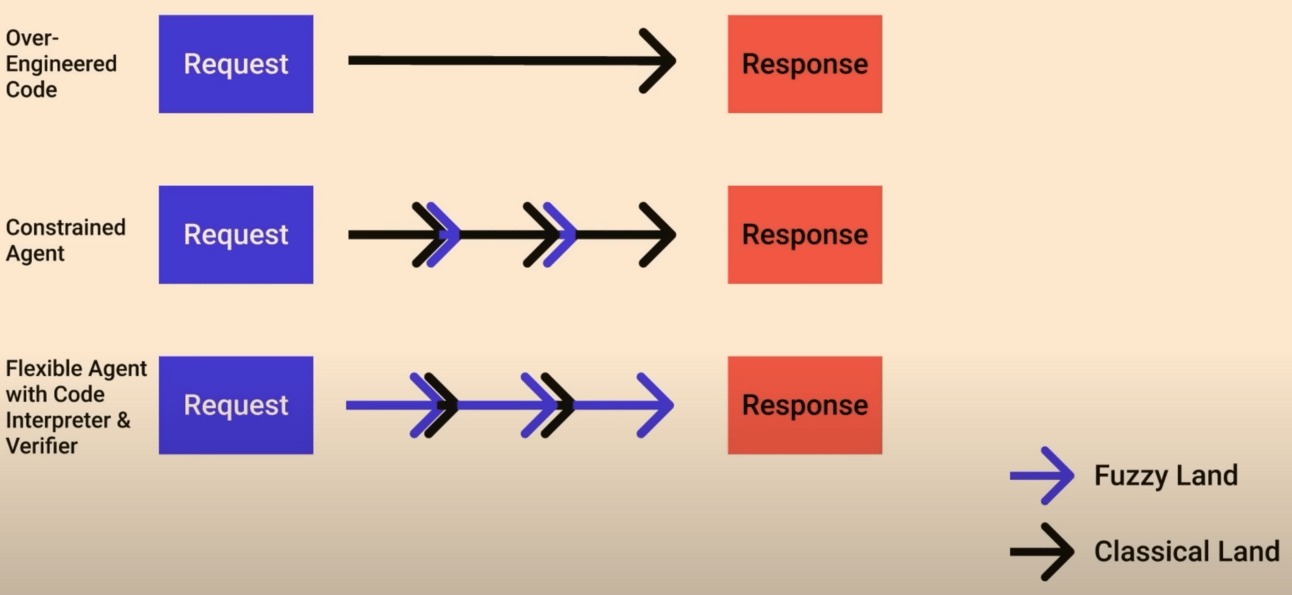

In his keynote at a conference on agents, he defined three ways of building software:

Over-engineered code. Engineers aim for fully deterministic, often hardcoded and rigid software. The code is entirely predictable, but it is not AI-enhanced (no AI participates in the process) and therefore not flexible (if something changes, the code has to be updated). This is the state of most code today.

Constrained agent. We bring in an AI model to help us do our job better, but human-engineered scaffolding guides the entire process. We adopt AI where it suits us but resist the temptation to set it free. In other words, the human-written code makes the calls.

Agentic. The AI model is given a task that it must fulfill independently. Human-written code still plays a role, but as a tool that empowers the AI, not the other way around.

Source: Ramp
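The three approaches differ mainly in who owns the control flow. The Python sketch below contrasts them on a made-up expense-categorization task; it is our own illustration, not Ramp’s code. `workflow`, `constrained`, `agentic`, the `scripted` fake model, the `lookup_merchant` tool, and the "tool_name: argument" reply format are all hypothetical, and a real system would call an actual LLM wherever `scripted` is used.

```python
from typing import Callable

Model = Callable[[str], str]  # stand-in for an LLM call: prompt in, text out
Tool = Callable[[str], str]   # human-written code the model may invoke

CATEGORIES = {"travel", "meals", "software"}


# 1) Deterministic workflow: hardcoded rules decide everything.
def workflow(expense: str) -> str:
    text = expense.lower()
    if "flight" in text or "hotel" in text:
        return "travel"
    return "other"


# 2) Constrained agent: the code owns the flow; the model fills in one step.
def constrained(expense: str, model: Model) -> str:
    answer = model(f"Categorize this expense: {expense}")
    # Human-written code still makes the final call on what is accepted.
    return answer if answer in CATEGORIES else "other"


# 3) Agentic: the model decides which tool to call next and when it is done;
#    human-written code is reduced to tools that serve the model.
def agentic(goal: str, model: Model, tools: dict[str, Tool], max_steps: int = 5) -> str:
    transcript = f"Goal: {goal}"
    for _ in range(max_steps):
        # Assumed reply format: "tool_name: argument" or "DONE: final answer".
        decision = model(transcript)
        name, _, arg = decision.partition(": ")
        if name == "DONE":
            return arg
        result = tools[name](arg) if name in tools else f"unknown tool {name}"
        transcript += f"\n{decision}\n-> {result}"
    return "unresolved"  # step budget exhausted


def scripted(replies: list[str]) -> Model:
    """Fake model that returns canned replies in order (demo only)."""
    it = iter(replies)
    return lambda prompt: next(it)


tools = {"lookup_merchant": lambda m: "airline" if m == "Delta" else "unknown"}
print(workflow("Hotel in Paris"))                         # travel
print(constrained("Figma seat", scripted(["software"])))  # software
print(agentic("Categorize: Delta ticket",
              scripted(["lookup_merchant: Delta", "DONE: travel"]), tools))  # travel
```

In the first two functions, human-written code decides every branch; in `agentic`, the loop only executes whatever the model asks for, and the tools are capabilities it can reach for. The `max_steps` budget is one simple guard against a model that never finishes.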

And that is when it hit me. Future software won’t be a product. It will be a tool.

But what is the difference?

It’s simple: for your process, software, or product to be agentic, you must hand the steering wheel to the AI model. While this might sound dangerous, this simple change will determine the survival chances of most software startups.

Giving AI the Steering Wheel

Most “agentic” software you are being pitched falls into camp two. Its developers build constrained agents, adding AI at a couple of steps (purple arrows), and then claim the tool is agentic.

But that’s not an agent.

Most startups choose approach two because most people, themselves included, believe AI isn’t yet ready for the third approach, in which it takes the lead in the process.

Well, they are right and wrong at the same time. Approach two is still the wrong bet, and you should definitely be building software according to approach three.

At this point, you might think I’m contradicting myself, as you’ve seen me criticize the living daylights out of agents for not being robust, a crucial requirement for enterprise use cases.

And that criticism still stands: agents do have a robustness problem that AI labs tend not to show (and when they do, they do so indirectly).

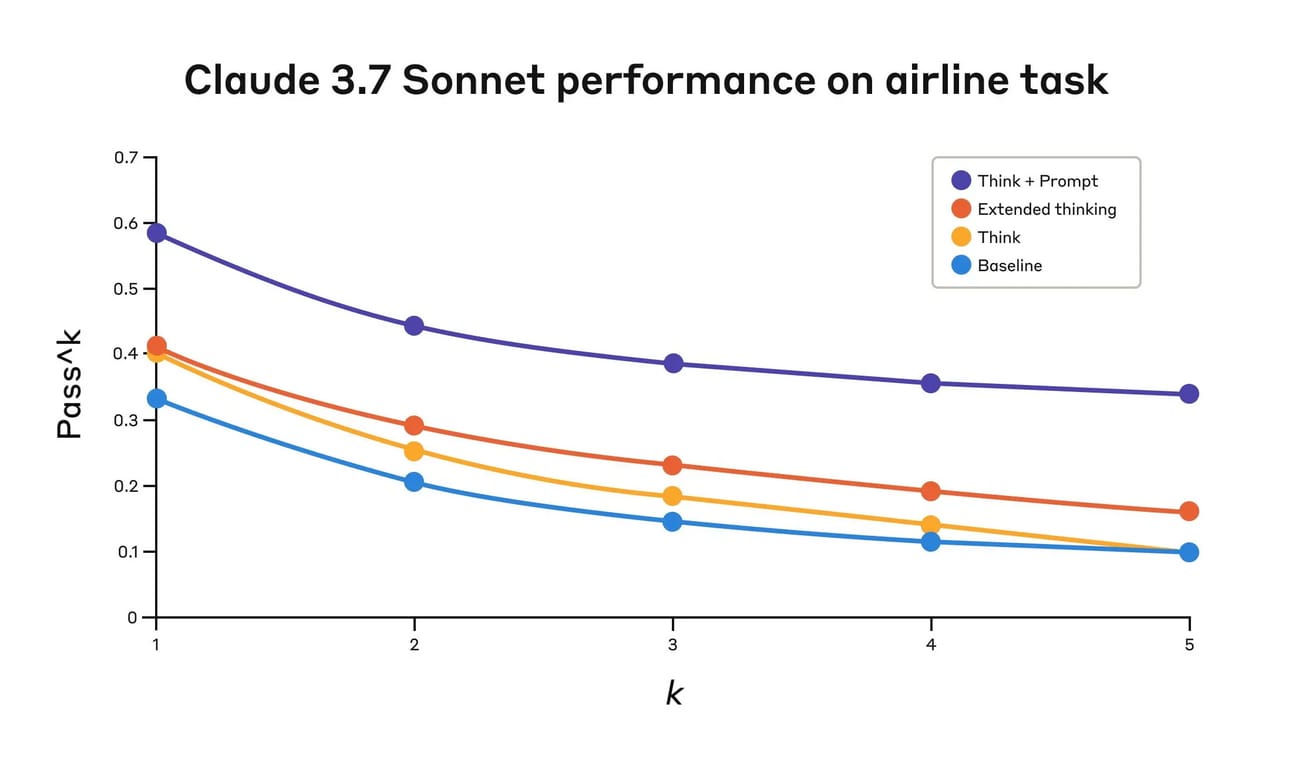

For instance, in a recent blog post, Anthropic introduces a fascinating hack to improve models’ reasoning capabilities: giving them a “thinking tool” (in reality, just a reminder to think longer about the task). While showcasing the improvement the tool provides, the post also offers proof of this robustness issue.
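Mechanically, such a tool is trivial, which is why it amounts to little more than a reminder. The sketch below assumes the general tool-definition shape of Anthropic’s Messages API (`name`, `description`, and `input_schema` as a JSON Schema); the description strings, the `handle_think` handler, and the thought log are our own hypothetical additions, not Anthropic’s published code or wording.

```python
import json

# A "think" tool in the general shape of an Anthropic Messages API tool
# definition (name, description, input_schema as JSON Schema). The description
# wording is our own, not copied from Anthropic's post.
THINK_TOOL = {
    "name": "think",
    "description": (
        "Use this tool to reason step by step before acting. It fetches no new "
        "information and changes nothing; it only records the thought."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "thought": {"type": "string", "description": "The reasoning to record."}
        },
        "required": ["thought"],
    },
}


def handle_think(tool_input: dict, log: list[str]) -> str:
    """Side-effect-free handler: append the thought to a log and acknowledge it.

    All the value comes from the model writing the thought out; the tool itself
    does no work, which is why it acts as a nudge to think longer.
    """
    log.append(tool_input["thought"])
    return "Thought recorded."


thoughts: list[str] = []
print(handle_think({"thought": "Check the refund policy before answering."}, thoughts))
print(json.dumps(THINK_TOOL["input_schema"], indent=2))
```

In a real integration, the dict would go in the request’s list of tools, and whenever the model called `think`, your code would run the handler and send its return value back as the tool result.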

The graph below shows the pass^k metric, under which the AI passes only if it gets the task right in all k independent tries. Unlike pass@k, which counts a success if the agent gets the task right at least once in k tries and thus hides the robustness issue, pass^k clearly shows whether the AI is reliable.

And, as you can see for yourself, it mostly isn’t: accuracy drops by about 20% on average when going from k = 1 to k = 5.

Source: Anthropic
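The gap between the two metrics is easy to compute. Given n independent tries of a task with c successes, the sketch below uses the standard combinatorial estimators: pass@k = 1 - C(n-c, k)/C(n, k) and pass^k = C(c, k)/C(n, k). The task results are made up for illustration, and this is not Anthropic’s evaluation code. As a rule of thumb, if tries were independent with per-try success rate p, pass^k would simply be p^k, so a model that succeeds 90% of the time would pass all five tries only about 59% of the time (0.9^5 ≈ 0.59).

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimated probability that at least one of k tries succeeds,
    given c successes observed in n >= k independent tries of one task."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


def pass_hat_k(n: int, c: int, k: int) -> float:
    """Estimated probability that all k tries succeed (the pass^k reliability view)."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    return comb(c, k) / comb(n, k)


def benchmark(results: list[tuple[int, int]], k: int) -> tuple[float, float]:
    """Average both metrics over tasks given as (n_tries, n_successes)."""
    at_k = sum(pass_at_k(n, c, k) for n, c in results) / len(results)
    hat_k = sum(pass_hat_k(n, c, k) for n, c in results) / len(results)
    return at_k, hat_k


# Hypothetical results: three tasks, five tries each, with 5, 4 and 3 successes.
results = [(5, 5), (5, 4), (5, 3)]
for k in (1, 5):
    at_k, hat_k = benchmark(results, k)
    print(f"k={k}: pass@k={at_k:.2f}  pass^k={hat_k:.2f}")
```

With these numbers, both metrics read 0.80 at k = 1, but at k = 5 pass@k climbs to 1.00 while pass^k falls to 0.33: the same runs look flawless or unreliable depending on which metric you report.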

Later, we will see why this does not invalidate today’s thesis for agentic software; it just adds an extra, easily solvable engineering step to the process.

However, even if merely imagining handing unreliable AIs the steering wheel of your critical business processes gives you vertigo, that’s precisely what you are going to end up doing.

If you want to survive, of course.

If You Can’t Beat Them, Join ’Em

A year ago, Sam Altman said that if you want your software to thrive in the AI era, the best way to tell whether you’re doing it right is to ask yourself whether better AIs make your product better… or obsolete.

And that if you were in the second camp, you were doing it wrong because, as he put it, “we are going to steamroll you.”

In a recent interview, an Anthropic researcher echoed the same thought: building good software in the AI era isn’t about your product making AI better but the other way around; better AI should make your product better and more necessary.

This is why much of the hype around application-layer startups, which have seen explosive revenue growth and great traction within weeks, is an illusion, and why most of these tools won’t make it to their second year of existence.

But how do I know if my software or the software company I own will have a business in two years? How do I know if it meets this definition of improving as AI improves?

As I now see it, what separates truly agentic software, software aligned with the future of AI, from software that pretends to surf the wave that will inevitably devour it is a simple change in mindset, one I’ve had to make myself.

Unfortunately, I realized too late that I had spent months building software destined to fail. Can you avoid my costly mistake?
