THEWHITEBOX

TLDR;

This week, we comment on OpenAI’s insane price for o1-pro, a striking difference in the size and quality of HuggingFace’s new Olympic-level coder, new models from Perplexity, and an interesting Deepmind/Disney/NVIDIA partnership.

We then move into markets to discuss SoftBank’s latest acquisition and Apple’s fragile status quo. Finally, we look at Anthropic’s new feature for Claude and creating music with LLMs using MCP.

Enjoy!

FRONTIER

$600 for OpenAI o1-Pro

OpenAI has set a new record in both price and audacity with its pricing for o1-pro API access, its best released reasoning model.

The price?

TheWhiteBox’s takeaway:

The audacity of the price is incredible.

$150 per million input tokens and $600 per million output tokens are outrageous prices. A million tokens is roughly 750k words, which sounds like a lot, but it is not for reasoning models.

These models need to speak to think, so they tend to generate an absurd amount of tokens to answer, around twenty times what non-reasoning models require, skyrocketing prices.
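To put numbers on this, here is a minimal sketch of what a single request might cost at the prices quoted above. The request sizes are hypothetical and chosen only for illustration; the twenty-fold multiplier is the rough figure from the paragraph above, not a measured value.

```python
# o1-pro API prices quoted above, in USD per million tokens.
INPUT_PRICE = 150.0
OUTPUT_PRICE = 600.0


def request_cost(input_tokens, output_tokens):
    """Cost in USD of a single API call at the prices above."""
    return (input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE) / 1_000_000


# Hypothetical request sizes, chosen only for illustration.
PROMPT_TOKENS = 1_000
PLAIN_ANSWER_TOKENS = 500
REASONING_MULTIPLIER = 20  # the "around twenty times" figure from the text

plain = request_cost(PROMPT_TOKENS, PLAIN_ANSWER_TOKENS)
reasoning = request_cost(PROMPT_TOKENS, PLAIN_ANSWER_TOKENS * REASONING_MULTIPLIER)
print(f"short answer: ${plain:.2f}, reasoning-length answer: ${reasoning:.2f}")
```

Under these assumptions, almost all of the bill comes from the output side, which is why the output price matters far more than the input price for reasoning models.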

If you’re wondering why output tokens are priced four times higher than input tokens (usually, it’s three times), this is due to the nature of both computations. Generating words runs much less efficiently on GPUs, as a greater percentage of the GPU’s energy consumption goes to moving the different model parts and the KV Cache from memory to the cores and back, instead of actually performing computations.

On the other hand, during input processing, we are building the cache by processing all words in the input at once, which is very heavy computationally speaking, ideal for GPUs (more energy consumed on compute than on memory transfer).

The ratio of computation performed to data moved to and from memory (operations per byte transferred) is called arithmetic intensity, a term AI incumbents love to use to sound smarter.
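To make the contrast between input processing and word generation concrete, here is a rough sketch that counts floating-point operations per byte moved for a single square weight matrix. The matrix size, the 2-byte values, and the simplified traffic model (weights read once, activations read and written once) are all assumptions for illustration; real kernels and real models are more complicated.

```python
def arithmetic_intensity(n_tokens, d_model, bytes_per_value=2):
    """FLOPs per byte for multiplying n_tokens activations by a d_model x d_model matrix.

    Simplified model: the weights are read from memory once, and the
    activations are read once and written once.
    """
    flops = 2 * n_tokens * d_model * d_model            # one multiply + one add per weight per token
    weight_bytes = d_model * d_model * bytes_per_value  # same cost no matter how many tokens
    activation_bytes = 2 * n_tokens * d_model * bytes_per_value
    return flops / (weight_bytes + activation_bytes)


# Hypothetical sizes: a 4096-wide layer, 1 token (generation) vs 1024 tokens (input processing).
decode = arithmetic_intensity(n_tokens=1, d_model=4096)
prefill = arithmetic_intensity(n_tokens=1024, d_model=4096)
print(f"generation: {decode:.2f} FLOPs/byte, input processing: {prefill:.0f} FLOPs/byte")
```

In this toy model, generating one token at a time does about one operation per byte fetched, while processing a long input reuses each fetched weight across many tokens, so the same memory traffic buys hundreds of times more computation.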

These companies make money with compute, not data transfers, so word generation has to be priced higher to make money.

BUT NOT THAT HIGH.

While nobody doubts that the o1-Pro model is the best around, it is heavily rumored to be a best-of-N-chains model. It generates several plausible chains of thought (multi-step problem-solving) to answer your request and chooses the best one, a technique known as majority sampling or ‘self-consistency sampling.’ If that is true, this price, exactly 1000x that of GPT-4o mini, is audacious.
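For readers curious about what self-consistency sampling looks like in practice, here is a minimal sketch of the voting step. It assumes the chains of thought have already been sampled and that each one ends in a short final answer; the sample answers are made up, and nothing here reflects how o1-pro is actually implemented, which has not been disclosed.

```python
from collections import Counter


def majority_vote(final_answers):
    """Return the most frequent final answer among N sampled chains of thought.

    Ties are resolved in favor of the answer that appeared first.
    """
    if not final_answers:
        raise ValueError("need at least one sampled answer")
    return Counter(final_answers).most_common(1)[0][0]


# Hypothetical final answers extracted from five sampled chains.
sampled = ["42", "41", "42", "42", "17"]
print(majority_vote(sampled))
```

The catch is cost: N chains means roughly N times the output tokens for a single user-facing answer.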

For the non-technical reading this, if your CIO/CTO suggests using this model for this price, fire them. And if you really find the use case, just pay the $200 subscription.

OPEN-SOURCE

A Small Olympian-Level Coder

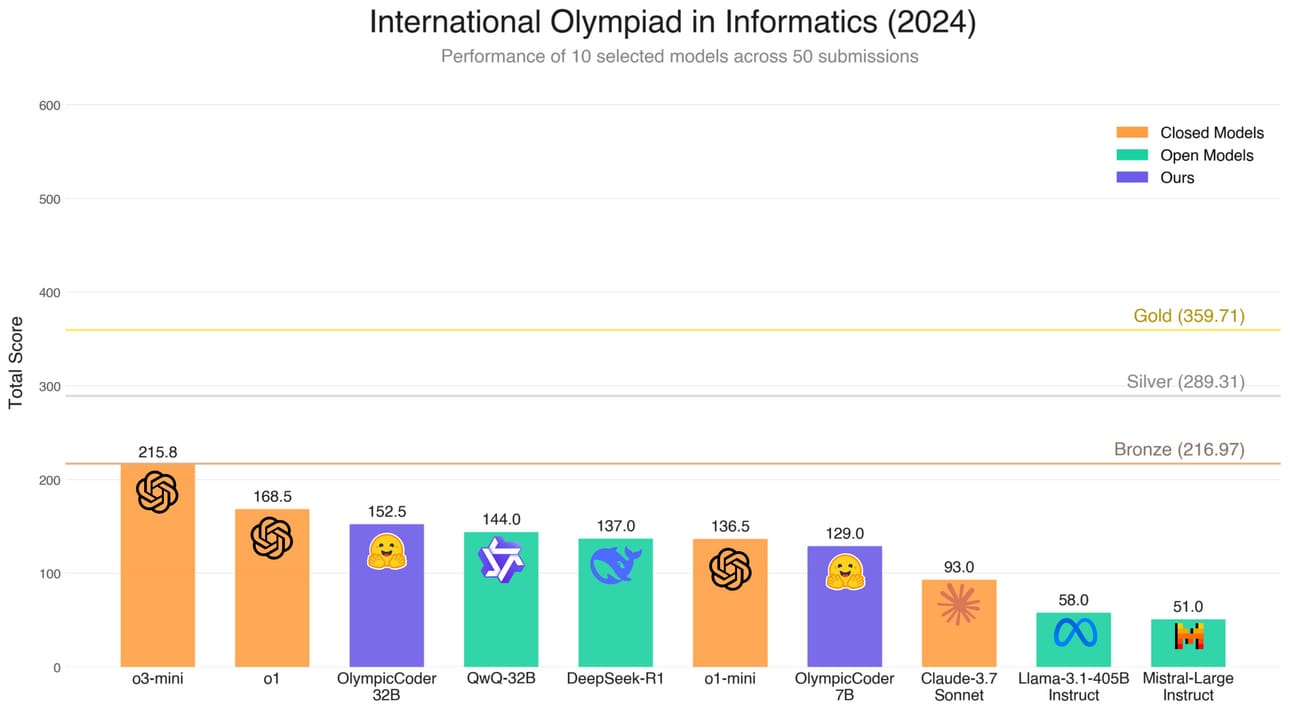

A HuggingFace team has released OlympicCoder 7B and 32B, which showcase borderline state-of-the-art (only behind ChatGPT) results when tested on last year’s International Olympiad in Informatics exam.

To achieve this, the team built a custom dataset by distilling reasoning traces from DeepSeek R1 (sampling R1 to solve complex problems and then using its responses to fine-tune a base model). Interestingly, despite being trained on R1, the 32B model outcompetes it in the task.

TheWhiteBox’s takeaway:

This research proves three things:

Open-source is becoming as good as proprietary models.

Distillation works wonderfully and, when combined with strong base models, can even surpass the performance of the models from which it was derived.

Running powerful models at home is becoming viable.

Anyone with a computer with a GPU and 16GB of RAM or more can run the 7B model (the speed at which the model generates words is another story, depending mainly on your memory bandwidth and your GPU’s processing power).

And with growing options for top consumer-end hardware, like Apple M4 laptops, the M3 Ultra and M4 Max Mac Studio, and NVIDIA’s DGX Spark and Station, you could eventually run dozens of agents working for you under the hood while you work.
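As a back-of-the-envelope check on the 16GB figure above, this sketch estimates how much memory the model weights alone occupy at different precisions. It deliberately ignores the KV Cache, activations, and runtime overhead, so treat the results as lower bounds rather than real requirements.

```python
def weights_gb(n_params, bytes_per_param):
    """Memory taken by the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


SEVEN_B = 7_000_000_000
THIRTY_TWO_B = 32_000_000_000

print(weights_gb(SEVEN_B, 2))         # 7B model at 16-bit precision
print(weights_gb(SEVEN_B, 0.5))       # 7B model quantized to 4 bits per weight
print(weights_gb(THIRTY_TWO_B, 0.5))  # 32B model quantized to 4 bits per weight
```

At 16-bit precision the 7B model's weights take about 14 GB, which is why 16GB is roughly the floor for running it unquantized.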

SEARCH

Sonar Models, the Cheapest?

From expensive prices to cheap ones. According to Aravind, Perplexity's CEO, Perplexity’s Sonar models offer the best performance/cost ratio for search use cases, beating frontier models used for search fair and square.

TheWhiteBox’s takeaway:

Aravind’s comment is interesting because he claims the base Sonar model is cheaper than GPT-4o-mini, even though the latter has lower prices per million tokens.

I assume that what he’s saying between the lines is that Sonar is cheaper because it generates fewer output tokens, so the cost is smaller for the total budget.

Otherwise, he’s simply lying.

ROBOTICS

Blue, The Collab between Disney, NVIDIA, & Deepmind.

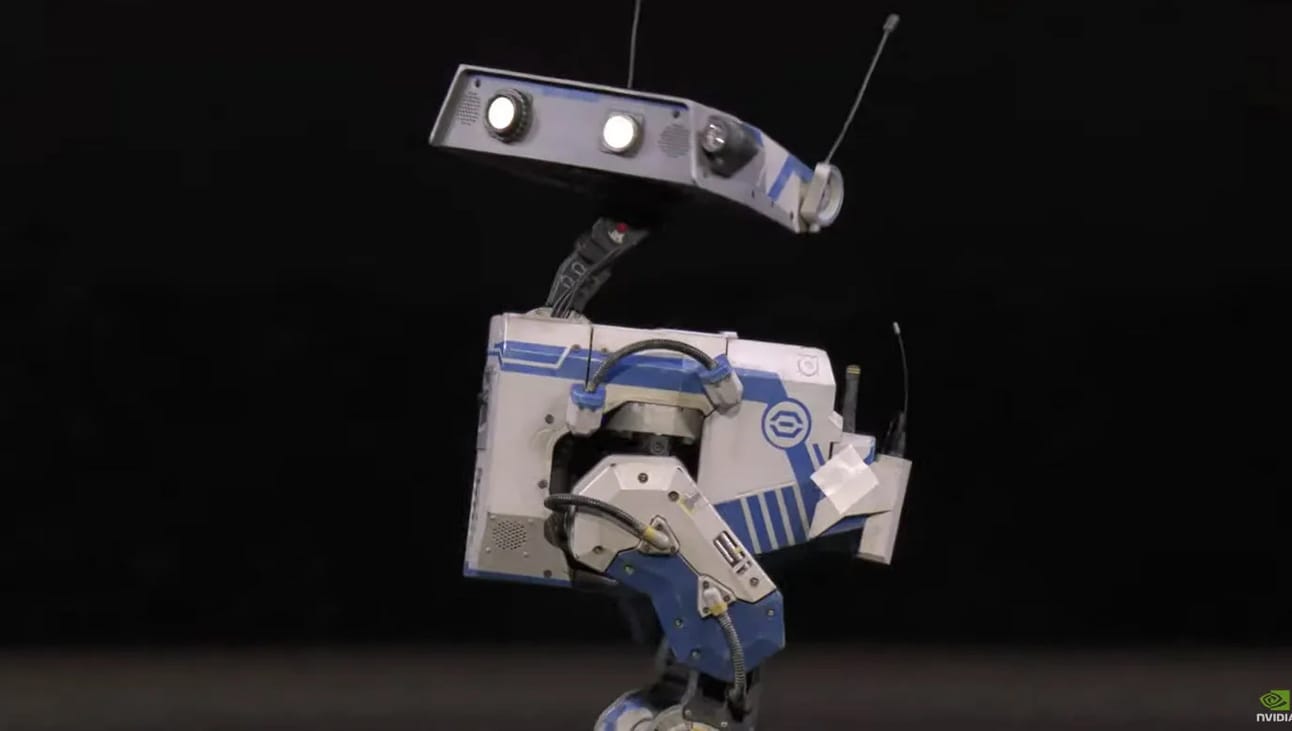

One of the most visual parts of Jensen’s GTC keynote was the presentation of Blue, a physical robot created through a collaboration between NVIDIA, Google Deepmind, and Disney. Soon, visitors at Disneyland parks worldwide will be able to see and interact with these robots.

The most remarkable thing was how the robot responded to Jensen’s commands in real-time, which is pretty powerful to demonstrate in front of millions of people. In parallel, Google Deepmind presented its Gemini Robotics advancements, which most likely fuel Blue’s intelligence.

In the videos Google showcased, we can see how robots are becoming much smarter from a spatiotemporal perspective. They react in real-time to environmental changes (just like when Jensen asked Blue to shut up or move to the side). This is goal dynamism: switching the robot’s task on the fly. It is surprisingly hard but extremely necessary for robots to inhabit an ever-changing world.

Coupled with what appears to be modest generalization (the robots can somewhat execute tasks they had never seen before), it brings the future of robots one step closer.

TheWhiteBox’s takeaway:

There are several things to point out here:

First, vision-language-action models continue to be the way to go, meaning that, at the robot's core, we have an AI trained mainly through text. We still haven't seen video-based models take over robotics despite, in theory, being much better suited for the task (as they’ve learned about the world by watching video, not reading text). Language models help us communicate with robots, making them much more appealing to serve as backbones, at least for now.

On-premise GPUs are becoming the norm, meaning that the robot’s brain is not somewhere in the cloud but in the robot itself. This is key to avoid latency issues and, more importantly, cybersecurity concerns (hacks).

The default architecture comprises two models (just like in FigureAI’s robots and also for Gr00t N1). However, in Gemini Robotics’ case, the backbone model is in the cloud, which is not ideal (as Jensen stated, this is not the case for Blue). Having robots continually connected to the Internet makes no sense in the long run.

HARDWARE

SoftBank Acquires Ampere for $6.5 Billion

SoftBank, the largest technology investment company on the planet and a crucial partner to OpenAI in the Stargate Project, has agreed to pay $6.5 billion to purchase Ampere, a US chip design company.

Ampere designs CPU chips based on the ARM architecture (SoftBank also owns ARM).

TheWhiteBox’s takeaway:

Although GPUs steal the spotlight regarding AI hardware, CPUs are an essential piece of the puzzle. There’s no GPU without a CPU, as GPUs follow CPU instructions (the CPU offloads parallelizable work to the GPU to accelerate the computation, hence why GPUs are often referred to as accelerated computing).

Furthermore, in the context of AI workloads, CPUs play an increasingly relevant role, as AI workloads don’t only include the AI model, which resides in the GPU, but also code sandboxes, physics engines, and other components that are executed at the CPU level. Even NVIDIA is getting into the CPU game for their next GPU platform, Vera Rubin.

MARKETS

Apple’s Fragile AI Position

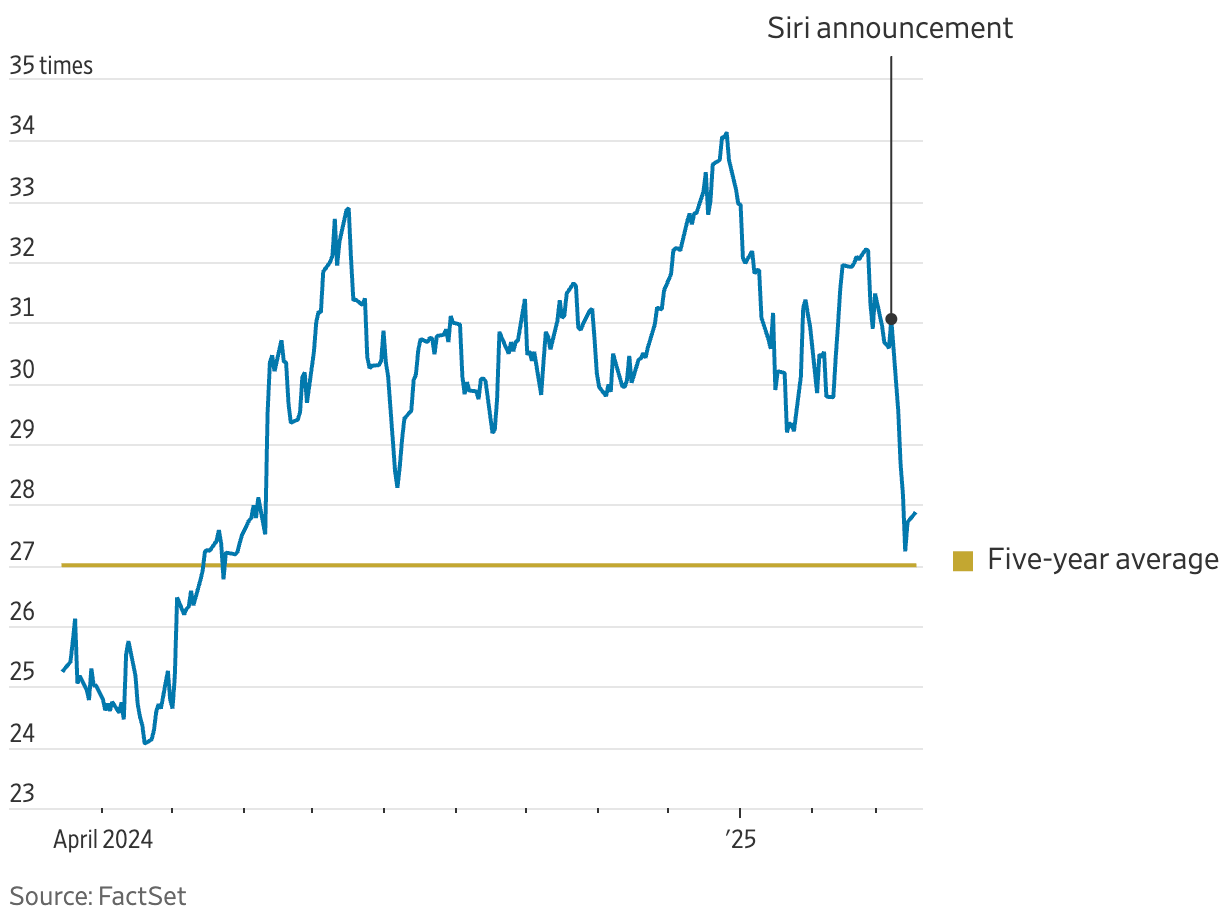

The news that Apple is delaying its Siri revamp to at least 2026 has hit very hard. Apple has been a rockstar stock for the past year, mainly surfing the AI wave and benefitting tremendously from the announcement of Apple Intelligence ahead of the iPhone 16 line-up release. Investors are now as surprised as they are concerned about Apple’s inability to deliver well-rounded AI products despite being a tech company, and the most valuable one in the entire world.

Investors saw Apple Intelligence as the definitive AI product not because of its capabilities, which were well below the state-of-the-art, but because of Apple’s insane distribution, which put these AI features in the hands of billions.

With that dream parked until further notice, the 1.6% sales growth from the iPhone 16 line-up hits harder than ever, to the point that Apple is trading almost at its five-year average in terms of forward PE (graph).

TheWhiteBox’s takeaway:

The first thing I noticed is that we are likely seeing the last stages of Apple's growth premium valuation. Being treated as a growth stock when it is clearly a value stock at this point is not an enduring reality.

As for the AI side, well, even Apple leaders consider this a total embarrassment. And it is. I believe the most significant problem for Apple Intelligence is the extremely low RAM size on iPhones (just 8 GB).

The memory bandwidth isn’t incredible either (7,500 MegaTransfer/second, or 60GB/s at an 8-byte bus width). This is crucial for AI workloads, as data is moved in and out of memory at breakneck speeds. However, this isn’t an issue in smartphone use cases because the context, and thereby the cache, isn’t that large.

In such cases, they should opt for a 1.5B model at most, which sounds small. Still, we have already seen great models of that size, and you can fine-tune for every use case with LoRA adapters (I covered Apple Intelligence’s architecture here in the past).
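The bandwidth figure above is simple arithmetic, sketched below together with a very rough ceiling on generation speed for a 1.5B model. The ceiling assumes 16-bit weights and that every weight is read from memory once per generated token, ignoring the cache and compute time; it is an illustrative upper bound under those assumptions, not a benchmark.

```python
def bandwidth_gb_s(mega_transfers_per_s, bus_bytes):
    """Peak memory bandwidth in GB/s from transfer rate (MT/s) and bus width (bytes)."""
    return mega_transfers_per_s * bus_bytes / 1000


def max_tokens_per_s(bandwidth, model_gb):
    """Rough ceiling on tokens per second if all weights are read once per token."""
    return bandwidth / model_gb


bw = bandwidth_gb_s(7500, 8)   # the figures quoted above
model_gb = 1.5 * 2             # assumed: 1.5B parameters at 2 bytes each
print(bw, max_tokens_per_s(bw, model_gb))
```

Halving the bytes per weight through quantization would roughly double that ceiling, which is one reason small, quantized models are attractive on phones.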

Long story short, they clearly don’t have their AI act together, so it’s surprising to see no management heads rolling… for now.

CHAT PRODUCTS

Anthropic Launches Web Search

Finally, Anthropic has released its web search capabilities. It includes inline citations, similar to ChatGPT or Grok. Only for paid plans for now; they will roll it out for free users and other countries, too.

TheWhiteBox’s takeaway:

I’m baffled that it took them one year to add web search, but it’s nice that they finally have it. However, web search capabilities are not available to API models, a feature that OpenAI just released last week.

This is not extraordinary; it’s table stakes, but I’m happy to see anything that increases competition between top AI labs.

MODEL CONTEXT PROTOCOL

Creating Music with LLMs

People are building incredible things with MCP, the Model Context Protocol. In this link, a user connects Claude to Ableton, a music creation software, to create music without human interaction.

Claude does this leveraging Ableton’s MCP Server, which allows Claude to use the software via natural language. Instead of complex, software-specific code, MCP allows software to ‘expose’ their context and tools to LLMs via a natural language interface so that Claude can:

Understand what the software is about

Make precise requests of the context or tools the software offers

This allows LLMs to use a rapidly growing number of software products as accessible tools without LLM training or coding effort.
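Under the hood, MCP messages are JSON-RPC 2.0 requests, with `tools/list` used to discover what a server offers and `tools/call` used to invoke a tool. The sketch below only builds those two messages as plain dictionaries; the tool name `create_midi_track` and its arguments are hypothetical and do not come from any real Ableton server.

```python
import json


def list_tools_request(request_id):
    """JSON-RPC request asking an MCP server which tools it exposes."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}


def call_tool_request(request_id, tool_name, arguments):
    """JSON-RPC request asking an MCP server to run one of its tools."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = call_tool_request(2, "create_midi_track", {"name": "Bassline", "tempo": 120})
print(json.dumps(list_tools_request(1)))
print(json.dumps(request, indent=2))
```

The model never sees this plumbing directly. It reads the natural-language tool descriptions returned by the server and decides which tool to call and with which arguments.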

TheWhiteBox’s takeaway:

MCP holds many promises for AI developers. If a piece of software has an MCP Server (well-coded, of course), you don’t need to worry about the complexities of the integration; you just need to connect to the server, and that’s it.

To me, MCP seems to solve the LLM-to-software integration problem. Therefore, the only thing preventing an agent explosion is, well, the AI model, as AI models still have an obvious robustness problem.

One of the first MCP Servers I’m looking forward to trying is the Outlook Calendar. I'd like to ask Claude about the state of my calendar, set schedules, find free time, and so on.

TREND OF THE WEEK

60,500 Times Smaller… but Better

A few weeks ago, we saw how Anthropic tried to train Claude to beat Pokemon. The model is making progress but is still far from beating the game.

Now, an independent team has finished Pokemon with a model with just 10 million parameters, tens of thousands of times smaller than frontier AI models (60,500 times smaller than DeepSeek V3, for instance).

But how is this even possible?

The answer is the unsolved breadth vs. depth problem. This is an interesting piece for anyone who wants to understand one of the most counterintuitive issues in AI and the industry’s best bet at creating AGI.

Training AI for Long Horizons

This small model was trained using a Reinforcement Learning algorithm, or RL. As I’ve explained many times, this is a technique where an AI is given a goal and a set of constraints and must learn an action policy that allows it to achieve the goal.

I won’t go into the basics of RL because I’ve done it countless times, but I highly recommend you click the link above whenever you have the time to read the blog; it explains how they trained this model in a highly intuitive, simple-to-follow manner.

Instead, I want to focus on three things:

Why RL is different from what we have been doing the last two years with Large Language Models,

why it is necessary to take AIs to new heights,

and how it is possible that such a small model beat the large guys.

From Imitation to Exploration

If we look at AI’s frontier, we find two great training paradigms: imitation learning and learning via exploration.

Imitation learning forces the model to imitate its training data. By performing this imitation, the model identifies the underlying patterns in the data and learns to reproduce them.

In the case of LLMs, this training is called pre-training, and the model is exposed to Internet-scale datasets, which it has to learn to imitate (we then add tricks so that the model, during inference, generates similar sequences, not ones purely identical to the training set; otherwise, that would mean it’s nothing more than a dataset).
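To give a feel for what learning by imitation means, here is a toy sketch: a bigram model that counts which word follows which in a tiny corpus and then predicts the most common continuation. Real LLMs are neural networks trained with gradient descent on vastly more data, so this is only an analogy; the corpus is made up.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Most frequent continuation seen in training, or None for unseen words."""
    if token not in counts:
        return None
    return counts[token].most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat ran"
model = train_bigram(corpus)
print(predict_next(model, "the"))  # the continuation seen most often after "the"
print(predict_next(model, "dog"))  # never seen in training, so nothing to imitate
```

The second call shows the limit of pure imitation: the model has nothing to say about anything it has not already seen.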

Imitation learning is great for training an AI to behave like us and is the best option when we have data the model can imitate.

However, it also incentivizes memorization (the model is lured to imitate data), which explains why LLMs' performance is largely due to their memorization capabilities rather than real intelligence.

Moreover, many use cases in which we would want to use AI combine two issues:

We don’t have enough data for the AI to imitate

We actually don’t want imitation but actual thinking

One great example is reasoning tasks.

For starters, reasoning data (data where humans describe their exact reasoning process) is very scarce. Additionally, we don’t want AIs to learn by imitation; we want them to be capable of thinking outside the box a bit or, to be more specific, to be capable of exploring different ways to solve a problem.

This is why non-reasoning LLMs are bad for reasoning tasks; they were not trained to reason, but to parrot, and thus can only “reason” through tasks they have memorized.

Put another way, some tasks require exploration, just like you, as a human, don’t know by heart how to solve every maths problem. However, you have the necessary intuition—the math priors—to face the problem and explore until you find the solution.

This learning is guided by rewards, which tell the model whether the actions it chooses are good.

Think of this training like playing the game “hot or cold”; by telling the model ‘hot’ or ‘cold,’ we guide the model toward the goal (of course, it’s much more complex than this, but this is what we do in first principles).
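The "hot or cold" idea can be sketched with tabular Q-learning, one of the simplest RL algorithms, on a toy corridor where the agent must learn to walk right to reach a goal. Everything here (the corridor, the reward values, the hyperparameters) is an assumption made for illustration and has nothing to do with how the Pokemon model or any LLM was actually trained.

```python
import random

N_STATES = 6          # positions 0..5 on a line
GOAL = N_STATES - 1   # reaching position 5 ends the episode
ACTIONS = (-1, +1)    # step left or step right


def step(state, action):
    """Move along the line; 'hot' (+1) at the goal, slightly 'cold' everywhere else."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else -0.01
    return next_state, reward, next_state == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # cap on episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best known action for every non-goal position."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]


print(greedy_policy(train()))
```

Nobody tells the agent that moving right is correct. It only receives rewards, and the preference for moving right emerges from trial and error.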

But how can a model trained for imitation explore? Well, it can’t.

For that reason, for quite some time, we have been adding an exploration training phase on top of LLMs so that they can explore. This training phase is the RL phase.

The first real LLM explorer was DeepSeek R1 (and probably o3 before, but they didn’t acknowledge it until later).

To train DeepSeek R1, DeepSeek gave DeepSeek v3, their non-reasoning LLM, a small cold start training (they drafted a series of chains of thought where the thought process is actually laid out). Then, they gave it an exploration-based training set with over 800k verifiable examples (examples where the final result is automatically verifiable, like 2×5 = 10).

Next, they simply tasked the model with figuring out how to arrive at the correct solution. And when they did this, magic happened.

As the model was allowed to explore how to solve problems, it autonomously learned to reflect on its own “thoughts,” backtrack, search for alternatives, and so on. In layman’s terms, by “brute” trial and error, the model learned the best reasoning strategies to solve the problem.

It is this discovery, and not the infamous $6 million training budget that markets wholly misunderstood, that made DeepSeek's results a true breakthrough.
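For intuition on what "automatically verifiable" means in the recipe above, here is a minimal sketch of a reward function that checks only the final number in a model's output. This is an illustrative assumption, not DeepSeek's actual reward code, and the sample outputs are made up.

```python
import re


def extract_final_number(text):
    """Return the last number appearing in the text, or None if there is none."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", text)
    return float(numbers[-1]) if numbers else None


def verifiable_reward(model_output, expected):
    """1.0 if the final number matches the known answer, 0.0 otherwise."""
    answer = extract_final_number(model_output)
    if answer is None:
        return 0.0
    return 1.0 if abs(answer - expected) < 1e-9 else 0.0


print(verifiable_reward("2 x 5 means two fives, so the answer is 10", 10))
print(verifiable_reward("2 x 5 = 12", 10))
```

The reward says nothing about how the model got there. Only the outcome is checked, which leaves the model free to discover its own reasoning strategies.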

In summary, the training of our frontier models follows two steps:

We build knowledge into the model via imitation learning, giving us a non-reasoning model (or the traditional base LLM).

Based on that epistemic foundation (or engine), we run an exploration training phase in which the model uses its learned intuitions to explore and learn to reason, giving us a reasoning model.

For instance, GPT-4o is o3’s non-reasoning model (or o3 is GPT-4o trained for reasoning using RL).

If it helps, you can think of reasoning as intuition (built-in knowledge and experience) plus search. In other words, reasoning = intuition-guided exploration.

Having understood the importance of RL in today’s AI world, we have yet to answer the question: How does a minute, pure-RL, no-LLM “backyard” model outperform an RL-trained reasoning LLM like Claude 3.7 Sonnet?

Breadth vs Depth

For decades, AI has fought an internal battle between going broad and going deep.

LLMs represent going broad. They are larger-than-life models that are exposed to every data point we can find to achieve generalization, the coveted capability where AIs can perform well on tasks they haven’t been trained for.

On the other side, we have examples like AlphaGo/AlphaZero or this Pokemon model, which are trained exclusively with RL and on one single task.

Until the arrival of foundation models (they are called that way for this precise reason), AI was mostly a deep game; all models were trained on a single task.

Nowadays, most investments are based on broad models. But why is this, and what is the downside?

As you may guess, the answer is the pursuit of AGI. The predominant vision is that superintelligent AIs should be general. They do not need to be trained for depth in every single task, which is unrealistic, but they should have a good enough set of priors to ‘generalize’ into new tasks without further training.

But the funny thing is that, while this is true (there’s proof that general models even outcompete single-task models in their task in some cases, as they have learned new capabilities in other domains that transfer to that one, like AlphaZero, which is the best AI in several board games and superior to chess-only AIs), the opposite happens with superhuman AIs.

All superhuman AIs humanity has built (AI that far exceeds human prowess) have been single-task, such as the aforementioned AlphaGo (playing a board game).

This also explains why the Pokemon model we are discussing today, four orders of magnitude smaller than general state-of-the-art models, easily beats them.

This model sacrifices breadth to achieve outsized returns on a single task.

This happens because, while we are just learning to train large models in pure RL pipelines, we have yet to achieve the results we obtain in single-task training pipelines. In layman’s terms, we know RL is the real deal, but we still have to learn how to train foundation models on RL pipelines properly.

Is RL The Answer?

This might seem like a downer, but to me, it’s actually the opposite; it’s proof that RL works.

If we manage to build pure RL on top of an LLM, we might have found the path to a new age of AI models that do not simply imitate intelligence like standard LLMs do, but actually achieve a greater degree of intelligence.

But will this advance AI to real intelligence? We hope so, although we can’t say for sure. However, it’s our only well-known bet, so we had better hope we are correct.

But do we have everything figured out to make this transition happen? No.

We have it figured out for verifiable domains, where we can always tell whether the model is progressing. The real challenge will come in areas where the reward—saying ‘hot’ or ‘cold’ to the human playing the “Hot & Cold” game—is subjective, like in creative writing.

Worse, in many cases, especially when solving very long-horizon tasks, the rewards are sparse (the model has to take several actions before arriving at a verifiable reward event, making it really hard to measure how good the intermediate actions were).
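A small sketch can show why sparse rewards make credit assignment hard. It computes the discounted return for each step of an episode in which only the final action earns a reward; the episode and the discount factor are hypothetical.

```python
def discounted_returns(rewards, gamma):
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = []
    running = 0.0
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


# Hypothetical episode: nothing verifiable happens until the very last step.
sparse_episode = [0.0, 0.0, 0.0, 0.0, 1.0]
print(discounted_returns(sparse_episode, gamma=0.5))
```

Every intermediate action receives a share of the one final reward based purely on how far it was from the end, not on whether it actually helped, which is exactly the measurement problem described above.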

Overall, the important thing to take away is that, after reading this story, you now understand what the people behind the brands OpenAI, Deepmind, or Anthropic are doing behind the curtain of hype their VCs paint for us every day.

Although the methods and techniques may vary, they all unequivocally pursue intelligence as intuition-guided search, built through imitation training followed by exploration training. We have explained this today, and quite frankly, it is the only thing you truly need to understand in AI.

The rest is just engineering and capital allocation.

Until next time!