THEWHITEBOX

TLDR;

Welcome back! This week, we have news on curing muteness with AI, a 35-year-old tradition broken, Trump’s new AI super team, markets, and several new products, including a pretty incredible image-generation platform generating images in less than a second.

Enjoy!

BCIs

Using AI to Cure Muteness in ALS Patients

Through a new clinical trial, Neuralink has presented VOICE, a brain chip powered by AI that allows the recipient to “speak with their mind.”

The video shows the user “speaking” in his original voice (recovered from previous recordings, again using AI) without moving his lips or mouth at all, just by thinking what he wants to say. Pretty incredible.

TheWhiteBox’s takeaway:

Truly outstanding technology. Brain-computer interfaces, or BCIs, are one of the most exciting technologies in the AI spectrum. Interestingly, the idea is, at heart, very similar to other AIs like ChatGPT: mapping an input to an output.

However, this time, it’s not mapping a set of words to predict the next one, but processing brain signals (Neuralink uses invasive technology, meaning the chip is implanted in the brain), and learning which brain signals map to which actions.

Previously, Neuralink has made great progress in moving computer cursors with the mind, learning patterns like “whenever the brain activates in this way, the user is thinking about moving the cursor to the left,” and now it’s applying these same principles to speech.

But does this mean muteness is cured? Hardly, I’m afraid.

One issue with these “brain maps” is that each brain is unique. Unlike language, which is universal, the way your brain and mine activate for each thought is different. This means the AIs have to be “tuned” to the user in particular.

This also raises the question of whether there’s a foundational model of brain activations (a backbone you can fine-tune for each patient, like ChatGPT for each task) or whether the AIs are trained from scratch. This is an important question because it would give insight into how scalable this truly is.

Based on public data, we can be hopeful because it seems to be the former: they describe the process as “decoder calibration,” which suggests common patterns across brains and that the “only” thing to do is fine-tune (i.e., calibrate) to the patient.
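To make the “tuned to the user” idea concrete, here is a toy sketch of per-user calibration. It is not Neuralink's method: the two-channel signals, the `calibrate` and `decode` helpers, and the nearest-centroid approach are all assumptions chosen for illustration. It only shows why the same signal can mean different things for different users.

```python
from math import dist

def calibrate(samples):
    """Average one user's labeled recordings into one centroid per intent."""
    centroids = {}
    for intent, vectors in samples.items():
        n = len(vectors)
        centroids[intent] = [sum(column) / n for column in zip(*vectors)]
    return centroids

def decode(centroids, signal):
    """Return the intent whose centroid is closest to the new signal."""
    return min(centroids, key=lambda intent: dist(centroids[intent], signal))

# Hypothetical 2-channel recordings: the same intent looks different per user.
user_a = {"left": [[0.9, 0.1], [1.1, 0.0]], "right": [[0.1, 1.0], [0.0, 0.8]]}
user_b = {"left": [[0.0, 0.9], [0.2, 1.1]], "right": [[1.0, 0.1], [0.8, 0.0]]}

decoder_a = calibrate(user_a)
decoder_b = calibrate(user_b)

signal = [1.0, 0.05]
print(decode(decoder_a, signal))  # left
print(decode(decoder_b, signal))  # right
```

The identical signal decodes to opposite intents depending on whose calibration is used, which is why a decoder fitted to one patient cannot simply be reused for another.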

Either way, I’m pretty sure that if AI news were more about this, rather than about superterminatorGPTClaude3000 about to obsolete millions of workers, the perception of the technology in our society would be much better.

CPUs

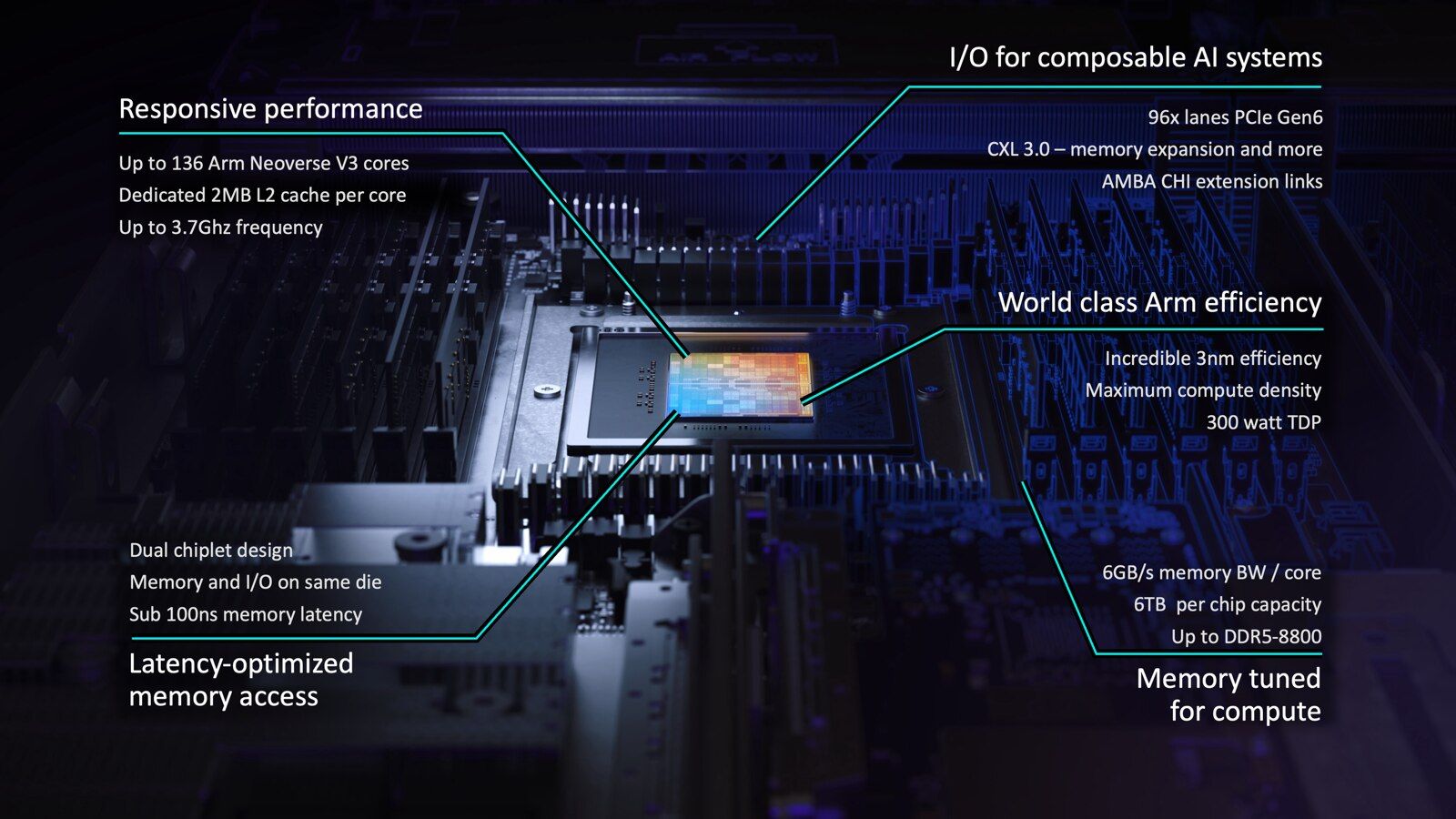

A First in 35 Years: ARM Announces First-Ever CPU

For the first time in its 35-year run, ARM has decided to ship its own chip, ARM AGI. Until now, ARM was an IP company; it licensed CPU designs (or, rather, Instruction Set Architectures), blueprints that others use to design their own CPUs.

But now, it’s a chip company too. Why this sudden turn into a lower-margin business?

TheWhiteBox’s takeaway:

Do you need bigger proof that the CPU market is about to see an explosion in demand? Even ARM wants a piece of it, despite becoming a direct competitor to its own customers in a business with considerably lower margins than its royalty-based business.

The reason is the expansion of the CPU market, driven by agents.

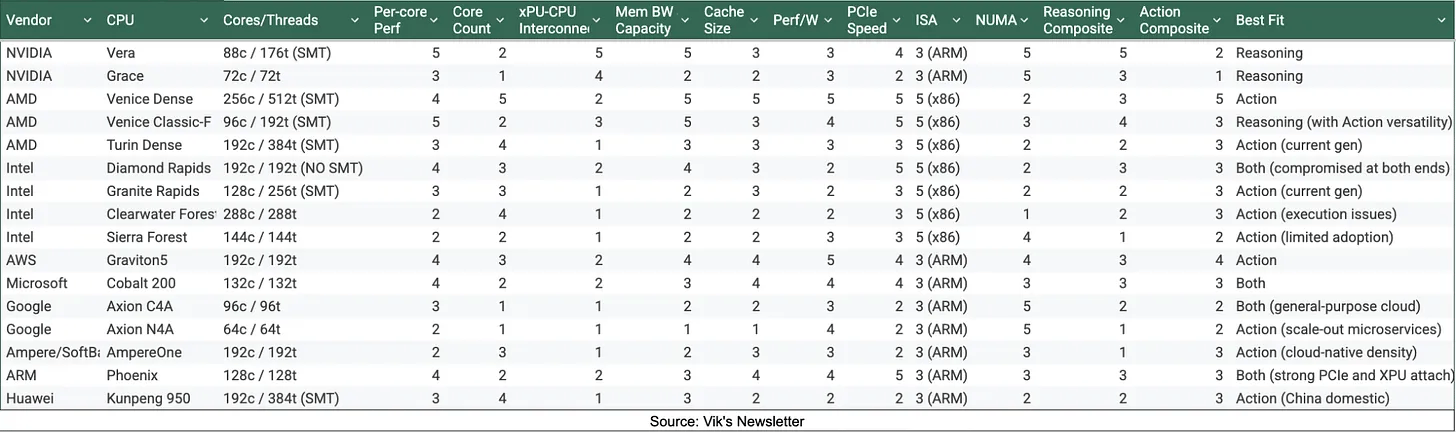

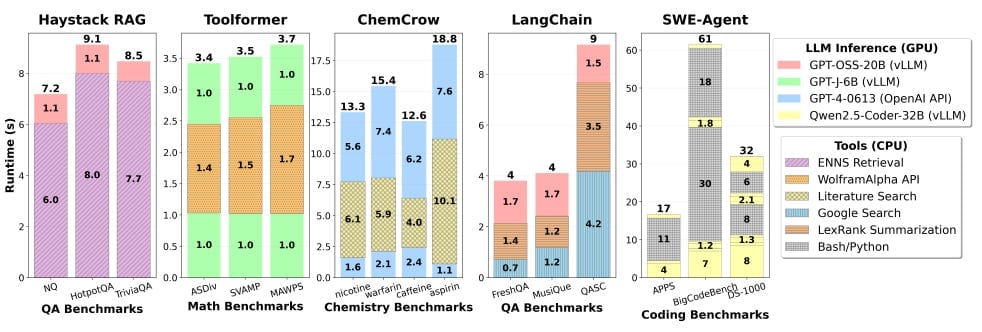

It’s important to clarify what we mean by pure agentic workloads: workflows where the AIs are mostly calling tools instead of generating reasoning traces. These are CPU-heavy workloads where core count matters most, because agent tools, for the most part, run on CPUs, not GPUs.

In fact, if you analyze an agentic trace (an interaction between an agent and a user), most of the latency is attributable to the CPU, not the GPU. In layman’s terms, CPUs are the bottleneck.

Across all tests, CPU-based workloads account for the majority of the runtime. Source
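As a rough illustration of how such a latency breakdown is computed, the sketch below sums the time spent per device in a made-up agentic trace. The trace entries, step names, and durations are hypothetical; they are not taken from the source's measurements.

```python
from collections import defaultdict

# Hypothetical agentic trace: (step, device, seconds).
trace = [
    ("llm_generate", "gpu", 1.0),
    ("tool_call:web_search", "cpu", 3.5),
    ("llm_generate", "gpu", 1.0),
    ("tool_call:run_python", "cpu", 2.5),
]

def latency_share(trace):
    """Return the fraction of total runtime attributable to each device."""
    totals = defaultdict(float)
    for _step, device, seconds in trace:
        totals[device] += seconds
    total = sum(totals.values())
    return {device: spent / total for device, spent in totals.items()}

shares = latency_share(trace)
print(shares)  # {'gpu': 0.25, 'cpu': 0.75}
```

In this invented trace the tool calls take three quarters of the wall-clock time, which is the pattern the paragraph above describes.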

And what makes a CPU good for agent workloads? The short answer is core count.

Think of CPUs and GPUs as hardware with a defined set of workers, called cores. Each core can do work independently. GPUs have many simple cores, whereas CPUs have fewer cores, each much more powerful than the average GPU core.

For this reason, CPUs can execute a much wider range of tasks than GPUs, which are usually focused on simple yet highly parallelizable workloads like matrix multiplications, which are the main computing operation you see inside AI models.

However, during a pure agentic workload, the agent is not talking that much and is instead making ‘tool calls’ (declaring that it wants to use tool x or y). Those tools are then executed by the CPU, and the cycle repeats.

Thus, if we are looking at AI workloads where CPUs drive most of the work, the more cores a CPU has, the better.
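A small simulation shows why core count matters when many tool calls are waiting. This is a simplified sketch, assuming each tool call is independent, occupies exactly one core, and takes a fixed time; `makespan` and the 64 one-second calls are illustrative choices, not measurements.

```python
import heapq

def makespan(task_seconds, cores):
    """Greedy schedule: each task goes to whichever core frees up first."""
    free_at = [0.0] * cores  # time at which each core becomes idle
    heapq.heapify(free_at)
    for seconds in task_seconds:
        start = heapq.heappop(free_at)  # earliest available core
        heapq.heappush(free_at, start + seconds)
    return max(free_at)  # time at which the last core finishes

tool_calls = [1.0] * 64  # hypothetical: 64 independent tool calls, 1 s each

for cores in (8, 32, 64):
    print(cores, makespan(tool_calls, cores))
# 8 8.0
# 32 2.0
# 64 1.0
```

Under these assumptions the total time falls in proportion to the number of cores until every call has a core of its own. Real tools share memory, disk, and network, so actual scaling would be less clean.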

Consequently, core count is one of the best ways to classify CPUs as they move toward dominance in this market opportunity that agents have opened, as shown below:

So, where does ARM’s new AGI CPU fall?

ARM is positioning itself as one of the suppliers with the largest core counts, up to 272 cores. That is above NVIDIA’s CPUs (which are more focused on reasoning-heavy agents) but far from AMD’s offering, which can reach 512 logical cores.

If you wish to better understand the CPU market in 2026, I recommend you read this past newsletter.

REGULATION

Trump’s Super AI Advisor Team

This Wall Street Journal report says Donald Trump is creating a new White House technology advisory group, the President’s Council of Advisors on Science and Technology, and plans to include several prominent tech leaders, including Mark Zuckerberg, Larry Ellison, and Jensen Huang.

It frames the move as part of a broader push to shape US policy on AI and other emerging technologies.

The panel is expected to start with 13 members and could later expand to 24. It will be co-chaired by David Sacks, Trump’s AI and crypto czar, and Michael Kratsios, his technology adviser. Other reported members include Sergey Brin and AMD CEO Lisa Su.

TheWhiteBox’s takeaway:

I wonder, why wasn’t Dario Amodei invited? Jokes aside, it seems like a good move to take in the opinion of those at the bleeding edge of the technology. Taking counsel from the private sector is almost never a bad idea in my view, considering politicians are rarely, if ever, sufficiently prepared or informed to make good decisions.

I would hope that one of the key elements is support for open source, which I would expect, given the participation of people like Jensen or Lisa.

The reason is that it’s of utmost interest to both NVIDIA and AMD that AI doesn’t end up being hoarded by a few companies, which would seriously undermine their ability to diversify their customer base.

It’s also noteworthy that the AI Labs were not mentioned, meaning neither Sam, Dario, nor even Elon may participate. Instead, this seems more focused on hyperscalers and hardware companies, which have a much more credible presence in the view of the current Administration, at least from what I see.

PRIVATE EQUITY

OpenAI Promises 17.5% Return to PEs

Reuters reports that OpenAI is offering private-equity firms unusually generous terms to fund a new enterprise AI joint venture, as it competes with Anthropic for large business customers.

According to the article, OpenAI is offering preferred equity with a guaranteed minimum 17.5% return, plus early access to its newest models, which is a richer package than Anthropic’s comparable pitch.

To be clear, Reuters says the partnerships are meant to let OpenAI and Anthropic roll out their AI tools across the many private companies that PE firms already own. In other words, the PE firms bring access to their portfolio companies, and the joint venture helps fund and organize the deployment work.

TheWhiteBox’s takeaway:

Because if you can’t impose enterprise AI adoption, buy it, right?

This just goes to prove how hard it is to actually implement this technology in enterprises beyond toy projects and iterative workflows like coding, to the point that they are offering the owners of those companies economic incentives to achieve such implementations.

To be fair to these Labs, enterprise AI adoption is much more complicated than people realize and thus requires expertise that most companies don’t have.

Importantly, in most cases it also requires model fine-tuning, which demands a specific type of talent that, to this day, is rare. This is why the use of Forward Deployed Engineers (FDEs), popularized by Palantir and now fully adopted by companies like OpenAI, has become so widespread; if your customers don’t have the talent, be the talent for them.

It’s undeniable that for companies like OpenAI or Anthropic, which don’t offer on-premises fine-tuned deployments, going beyond toy projects and pilots is proving really, really hard.

Enterprises lack education on “what’s possible”, the tech savviness to guarantee good implementations, and the major security guarantees that closed-source models can’t offer.

OPENAI

Sora Shuts Down

Sora, one of OpenAI’s biggest launches ever, an AI-native social app that let users create videos, is being shut down. The closure is part of a leaked effort to trim every project that isn’t business-essential, which will end up folding all of OpenAI’s offerings, from ChatGPT to Codex, into a ‘SuperApp’ of sorts.

The problem might have been, once again, unprofitability, as a Forbes article back in November claimed the app was causing $15 million in losses… per day.

TheWhiteBox’s takeaway:

I hated the idea of an AI-native social app from the get-go. It just felt wrong. I never downloaded the app; social media has already separated us enough without adding AI-generated crap to the mix.

Therefore, no tears will be shed.

This also strengthens my belief that the dead Internet theory is more than just a theory, and that experiences that don’t feel human all fail.

The Metaverse? Failed.

Sora app? Failed.

LinkedIn experience lately? Absolute shit.

X? Bot farms at scale.

Incidentally, look what’s booming: podcasts. People yearn for connection and authenticity.

Instead, the Internet is becoming an unlivable place, so god forbid I celebrate dancing on the tomb of yet another massive failure at killing what little genuine human presence there’s left on the web.

HARDWARE

SK Hynix Purchases $8 Billion in EUV Tools

SK Hynix has announced the largest single purchase ever of ASML’s EUV lithography tools to ramp up production of its memory chips by the end of 2027. Reuters describes it as the largest single order publicly disclosed by an ASML customer.

ASML designs a key piece of equipment in the fabs that manufacture NVIDIA, Google, or SK Hynix’s chips. They are some of the most advanced tech humanity has ever built, so I highly recommend you take a look at how they work in this brilliant Veritasium video.

The main point is a major expansion in advanced memory capacity. Reuters says the equipment is expected to be used at SK Hynix’s Yongin plant and its M15X plant in Cheongju, supporting both HBM chips for AI and advanced DRAM.

One analyst estimate in the report puts the order at roughly 30 new EUV machines over two years, meaning each machine is being purchased at the affordable price of about $267 million.

ASML’s total production is around 90 such tools per year, so at roughly 15 machines a year, Hynix is buying about a sixth of ASML’s annual output.
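For readers who want to check the arithmetic, here is a back-of-the-envelope sketch using only the figures quoted above. It assumes the full $8 billion goes to the machines themselves and that deliveries are spread evenly over the two years, neither of which the report confirms.

```python
order_usd = 8e9          # reported order size
machines = 30            # analyst estimate
years = 2                # delivery window in the estimate
asml_annual_output = 90  # approximate EUV tools ASML produces per year

price_per_machine = order_usd / machines
machines_per_year = machines / years
share_of_annual_output = machines_per_year / asml_annual_output

print(f"${price_per_machine / 1e6:.1f}M per machine")         # $266.7M per machine
print(f"{machines_per_year:.0f} machines per year")           # 15 machines per year
print(f"{share_of_annual_output:.1%} of ASML annual output")  # 16.7% of ASML annual output
```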

TheWhiteBox’s takeaway:

This news is more important than it may seem at first. The memory market has a huge shadow on top called cyclicality; the pervasive feeling that this incredible demand for memory chips we are seeing is just a high cycle, and that current revenue and profit predictions are not indicative of future demand.

With that realization, many investors are wary of pouring money into these stocks (SK Hynix, Samsung, Micron) in fear that it will, in fact, be a short-lived bonanza.

To be more specific, when demand is unpredictable, stocks trade at multiples of book value (total assets minus total liabilities) rather than multiples of earnings. Trading on book value is much less attractive, so multiples compress.

This is why SK Hynix is currently trading at a price-to-earnings ratio of 16.5, well below the S&P average of 28 and the 30-ish levels of the average Big Tech company.

And if we factor in forward P/E, the valuation with respect to predicted future earnings for 2026 and 2027, SK Hynix drops to 5 and even 3, respectively, while Big Tech companies enjoy equivalent multiples that are several times larger.
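It helps to see what those multiples imply. Trailing P/E is price divided by past earnings, and forward P/E is price divided by predicted earnings, so at a fixed share price their ratio is the earnings growth that analysts are assuming. The sketch below uses only the three multiples quoted above; `implied_earnings_multiple` is a helper name introduced here for illustration.

```python
def implied_earnings_multiple(trailing_pe, forward_pe):
    """At a fixed price, trailing P/E over forward P/E equals predicted earnings over trailing earnings."""
    return trailing_pe / forward_pe

trailing_pe = 16.5
for label, forward_pe in (("2026", 5), ("2027", 3)):
    growth = implied_earnings_multiple(trailing_pe, forward_pe)
    print(f"{label}: earnings expected at {growth:.1f}x trailing levels")
# 2026: earnings expected at 3.3x trailing levels
# 2027: earnings expected at 5.5x trailing levels
```

In other words, the forward multiples only look this low because earnings are forecast to multiply several times over, which is exactly the forecast that cyclicality fears call into question.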

Of course, if investors realize they should be valuing the company based on earnings, this stock is considerably undervalued. Instead, if fears materialize and demand falls in 2028 onward, SK Hynix could be fairly valued.

But may I ask you: would SK Hynix be pouring tens of billions of dollars into ramping up production for 2027 onward if demand guidance were set to collapse in 2028?

Such a huge EUV order is a sign of panic buying (I’m seeing huge demand, I want to meet it, so I buy a lot of equipment in advance).

I once said that tracking EUV orders was one of the best ways to predict future AI spending. And this particular news shows that not only is AI spending going to continue, but memory will remain a key element.

This is not financial advice. And to be clear, I’m an SK Hynix investor myself.

FUNDING

OpenAI Raises Total Amount to $120 Billion

Speaking of OpenAI, CFO Sarah Friar told CNBC that the company is adding another $10 billion to its recent fundraising, bringing the total round to more than $120 billion, above its original $100 billion target.

The interview also emphasized how quickly OpenAI’s business has scaled. Interestingly, Friar said roughly 60% of revenue currently comes from consumers and 40% from enterprises, but enterprise is growing faster, and the mix could become about 50-50 by the end of the year. She emphasized the importance of enterprise because it can become highly profitable at scale and help make the business sustainable.

Friar also addressed IPO plans. She did not commit to a listing date, but said OpenAI is building toward being ready for the public markets.

Her message was that the company needs to be operationally healthy and mature enough for an IPO, and that this huge private funding round reduces timing pressure if the company is ready before market conditions are. Still, she expects a “soon-ish” IPO, maybe even this year.

Another major topic was capital intensity and compute constraints. The article says OpenAI has scaled back its longer-term infrastructure ambitions, now targeting around $600 billion in total compute spend through 2030, down from the much larger figure Sam Altman had discussed previously (around $1.4 trillion).

TheWhiteBox’s takeaway:

OpenAI's capacity to raise capital is unprecedented. $120 billion is almost enough to get you in the top 100 most valuable companies. For reference, that’s more than Accenture’s entire market capitalization.

Whether they can use that money to turn a profit is another story, but from the previous news, it’s clear they now feel the pressure to spend every single dollar wisely.

Among other things, the company that is supposed to destroy the job market is now planning to double its headcount to 8,000 while simultaneously announcing up to $1 billion in foundation spending to offset some of the job losses they think they’ll cause.

But to me, no matter how much money they raise, OpenAI’s business model will never make sense unless they have a pretty successful venture into the enterprise, and their recent moves prove the point perfectly.

They are pushing hard for it, but I remain skeptical about how much penetration a general-purpose, unreliable model business can have in enterprises, which require the exact opposite: models that need not be general but must execute their tasks to perfection.

THEWHITEBOX

ChatGPT adds a library

ChatGPT now has a File Library that automatically saves files you upload or create, so you can find and reuse them later instead of hunting through old chats.

OpenAI’s release notes say this applies to PDFs, spreadsheets, images, and other files, and that you can browse them from a Library tab in the sidebar or ask ChatGPT questions about files you previously saved.

TheWhiteBox’s takeaway:

While this is a neat addition for those of us who send OpenAI a lot of files, it mostly benefits them; they are probably cold-storing the cached activations from processing these files somewhere, which saves reprocessing that can be very expensive if the files are large. It’s a prompt-caching technique, basically.
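The general idea of not processing the same file twice can be sketched with a content-addressed cache. To be clear, this is not OpenAI's implementation, and whether they do this at all is my guess: `FileCache` and `expensive_processing` are hypothetical stand-ins, and a real system would store model activations rather than a word count.

```python
import hashlib

class FileCache:
    """Cache processing results keyed by a hash of the file contents."""

    def __init__(self, process):
        self._process = process
        self._store = {}
        self.misses = 0  # number of times the expensive step actually ran

    def get(self, data: bytes):
        key = hashlib.sha256(data).hexdigest()
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._process(data)
        return self._store[key]

def expensive_processing(data: bytes) -> int:
    # Stand-in for the costly step (e.g. running a large file through a model).
    return len(data.split())

cache = FileCache(expensive_processing)
first = cache.get(b"quarterly report with many pages")
second = cache.get(b"quarterly report with many pages")  # served from the cache

print(first, second, cache.misses)  # 5 5 1
```

Keying on the content hash rather than the file name means a re-uploaded copy of the same file is recognized even if it has been renamed.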

Still cool!

AGENTS

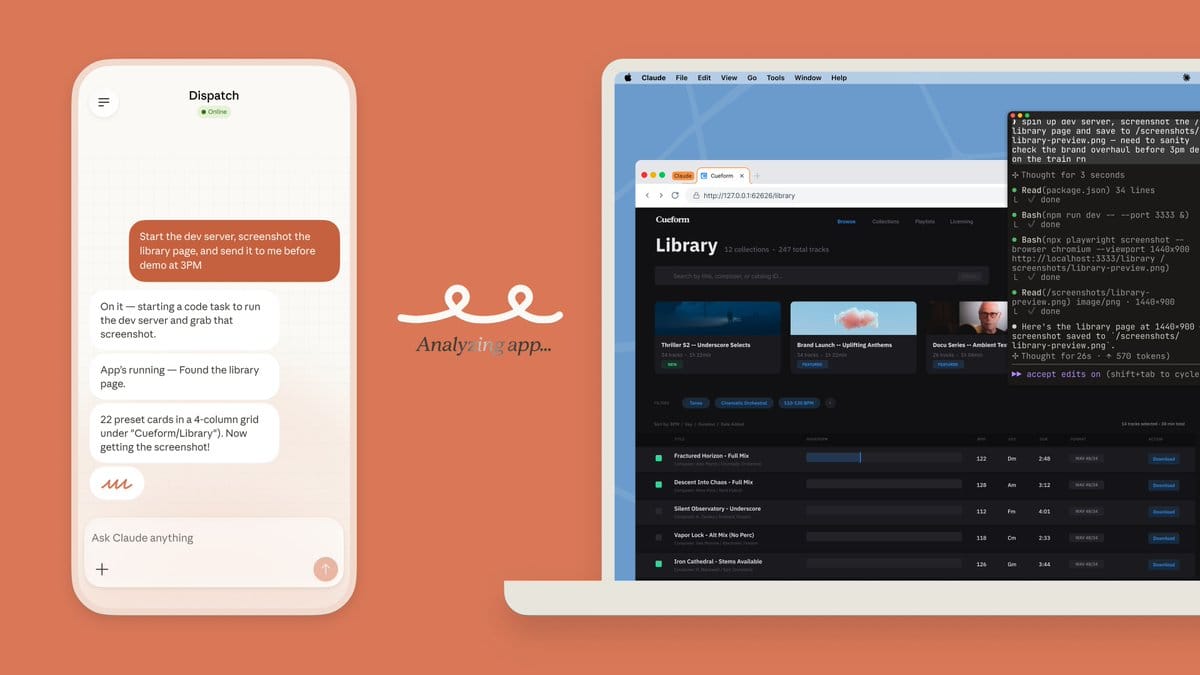

Claude Combines Dispatch and Computer Use

Anthropic is shipping new features at a pace that is basically unmatched, and that’s saying something. Now they’ve added Computer use support to the Dispatch feature.

In layman’s terms, not only can you control an agent running on your computer from your smartphone, but this agent can now interact with your computer as you would.

The appeal is clear: imagine you’re walking your dog and you realize you have to send an invoice right quick. Dispatch your agent to generate the invoice for you.

TheWhiteBox’s takeaway:

To be clear, I remain skeptical of computer-use agents. First, I don’t trust them to execute reliably. Second, I would much prefer to connect the agents to the applications via API or CLI, which is safer and less error-prone.

In other words, using the invoice example, instead of having the agent interact with my Stripe dashboard, it’s preferable to just give the agent access to the Stripe SDK and perform the same action programmatically.

At the end of the day, these agents are much better coders than anything else. Therefore, to me, all computer use features look like patches that won’t be needed soon enough. We don’t have to make agents good at our interfaces; we must create an Internet that’s native to them, making them first-class citizens.

That said, it's really cool technology for visualizing how impressive some of the things you can do with these agents today already are.

IMAGE GENERATION

Sub-second Image generation is here

Modular has announced sub-second image generation on its platform. That is, they claim they can generate images in some cases in under 1 second, a feat unseen in AI today.

The secret is not a mysterious new model. It’s just engineering. They claim most image-generation stacks are completely unoptimized, and they prove this by generating custom kernels (the programs GPUs run) and leveraging several infrastructure tricks to achieve this amazing performance on a state-of-the-art model, Flux 2.

TheWhiteBox’s takeaway:

This just goes to prove how early we are in this technology: there's still so much low-hanging fruit out there.

This isn't surprising; image generation isn’t as popular as text or code generation, so popular runtime stacks like torch.compile or even NVIDIA’s own CUDA libraries haven’t focused as much on it.

SOFTWARE DEVELOPMENT

Real-time Websites with Gemini 3.1 Flash Lite

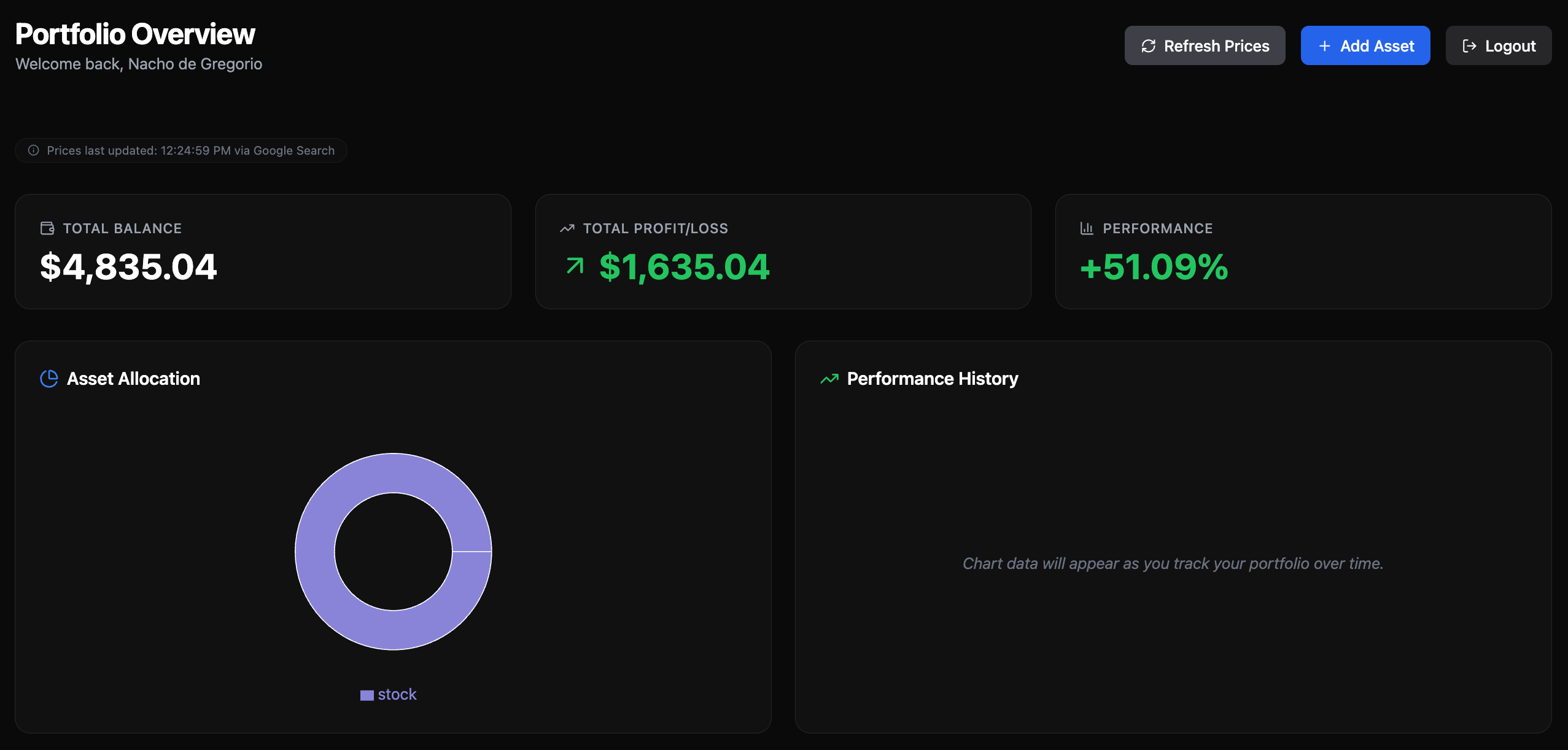

Generated app. Numbers are fake

Gemini has launched a pretty impressive demo of Gemini 3.1 Flash Lite, which you can try here, that allows you to generate websites on the spot. The most impressive part is shown in this demo, where the agent creates the different pages on the spot. That is, it generates the next page as you click it, implying truly real-time generation.

I tried the demo myself and had it generate a wealth tracker (see above). It created a Firebase account (to handle all the backend stuff, like the database) and tracked real stock prices, building an actual app with Google OAuth and all on the spot; very impressive.

But… do we need this?

TheWhiteBox’s takeaway:

The demo is incredible, although not as real-time as the video shows. But the question is: why? Do we need real-time website generation?

We… don’t?

This seems more like something to earn bragging rights than something actually demanded by the market. It’s a way to show how fast the Lite model is (up to 2,000 tokens/second) while still generating what you asked for.
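To put that speed in perspective, here is a quick estimate of how long a page would take to generate at the quoted peak rate. The page sizes are hypothetical, and the calculation ignores network latency, time to first token, and rendering, so treat the results as lower bounds.

```python
TOKENS_PER_SECOND = 2_000  # peak speed quoted above

def generation_seconds(page_tokens, tokens_per_second=TOKENS_PER_SECOND):
    """Time to stream a page of the given size at a constant token rate."""
    return page_tokens / tokens_per_second

for page_tokens in (500, 2_000, 6_000):  # hypothetical page sizes
    print(page_tokens, generation_seconds(page_tokens))
# 500 0.25
# 2000 1.0
# 6000 3.0
```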

As with all things Google, absolutely incredible tech, dubious go-to-market.

Closing Thoughts

Once again, a pretty eventful week. Product shipping speeds are just too fast; it’s impossible for me to cover even 5% of what’s being released (though many of these products are crap wrapped in ✨AI✨).

To me, the biggest takeaways are three:

Finally, memory companies are fully committing to increasing supply capacity, giving us hope that the AI spending run will not abruptly end in 2028.

The CPU market is getting hotter, signaling that the world is soon going to realize we need way more CPUs than we have right now. And before you ask, no, cloud-based CPUs aren’t AI-ready; we need AI CPUs (much higher core counts).

But the obvious highlight of the week is Elon Musk’s Neuralink VOICE tool, which magically restores speech to people who sadly lost it. This is what AI should be about: driving humanity forward, not as a tool to hire less or fire fast.


For business inquiries, reach out to me at [email protected]