THEWHITEBOX

4 Ways AI is Improving (and Worsening) the World, Beyond LLMs

These days, AI is almost synonymous with Large Language Models (LLMs) and multi-hundred-billion-dollar data center buildouts.

But AI is changing the world around us in many more ways that go completely under the radar, yet could be just as significant as ChatGPT.

Today, I’m still compelled to share what is clearly the ‘next step’ on the LLM progress ladder, showing you why the bad press Opus 4.7 has gotten is largely misguided: this model is not only the smartest model you’ve ever used, but also a sign of changing times. Still, most of what we’ll cover has little to do with LLMs, yet it is just as impressive and, in some cases, outright scary.

After all, I never said these innovations were necessarily good for us. My goal for today is to make sure you internalize that AI is far more than just LLMs.

Let’s dive in.

Token Efficiency, the New Mantra

The LLM topic of today is what I believe is the next instrumental step in AI progress: per-forward-pass intelligence.

Let me show you with a visual example.

Opus 4.7 Feels Worse, but it’s actually smarter

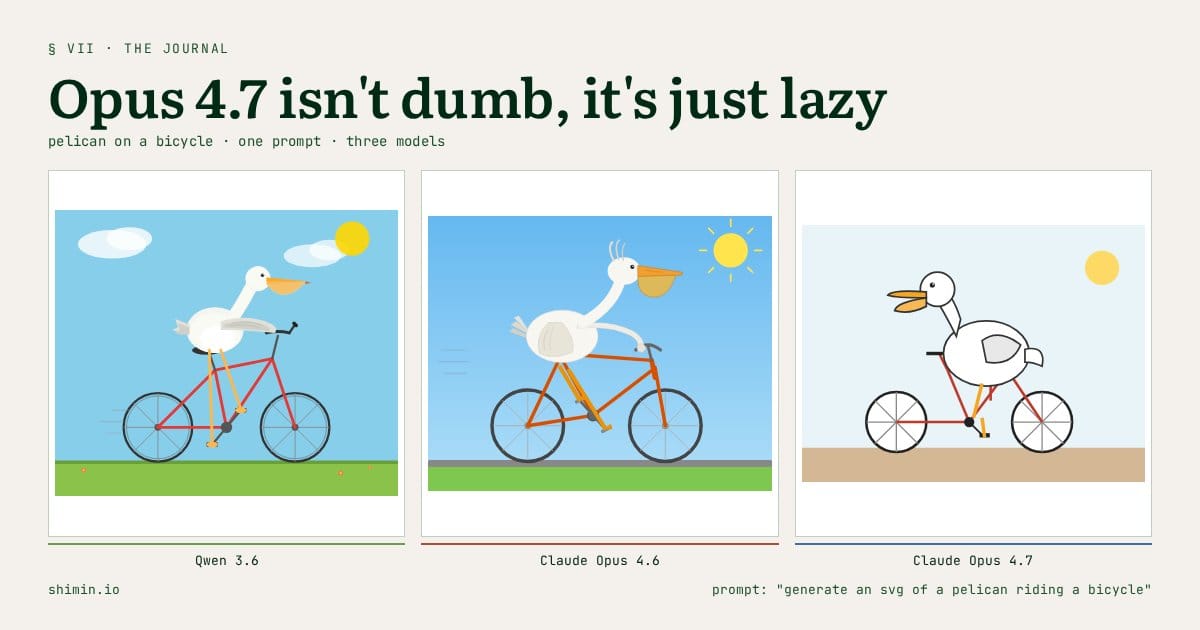

As we covered two days ago, many people have been complaining about Anthropic’s new model, Opus 4.7. The reason is that, relative to other frontier models and even to Opus 4.6, it showed some regressions, making it, in many regards, a worse model than its predecessors.

In some cases, the model was extremely condescending toward users. In some other cases, it felt like the model wasn’t even trying, as if it had become lazy.

This was, of course, a negatively received release (and with good reason). However, I believe that what is going on is not that the model is worse; it’s that it’s different. That, and Anthropic hasn’t quite figured out yet how to serve this “new” model.

But why do I say it’s different, and what do I mean by ‘different’? The answer is token efficiency. This is, my dear reader, unlike any other LLM you’ve ever talked to.

Let me explain.

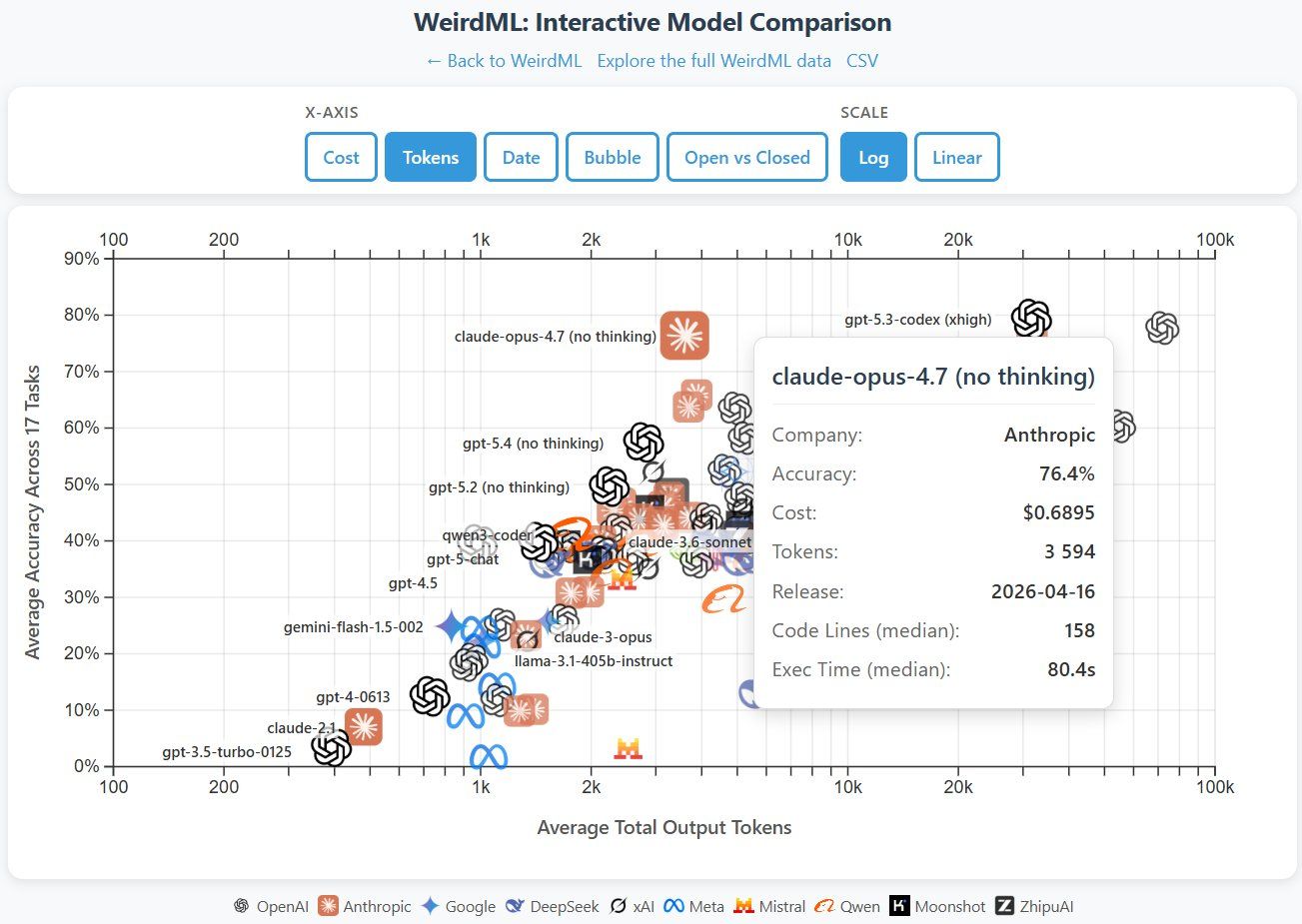

The chart shows the striking difference in how this model behaves relative to other frontier models (GPT-5.4 and GPT-5.3-Codex, the two highest scorers): Opus 4.7 scores in the top 4 while requiring far fewer tokens, literally an order of magnitude fewer than other models with similarly top results.

In other words, measured by a single forward pass, Opus 4.7 is undoubtedly the “smartest” model we’ve ever seen.

But what do I mean by “smartest by forward pass”?

Let me put it this way. My gut tells me that the results “are worse” because overall thinking has indeed decreased for now, while Anthropic searches for the sweet spot, but on the margin, this is the smartest model ever released.

This probably makes zero sense right now, but bear with me.

What makes the AI behind Opus 4.7 different

AI models map inputs to outputs: they use the patterns in the input to predict the output.

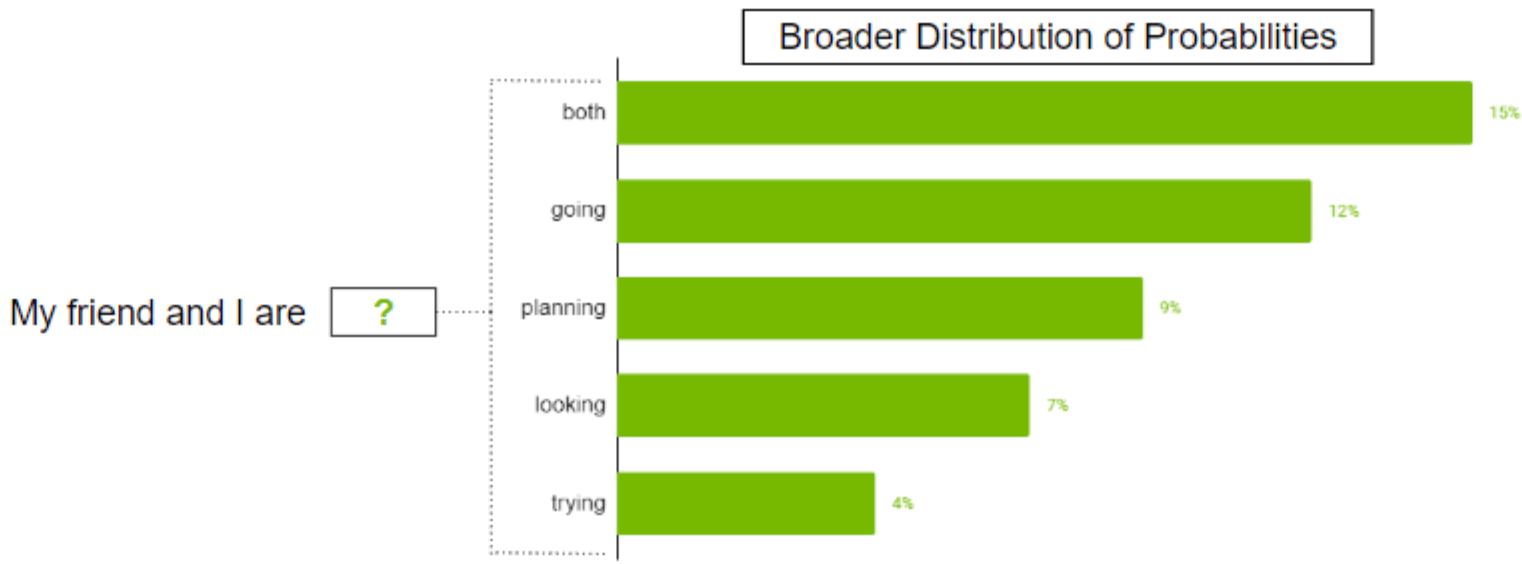

In particular, LLMs are autoregressive. This means they take in a sequence of tokens (text, code, images, and whatever else) and return a continuation of that sequence by predicting a probability distribution over the next token based on the previous ones.

Source: NVIDIA

In plain English, they answer, “Based on the user’s text sequence and out of all the tokens (e.g., words) I know, which one is likely to go next?” That process generates a ranked list of candidates, and one of the top candidates is selected.
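Here’s a minimal Python sketch of that “ranked list of candidates.” The scores (logits) are invented for illustration, and top-k sampling is just one common way to pick “one of the top candidates”; real systems may use greedy decoding, temperature, or top-p sampling instead, and this is not any lab’s actual decoder.

```python
import math
import random

def softmax(logits):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    m = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - m) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def ranked_candidates(logits):
    """Return (token, probability) pairs, most likely first."""
    probs = softmax(logits)
    return sorted(probs.items(), key=lambda kv: kv[1], reverse=True)

def sample_top_k(logits, k, rng):
    """Keep only the k most likely tokens and draw one, weighted by probability."""
    top = ranked_candidates(logits)[:k]
    tokens = [tok for tok, _ in top]
    weights = [p for _, p in top]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores a model might assign after "The cat sat on the"
logits = {"mat": 4.0, "sofa": 3.2, "roof": 2.5, "moon": 0.1}
for tok, p in ranked_candidates(logits):
    print(f"{tok:>5}: {p:.3f}")
print("picked:", sample_top_k(logits, k=2, rng=random.Random(0)))
```

Setting `k=1` turns this into greedy decoding, which always picks the single most likely token.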

Once a token is chosen, it’s appended to the sequence, and then the model “reruns” the entire process again with the new sequence (the old one plus the newly predicted word), generating the next, and the process repeats until the model decides it has finished.
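And here’s the loop itself, as a toy sketch. The hypothetical `TOY_MODEL` table stands in for a real network (which would condition on the entire sequence, not just the last token), and greedy decoding is used for simplicity. Note how every generated token costs one pass through the model.

```python
# Toy "model": for each previous token, a hypothetical distribution over the next one.
# A real LLM conditions on the whole sequence, not only on the last token.
TOY_MODEL = {
    "<start>": {"the": 0.9, "a": 0.1},
    "the": {"cat": 0.6, "dog": 0.4},
    "a": {"cat": 0.5, "dog": 0.5},
    "cat": {"sat": 0.7, "<end>": 0.3},
    "dog": {"ran": 0.8, "<end>": 0.2},
    "sat": {"<end>": 1.0},
    "ran": {"<end>": 1.0},
}

def forward_pass(sequence):
    """One prediction: look at the sequence, return next-token probabilities."""
    return TOY_MODEL[sequence[-1]]

def generate(max_passes=10):
    sequence = ["<start>"]
    passes = 0
    while passes < max_passes:
        probs = forward_pass(sequence)          # rerun the "model" on the sequence so far
        passes += 1
        next_token = max(probs, key=probs.get)  # greedy: take the most likely token
        if next_token == "<end>":               # the model decides it has finished
            break
        sequence.append(next_token)             # append the new token and repeat
    return sequence[1:], passes

tokens, passes = generate()
print(" ".join(tokens), f"({passes} forward passes)")
```

Running it prints `the cat sat (4 forward passes)`: three words plus one final pass in which the model “decides” to stop.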

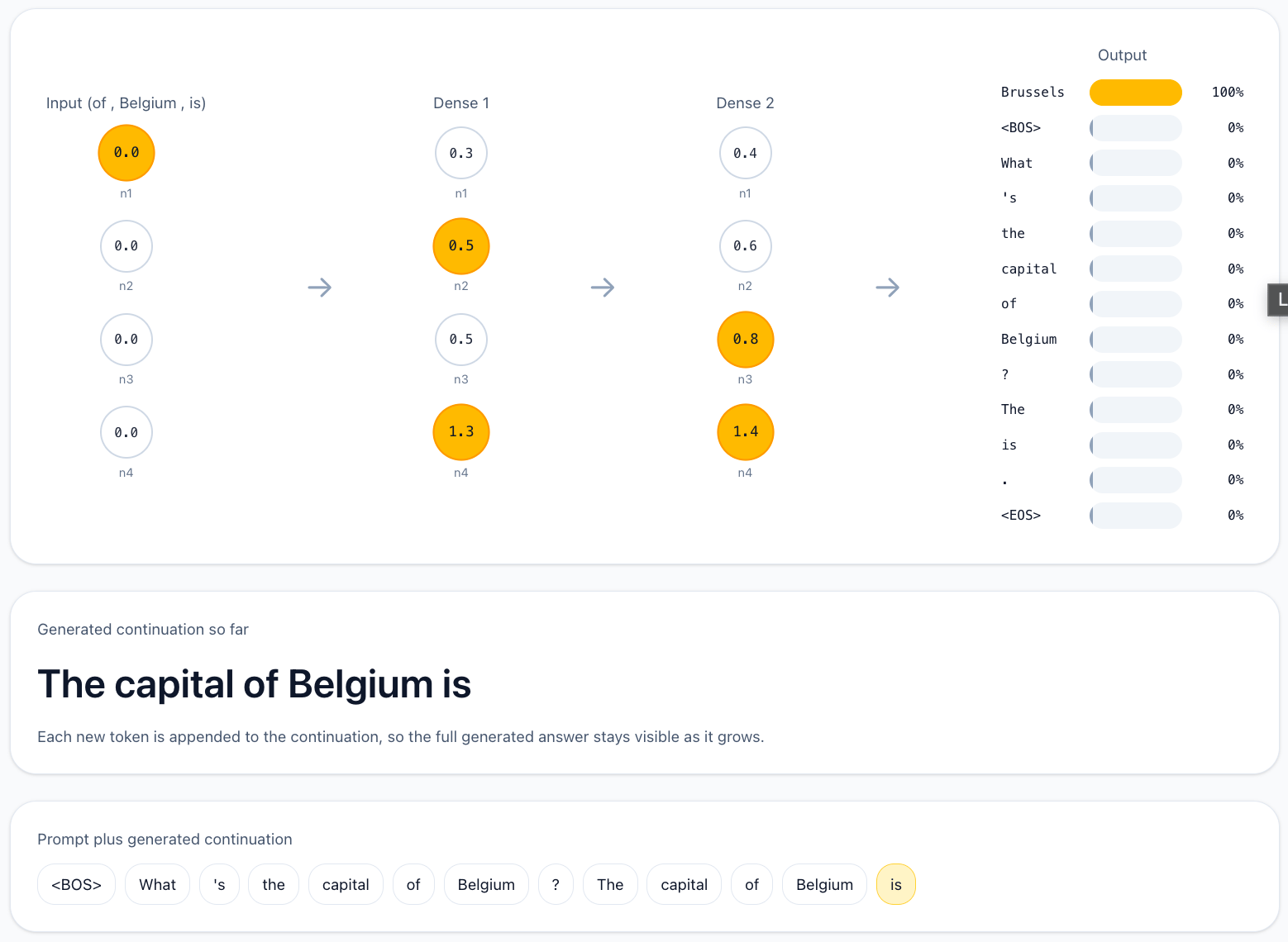

I’ve built a small, free interactive app with ChatGPT that showcases this idea in simplified form. You can modify it if you want, and it shows how the autoregressive process works.

Snapshot of the app that shows how the model’s output is not a single word but a distribution from which the most likely word gets chosen.

Each of these individual predictions is called a ‘forward pass’ because the sequence is fed forward through the model, layer by layer, to generate the next word. And here’s precisely where things have started to change with Opus 4.7.

As I’ve repeated countless times, there are two ways to make AIs smarter: make them bigger or give them more room to think.

Over the last two years, “progress” has been largely defined not by the quality of each individual prediction but by the number of them. That is, by the latter option, known as inference-time compute: giving models longer thinking budgets per task.

Why? Simple: we couldn’t make models any bigger due to hardware constraints. We were stuck with model size, so giving them larger and larger thinking budgets was our only option.

But as Mythos recently showed, with new hardware generations like Blackwell, we can build larger models, and we’re doing so.

As we have commented recently, we can now train these models, but we cannot serve them yet. This, added to Opus 4.7’s different behavior, tells me this is clearly a Mythos distillation. In other words, it’s a model that has been trained to imitate Mythos while being much smaller to make it “deployable.”

But what happens when we increase model size? When you make models bigger (and this is what I’m trying to get at), you increase the number of layers and thus the model’s intrinsic reasoning: its ability to think more per prediction. Until now, we were stuck with a fixed brain size and worked around it by giving models more thinking time.

Now, with a larger brain, the ‘quality’ of each prediction is considerably better because the model can think more on every single prediction. That would explain Opus 4.7’s radically different behavior from previous models: scoring as high as them while requiring a considerably smaller token footprint.

A more efficient model, in other words. And if this is true, which I’m strongly convinced it is, it has huge implications.

Costs, hardware, and markets

While most accelerated hardware still needs to reach ‘Blackwell scale’ before models like Mythos can hit the streets, this shift is already going to make an impact.

Currently, the entire hardware layer is being overhauled to accommodate longer thinking budgets (i.e., the number of predictions a model can make to solve a task).

The reason is that longer sequences imply higher memory requirements (since the model must hold more tokens in its working memory).
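To see why, here’s a back-of-the-envelope sketch of the key-value (KV) cache, which is where that “working memory” typically lives in a transformer: for every token already in the sequence, each layer stores a key and a value vector so they don’t have to be recomputed. The configuration below is hypothetical (not Opus or any specific model), assumes 16-bit values, and ignores model weights, activations, and cache-compression tricks.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2, batch=1):
    """Memory needed to keep keys and values for every token in the sequence."""
    # Factor 2 = one key vector and one value vector per token, per layer, per KV head.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * seq_len * batch

# Hypothetical model configuration, for illustration only.
config = dict(n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_value=2)

for seq_len in (4_096, 32_768, 131_072):
    gib = kv_cache_bytes(seq_len, **config) / 2**30
    print(f"{seq_len:>7} tokens -> {gib:6.2f} GiB of KV cache per sequence")
```

With these assumptions, the cache grows linearly with sequence length: 1.25 GiB at 4,096 tokens, 10 GiB at 32,768, and 40 GiB at 131,072, per sequence, before counting the weights or any other concurrent users.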

It’s not a coincidence that memory companies (SK Hynix, Samsung, and Micron) have all entered the top 15 most profitable companies on the planet just as reasoning models (LLMs with large thinking budgets) became popular.

Consequently, if we assume the world is about to see models that “talk” more and more (models need to verbalize their thinking in order to think), hardware becomes mostly a game of how much memory you can fit into each chip and, by extension, into each server.

However, this is a very naive way of making progress, because there’s never going to be anything like “enough memory.” If models need longer sequences to be smarter, you’re naturally incentivized to make those sequences ever longer.

This is stupidly expensive and, crucially, we don’t have nearly enough compute to keep scaling the way we’re doing right now. Compute is so scarce that Anthropic has signed deals for 8.5 GW of new compute in just the last 10 days, the latest of which was announced yesterday with Amazon, totaling approximately $425 billion in investment.

And while the appetite for compute won’t fall, this path is totally unsustainable.

Luckily, our ability to train larger models opens a new avenue of intelligence progress (the original avenue, one could say): intrinsic reasoning, increasing the quality of each individual prediction so that sequence lengths can drop while intelligence doesn’t (i.e., if a model is three times smarter per prediction, it needs roughly three times fewer predictions).
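The arithmetic behind that trade-off can be sketched with a common rule of thumb: a dense transformer spends roughly 2 FLOPs per parameter for every generated token, i.e., per forward pass. The model sizes and token counts below are hypothetical, and the rule ignores attention’s growing cost over long sequences, mixture-of-experts sparsity, and serving overheads.

```python
def generation_flops(n_params, n_tokens):
    """Approximate decoding cost: ~2 FLOPs per parameter for each generated token.

    Each token costs one forward pass; this ignores the attention term that grows
    with sequence length, as well as batching and other serving overheads.
    """
    return 2 * n_params * n_tokens

# Hypothetical baseline: a model that "thinks out loud" for many tokens.
base = generation_flops(n_params=100e9, n_tokens=30_000)

# A 3x larger model that needs 3x fewer tokens (the ~3x heuristic above).
same_ratio = generation_flops(n_params=300e9, n_tokens=10_000)

# A 3x larger model that needs 10x fewer tokens (an order-of-magnitude drop).
order_of_mag = generation_flops(n_params=300e9, n_tokens=3_000)

print(f"3x bigger, 3x fewer tokens:  {same_ratio / base:.2f}x the baseline compute")
print(f"3x bigger, 10x fewer tokens: {order_of_mag / base:.2f}x the baseline compute")
```

Under this rough model, a 3× larger model that needs 3× fewer tokens is compute-neutral, but it reaches its answer in 3× fewer sequential forward passes over a 3× shorter sequence; an order-of-magnitude drop in tokens cuts compute to 0.30× of the baseline.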

Which is to say, the next generation of models will behave much more like Opus 4.7 than like anything else we’ve seen in the past two years, minus the laziness, which is just Anthropic not having figured out yet how to do this properly.

Therefore, over the next few months, we’re going to see huge pressure on forward-pass performance: models won’t only be judged by inference-time compute (which could even be seen as wasteful), but also, heavily, by per-prediction intelligence.

To be clear, I’m not saying inference-time compute is going to fall out of fashion (it clearly works, and no matter how large models become, they’ll benefit from longer thinking times). But as the only way to scale, it was sending AI Labs into a spiral of losses, and with price subsidies ending, the days of ‘growth at all costs’ seem to be coming to an end.

Now that I’ve gotten my intuitions about where LLMs are going out of my system, let’s move on to what I believe is much more fascinating stuff: areas where AI is making an impact that have nothing to do with LLMs and that you’ve quite possibly never heard about.

Boxcrete, Using AI for… Concrete Discovery?

The first one concerns, of all possible things, concrete. And while that sounds about as interesting as watching paint dry, it’s actually fascinating because it teaches the most important lesson one can learn from AI.

Concrete, almost as essential as water

Did you know that concrete is the second-most widely consumed material on Earth, after water, with an estimated annual production of approximately 10 billion m³ (~30 billion tonnes)?

Its key ingredient, and simultaneously the most expensive and CO2-intensive one, is cement, with an estimated annual production of 4 billion tonnes.

And with new housing quite possibly the only way to bring housing prices down, concrete will only become more important over the next few years.

Its heavy use, combined with its significant CO2 emissions, has forced the industry to seek new formulations that reduce its carbon footprint.

Among those, the most promising is partial substitution of cement with SCMs (Supplementary Cementitious Materials), such as fly ash or slag. This has many benefits, but ease of finding the right mixture is certainly not one of them.

This has turned mix design into a highly empirical discipline, paired with the worst possible traits: high variance and a high-dimensional space of many interdependent variables.

In plain English, there are a huge number of possible combinations with outcomes that are very hard to predict unless they are tested individually.

The problem? This experimentation is not only expensive but also painfully slow, with tests taking up to 28 days as the mixtures go through a “curing” process during which they progressively gain strength.

While in Spain we cure ham and cheese, the world cures concrete.

And here’s where AI comes in.

I’ve long argued that AI is an excellent tool for discovery. As mentioned, it can process vast amounts of data and see “what repeats”. That is, it finds the patterns in the data, fancily known as ‘regularities’, and uses those findings to make inferences about the data.

For example, an AI can analyze a large amount of housing data and predict housing prices with great accuracy by identifying correlations, such as ‘the more rooms the house has, the more expensive it probably is’.

‘Probably’ is the keyword here; AIs never output certainties, only probabilities.

AI has even been shown capable of “discovering” gravity from astronomical data: the AI was never told about the concept of gravity; it inferred it from planetary masses and their effects.

This is a form of inductive discovery, going from the specific to the general (i.e., inferring general laws from massive data). It’s the opposite of what humans, naturally bounded by how much data they can process, usually do: deductive discovery, from the general to the specific (i.e., proposing general laws and testing them on data).

With that in mind, it’s not hard to see where I’m going: Meta designed Boxcrete, a model that forecasts mixture strength and suggests concrete mixtures by learning from existing concrete data, reducing the need for physical experimentation.

In other words, the model has found “what makes a mixture have a particular strength” and can make such inferences without the expensive experimentation.

And if this wasn’t fascinating enough, how they did it was even more interesting: they didn’t use neural networks.

Neural networks are the backbone of most new AI models because they simply work better than anything else. However, they are very data-inefficient; basically, they need a lot of data to learn.

As you may infer, concrete data isn’t exactly abundant. In fact, Boxcrete was trained on just 533 experiments: a lot for a human to process, but a non-starter for neural nets, which require millions of data points just to get started (LLMs today are fed dozens of trillions of tokens).

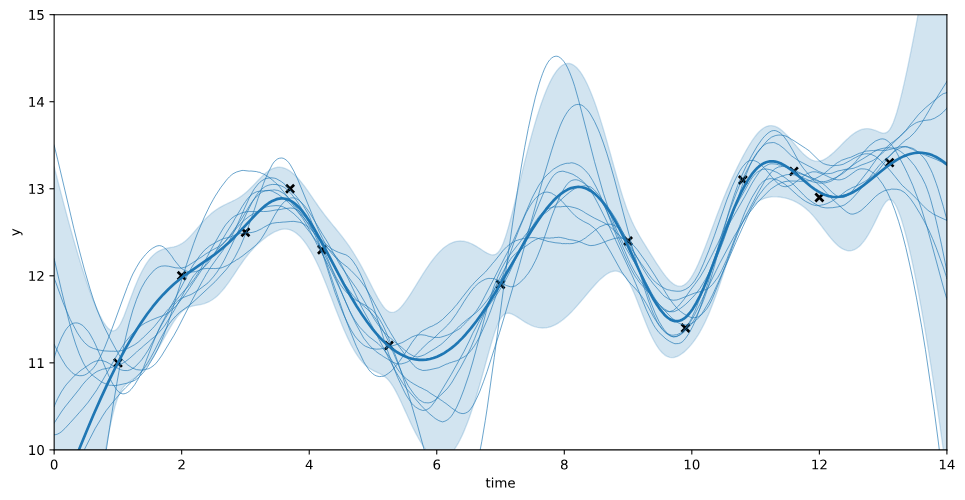

To work around this, they use a combination of Gaussian processes and Bayesian optimization.

Too complicated to explain in full, but the idea is as follows: Gaussian processes, just like any method to train AIs, map an input to an output. The difference is that they do not output a single value, but a distribution over possible functions.

That may have sounded like gibberish, but the idea is that AIs usually find a single x→y mapping function (once trained, AIs are fixed and don’t change unless we retrain them), meaning they have found “the” solution.

This is part of the reason why we have to make them so massive; the only way they can find “a universal solution” to many different x→y mappings is to be incredibly large.

Instead, Gaussian processes assume that, in some situations, there’s no such thing as a “universal mapping,” and that some relationships come from different mappings weighted by probability.

As you can see below, the x→y mapping is displayed as an “average” of different functions weighted by importance (each function is a curve), to naturally account for the high uncertainty of the prediction.

For example, for the same housing example as earlier, a Gaussian process behaves a bit like a cautious forecaster: it keeps track of many different ways the price could depend on things like rooms, size, and location. A neural net is more like a decisive forecaster: it learns one overall rule that seems to predict prices best from the examples it has seen.

How GPs model uncertainty, not just one possible solution (bold blue line).

Also, Gaussian processes are highly data-efficient, so they can converge to a solution with just a few examples, which is precisely the case here.
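The uncertainty idea is easy to see in code. Below is a minimal, dependency-free sketch of GP regression with a squared-exponential (RBF) kernel on five made-up points. At any single input, the distribution over functions boils down to a mean and a variance. The kernel choice, length scale, and data are assumptions for illustration, not Boxcrete’s actual model, which works over many mixture variables rather than a single input.

```python
import math

def rbf(x1, x2, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel: nearby inputs get strongly correlated outputs."""
    return variance * math.exp(-0.5 * ((x1 - x2) / length_scale) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small systems only)."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def gp_posterior(xs, ys, x_new, noise=1e-6, **kernel_args):
    """Posterior mean and variance of a zero-mean GP at x_new, given observations."""
    K = [[rbf(a, b, **kernel_args) + (noise if i == j else 0.0)
          for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new, **kernel_args) for a in xs]
    alpha = solve(K, ys)  # K^-1 y
    v = solve(K, k_star)  # K^-1 k*
    mean = sum(k * a for k, a in zip(k_star, alpha))
    var = rbf(x_new, x_new, **kernel_args) - sum(k * w for k, w in zip(k_star, v))
    return mean, max(var, 0.0)

# Five hypothetical observations of an unknown 1-D function.
xs = [0.0, 1.0, 2.0, 3.5, 5.0]
ys = [0.0, 0.8, 0.9, -0.4, -1.0]

for x in (1.0, 2.7, 8.0):
    m, var = gp_posterior(xs, ys, x)
    print(f"x={x}: mean={m:+.2f}, std={math.sqrt(var):.2f}")
```

Near an observed point (x=1.0), the mean sticks to the data and the standard deviation collapses toward zero; far from all observations (x=8.0), the mean falls back to the prior (about zero) and the standard deviation returns to about 1, which is the model’s way of saying “I don’t know.” In practice, you’d reach for a library such as scikit-learn’s `GaussianProcessRegressor` rather than hand-rolled linear algebra.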

However, with so little data, we need a smart way to decide which mixture to test next. This is where Bayesian optimization comes in.

The idea is to have the process evolve as it gathers feedback: the model proposes a mixture based on what it currently knows, the mixture is tested, and that feedback “guides” the next proposal as the model readjusts its “beliefs.”

This means that Boxcrete doesn’t truly eliminate the need for testing; it narrows the search down to highly promising mixtures that are then progressively optimized.
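The propose, test, and update loop can be sketched as follows. This is a toy, one-dimensional stand-in: the single input (a hypothetical cement-replacement fraction), the made-up `lab_test` strength curve, and the upper-confidence-bound (UCB) acquisition rule are all assumptions for illustration. The source doesn’t say which acquisition function Boxcrete uses, and real mix design has many more variables.

```python
import math

def kernel(a, b, length=0.15):
    """RBF kernel over a 1-D input scaled to [0, 1] (hypothetical SCM fraction)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular L with L L^T = A (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def chol_solve(L, b):
    """Solve (L L^T) x = b with forward, then backward, substitution."""
    n = len(L)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def posterior(xs, ys, x, noise=1e-4):
    """GP posterior mean and standard deviation at x (the model's current beliefs)."""
    K = [[kernel(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    k_star = [kernel(a, x) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, chol_solve(L, ys)))
    var = kernel(x, x) - sum(k * w for k, w in zip(k_star, chol_solve(L, k_star)))
    return mean, math.sqrt(max(var, 0.0))

def lab_test(x):
    """Stand-in for a slow physical test: a made-up strength curve peaking at x=0.35."""
    return math.exp(-((x - 0.35) / 0.2) ** 2)

def ucb(x, xs, ys, beta):
    """Optimistic score: predicted strength plus beta standard deviations of doubt."""
    m, s = posterior(xs, ys, x)
    return m + beta * s

def bayes_opt(n_rounds=8, beta=2.0):
    candidates = [i / 100 for i in range(101)]
    xs, ys = [0.0, 1.0], [lab_test(0.0), lab_test(1.0)]  # two initial experiments
    for _ in range(n_rounds):
        untested = [c for c in candidates if c not in xs]
        x_next = max(untested, key=lambda c: ucb(c, xs, ys, beta))  # propose
        xs.append(x_next)
        ys.append(lab_test(x_next))  # "run" the experiment; beliefs update next round
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best], len(xs)

x_best, y_best, n_tests = bayes_opt()
print(f"best fraction ~ {x_best:.2f} (strength {y_best:.2f}) after {n_tests} tests")
```

Each round, UCB picks the untested mixture whose optimistic estimate (mean plus `beta` standard deviations) is highest, so the loop balances exploiting promising regions against exploring uncertain ones; raising `beta` makes it more exploratory.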

Impressive, right? This is why Boxcrete was something I particularly wanted to talk about, because it teaches us AI’s most important lesson:

Its value comes not from the perspective of use cases, but from the perspective of data. What can be compressed and measured can be learned.

But if Boxcrete was interesting, it pales in comparison to the two use cases coming next: one that aims to solve one of humankind’s biggest mysteries, and one that will probably scare you more than excite you.

The only thing I’ll say is the following: dogs and turbocharged human profiling. What’s not to like? Well, a lot, actually.
