THEWHITEBOX

TLDR;

This week, we have fascinating research from Epoch AI that explains a fundamental misconception in AI progress, new models from Anthropic and OpenAI, and from Chinese Labs, including new leaked data on the most hyped of them all: DeepSeek v4.

We also have insights into how the current AI investment boom compares to previous ones, as well as new products from companies like Cursor and Quiver.

Finally, we analyze Cerebras, covering key facts about the company as it prepares for its imminent IPO.

Enjoy.

RESEARCH

Are Models Stagnating?

One of the most debated topics today is whether AIs are stagnating. The key “issue” is that most progress today can be explained more by what you build around models than the models themselves.

For instance, Anthropic showed that Haiku, its smallest model, beat Opus, its frontier model, on almost every task when Haiku ran inside a harness and Opus ran without one.

This immediately raises the question as to whether we’ve crossed the “neurosymbolic” barrier, the moment where most progress is driven by the system, or harness, aka old-fashioned AI, rather than the actual model being smarter.

And the answer is, well, sort of. I both agree and disagree.

Yes, most progress variance can be attributed to the harness these days (it would be dishonest not to acknowledge this), but that doesn’t mean the underlying AIs aren’t improving (see Mythos).

But there are several reasons why this is hard to see for most:

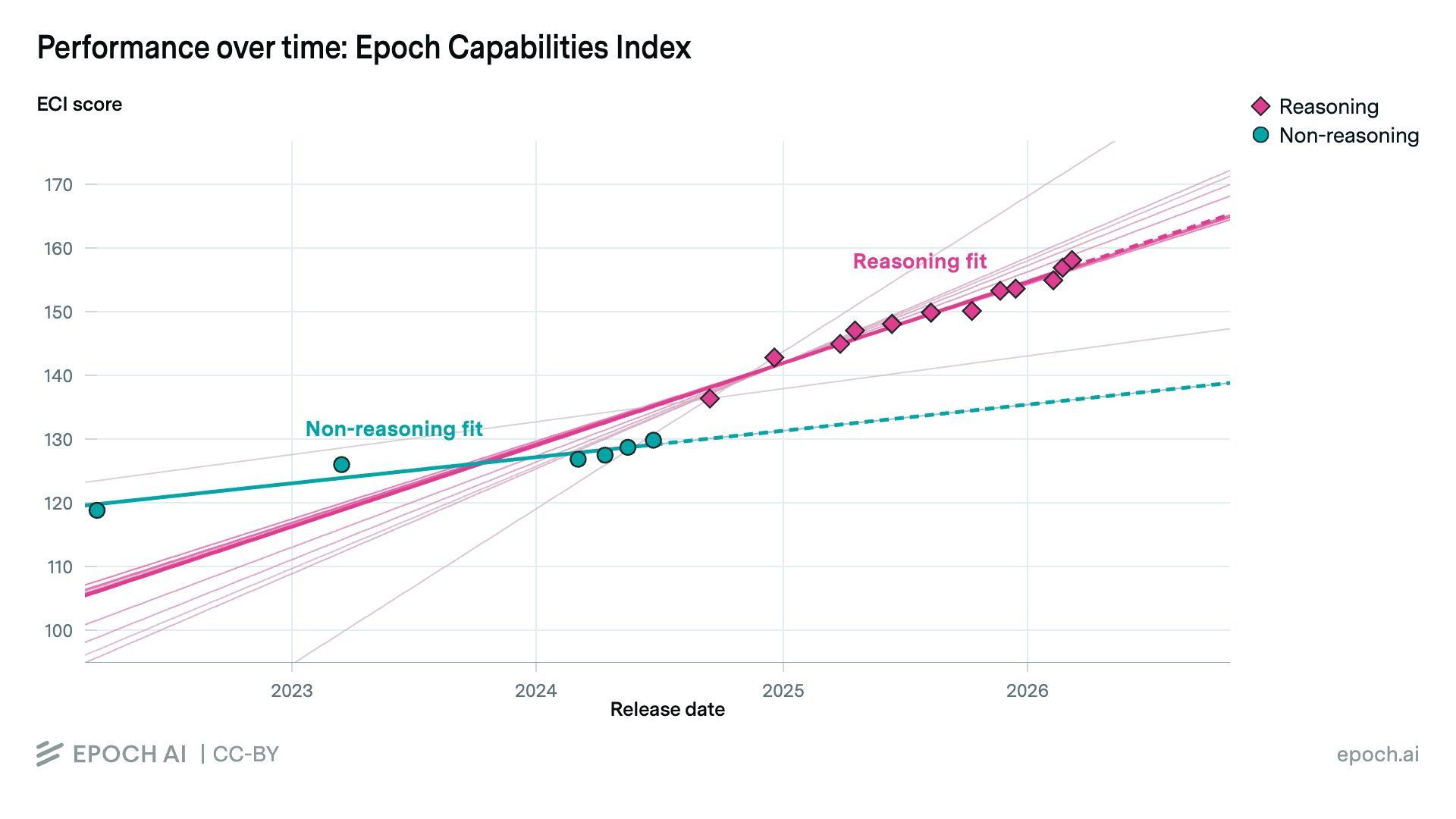

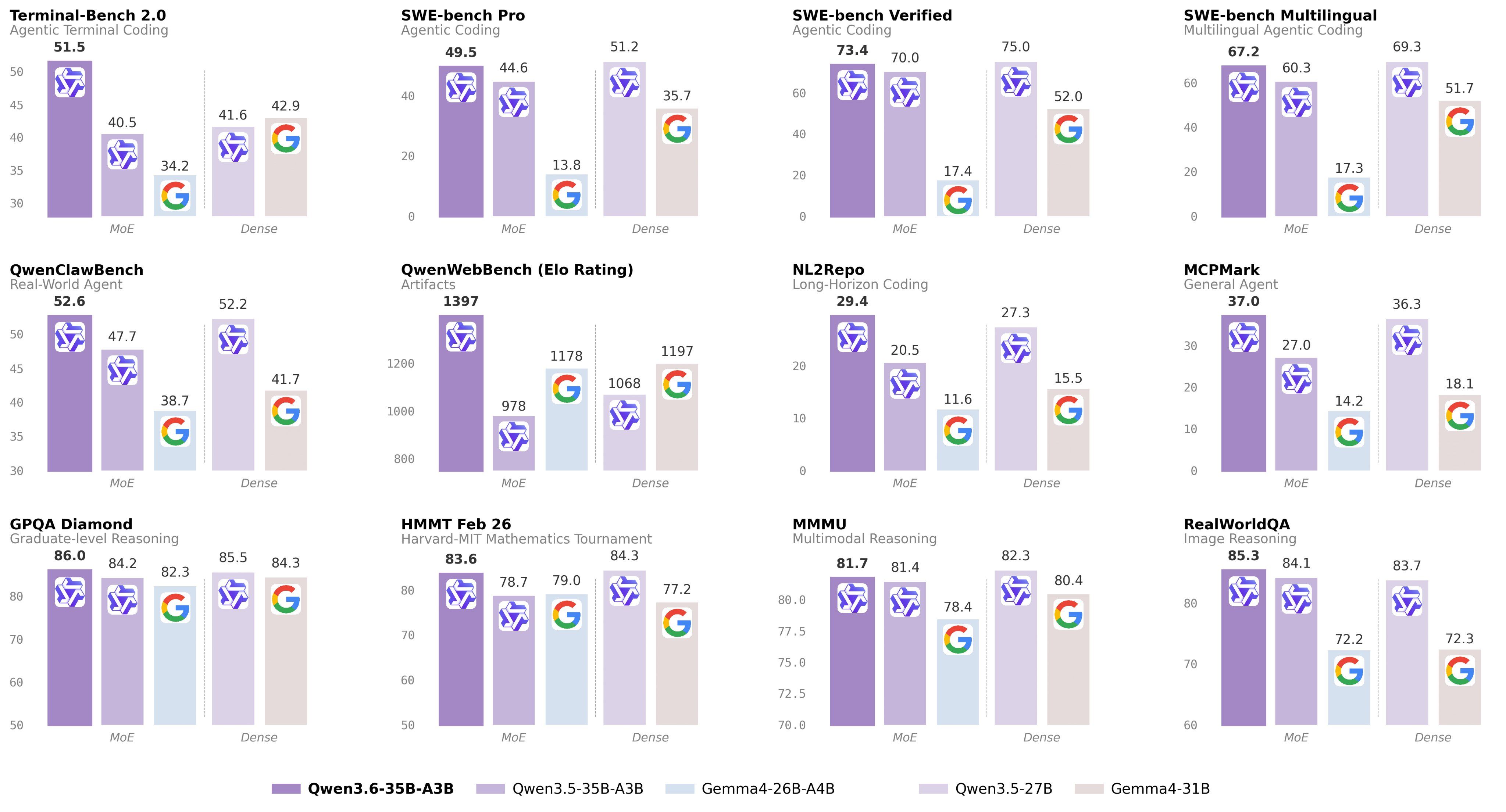

As you can see in the image above, whether AIs are progressing depends entirely on the model type you’re measuring. Reasoning models, which think for longer on a task, are clearly making progress, while models without that additional runtime computation aren’t.

I can already tell you this graph is far more important than it seems, because it clearly separates the experience of the small, single-digit percentage of people who use Thinking models from that of the rest of society.

I can even go so far as to say that this graph alone explains the gap between people who think AI is stagnating and those who don’t.

I’m tired of hearing people on the ChatGPT free tier telling me AI feels stagnant. Yes, John, I do believe you when you say ChatGPT, with no thinking budget, doesn’t give you a better or unique cake recipe now than it did two years ago.

But that is no way to measure progress, my friend. How about you try talking to ChatGPT Pro and tell me if AI has progressed? This is what I believe is the biggest gap in AI today: those who use reasoning models and those who don’t.

AIs are still getting better, with or without a harness. It’s just that you aren’t aware of the improvements because you aren’t using the models that show them.

This also clearly opens the question of the economic gap between those who can afford the top models and those who can’t.

But why is this the case? It’s pretty simple, actually. Just like the more time you’re given to solve a maths problem, the more likely you are to solve it, models benefit from the exact same principle.

The gap in compute budget between the two kinds of models is astonishing, which obviously leads to better results. In my honest opinion, inference-time compute is the most important predictor of performance today across models, and yet most people haven’t even experienced those benefits.

TheWhiteBox’s takeaway:

It seems that the reason models felt stagnant was, once again, a compute issue.

We’ve been stuck with the same hardware for several years, and until Blackwells and TPUv7s hit the market, we couldn’t see what awaited us there. Those models are Mythos and Spud, and how good they are will tell us whether AI is truly in harness-only territory or whether the technology still has legs to become smarter.

I choose to believe the second, but I say ‘believe’ for a reason: until I’ve tested these models myself, I will not claim victory.

MODELS

Anthropic Releases Opus 4.7, & it’s a Mixed Bag

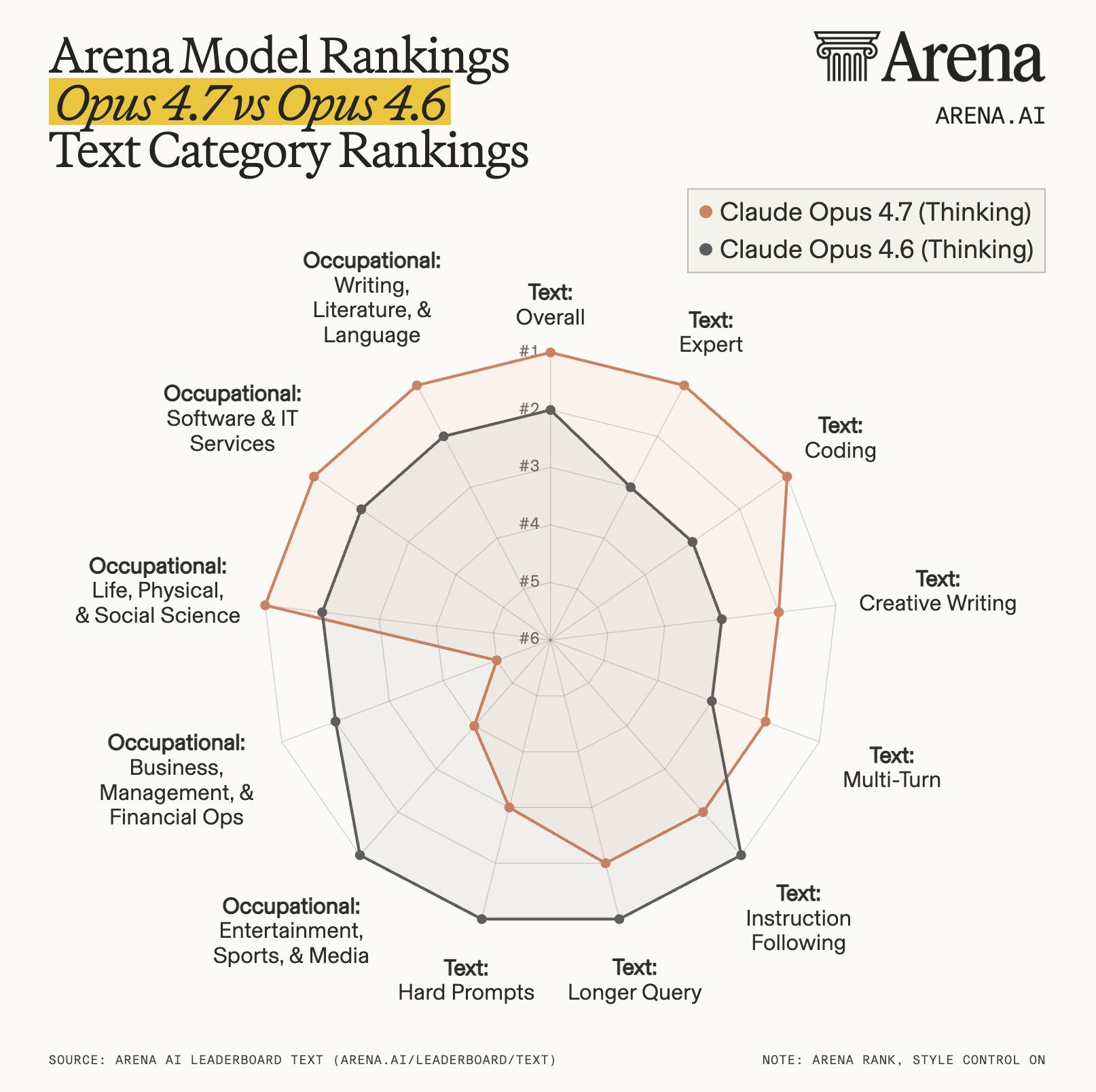

Out of the blue, Anthropic has released a new model, Opus 4.7, but the results have not been great. At least, the overall reception from developers has been quite bad.

The model behaves radically differently from Opus 4.6, thinks less on average per task (meaning it's dropped its inference-time compute and its ability to think for longer on a task), and, perhaps most concerningly, is surprisingly resistant to some instructions, to the point it just ignores your request or decides to simply not do it.

In layman’s terms, some users have described it as “lazy”, “condescending”, and a myriad of other negative adjectives. And, as shown by LM Arena, the model has indeed regressed considerably in many areas, particularly in instruction-following.

Anthropic, what happened?

TheWhiteBox’s takeaway:

There seem to be two things to blame here.

The first is the tokenizer. Weirdly, this model ships with a new one. AI models have external components called tokenizers that take in the data and “prepare it for the model” by tokenizing it.

In the case of text, that means taking the sequence and breaking it into chunks (usually a few characters, sometimes entire words). For images, it splits them into patches of pixels; you get the gist.

A tokenizer is usually chosen before a model is trained, because the model will learn based on how the tokenizer behaves. It affects its behavior. Thus, this suggests Opus 4.7 could be a totally new base model, or a weird reshuffling of the tokenizer midway through training, which seems unlikely.

An interesting insight is that this tokenizer is less compressive, meaning each token covers less text on average. That means, for the same output, the model has to generate more tokens, which increases your costs because AI is charged per token, so Anthropic might be trying to squeeze you.
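To make the cost argument concrete, here is a minimal sketch in Python. It is not Anthropic’s tokenizer: `chunk_tokenize` is a hypothetical stand-in that cuts text into fixed-size character chunks, and the price per million tokens is a made-up placeholder. It only illustrates that, at a flat per-token price, a tokenizer whose tokens cover less text produces a bigger bill for the same content.

```python
def chunk_tokenize(text: str, chars_per_token: int) -> list[str]:
    """Toy tokenizer (hypothetical): cut text into fixed-size character chunks."""
    return [text[i:i + chars_per_token] for i in range(0, len(text), chars_per_token)]


def cost_usd(num_tokens: int, usd_per_million_tokens: float) -> float:
    """Bill for a number of tokens at a flat per-token price."""
    return num_tokens * usd_per_million_tokens / 1_000_000


# Placeholder inputs, chosen only for illustration.
text = "the quick brown fox jumps over the lazy dog " * 1000
PRICE_PER_MILLION = 25.0  # hypothetical price, not a real price list

coarse = chunk_tokenize(text, chars_per_token=4)  # each token covers more text
fine = chunk_tokenize(text, chars_per_token=3)    # each token covers less text

coarse_cost = cost_usd(len(coarse), PRICE_PER_MILLION)
fine_cost = cost_usd(len(fine), PRICE_PER_MILLION)

print(f"coarse tokenizer: {len(coarse)} tokens, ${coarse_cost:.4f}")
print(f"fine tokenizer:   {len(fine)} tokens, ${fine_cost:.4f}")
print(f"cost ratio: {fine_cost / coarse_cost:.2f}x")
```

Real tokenizers use learned vocabularies rather than fixed-size chunks, so the actual ratio for any given model would have to be measured on real text.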

Besides, it doesn’t seem like a good reason to change a tokenizer, unless… It’s something much more obvious: a Mythos distillation.

If you’ve read my recent newsletters, you know Mythos’s biggest issue is not its cybersecurity threat to the world: it’s that it cannot be served. It’s too expensive.

Thus, Opus 4.7 might just be Anthropic’s first attempt at getting some sort of return from this Mythos model, one that didn’t quite land.

The other big thing here is inference-time compute. It might be the case that Anthropic is so desperate to lower compute costs that not only has it reduced average compute budgets per request, but it has even baked in a refusal behavior. In other words, Anthropic may have baked in a natural resistance from the model to deploy larger compute budgets. And I’m definitely not the only one suggesting this.

Anthropic is losing the mandate of heaven with their latest behavior and sloppy releases.

MODELS

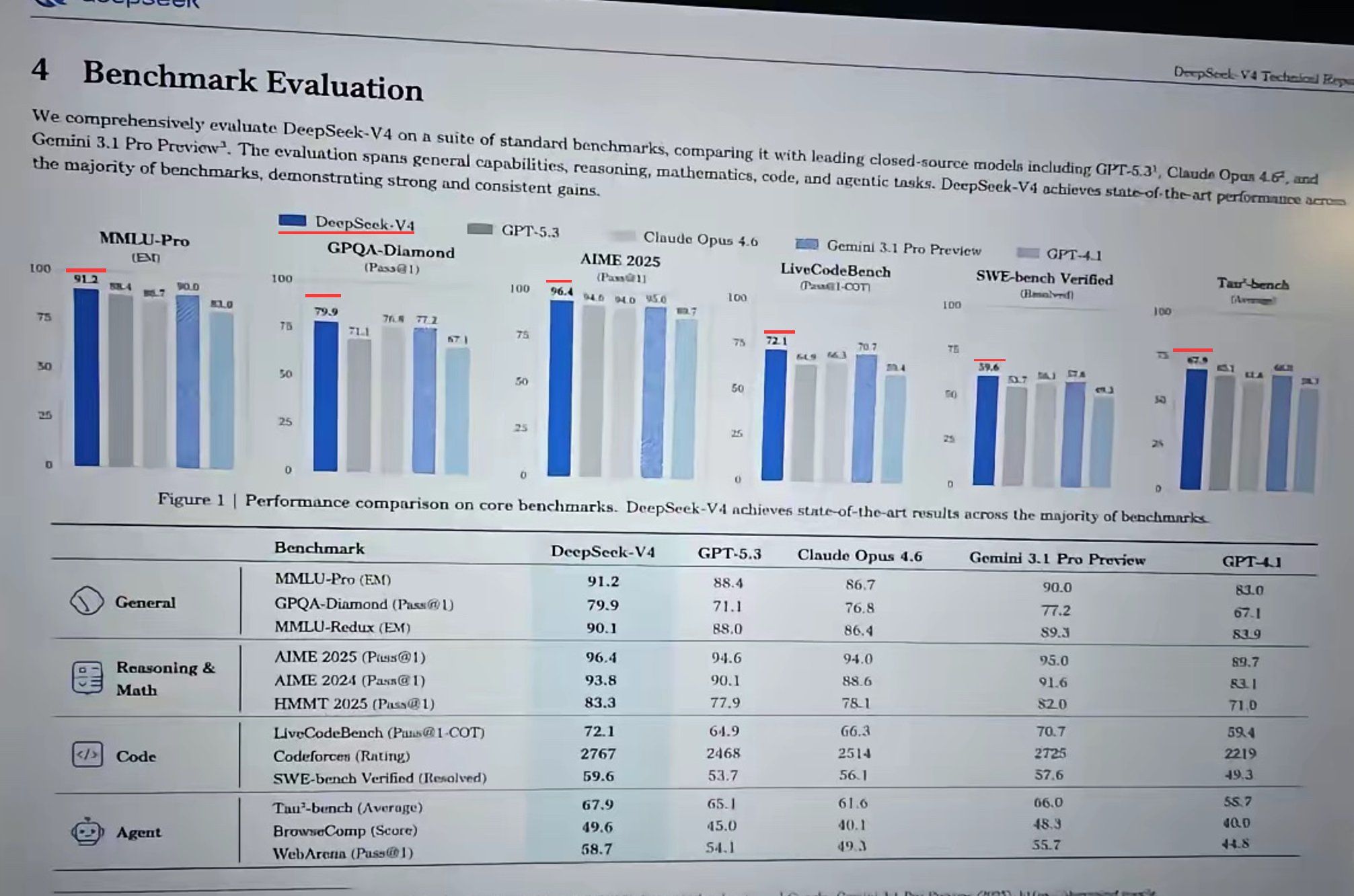

It Seems We Are Finally Getting DeepSeek v4

Although we can’t fully confirm yet, the number of Chinese analysts leaking the imminent release of DeepSeek’s new frontier model, V4, is quite large, to the point that it gives me enough hope that the time has finally come.

Many people even acknowledge having tested the model.

As you can see in the thumbnail (which could be totally AI-generated, by the way), the model seems to sit at the frontier of released models (meaning it’s not a Mythos-level model, which would freak out everyone in the West), and seems to be particularly strong on agentic workloads (being used as an agent more than as a chatbot).

But without a doubt, the most relevant thing about this release is that, besides being only 3-6 months behind US models, it could be the first top Chinese model that is fully Chinese.

But what do I mean by that?

Simply put, it could be the first top Chinese model trained on Huawei Ascend chips and using Huawei’s accelerated training stack CANN. This is a thought that already scares people like Jensen Huang, NVIDIA’s CEO, who predicted this exact thing was about to happen (he called it a “really bad” scenario).

The idea that China is closing in on a fully verticalized AI supply chain is one most US national security experts would rather not entertain, as it’s something even the US can't claim. The US isn’t far off, but it still depends on ASML’s EUV lithography machines, TSMC’s advanced packaging (although it does have Intel for this), and Chinese rare earth refinement. China, meanwhile, is on a path to having local EUV tools as soon as 2030, something the US is not attempting except maybe through Elon’s Terafab, and it also has advanced packaging capabilities.

However, it’s important to clarify the main advantage China has: power.

Unlike the US, which is severely power-constrained and thus forces NVIDIA and other players to treat performance/TCO as the only metric that matters (i.e., performance per total cost of ownership, aka performance per dollar and watt), China doesn’t care about that number; it has more than enough power.

This means they don’t have to go as small as US accelerators in terms of process nodes (transistor size, which does improve performance per watt), and can instead just build more chips. If NVIDIA’s GPU is three times more powerful than a Huawei Ascend chip, then China will produce three times more chips, because it has the power to feed such a humongous amount of silicon.
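The trade-off can be sketched with some back-of-the-envelope arithmetic. Every number below is a hypothetical placeholder (performance in arbitrary units, invented wattages), not a specification of any NVIDIA or Huawei part. The point is only that matching a target with weaker chips multiplies the chip count, and the total power bill grows unless each weaker chip draws proportionally less.

```python
import math


def chips_needed(target_perf: float, perf_per_chip: float) -> int:
    """Number of chips required to reach a target aggregate performance."""
    return math.ceil(target_perf / perf_per_chip)


def fleet_power_mw(num_chips: int, watts_per_chip: float) -> float:
    """Total power draw of the fleet, in megawatts."""
    return num_chips * watts_per_chip / 1_000_000


# Hypothetical figures for illustration only.
TARGET_PERF = 300_000.0                      # arbitrary performance units
strong_chip = {"perf": 3.0, "watts": 1000.0}  # 3x the per-chip performance
weak_chip = {"perf": 1.0, "watts": 600.0}     # worse performance per watt

strong_n = chips_needed(TARGET_PERF, strong_chip["perf"])
weak_n = chips_needed(TARGET_PERF, weak_chip["perf"])

strong_mw = fleet_power_mw(strong_n, strong_chip["watts"])
weak_mw = fleet_power_mw(weak_n, weak_chip["watts"])

print(f"strong chips: {strong_n:,} chips, {strong_mw:.0f} MW")
print(f"weak chips:   {weak_n:,} chips, {weak_mw:.0f} MW")
```

Under these made-up numbers, the weaker fleet needs three times the chips and noticeably more power, which is only a viable strategy if power is not the binding constraint.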

TheWhiteBox’s takeaway:

For now, though, the compute gap is massive.

Just the five Hyperscalers (Oracle, Meta, Microsoft, Google, and Amazon) own 60% of the world’s accelerated compute. China is ramping up wafer starts (the metric for measuring a country’s chip production capacity) massively, but it’s still producing at a much slower pace than the US and its allies.

Still, a frontier-ish model trained on Chinese chips is a massive success for China’s ambitions, and a clear message to the world that they won’t be stopped, no matter how hard the US tries.

On a final note, DeepSeek is reportedly raising $300 million at a $10 billion valuation.

OPEN-SOURCE

Alibaba Releases Qwen 3.6, and it’s pretty great

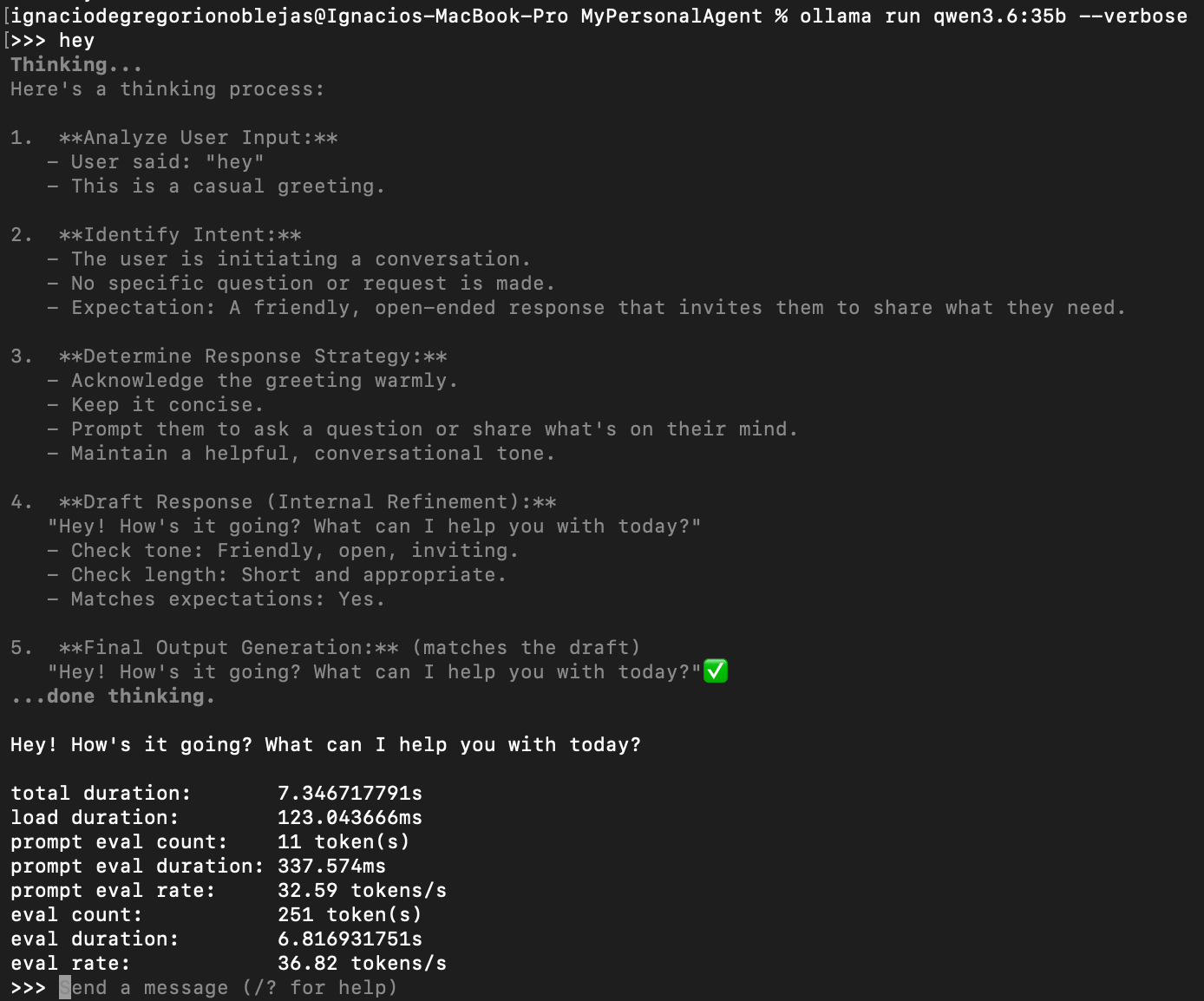

Continuing with China, Alibaba has released its next local model, Qwen 3.6-35B-A3B, with 35 billion parameters, of which 3 billion are active (meaning only roughly 8.6% of the model activates for any given prediction), making it much faster.

The model particularly shines in agentic and multimodal workflows. Focusing on the former, getting local models to be good enough for agentic workloads would be an absolute game-changer for anyone wanting to run AI at scale without going bankrupt.

This is just 23 GB, so beefy computers can definitely run it. On my M3 Max with 128 GB of memory (more than enough for both the model and the cache of very long traces) and 546 GB/s of memory bandwidth (the real bottleneck in inference), the model responds at above 30 tokens/second.

This is batch 1, of course.
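For intuition on why memory bandwidth is the bottleneck at batch 1, here is a rough sketch using the figures quoted above (35B total parameters, 3B active, a 23 GB file, 546 GB/s). It rests on a simplifying assumption that is mine, not Alibaba’s or Apple’s: each generated token requires reading the active weights from memory exactly once, and nothing else (no KV cache reads, no compute or scheduling overhead). That makes the result a loose upper bound, not a prediction.

```python
def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of the model's parameters used for a single prediction."""
    return active_params_b / total_params_b


def decode_upper_bound_tps(bandwidth_gb_s: float, model_size_gb: float,
                           active_frac: float) -> float:
    """Idealized tokens/second if decoding only had to stream the active weights."""
    gb_read_per_token = model_size_gb * active_frac
    return bandwidth_gb_s / gb_read_per_token


frac = active_fraction(active_params_b=3, total_params_b=35)
bound = decode_upper_bound_tps(bandwidth_gb_s=546, model_size_gb=23, active_frac=frac)

print(f"active fraction: {frac:.1%}")
print(f"idealized ceiling: {bound:.0f} tokens/second")
```

The ceiling comes out at roughly 277 tokens/second, far above the 30+ observed. The gap is expected: the estimate ignores cache reads over long traces, runtime overhead, and the fact that real software rarely saturates peak bandwidth.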

This is clearly insufficient for interactive, real-time conversations, but it’s more than enough when you want to use these models asynchronously, as I intend to: running them 24/7 on a Mac Mini or on a hosting service like Hostinger, triggered via cron jobs and similar, executing jobs in parallel whose responses you don’t need to see (at least not in real time), and for a fraction of a fraction of the cost (as you’re only paying for the energy your hardware consumes).

Personally, I aspire to have agents running locally 24/7 without paying the costs of what would be millions of generated tokens today, which would cost from a few dozen dollars to hundreds, depending on the model.

Seeing as the era of subsidies is ending, I see this as a non-negotiable future for anyone truly wanting to use AI at scale: either you own the AI, or you won’t be able to (unless you’re filthy rich).

And don’t get me started on taxes; they are going to tax these products to a serious extent once they truly start taking a toll on the labor market (not because it will lead to massive displacement, but because it will lead to massive hiring freezes).

IPOs

How Big is this Investment Cycle Really?

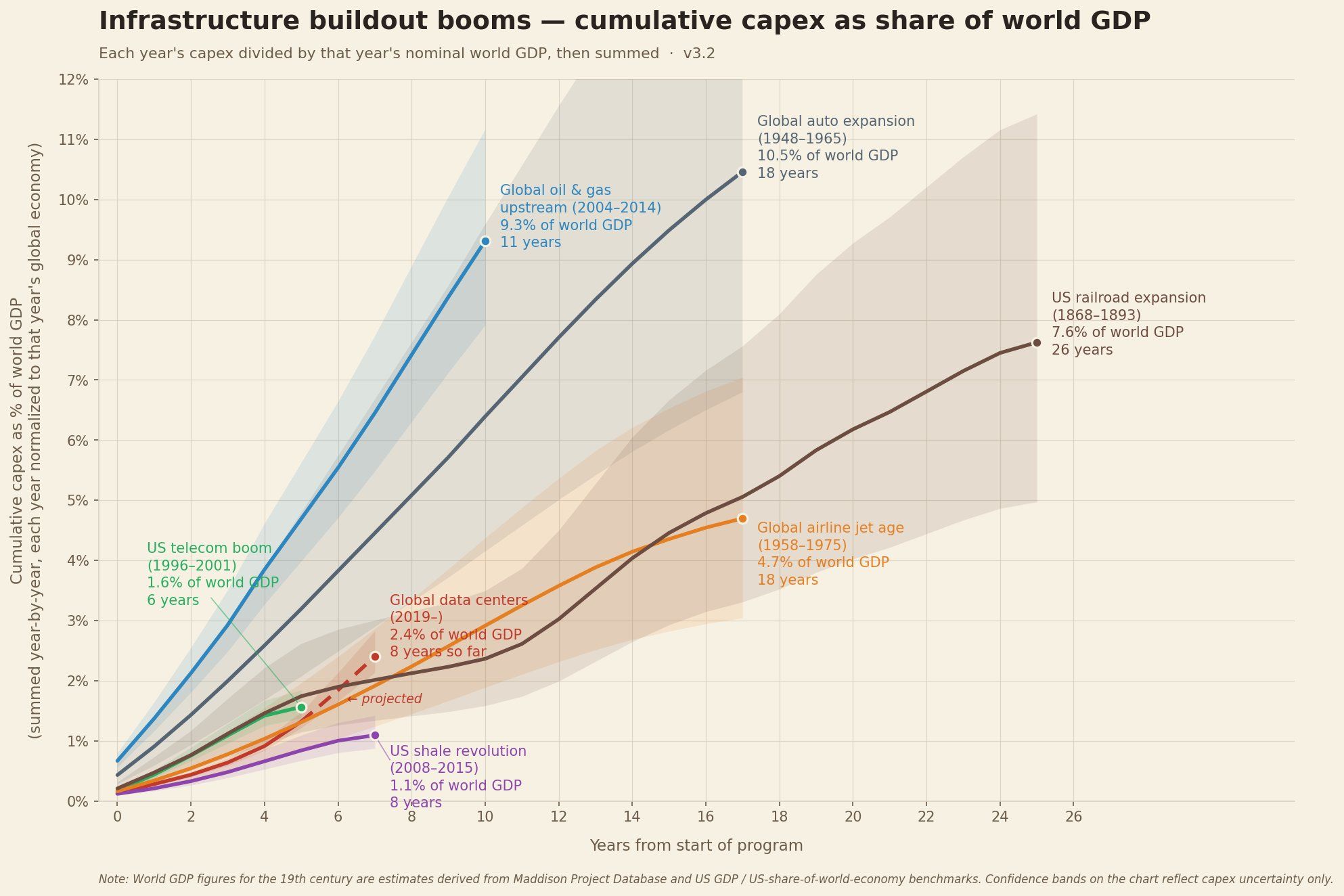

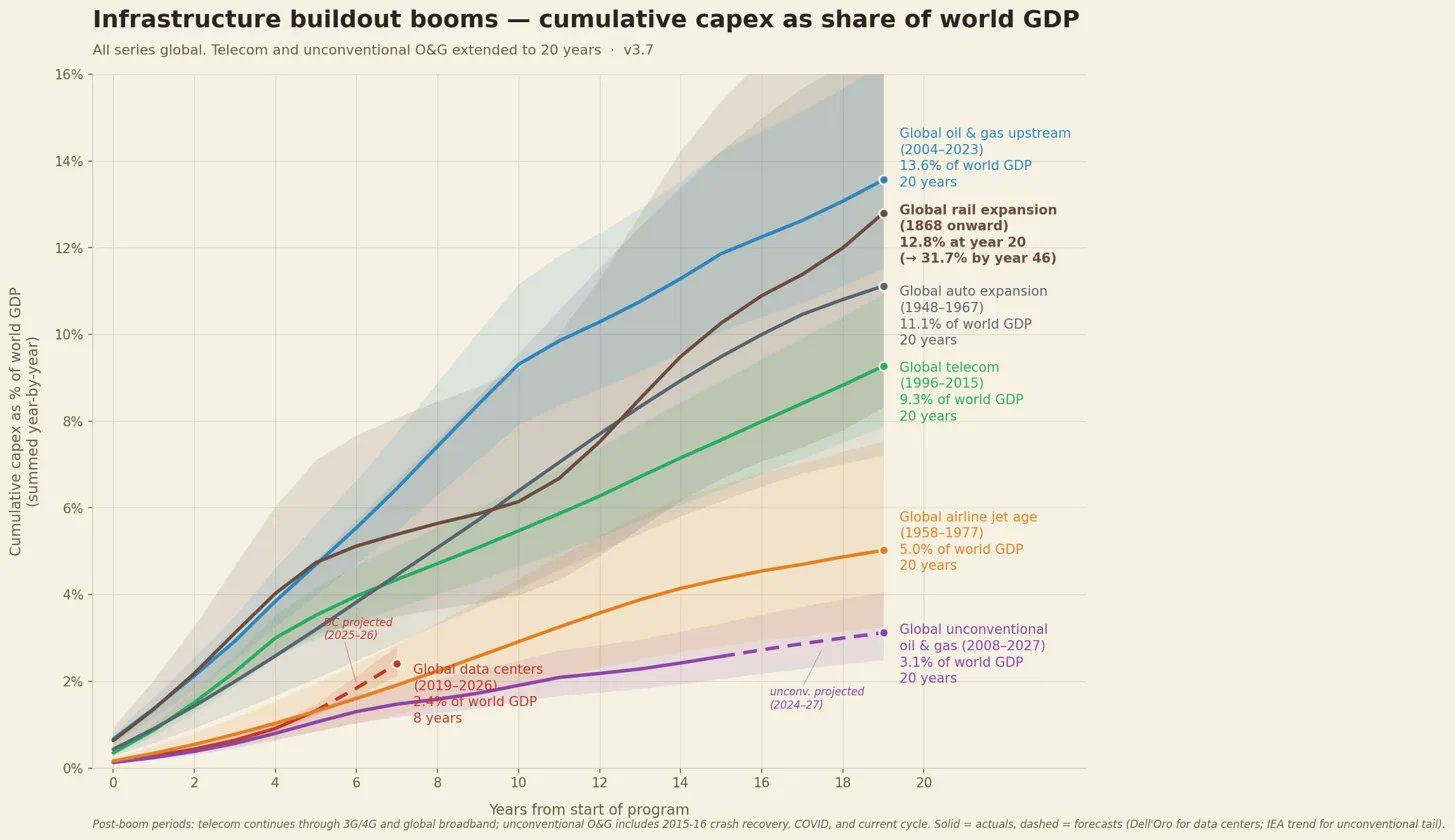

The entire world looks in utter shock and despair at the numbers floating around regarding AI’s huge capital investments, which are undeniably enormous.

People instantly assume that this is absolutely unprecedented. But the reality is that it is not; other investment super cycles completely outclass the current one in relative magnitude.

In fact, you have to squint to find AI in the picture above: it’s in red, at the bottom left.

The key is that people love to talk about nominal values but forget that what really matters is the context in which the investment was made. That requires two things: adjusting for inflation and, perhaps even more importantly, framing the investment as a percentage of world GDP.

So while AI is clearly the one with the highest nominal values, this is also after years of inflation.

When you factor in world GDP, investment cycles like the railroad, auto, and oil expansions over the last few centuries were much, much larger.
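The normalization is simple enough to sketch. The figures below are invented placeholders, not historical data; they only show how a cycle with a much larger nominal value can still be far smaller once you divide by the size of the economy at the time. A convenient side effect is that, when both investment and GDP are measured in the same year’s money, inflation cancels out of the ratio.

```python
def share_of_gdp_pct(investment: float, gdp: float) -> float:
    """Investment as a percentage of GDP, both in the same year's currency."""
    return investment / gdp * 100


# Hypothetical cycles (billions, in each period's own currency).
modern_cycle = {"investment": 500.0, "world_gdp": 100_000.0}
historic_cycle = {"investment": 5.0, "world_gdp": 100.0}

modern_share = share_of_gdp_pct(modern_cycle["investment"], modern_cycle["world_gdp"])
historic_share = share_of_gdp_pct(historic_cycle["investment"], historic_cycle["world_gdp"])

print(f"modern cycle:   {modern_share:.1f}% of GDP")
print(f"historic cycle: {historic_share:.1f}% of GDP")
```

In this toy comparison the modern cycle is 100 times larger in nominal terms yet ten times smaller as a share of GDP.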

But does this kill all risk?

At the end of the day, it’s mostly about whether this huge investment cycle leads to something of value.

AI certainly meets that criterion, but its diffusion is going much slower than you would have expected if you listened to {insert your favorite AI charlatan}.

POLITICS

Anthropic and the White House, Destined to Make Up

As explained by this Financial Times article, Anthropic is in discussions to give parts of the US government access to its new Mythos model, including agencies such as the Treasury, even though the company has been in conflict with the Pentagon over military-use guardrails and was previously treated as a supply-chain risk by the Defense Department.

The core tension is that Mythos is treated simultaneously as a national security asset and a cyber risk.

Treasury and other officials want access because the model may help find software vulnerabilities and strengthen defenses, while policymakers and banks are also worried that the same capabilities could make offensive cyberattacks easier.

TheWhiteBox’s takeaway:

Beyond the fact that Anthropic and the White House are destined for reconciliation, my takeaway here has nothing to do with it.

Instead, it’s this one sentence here:

“Multiple people with knowledge of the matter suggested Anthropic was holding back from a wider release until it could reliably serve the model to customers.”

Oh, the sweet taste of vindication. As I’ve been pretty outspoken about lately, Anthropic’s non-release of Mythos was a lot less about cybersecurity armageddon and a lot more about “how do I serve this thing?”

HARDWARE

Cursor Purchases Compute from xAI

In one of the most surprising moves by a top AI Lab recently, xAI has agreed to provide compute to Cursor, especially for training their upcoming coding model, Composer 2.5.

TheWhiteBox’s takeaway:

Considering most AI Labs are not exactly swimming in compute, it’s very surprising to see one of them agreeing to rent compute to another Lab. This signals that xAI is the only Lab with more compute than demand, which is quite tragic, if you ask me.

I think this is the first step in xAI’s path to acquiring Cursor, which is close to raising a new round at $50 billion. That means this potential purchase would have to be at least $75 billion to make sense for investors in this last round.

VECTORIZATION

Quiver’s Arrow 1.1 and Max

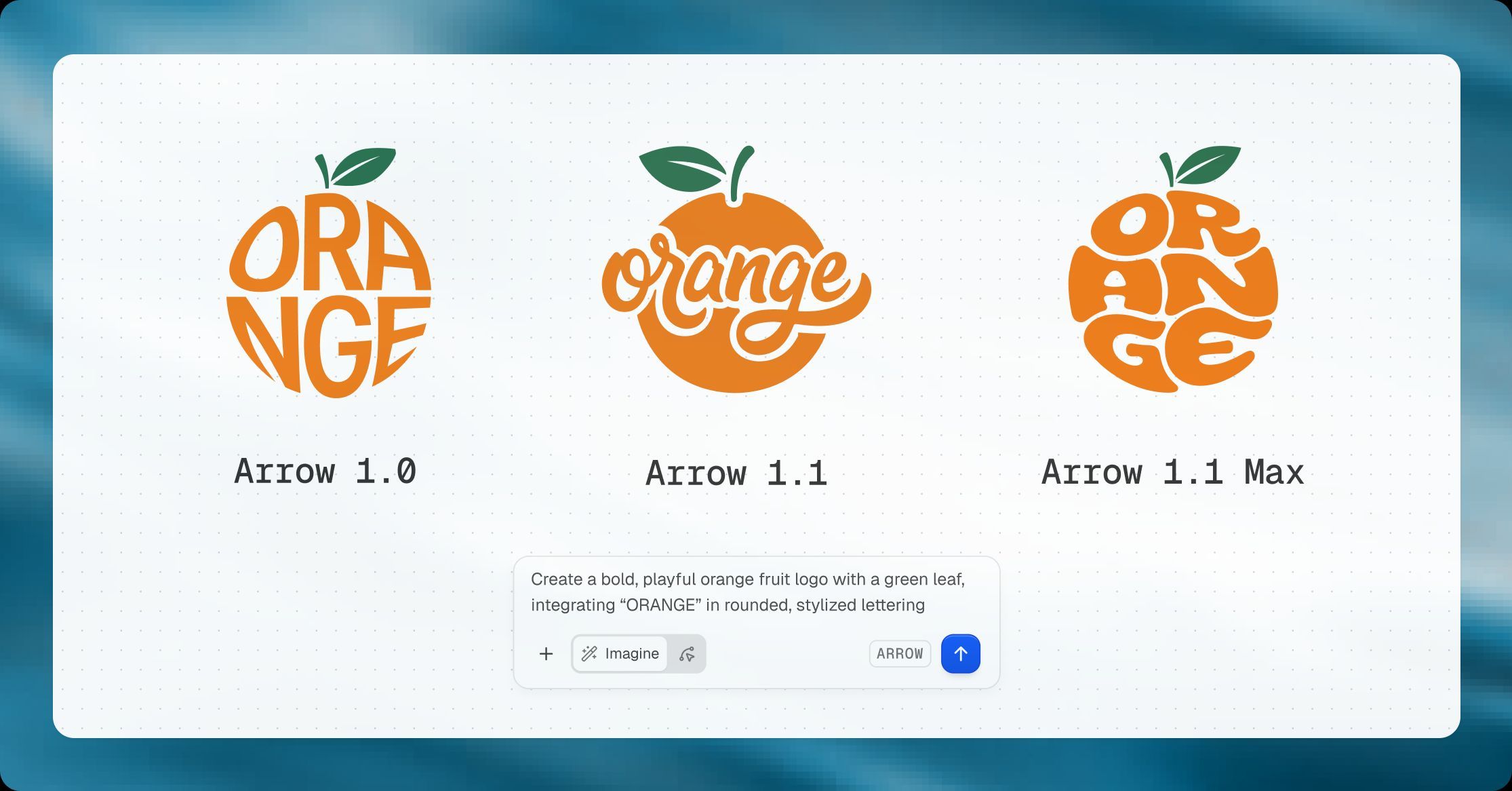

Quiver AI’s SVG creation tool is one of the coolest uses of AI today. And now, they’ve released a new version of their proprietary models: Arrow.

It’s cool not only because it solves a real problem (SVGs are crisp at any resolution and editable, as you can tweak colors, strokes, and paths in design tools or code), but also because it shows you don’t need billions of dollars to create a state-of-the-art AI model (this company is minute relative to the AI Labs it’s outclassing).

You just need depth.

For years, since the arrival of natural language foundation models, known as Large Language Models (LLMs), the AI industry has focused on general-purpose models, models that can do everything. The issue is that this has always come at a cost of depth; models are good at many things, truly great at none.

Specialized AI models, like Quiver AI’s Arrow models, can’t both write Shakespearean poems and produce perfect SVG images. It’s either one or the other.

The advantage is that they become incredibly good at that one task, giving us models like this one that blow frontier models completely out of the water.

TheWhiteBox’s takeaway:

Deep models are the future of enterprise. I have zero doubts about it. In the enterprise, especially for automation, you don’t want your customer support agent to be good at sales; you want it to be great at the one thing it’s meant to do.

I believe general-purpose models, except for very general cases like coding, lack the nuance to be truly great at any given task.

For that reason, as companies become more sophisticated, they’ll start training their own models or fine-tuning open-source models to the task, no longer requiring OpenAI or Anthropic models.

SCIENCE

OpenAI’s model for science, Rosalind

OpenAI has released GPT-Rosalind, a purpose-built model for life sciences research, focused on biology, drug discovery, and translational medicine. OpenAI says it is optimized for scientific workflows and offers stronger reasoning across chemistry, protein engineering, and genomics.

The pitch is that it helps researchers with evidence synthesis, hypothesis generation, experimental planning, database querying, and reading new scientific papers, rather than acting as a general chatbot repackaged for pharma.

For now, access is limited. GPT-Rosalind is in research preview and available only to eligible institutions and selected users through OpenAI’s trusted-access program, across ChatGPT, Codex, and the API. OpenAI also launched a free Life Sciences plugin for Codex that connects to more than 50 scientific tools and data sources.

TheWhiteBox’s takeaway:

Depth. Specialization. Two words that this industry ignored for the longest time are becoming instrumental to progress.

Reinforcement Learning, the training method that teaches AI models to explore and find new solutions, is tremendously spiky, meaning models trained under that regime become incredibly good at some stuff at the expense of losing capabilities elsewhere. They become, in a way, narrower.

And while this is bad news for those who believed AGI would be a god model, not a plethora of models, it’s just how things were inevitably going to end up.

For companies like OpenAI, which need to start making money from enterprises, going deep and specialized is the only way to go, and thus, they are taking decisive steps in that direction.

CODING

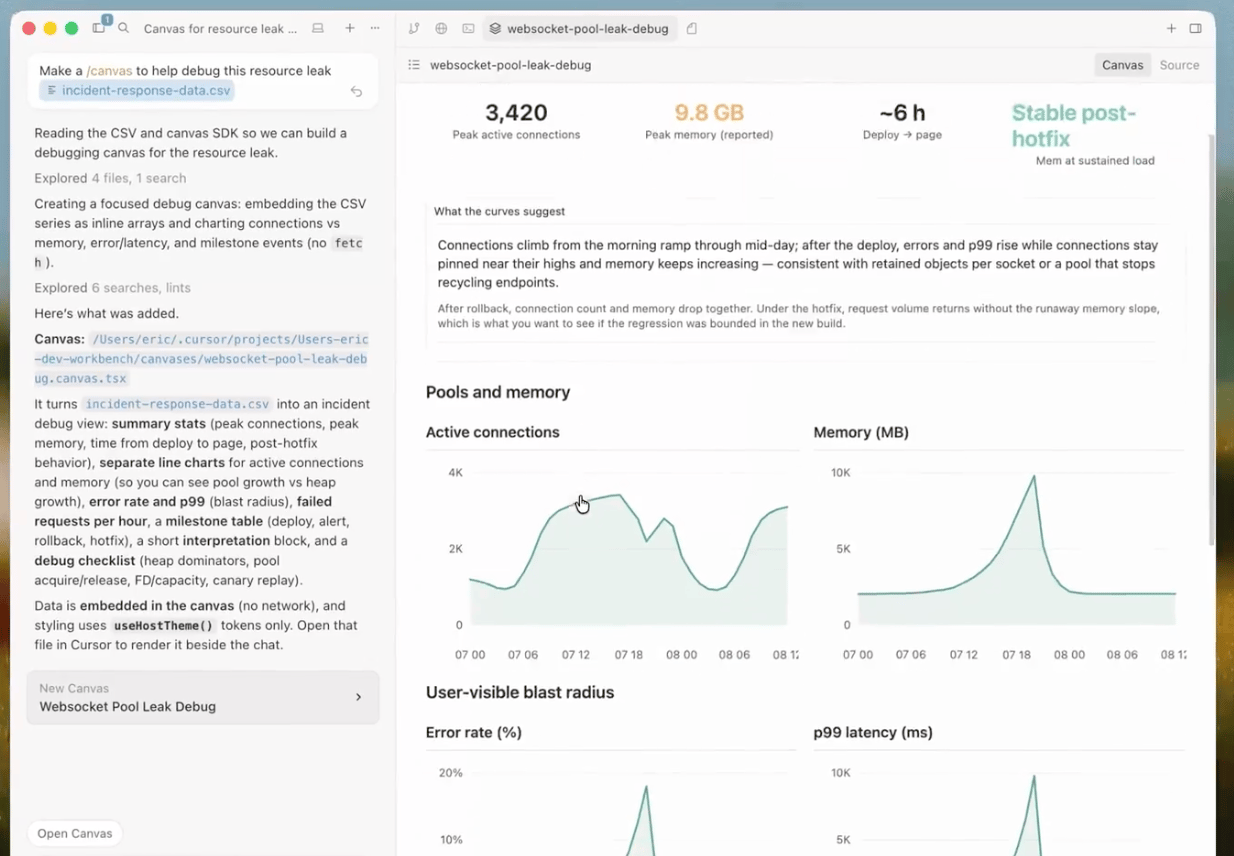

Cursor’s Canvas

Cursor has released Canvases in Cursor 3.1. The idea is that instead of only replying in chat or markdown, the agent can now generate interactive visual interfaces such as dashboards, charts, diagrams, tables, and review UIs that live as persistent artifacts inside Cursor’s Agents window.

TheWhiteBox’s takeaway:

Cursor frames this as a way to increase “information bandwidth” in human-agent collaboration. And that’s exactly what it is and what makes it so powerful.

It’s one of those features that show you what the future of software looks like. The agents do all the work, but they need to be able to expose the results in a “human-digestible” way so that humans can truly serve as guides and managers of these powerful entities.

Nobody can be a good agent manager reading markdowns all day; you need to visualize data in the best way possible, and I predict software is going to shift massively toward this idea: fully agentic (AI does the work) with Canvas-like dashboards for the user. This will apply to any software, from Salesforce to Jira.

Closing Thoughts

Everywhere you look, you see constraints. AI is truly running into a massive wall of insufficient compute, and it’s reaching the point of affecting products which, in some cases like Claude, are noticeably worse than they were weeks ago. That’s the hard truth of AI:

Now more than ever, your compute is your moat. Your lack thereof is your doom.

Another lesson is that neither you nor your company should be dependent on AI Labs, or at risk of being shut down by them when they reprioritize compute away from you or suddenly decide you’re simply not good enough to be served.

What happens when you pull the plug on companies that have gotten used to using you every day, and they suddenly can’t, or have to pay you 4 times more?

Not your AI, not your future. Sadly, as long as the compute crunch persists, these Labs simply aren’t reliable partners.

And now, one of the most important upcoming decisions: Cerebras, the next AI IPO, is upon us. Is it a yes or a no?

