THEWHITEBOX

Your AI Personal Secretary

Greetings, my fellow readers. After months of restraining myself, I’ve finally decided to try the new agent harnesses: OpenClaw-esque products that, in theory, allow you to create your own personal virtual assistant.

They are not free of issues, and there’s plenty left to improve, but they work well enough that ignoring them would be a disservice to yourself.

Thus, today, I’m going to explain:

How these agent harnesses, skills, and other crucial terms you need to be familiar with actually work, and why they are, in fact, some of the simplest software ever built (don’t get me wrong, being simple is the feature),

How to use them (and how not to), covering both my way, which requires some technical savvy, and the easy, you-can’t-go-wrong way. Of course, I’ll explain why I chose to do it my way.

And what this tells us about the future of software (spoiler: you are going to understand why software companies are really crashing).

By the end, you’ll have a solid intuition for what this new form factor everyone is going crazy over is about. You’ll also learn what agents truly are; no jargon, no bloat, just what they are and nothing more.

And I’ll also show you how I’m automating my life using these agents; in particular, how I use Telegram to talk to my own AI and have it take care of the things my schedule prevents me from doing myself.

Let’s dive in.

It’s all Context Engineering

We’ve been hearing about this so-called agentic revolution for quite some time. You probably have, too.

And as I already explained in a recent post, agents have finally hit that point: they work. Kind of. But you know how this works; in two months’ time, I won’t be adding ‘kind of’ anymore, so I suggest you start paying attention today.

And this new page in the AI story has been written by a project called OpenClaw (although it’s not the one I’m using, as we’ll see later).

The Simple Agent

Agents are one of those things that people make look unbelievably complex despite being, in essence, outrageously simple.

It’s all marketing for what’s actually a simple runtime prompt modification. So, let me demystify agents for you.

Our most powerful AIs, be that OpenAI’s GPT-5.3 Codex, Anthropic’s Claude Opus 4.6, or the soon-to-be-released DeepSeek v4 and GPT-5.4 (both of which I expect to be incredible), are all simple, stateless sequence-to-sequence machines.

But what does that mean? Well, they take in an input sequence, normally text, and return a continuation to that sequence. They are mathematical functions (literally) that predict ‘what comes next’ in a sequence.

Source: Nano Banana 2

By themselves, they don’t have a persistent state, meaning every interaction is, by nature, new; they don’t remember previous interactions. Think of it this way: every time you talk to an AI model, it’s as if you were meeting it for the first time.

They are like Dory in Finding Nemo, an entity that lives only in the present and will “forget” all about you the moment the interaction ends. Everything. It lives inside the sequence (your current conversation), and all else is irrelevant.
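To make “stateless” concrete, here is a toy sketch in Python. `toy_model` is a hypothetical stand-in for a real LLM (it only pattern-matches on its input), but it shares the key property: it is a pure function of the text it receives. The only way it “remembers” your name is if the earlier messages are pasted back into the sequence, which is what chat apps do behind the scenes by resending the conversation on every turn.

```python
def toy_model(sequence: str) -> str:
    """Hypothetical stand-in for an LLM: a pure function of its input text.

    It keeps no state between calls; whatever it 'knows' about the user
    must be present in `sequence` itself.
    """
    for line in sequence.splitlines():
        if line.startswith("User: My name is "):
            name = line.removeprefix("User: My name is ").rstrip(".")
            return f"Your name is {name}."
    return "I don't know your name."


# Two independent calls: the second one knows nothing about the first.
first = toy_model("User: My name is Ana.")
second = toy_model("User: What's my name?")

# To fake a memory, the caller resends the whole conversation each time.
history = "User: My name is Ana.\nAssistant: Nice to meet you!\nUser: What's my name?"
with_history = toy_model(history)

print(second)        # I don't know your name.
print(with_history)  # Your name is Ana.
```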

Therefore, their state (or the lack thereof) is determined by two variables:

What the current context is (the information explicitly present in the sequence)

What the model’s internal knowledge provides as contextually relevant information

Using the Dory analogy again, Dory remembers her name is Dory, but can’t remember what she did four seconds ago.

The importance of each becomes apparent with a simple example.

Say you ask a model, “Who’s this Turkish guy playing on the Rockets?” The only way that model can answer correctly with “Alperen Sengun” is if it already knows the answer, because the contextual information (the sequence) is entirely insufficient for it to infer what to answer.

Therefore, each prediction is, in fact, a function of both context and memory, i.e., f(input context + model memory) = output.
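The same toy approach can sketch f(input context + model memory) = output. Here `MODEL_MEMORY` is a hypothetical dictionary playing the role of knowledge frozen at training time, and `predict` first checks whether the context already contains the answer. The contents and the lookup logic are illustrative assumptions, not how a Transformer actually stores facts.

```python
# Hypothetical "parametric memory": facts frozen into the model at training time.
MODEL_MEMORY = {
    "turkish guy playing on the rockets": "Alperen Sengun",
}


def predict(context: str, memory: dict[str, str]) -> str:
    """Toy f(input context + model memory) = output.

    1. If the context itself contains an explicit 'Answer:' line, use it.
    2. Otherwise, fall back on what the model memorized during training.
    3. If neither source helps, the model cannot answer correctly.
    """
    for line in context.splitlines():
        if line.startswith("Answer:"):
            return line.removeprefix("Answer:").strip()
    query = context.lower()
    for key, fact in memory.items():
        if key in query:
            return fact
    return "I don't know."


question = "Who's this Turkish guy playing on the Rockets?"
print(predict(question, MODEL_MEMORY))  # Alperen Sengun (from memory)
print(predict(question, {}))            # I don't know. (no memory, no context)
```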

In fact, the models behind products like ChatGPT, known as Transformers, are built from two types of layers stacked many times: attention, which processes the context, and MLPs, which largely store and retrieve the model’s memory. The architecture is that simple because the prediction equation is.

So, what is an agent? How does this stateless machine become an agent? Simply put, an agent just “adds” three things to this equation: memory, tools, and action.

And their biggest superpower is adapting to new context on the fly.

In-context learning, using new context on the fly

The secret behind the vast majority of new start-ups emerging in the industry that aren’t training their own models is no secret at all: in-context learning.

You see, when you have stateless machines that can extract information either from context or from their internal memory (which has a knowledge cutoff based on the time the model stopped training), you find yourself at a crossroads: how do I inject new knowledge?

This is a story for another day, but the obvious-yet-wrong first answer is retraining (fine-tuning) the model on that new information so it learns it. However, that is almost never a good option unless you really know what you’re doing, because you might actually make your model worse.

A much simpler solution is to add the context to the input. That is, upon receiving a user request, you perform some ‘context engineering’ to update the prompt sent to the AI with additional information that might be relevant to the prediction.

In fact, when incumbents talk about ‘hyper personalization’ and ‘memory’, creating models that remember you, what they are talking about is the most boring thing ever: taking the input the user sends to the model and adding new context on top to help the AI respond.

Using our Sengun example, suppose the model was trained before Alperen Sengun joined the Houston Rockets. That model cannot respond correctly, because it has neither the right context nor the right knowledge.

Instead, we can add a search tool that queries the internet for that information, extracts the relevant context (text explaining where Sengun plays now), and only then feeds both the context and the question to the AI model.
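A minimal sketch of that flow, assuming a hypothetical `web_search` function (a real system would call an actual search API; this one ranks a hard-coded list by shared words). The point is the order of operations: search, keep the most relevant snippet, then build a single prompt containing both the snippet and the question.

```python
import re

# Hypothetical stand-in for a real search API: a tiny hard-coded "index".
FAKE_INDEX = [
    "Alperen Sengun is a Turkish center who plays for the Houston Rockets.",
    "The Houston Rockets play their home games in Houston, Texas.",
]


def tokens(text: str) -> set[str]:
    """Lowercase words, ignoring punctuation (toy tokenizer)."""
    return set(re.findall(r"[a-z']+", text.lower()))


def web_search(query: str, top_k: int = 1) -> list[str]:
    """Rank documents by how many words they share with the query (toy retrieval)."""
    query_words = tokens(query)
    ranked = sorted(FAKE_INDEX, key=lambda doc: len(query_words & tokens(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str) -> str:
    """Context engineering: prepend retrieved snippets to the user's question."""
    snippets = web_search(question)
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Relevant information:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("Who's this Turkish guy playing on the Rockets?")
print(prompt)
```

The model receiving `prompt` is unchanged; it simply has the missing fact in its context now.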

This is a good moment to present one of the most common context engineering patterns you see these days, even amongst top AI Labs, to drive home what they mean by ‘memory’ here.

Upon receiving a request from you, the agentic system, which keeps a Markdown file with the user’s preferences, takes that memory.md file and adds it to the prompt the AI model actually receives, thereby becoming “personalized” to you.
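Here’s roughly what that looks like in code, as a sketch: the harness reads a `memory.md` file (the file name comes from the text; the prompt layout and the `build_personalized_prompt` helper are hypothetical) and places its contents above the user’s message before anything reaches the model. The model itself never changes.

```python
from pathlib import Path
import tempfile


def build_personalized_prompt(user_message: str, memory_file: Path) -> str:
    """Prepend the user's stored preferences (if any) to their message."""
    memory = memory_file.read_text(encoding="utf-8") if memory_file.exists() else ""
    return (
        "Here is what you know about the user:\n"
        f"{memory.strip()}\n\n"
        f"User message: {user_message}"
    )


# Demo with a throwaway directory standing in for the agent's workspace.
with tempfile.TemporaryDirectory() as workspace:
    memory_path = Path(workspace) / "memory.md"
    memory_path.write_text("- Prefers short answers\n- Works in AI\n", encoding="utf-8")
    prompt = build_personalized_prompt("Any plans for this weekend?", memory_path)

print(prompt)
```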

Don’t get me wrong, it’s still the stateless machine we described earlier. It still “lives in the moment” and, in reality, knows nothing about you.

Instead, the system is just providing, on the fly, the required context that grounds the model for you. It’s as if you had a friend who, before every single interaction with you, read a story about your life in front of you, and only then answered.

Source: Nano Banana 2

Which is to say, the model’s functioning has not changed at all; it’s still f(input) = output, but now we have modified the equation slightly to f(input + new context) = output.

And the model’s ability to effectively use this new context, despite it being “unknown” to it, is what’s called in-context learning (because it learns from the new context), and it is undoubtedly, to this day, Large Language Models’ (LLMs) greatest feature.

Source: Nano Banana 2

But agents also include another facet that has to be addressed: tool calling.

Tools and Actions

Besides having a harness that updates an AI’s context in real-time, agents’ most well-known ability is to take action on our behalf.

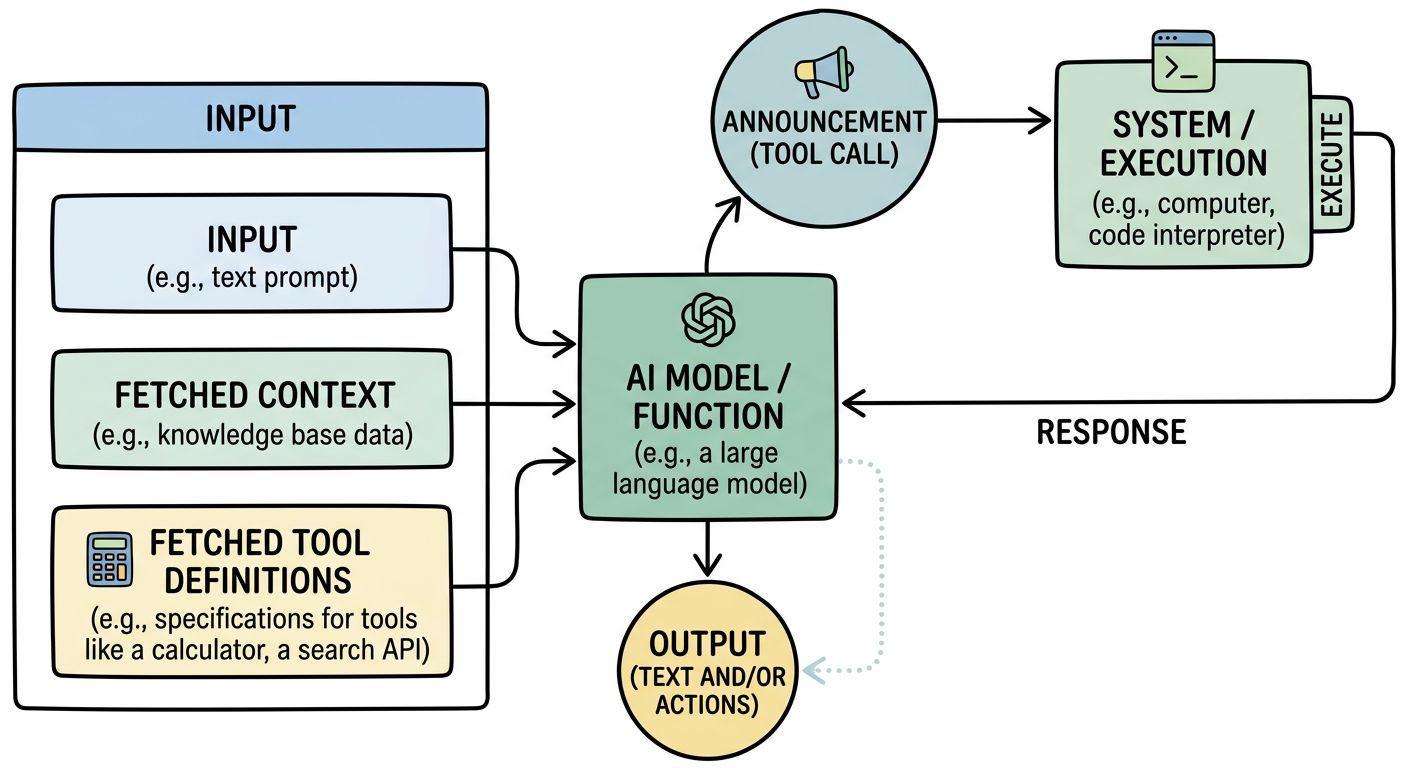

In essence, an agent is still just the model, a function that may (or may not) output actions based on a list of actionable tools we also expose to it. That is, the actual equation for agents is f(input + fetched context + fetched tool definitions) = text and/or tool calls.
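To see what “fetched tool definitions” means in practice, here’s a sketch of a harness assembling the model’s input. The JSON layout is a hypothetical, simplified format loosely modeled on how tool-calling APIs describe tools (a name, a description, and a parameter schema), and the `read_emails` tool is illustrative. The model only ever sees this text; it never touches the tool’s code.

```python
import json

# Hypothetical tool definition: what the model is told, not the code itself.
TOOLS = [
    {
        "name": "read_emails",
        "description": "Return the most recent emails in the user's inbox.",
        "parameters": {
            "type": "object",
            "properties": {"limit": {"type": "integer", "minimum": 1}},
            "required": ["limit"],
        },
    }
]


def build_agent_input(user_input: str, fetched_context: str, tools: list[dict]) -> str:
    """Assemble f(input + fetched context + fetched tool definitions) as one text."""
    return (
        f"Context:\n{fetched_context}\n\n"
        f"Available tools (reply with JSON to call one):\n{json.dumps(tools, indent=2)}\n\n"
        f"User: {user_input}"
    )


print(build_agent_input("What did I get today?", "- Prefers short answers", TOOLS))
```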

Here, ‘tool call’ means ‘action’, but the model doesn’t execute anything itself: it “announces” the tool it wants to use (and with which arguments), the system executes it, and the response is fed back to the model. That’s why we call it a ‘tool call’.

In other words, this ‘harness’ that governs the context the AI receives also gives the AI the ability to decide whether to use a certain tool and, if so, to use it.

For instance, you may provide an agent with a tool to read emails, so if the user asks about their most recent emails, the agent can process the request and generate a tool call request (i.e., the output would be interpreted as “I want to call my email tool with the following constraints”).

Then, the agent harness takes that request and runs the tool, getting a response that is returned to the AI for a new run of the stateless equation f(input + tool response) = new output.

This creates a loop: until the model signals that it’s finished (i.e., it emits a stop token after a final answer instead of another tool call), each new output gets fed back in again and again. This, in essence, is what an ‘agent’ is.

Source: Nano Banana 2
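The whole loop fits in a few lines. This is a sketch under explicit assumptions: `scripted_model` is a hypothetical fake LLM that replays canned replies, tool calls are encoded as JSON objects like {"tool": ..., "args": ...} (real APIs use their own structured formats), and `read_emails` returns dummy data. What matters is the structure: call the model, run any tool it announces, append the result to the context, and repeat until it answers in plain text.

```python
import json
from typing import Callable


def read_emails(limit: int) -> list[str]:
    """Hypothetical email tool returning dummy data."""
    inbox = ["Invoice from ACME", "Team lunch on Friday", "Flight check-in open"]
    return inbox[:limit]


TOOLS: dict[str, Callable[..., object]] = {"read_emails": read_emails}


def scripted_model(replies: list[str]) -> Callable[[str], str]:
    """Hypothetical fake LLM: ignores its input and replays canned outputs."""
    queue = iter(replies)
    return lambda context: next(queue)


def run_agent(user_input: str, model: Callable[[str], str], max_steps: int = 5) -> str:
    context = f"User: {user_input}"
    for _ in range(max_steps):
        output = model(context)  # stateless call: f(context) = output
        try:
            call = json.loads(output)  # did the model announce a tool call?
        except json.JSONDecodeError:
            return output  # plain text means the model is done
        if not isinstance(call, dict) or "tool" not in call:
            return output
        tool = TOOLS.get(call["tool"])
        result = tool(**call.get("args", {})) if tool else f"Unknown tool: {call['tool']}"
        # Feed the tool result back in: f(input + tool response) = new output.
        context += f"\nAssistant: {output}\nTool result: {json.dumps(result)}"
    return "Stopped: too many steps."


model = scripted_model([
    '{"tool": "read_emails", "args": {"limit": 2}}',
    "Your latest emails: an ACME invoice and a team lunch invite.",
])
print(run_agent("Summarize my last two emails.", model))
```

The `max_steps` cap is a design choice added here so a model that never stops calling tools can’t loop forever.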

But I want to further convince you of how simple at heart all this is by explaining the latest “simple as a rock” concept that Silicon Valley marketing teams have turned into the next big thing: skills.

Skills, glorified folders.

What if I told you that billion-dollar companies will be built around this idea of exposing tools to a model?

Ironically, this super futuristic idea is actually a folder. Yes, a folder. The hottest thing in AI right now is giving AIs access to folders. We call them ‘skills’, which sounds way cooler, but each is just a folder containing a Markdown file that explains what the skill does, plus a bunch of tools and resources for the AI to use.
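Since a skill is just a folder, “loading skills” can be sketched in a few lines. The layout is an assumption for illustration: one subfolder per skill, each with a `SKILL.md` whose first line is a one-sentence description. This sketch also assumes one common design choice, putting only a short index in the prompt and letting the agent open the full file when a task needs it.

```python
from pathlib import Path
import tempfile


def load_skill_index(skills_dir: Path) -> str:
    """List every skill folder with the first line of its SKILL.md.

    Only this short index goes into the prompt; the agent can read the full
    SKILL.md (and any scripts in the folder) later, if a task needs it.
    """
    entries = []
    for folder in sorted(p for p in skills_dir.iterdir() if p.is_dir()):
        manifest = folder / "SKILL.md"
        if manifest.exists():
            lines = manifest.read_text(encoding="utf-8").splitlines()
            summary = lines[0].strip() if lines else ""
            entries.append(f"- {folder.name}: {summary}")
    return "Available skills:\n" + "\n".join(entries)


# Demo: a hypothetical "contract-review" skill in a throwaway directory.
with tempfile.TemporaryDirectory() as root:
    skill = Path(root) / "contract-review"
    skill.mkdir()
    (skill / "SKILL.md").write_text(
        "Review contracts and flag risky clauses.\n\nStep 1: read the whole contract.\n",
        encoding="utf-8",
    )
    (skill / "extract_clauses.py").write_text("# helper script the agent may run\n")
    index = load_skill_index(Path(root))

print(index)
```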

Because AI models can adapt to new context, they can become experts on you (the user.md file) or on new tasks (skills). Hence, the future of software is nothing but agent tooling: giving AIs the tools to execute actions in a given domain with accuracy and expertise.

So when you read that a flashy new AI start-up has created “ChatGPT for law clerks,” the reality is as boring as a bunch of folders with prompts and tools that the agentic AI can use to execute the task.

So, how about we build one of these? An AI secretary?

As mentioned at the beginning, we are going to discuss two ways: the simple one that anyone can do, and the harder one, only for those who want maximum control and power over their agents.

Because in this day and age, if you don’t have your own personal AI secretary, what are you doing?
