THEWHITEBOX

TLDR;

Welcome back! Today, we have a newsletter packed with insights, covering the ongoing memory supercycle, Google’s incredible Perch model that uses birds to understand whales, and China’s new SOTA video model, plus deep dives into the importance of debt in AI and new products like OpenAI’s updated Deep Research.

Enjoy!

AI & ANIMALS

Google Does it Again. What Birds Teach About Whales

Some AI applications are so remarkable that it’s hard to believe they actually exist and aren’t a complete fabrication, and this is one of them.

Google has trained a bioacoustics model called Perch 2.0, primarily on birds and other terrestrial vocalizing animals, and shown that the same model can identify marine animals through few-shot learning (giving it just a handful of labeled examples of sounds it has never seen, and the model still works).

Specifically, a model trained mostly on birds is given a handful of whale examples and can suddenly identify whale species, no problem. That’s how crazy this research is.

But how is this possible?

TheWhiteBox’s takeaway:

The answer is, funnily enough, already known. In simple terms, even if it sounds crazy, there’s an overlap between the sounds of birds and the sounds that whales emit. That is, some acoustic features are common across the animal kingdom, and some other acoustic features are common across all sounds, both living and not.

By training an AI on large amounts of terrestrial animal data, the model may never have heard a whale, but it has already learned to pick up the two sets of acoustic features I just mentioned. In layman’s terms, even if whales are new to it, this model already knows, for lack of a better expression, “what sound is,” which lets it quickly pick up the particularities of whale sounds.

Consequently, when faced with what seem to be totally new sounds, in the model's eyes, much of what these sounds are is already familiar. Therefore, with just a few extra training sessions, or even just examples, the model is now an expert on whale sounds and can identify them with no problem.
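To make the few-shot idea concrete, here is a minimal sketch of one common way to reuse a frozen embedding model: average the embeddings of the few labeled examples per class into a “prototype,” then assign each new sound to the closest prototype by cosine similarity. This is an illustrative stand-in, not Perch 2.0’s published evaluation pipeline; the species labels and the 3-dimensional vectors are hypothetical placeholders for real model embeddings.

```python
import math


def centroid(vectors):
    """Average a list of equal-length embedding vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fit_prototypes(support):
    """support maps label -> the few labeled embeddings we have for it."""
    return {label: centroid(vecs) for label, vecs in support.items()}


def classify(prototypes, query):
    """Return the label whose prototype is most similar to the query embedding."""
    return max(prototypes, key=lambda label: cosine(prototypes[label], query))


# Hypothetical embeddings standing in for the output of a frozen audio model.
support = {
    "humpback": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "orca": [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],
}
prototypes = fit_prototypes(support)
print(classify(prototypes, [0.85, 0.15, 0.05]))  # humpback
```

The embedding model itself is never retrained here: all the “learning” for the new species is two averages, which is why a model that already knows “what sound is” can adapt with so few examples.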

For a better intuition, always think of this in human terms. Imagine your brain had to relearn what sound “sounds like” every time it heard a new sound.

Luckily for us, the human brain already knows what sounds “sound like”, so it just needs to “fine-tune” itself to the new sound, so that it becomes familiar for future reference. Which is to say, our brains perform transfer learning all the time, applying learnings from one dataset to another.

The only difference, and what makes AIs unique here, is that AIs can process these patterns at a level well beyond our capacity, meaning we can use them, with surprisingly little effort, to identify sounds that we ourselves can’t quite make out.

As I always like to say, hype makes it really hard not to hyperfocus on Large Language Models (LLMs), but we have to remember that AI is much more than ChatGPT, and the principles that underpinned ChatGPT’s success can be applied beautifully to other areas.

VIDEO

SeeDance 2 is Pretty Incredible

Years from now (and, in some sense, already today), Microsoft investors will look back on the decision to let Microsoft Asia’s AI team go as one of the worst in the company’s history.

Today, that team is ByteDance’s Seed team, arguably one of the top 5 research labs. Yes, top 5 in the world. The only reason they aren’t in the top 3, alongside DeepMind’s and OpenAI’s research teams, is simply a matter of compute.

But when you evaluate their research and the models they are publishing, coupled with the apparently lagging performance of Microsoft’s own AI efforts, oh boy, was this a terrible, terrible mistake that will haunt them for years to come. In some respects, they are way ahead of DeepSeek as the top Chinese lab, at least in video models.

And SeeDance 2 is the perfect example of this. Images speak louder than words, so please watch the videos linked here and come back. If this isn’t the absolute state-of-the-art of video generation, it’s close (personally, I have zero doubts). It’s definitely better than OpenAI’s Sora (some say it’s a drastic step up, even ahead of Google’s Veo 3.1 model), and it’s almost impossible to tell whether its output is AI-generated or not.

I would have liked to explain the technical underpinnings, but the paper hasn't been published yet. Still, the results are so incredible that I had to share them with you.

But how can Chinese models lag so far behind in text yet set SOTA scores in video?

TheWhiteBox’s takeaway:

I can’t tell for sure, but I have my intuitions as to why this is possible. While text/code-based models are extremely memory-bandwidth bottlenecked, video models are not.

Okay, so?

That is, running text models at scale requires very advanced DRAM technology (usually HBM, or High Bandwidth Memory, which is why companies like SK Hynix are set to have an enormously profitable 2026) because during the decoding phase (the phase where you see ChatGPT streaming a response back to you), memory speed is the limiting factor.

With video, that’s not the case. For video generation models that don’t stream new frames back to you in real time (as Google’s Project Genie does), compute is the bottleneck, because the model generates the entire video in one go while you wait.

And why does this matter? Because when we say China is behind in compute, we are indirectly referring to the fact that it has far fewer XPUs (GPUs, TPUs, and other AI accelerators) in total. That constrains how many users you can serve, because memory constraints force you to spread text workloads across many XPUs (in fact, during inference, each XPU’s compute sits idle most of the time).

With video models, hardware can be used much more efficiently (i.e., you need far fewer accelerators per workload), which means China is less constrained by scale. This could partly explain why Chinese labs don’t have nearly enough compute to compete on agents and chatbots, but do have enough to compete on video. And it shows.
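A back-of-the-envelope roofline calculation shows why text decoding is memory-bound while one-shot video generation is compute-bound. The sketch below assumes a hypothetical dense 70B-parameter model stored in 16-bit weights and a hypothetical accelerator with 1 PFLOP/s of compute and 3 TB/s of memory bandwidth; it ignores attention FLOPs, KV-cache reads, and activations, so treat the numbers as orders of magnitude only.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    peak_flops: float     # FLOP/s (hypothetical)
    mem_bandwidth: float  # bytes/s (hypothetical)


def forward_pass_profile(params, bytes_per_param, tokens_per_pass, hw):
    """Roofline-style estimate for one forward pass of a dense transformer.

    Simplifications: weights are read from memory once per pass, and each
    token costs about 2 FLOPs per parameter. Attention, KV cache and
    activation traffic are ignored.
    """
    flops = 2 * params * tokens_per_pass
    bytes_moved = params * bytes_per_param
    compute_s = flops / hw.peak_flops
    memory_s = bytes_moved / hw.mem_bandwidth
    step_s = max(compute_s, memory_s)  # the slower resource sets the pace
    return {
        "bottleneck": "compute" if compute_s >= memory_s else "memory",
        "step_seconds": step_s,
        "compute_utilization": compute_s / step_s,
    }


hw = Accelerator(peak_flops=1e15, mem_bandwidth=3e12)
# Text decoding: one new token per pass for a single user.
decode = forward_pass_profile(70e9, 2, tokens_per_pass=1, hw=hw)
# Video generation: every latent token of the clip goes through each pass.
video = forward_pass_profile(70e9, 2, tokens_per_pass=100_000, hw=hw)
print(decode)
print(video)
```

In this simplified model, decoding only becomes compute-bound once about peak_flops × bytes_per_param / (2 × mem_bandwidth) tokens share each weight read (roughly 333 here); below that, the compute units mostly wait on memory, which matches the “idle XPU” point above.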

CODING

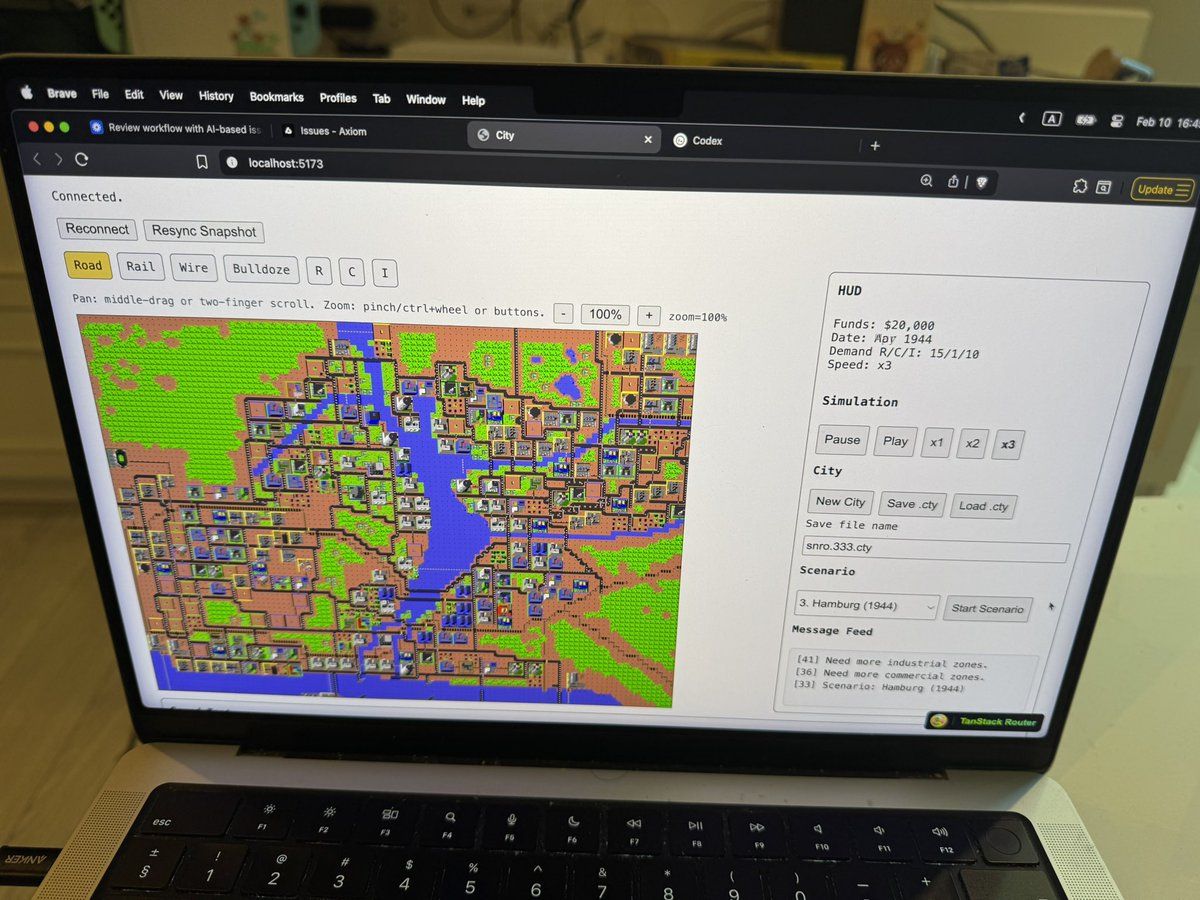

Porting Video Games to Browsers

In our last Leaders segment, I insisted that we were already in the acceleration phase and that we would see increasingly crazy new capabilities and use cases from AI coding models every week.

Now, someone has literally migrated an entire version of SimCity, the video game written back in 1989 in C, to TypeScript (so it can run in a browser).

Et voilà:

The reason I’m highlighting this is the keyword ‘migration’. AIs are becoming remarkably good at taking something built in one configuration and moving it somewhere else with a new configuration.

Therefore, how long will it take for companies to migrate their data out of the systems-of-record SaaS products that currently hold them hostage?

Interestingly, the biggest reason people are stuck with Salesforce isn't that the user interface is amazing; it’s because the pain of moving off the platform is unbearable. But with AI, that will eventually no longer be the case, as AI migrators will handle the job.

When I say this, some people push back, telling me that, well, they’ve also trained their employees on Salesforce and that retraining them to the new interface would be a pain yadda yadda yadda.

To which I reply, “Wrong framing.”

The mistake there is assuming that the new software from a competitor offering 1/30th of Salesforce's features (but the most important ones) for 1/100th the price will be “Salesforce 3.0”.

No, it will be a declarative CRM where your salespeople will just declare what needs to be done, and agents will do the rest. No “retraining” needed. In fact, soon enough, most AI agents will be proactive, so humans will only intervene in the most important stuff.

How Your Ads Will Win in 2026

Great ads don’t happen by accident. And in a world flooded with AI-generated content, the difference between “nice idea” and “real impact” matters more than ever.

Join award-winning creative strategist Babak Behrad and Neurons CEO Thomas Z. Ramsøy for a practical, science-backed webinar on what actually drives performance in modern advertising.

They’ll break down how top campaigns earn attention, stick in your target’s memory, and build brands people remember.

You’ll see how to:

Apply neuroscience to creative decisions

Design branding moments that actually land

Make ads feel instantly relevant to real humans

In 2026, you have to earn attention. This webinar will show you exactly how to do it.

THEWHITEBOX

The Day Has Arrived

Finally, OpenAI has announced that ads are coming to ChatGPT. Interestingly, they insist (a lot) that ads won’t affect generations, something many have feared ever since the first signs of ads reaching free users appeared. OpenAI even accused Anthropic of lying in its Super Bowl ad, which, well, suggested exactly that.

In other words, allegedly, ChatGPT’s responses won’t be influenced by the ads they are serving, which is to say, if you ask something about cheesecake, you won’t be getting the recipe from the advertiser that paid to appear the highest for cheesecake recipes.

Instead, I assume they’ll use a system that connects generations to advertisers after the fact, showing ads at the end of the response and clearly labeled. Specifically, they mention “we decide which ad to show by matching ads submitted by advertisers with the topic of your conversation, your past chats, and past interactions with ads.”
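As a toy illustration of matching ads to “the topic of your conversation” without touching the generated answer, the sketch below scores each advertiser’s keywords against the conversation with Jaccard similarity (shared words divided by total distinct words) and returns the best matches to display separately. The ad IDs, keywords, and threshold are hypothetical, and OpenAI’s actual system (which, per the quote, also uses past chats and ad interactions) is not public; this only shows the general shape of topic matching.

```python
def tokenize(text: str) -> set[str]:
    """Lowercase words with basic punctuation stripped."""
    words = (w.strip(".,!?;:").lower() for w in text.split())
    return {w for w in words if w}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinct words that appear in both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_ads(conversation: str, ads: dict[str, str], min_score: float = 0.1):
    """Return (score, ad_id) pairs, best first, dropping weak matches.

    The model's answer is never an input here: ads are picked from the
    conversation topic and shown next to the response, not inside it.
    """
    conv = tokenize(conversation)
    scored = [(jaccard(conv, tokenize(kw)), ad_id) for ad_id, kw in ads.items()]
    return sorted((s for s in scored if s[0] >= min_score), reverse=True)


ads = {
    "bakery_supplies": "bake cheesecake springform pan",  # hypothetical advertiser
    "running_shoes": "running shoes marathon",            # hypothetical advertiser
}
print(rank_ads("How do I bake a New York cheesecake?", ads))
```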

TheWhiteBox’s takeaway:

While I would understand the criticism if ads became intertwined with responses, I don’t see the issue with OpenAI trying to monetize 700 million free users, aside from the fact that, well, this company was born as a non-profit, but that’s another story.

The larger question is whether that intertwining eventually happens, with ChatGPT’s responses becoming inseparable from the ads shown right next to them.

Technically, there’s not much I can add here, as the much more interesting analysis would have been how to actually ‘embed’ advertisers into ChatGPT’s responses (I’ve held the belief, for a long time, that this is a very, very hard engineering challenge).

The one technical question I have is how profitable this really is. OpenAI is asking for a lot of money per impression ($60 per 1,000 impressions), which leads me to believe I was right: it’s going to run into “Mr. Inference Costs” a lot on the way to good margins.

In layman’s terms, OpenAI can’t predict model responses, so they can’t know in advance how many inferences they are going to require to get those 1,000 impressions. If that number is 1,400, then this is presumably very profitable. However, if they need 100,000 inferences to deliver 1,000 impressions to the advertiser, the cost of those 100,000 could be way higher than the $60 they are getting.
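The arithmetic behind this worry is simple enough to write down. The sketch below computes the highest average cost per inference that a $60 CPM can cover, and the margin per 1,000 impressions at a given cost; the $0.002-per-inference figure in the example is a hypothetical placeholder, since OpenAI doesn’t disclose its serving costs.

```python
CPM_DOLLARS = 60.0  # reported price per 1,000 impressions


def break_even_cost_per_inference(inferences_per_1000_impressions, cpm=CPM_DOLLARS):
    """Highest average cost per inference at which ad revenue still covers serving."""
    return cpm / inferences_per_1000_impressions


def margin_per_1000_impressions(inferences_per_1000_impressions, cost_per_inference,
                                cpm=CPM_DOLLARS):
    """Ad revenue minus inference spend for every 1,000 impressions delivered."""
    return cpm - inferences_per_1000_impressions * cost_per_inference


HYPOTHETICAL_COST = 0.002  # dollars per inference, illustrative only
for n in (1_400, 100_000):
    print(n,
          round(break_even_cost_per_inference(n), 6),
          round(margin_per_1000_impressions(n, HYPOTHETICAL_COST), 2))
```

With these inputs, 1,400 inferences leave about $57 of margin, while 100,000 inferences lose about $140 per 1,000 impressions: the outcome hinges entirely on the inference count, which is exactly the number OpenAI can’t know in advance.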

USE CASES

ChatGPT Updates Deep Research to GPT-5.2

This was a long time coming, but OpenAI has finally updated its Deep Research feature to GPT-5.2. They’ve additionally updated the interface, offering a cleaner, better-looking experience (kind of reminiscent of Gemini and Grok Deep Research), and the ability to “intervene” and update your requirements midway through the process.

Moreover, they have a new search system that lets you specify the websites the agent should focus on, while ignoring the rest. The finalized report can also be exported to Markdown, Word, or PDF.

TheWhiteBox’s takeaway:

An important upgrade if you’re in the lower pricing tiers, but mostly irrelevant if you’re a ChatGPT Pro user. ChatGPT Pro is simply the default option for anything that requires long thinking; it’s far superior for almost everything.

I have these discussions a lot with clients, and I insist: until you try models like ChatGPT Pro, you won’t realize how much you’re underestimating AI progress.

MODELS

Anthropic Releases Claude Fast at an Unbearable Price

Anthropic is a beautiful reminder that AI prices aren’t falling. If anything, they are growing. Fast. They are now offering a new usage tier called Claude Fast Mode, which gives you access to a much faster (2.5x) Claude at a price 6x higher.

That is, this mode is priced at a staggering $30/$150 per million input/output tokens, a price quickly entering prohibitive territory when you factor in that a “modest” coding session with Claude can consume several million tokens. That means you could be burning $200/day on Claude, which is unaffordable for the vast majority of the population.
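For a rough sense of how quickly this adds up, here is a small cost calculator at the Fast Mode prices quoted above, with the standard price derived from the 6x premium mentioned earlier. The session size (5 million input tokens, 300,000 output tokens) is a hypothetical example, and the sketch ignores prompt-caching discounts and any other billing details, so real bills may differ.

```python
FAST_INPUT_PER_MTOK = 30.0    # $ per million input tokens (Fast Mode)
FAST_OUTPUT_PER_MTOK = 150.0  # $ per million output tokens (Fast Mode)
SPEED_PREMIUM = 6             # Fast Mode costs 6x the standard price


def session_cost(input_tokens, output_tokens,
                 input_price=FAST_INPUT_PER_MTOK, output_price=FAST_OUTPUT_PER_MTOK):
    """Dollar cost of a session, with prices quoted per million tokens."""
    return input_tokens / 1e6 * input_price + output_tokens / 1e6 * output_price


# Hypothetical heavy coding session.
fast = session_cost(5_000_000, 300_000)
standard = session_cost(5_000_000, 300_000,
                        FAST_INPUT_PER_MTOK / SPEED_PREMIUM,
                        FAST_OUTPUT_PER_MTOK / SPEED_PREMIUM)
print(f"fast: ${fast:.2f}, standard: ${standard:.2f}")  # fast: $195.00, standard: $32.50
```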

Before talking about the implications of this, we must ask ourselves: How does Anthropic suddenly release a model that is as good as slower models but much faster? Usually, better means slower because models are either larger or think for longer, so “better and faster” doesn’t make much sense.

The most likely explanation is batching magic. That is, they are sending your requests in smaller batches to the GPUs/TPUs. When you click ‘Send’ in ChatGPT or Claude, your request is sent to a GPU server, where it is usually processed alongside those of other users.

XPUs are designed to parallelize, so the larger each batch sent to the system is, the higher the hardware utilization. However, this also implies slowness, because matrices (or, more technically, tensors) are larger as a result.

Alternatively, one way to make models faster is to send them smaller batches. This increases per-user generation speed but raises inference costs for the AI Lab because, to put it simply, you are using the same hardware to process fewer requests. So what is most likely going on here is that Anthropic is simply passing those inference costs on to you through pricing.
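A simple memory-bound model shows the trade-off. Each decode step reads the model weights once plus every active sequence’s KV cache, so larger batches make each step slower for every user but spread the fixed hardware cost over more tokens. All figures below (100 GB of weights, 2 GB of KV cache per sequence, 20 TB/s of aggregate bandwidth, $30/hour per server) are hypothetical, and this is a sketch of general batching economics, not Anthropic’s actual serving setup.

```python
def serving_stats(batch_size, weight_bytes=100e9, kv_bytes_per_seq=2e9,
                  bandwidth=20e12, dollars_per_hour=30.0):
    """Per-user speed, total throughput, and cost per million output tokens.

    Assumes decoding is purely memory-bound: each step reads all weights
    once, plus the KV cache of every sequence in the batch.
    """
    step_seconds = (weight_bytes + batch_size * kv_bytes_per_seq) / bandwidth
    per_user_tps = 1 / step_seconds            # each user gets one token per step
    total_tps = batch_size / step_seconds      # tokens produced across the batch
    cost_per_mtok = dollars_per_hour / 3600 / total_tps * 1e6
    return per_user_tps, total_tps, cost_per_mtok


for b in (1, 8, 32):
    user, total, cost = serving_stats(b)
    print(f"batch={b:>2}  per-user={user:6.1f} tok/s  "
          f"total={total:7.1f} tok/s  ${cost:.2f}/M tokens")
```

In this toy model, dropping from batch 32 to batch 1 makes each user roughly 1.6x faster but raises the cost per token roughly 20x, which is the shape of the deal a premium “fast” tier has to price in.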

TheWhiteBox’s takeaway:

One of the biggest original truths that has now become a lie is that AI is getting cheaper. AI Labs are seeing costs skyrocket, so prices can’t fall, no matter how much they would like them to.

The industry needs to find a way to depress prices. Otherwise, we are soon going to find ourselves in a world where most of the population can’t afford the tool that could make them hyperproductive.

For a deeper analysis of this news, read what I wrote on Medium here for free.

DEBT & CAPITAL

Apollo Lends to Elon Musk… Again

According to reporting by The Information, Apollo Global Management is finalizing a $3.4 billion loan for an investment vehicle that will purchase Nvidia chips and lease them to Elon Musk’s xAI.

Arranged by Valor Equity Partners, this deal marks the second major chip funding arrangement between Apollo and xAI, following a similar $3.5 billion agreement in November.

The crux of the issue is that this financing allows xAI to secure massive computing power without an immediate upfront capital expenditure, supporting the company's growth following its recent merger with SpaceX.

TheWhiteBox’s takeaway:

People still believe all of the ‘money pumping’ in AI comes from Hyperscaler (Microsoft, Amazon, Google, Meta) free cash flow (money made from their own businesses), but that’s not actually accurate at all.

Google had $164 billion in operating profits in 2025, of which “only” $92 billion went to AI. That’s a lot, but is that all they put in? No, they also issued corporate bonds (debt) with extremely long maturities.

As I have explained in the past, this is not a sign of weakness; it’s Google simply benefiting from “free money” coming from markets, as Google’s bond yields are very close to Treasury yields and thus basically “free”. Now, they are preparing another bond deal, this time with 100-year maturities, something we had not seen since 1998 (Motorola).

But if Hyperscalers are doing this, you can already assume that, down the line, everyone else will do the same.

A notorious example is Oracle, which has leaned on debt far more than the other Hyperscalers simply because it doesn’t have their free cash flow, and whose bonds are trading mostly in junk territory. That is, investors demand higher yields on its bonds because they don’t trust Oracle to pay up, and Oracle’s credit default swaps (CDS, essentially insurance on the bonds) are at dangerously high levels, pricing its 5-year risk of default at almost 10% as of January 2026.
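For readers wondering how a CDS spread turns into a default probability, a common back-of-the-envelope tool is the “credit triangle”: the annual hazard rate is roughly the spread divided by (1 - recovery rate), and the cumulative default probability over T years is 1 - exp(-hazard * T). The sketch below assumes the conventional 40% recovery rate and a flat spread; it is an approximation, not how any particular data provider computes its figures.

```python
import math


def implied_default_probability(spread, years, recovery=0.4):
    """Cumulative default probability implied by a flat CDS spread.

    spread is annual and in decimal form (0.0126 = 126 basis points).
    Credit-triangle approximation: hazard rate = spread / (1 - recovery).
    """
    hazard = spread / (1 - recovery)
    return 1 - math.exp(-hazard * years)


def spread_for_default_probability(probability, years, recovery=0.4):
    """Inverse: the flat spread that implies a given cumulative default probability."""
    hazard = -math.log(1 - probability) / years
    return hazard * (1 - recovery)


# Spread consistent with "almost 10%" 5-year default risk, under these assumptions.
bps = spread_for_default_probability(0.10, 5) * 10_000
print(f"{bps:.0f} bps")
print(f"{implied_default_probability(0.0126, 5):.2%}")
```

Under these assumptions, a 5-year spread of roughly 126 basis points is enough to imply about a 10% chance of default over five years.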

Further down the line, among the AI Labs, xAI is another prime example of heavy debt usage: a company that usually issues debt whenever it raises money through equity sales.

This is highly risky debt that has ironically rallied since it was announced that xAI was joining SpaceX, reminding the world that, if money is the deciding factor, Elon Musk is almost undefeatable at the game.

By “rallying” here, I mean that, as xAI’s debt takes the form of tradeable securities, people speculate on it. If more people want to hold this debt, its price increases, which pushes yields lower (e.g., if a $1,000 bond pays $100 a year in interest, that’s a 10% coupon. But if the bond’s price rises to $2,000, an investor who pays those $2,000 still earns $100, which now represents a 5% return, not 10%). Basically, with the merger with SpaceX, people feel better about holding xAI debt now that it’s part of the $1.5 trillion SpaceX behemoth.
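The example above uses current yield (annual coupon divided by price). Yield to maturity, which also accounts for getting the face value back at maturity, moves in the same direction: pay more for the same cash flows and your return falls. The sketch below computes both for a hypothetical bond with a $1,000 face value and a 10% annual coupon; the 10-year maturity and the $1,100 price are illustrative assumptions, and coupons are assumed to be paid once a year.

```python
def bond_price(face, coupon_rate, years, ytm):
    """Present value of annual coupons plus face value, discounted at ytm."""
    coupon = face * coupon_rate
    pv_coupons = sum(coupon / (1 + ytm) ** t for t in range(1, years + 1))
    return pv_coupons + face / (1 + ytm) ** years


def yield_to_maturity(price, face, coupon_rate, years, lo=-0.5, hi=1.0, tol=1e-12):
    """Solve bond_price(...) == price by bisection (price falls as ytm rises)."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if bond_price(face, coupon_rate, years, mid) > price:
            lo = mid  # model price too high, so the yield must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return (lo + hi) / 2


def current_yield(price, face, coupon_rate):
    """Annual coupon divided by the price paid."""
    return face * coupon_rate / price


print(current_yield(1_000, 1_000, 0.10), current_yield(2_000, 1_000, 0.10))
print(round(yield_to_maturity(1_000, 1_000, 0.10, 10), 6))
print(round(yield_to_maturity(1_100, 1_000, 0.10, 10), 6))
```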

The point I’m trying to make here is two-fold:

Debt liquidity will be—already is—instrumental to AI progress. If debt liquidity dries up, everything collapses. However…

Debt liquidity is unlikely to collapse because debt investors will do anything to avoid holding cash. The debasement trade, the idea that fiat currencies are to be avoided, is ironically the best thing going for the AI industry because it guarantees fresh financing.

But is this representative of a healthy industry?

Of course not; bubble indicators should be skyrocketing right now. But here’s the thing: is something a bubble if the bubble indicators are irrelevant at a time when there are too many dollars out there looking for a home?

I personally don’t think so, which is why I don’t think AI will crash. If anything, it’s the dollar that's crashing.

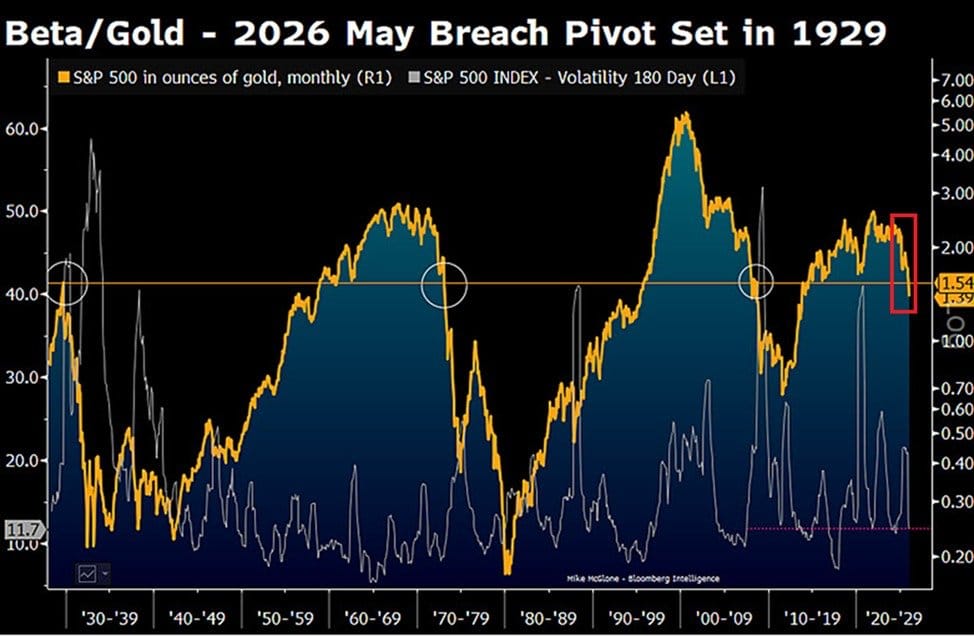

Which is to say: does it make sense to judge whether something is crashing by measuring it against an asset that’s crashing harder? If anything, that hides the fact that things can be crashing in absolute terms without crashing relative to the main asset we use to measure them.

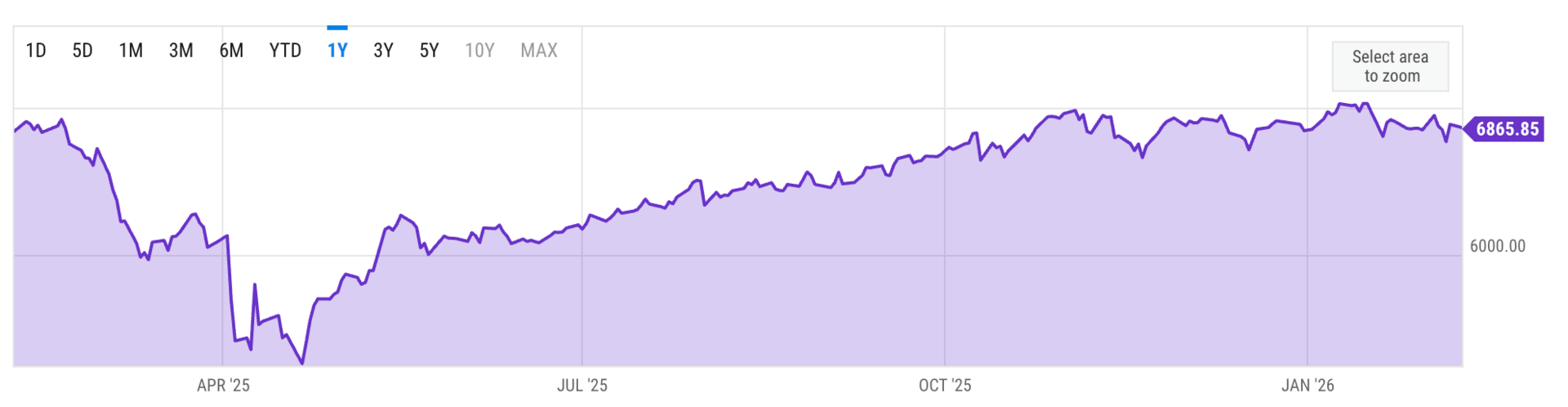

In a nutshell, AI and markets are, interestingly, already crashing in some sense; we just haven’t realized it yet because our measuring stick, the dollar, has long been broken. For example, measured in euros, the S&P 500 is flat over the last year.

Measured in gold, the picture is way worse: the S&P 500 has lost 48% of its real value since 2022.
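Re-denominating a return is one line of arithmetic: if an asset returns r_a in dollars and the measuring unit (euros, gold) returns r_u in dollars over the same period, the asset’s return in that unit is (1 + r_a) / (1 + r_u) - 1. The inputs below are hypothetical round numbers chosen to show the mechanics, not the actual index, euro, or gold returns.

```python
def return_in_other_unit(asset_return_usd, unit_return_usd):
    """Return of an asset when measured in another unit (euros, gold, ...).

    Both arguments are returns measured in dollars over the same period.
    """
    return (1 + asset_return_usd) / (1 + unit_return_usd) - 1


# Hypothetical: index +14% in dollars, euro +14% against the dollar.
print(round(return_in_other_unit(0.14, 0.14), 4))  # 0.0
# Hypothetical: index +60% in dollars, gold +200% in dollars.
print(round(return_in_other_unit(0.60, 2.00), 4))  # -0.4667
```

Read in reverse, a -48% figure in gold terms simply means the index’s dollar growth factor ended up at 52% of gold’s over the same period.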

Of course, this is opportunistic (everything seems to be falling compared to gold these days), but take this as a simple reminder that our main metric for measuring progress is not having a great time. Thus, as long as the debasement trade is real, I believe the AI trade will simply keep going as money floods in. In fact, this points to a larger-than-AI trend: assets in general will continue to rise no matter what.

Strong asset price growth coupled with currency inflation can end very badly, though, leading to the emergence of a ‘permanent underclass’ of people who kept their wealth in dollars rather than investing in assets, be that AI or Pokémon cards. That’s more than 50% of Americans, by the way, and a much larger proportion in Europe.

Only a significant loss of consumer confidence in fiat currencies could end it all, but that doesn’t seem likely. After all, the US isn’t the Weimar Republic suffering from an unpayable amount of post-WWI reparations, right?

Everything will be just fine… right?

MEMORY

The Memory & Shortage Dramas

The Wall Street Journal has already argued that the AI trade is, in fact, the ‘memory trade.’ We were fairly early to this in this newsletter (not super early, I have to admit), but early enough to potentially be positioned to ride one of the biggest stock upcycles we’ve ever seen.

Yes, the risk is very high because we don’t yet fully know what will happen to revenues from 2028 onward (which prevents an immediate multiple repricing from price-to-book to price-to-earnings that could see these stocks double or even triple, even assuming modest multiples), but the promise is there.

In fact, the trade has become so important these days that I am forced to distill some of the news related to it:

The South Korean Financial Services Commission has approved Interactive Brokers and a few other international brokers to offer Korean stocks. This will significantly increase liquidity in Korean memory stocks (mainly SK Hynix and Samsung). Until now, the only way to invest in these stocks was through their respective GDRs (HY9H for Hynix in Frankfurt, SMSN for Samsung on the London Stock Exchange). This will take time, I assume, but direct investment is finally within reach.

Supply constraints are so intense that the Big Three (Hynix, Samsung, Micron) are basically changing contract prices after signing. That is, if the spot price of a product has doubled since a contract was signed at a given price, the suppliers are asking for that increase, basically nullifying the agreed price. That’s how much pricing power they have right now. Even SMIC, China’s main chip manufacturer, acknowledges the immense shortage.

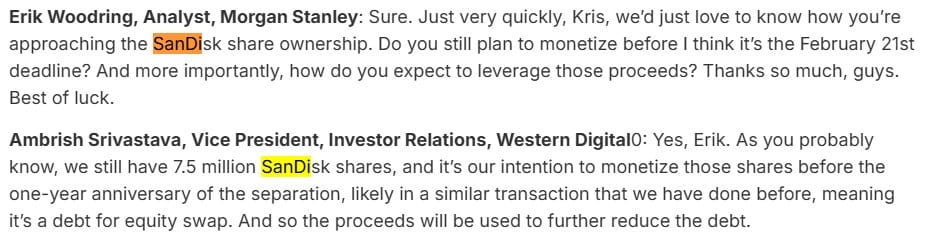

SanDisk, a storage company whose stock has risen 1,500% in 12 months and 142% in January alone (the biggest increase of any public stock in the world), is now falling amid fears that Western Digital, a major shareholder, is about to sell its shares. I’ve already made my case for why I’m not interested in SanDisk, but if you’re a shareholder or planning to be, this news is important to you.

The one-year anniversary of SanDisk’s spin-off from Western Digital is February 21, 2026.

Between writing these words and reviewing them a few hours later, the stock rose 11%, showcasing the extreme speculation.

In a recent interview on the Dwarkesh Patel podcast, Elon Musk, who plans to build data centers in space, said that what concerned him most was memory. Yes, the dude who plans to send GPUs into space at a rate of 100 GW per year is very worried that chips, particularly memory chips, won’t be able to keep up.

Here, the two main questions are as follows:

Will we find an alternative hardware architecture that takes over AI compute, dethroning NVIDIA/AMD/Google? Or a new AI software paradigm?

Will Hyperscaler demand continue well into the end of the decade?

If the answers are no/yes, memory stocks are going to the moon. If the answers are no/no, they will have strong years but lose revenue-growth intensity in 2028 and beyond, and will thus remain cyclical and valued as a multiple of book value, which is how they are valued right now, meaning no massive valuation expansion. And if the answers are yes/yes, that’s bad news too, because it means HBM will no longer be as important.

Which one is the correct option? My belief is that we are in a no/yes scenario.

Regarding the first question, too much money is being invested in the current direction, and progress along it is strong. For the second, both Hyperscalers’ free cash flows and the appetite for their corporate bonds are very strong, which is why I’m invested.

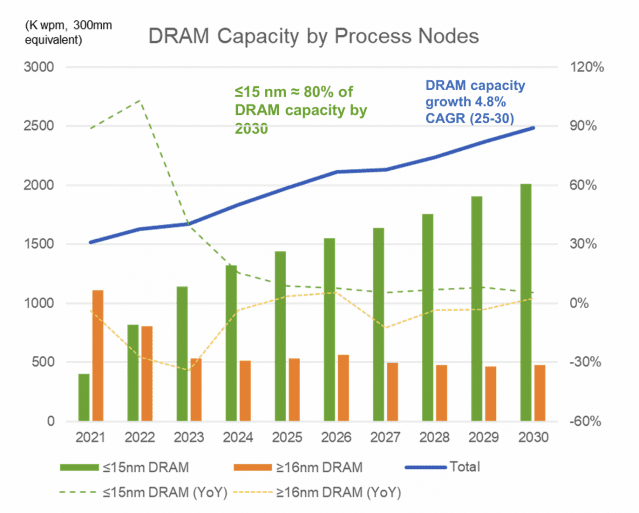

In fact, the crux of the issue seems to be that DRAM expansion (the speed at which memory companies increase production capacity) is much slower than infrastructure growth, which means supply shortages may become the new normal.
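The compounding behind “shortages as the new normal” is easy to see with a toy projection: if demand grows faster than capacity every year, the ratio between them widens geometrically. The 15% and 40% annual growth rates below are hypothetical placeholders, not forecasts for DRAM supply or AI infrastructure demand.

```python
def project_shortage(years, supply_growth, demand_growth, start=100.0):
    """Return a list of (year, supply, demand, demand/supply) tuples.

    Supply and demand start at the same level and compound at fixed
    annual rates.
    """
    supply = demand = start
    rows = []
    for year in range(years + 1):
        rows.append((year, supply, demand, demand / supply))
        supply *= 1 + supply_growth
        demand *= 1 + demand_growth
    return rows


for year, supply, demand, ratio in project_shortage(4, 0.15, 0.40):
    print(f"year {year}: supply={supply:6.1f} demand={demand:6.1f} "
          f"demand/supply={ratio:.2f}")
```

Even in this toy setup, the gap never closes on its own: the demand-to-supply ratio grows by the same factor (1.40 / 1.15) every year until capacity growth catches up.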

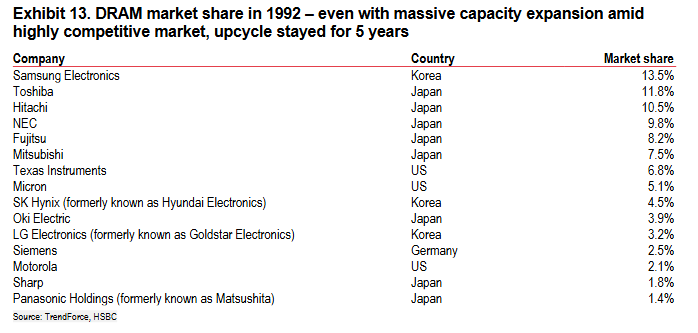

And what does history tell us? Interestingly, we had another period of DRAM supply shortages back in 1992.

Back then, supply was spread across many more players (recall that today we only have three for HBM, four if we count China’s CXMT for other DRAM technologies), and the upcycle still lasted 5 years, so history doesn’t tell us to expect the current upcycle to end abruptly in 2028.

Whether you agree with me or not is, I’m afraid, your call.

Closing Thoughts

Well, that was a dense newsletter. However, I believe we have shared a lot of meaningful and valuable information today:

More intuition as to why memory stocks are thriving

The emerging importance of debt in the AI financing cycle

The acknowledgement that prices are not going down, contrary to what most people believe

The beautiful non-LLM ways AI can drive progress in science, with models trained on birds that can identify whale species

And how China has a credible opportunity to take over the video generation industry.

Until the next one!


For business inquiries, reach out to me at [email protected]