THEWHITEBOX

From 0 to 100 in AI. CEO Edition

Last week, I gave a talk to the CEO of one of the largest hospitality companies here in Spain, who wanted to understand five big things:

How to think of AI in first principles,

The AI in the enterprise, including the ‘where does AI fit’ framework that easily identifies opportunities and discards mistakes,

The short-term impact of AI in her company,

The long-term impact,

And a series of key tips for personal usage, my 10 commandments of AI usage.

The goal? Provide a blueprint for thinking strategically about the tool that will shape our future. No time for hype. Nor bullshit. No flashy predictions and empty promises. Just honest, down-to-Earth recommendations and insights in a language anyone can understand.

If I did my job well, by the end of this newsletter, you’ll have a holistic and sound understanding of AI without noise or jargon; just first principles.

This is what I told her.

Foundations

Many of you already know what AI is in great detail. Neural nets. Embeddings. Next-token prediction. Backpropagation.

But it’s perhaps even better to try to simplify it to the essentials. How can we describe AI without giving a single mention to any of that jargon, and still stay true to what AI is in first principles?

This is my best attempt at doing that, after nearly a decade of studying AI.

The mapping analogy

AI is a map. Yes, a map. A map between an input and an output. It’s something that takes in data and uses it to produce (the word is "predict") more data. Every single AI on this planet, no matter how different the prediction is, is built that way.

We call it a prediction because there’s some uncertainty; we can’t fully guarantee it’s correct, only infer.

ChatGPT takes words in and produces the next one; Midjourney takes words in and produces an image; Google’s Nano Banana takes in text and produces text and images; Genie 3 takes in an image and a user action, and produces the next frames that capture the user’s action in the scene; Neuralink’s chips take in brain signals and produce computer actions.

Of these, those that ‘generate’ something with that prediction are called “Generative AIs”. While the most popular AI models today are generative, not all AI models are.

There are various types of AIs, but the most important one is the neural network. In 1932, Edgar Adrian won a Nobel Prize for discovering three attributes of neurons that hold across the entire animal kingdom: they show all-or-nothing behavior (either they “fire” or they don’t), they use “rate coding” (it is the firing rate, not the intensity of each firing, that matters), and they adapt to new stimuli.

AI researchers took the concept of a neuron and used it as inspiration to build an analogous “machine brain,” called a neural network, which combines artificial neurons deeply connected to one another, as human neurons are.

Real neurons (left), artificial neurons (right).

A human brain has around 86 billion neurons, a smaller number than the parameter count of many frontier models today, but it has roughly 100 trillion synapses, far more connections than any AI in existence.

However, this is a loose analogy, as artificial neurons only imitate the first attribute, the all-or-nothing behavior (they “either fire, or they don’t”). They don’t rate code (they only fire once per prediction, if at all) and don’t adapt to new stimuli (AIs are mostly trained once and do not learn afterward).

But even if they aren’t remotely close to human brains, they imitate us really well. So how do we do it? How do we build things that imitate us so well?

AI Training Sounds Hard to Understand. It’s Not.

You need to understand that a lot in this world is made to look harder than it is by the incumbents. And AI, in all its glory, is a perfect example of a technology that is constantly made out to be more than it is.

An AI is just an algorithm that looks at data, sees what repeats, and uses those repetitions, called regularities or patterns, to infer similar data.

And we really only know two ways to train an Artificial Intelligence to pick up such patterns in data: imitation and reinforcement.

Imitation is giving the AI a lot of data and telling it, “imitate it.” You give a Large Language Model the entire Internet, and ask it to copy it to the letter.

Reinforcement, glorified trial-and-error learning, is more sophisticated but simple to understand: we give an AI a task without the solution and have it trial-and-error its way to the solution, reinforcing actions that take it closer to success and disincentivizing those that do not.

One is like having your kid learn to write by, well, writing. The other is teaching your dog to sit by giving it a treat whenever it sits. The dog won’t understand at first, but it will eventually pick up the pattern, after, well, trial and error.

That’s it. And what’s ChatGPT, you ask?

A combination of both. We first build the model’s knowledge of the world through imitation, and then we enhance its capabilities through reinforcement.

It’s like teaching a kid to write by forcing them to replicate English texts, then having them develop their writing skills by writing their own essays, reinforcing the good ones, and telling them to avoid the bad ones.

Feels like very human-like learning. But AIs have a key difference: they learn mostly by induction. To learn the English language, we don’t replicate the entire Internet; we learn the key rules of the language, the grammar, and use those rules to learn how to write. It’s a deductive process, from the general to the specific.

Without getting into the reasons why, AIs learn by induction: from the specific to the general. They “learn” the rules of grammar by imitating Shakespeare’s complete works. A hundred times.

If it feels inefficient, well, you’re right: AIs are extremely inefficient learners.

And this is why they need so much data. That is why the term ‘big data’ was such a big thing a few years ago (it still is; the term is just not used anymore). All this leads us to the key law to answer whether something can be solved with AI:

If you have a lot of data and you can verify correctness (you can tell the AI whether its predictions are right or wrong), you can train an AI that does great at the task.

This explains why AIs are great at things like maths or coding but struggle with folding a napkin; we humans have found ways to assemble a lot of data on the former, but surprisingly little on the latter.

In case you’re wondering, most robotics efforts these days focus on humanoids for this exact reason: if they look like us, we can generate telemetry data of our movements that the humanoid can imitate.

So, what is AI? AIs are just software that makes predictions (inferences) about data after being trained on humongous amounts of similar data.

Those predictions can be generative or not, and the fields where we have successfully deployed them have two things in common: we have lots of data, and we can verify correctness.

This automatically primes us to very reasonably judge the chances of success of AIs in any field:

Maths? Sure, lots of data and instant verifiability (we can tell right from wrong).

Writing? Lots of data, but it is hard to define what ‘great writing’ is. Therefore, ChatGPT can generate average writing, but the result is modest at best unless a human is heavily involved.

And with this foundation, we move into the important stuff: How to think about AI in its “conquering of the enterprise”.

The AI in the enterprise

Here, my goal is to simply narrow everything down to what really matters for enterprise AI.

The idea is to eliminate unnecessary information and focus on what she should be thinking about: economics, implementation types, strategies, use case types, and your biggest enemies, culminating in a short and sweet ‘where does AI fit’ framework.

A disclaimer: I’m focusing on GenAI for the enterprise, for obvious reasons.

The economics

The economics of AI have two key variables for any executive serious about AI adoption: tokenomics and marginal costs.

Tokenomics means you meter your AI based on tokens. Tokens are the smallest unit of semantics for any given generative model. For ChatGPT, a token is roughly a word; for an image model, it can be an image patch. You get the gist.

Importantly, it’s a recurring expense measured by the number of tokens your AI services process and generate. That is, your AI expenditure equals the number of input and output tokens (remember, AIs are input-to-output maps) multiplied by their respective prices.
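To make that formula concrete, here is a minimal sketch of the calculation. The prices and token counts are hypothetical placeholders I made up for illustration, not any vendor's real pricing, and `token_cost` is just an illustrative helper name.

```python
# Hypothetical prices in dollars per million tokens (not real vendor pricing).
INPUT_PRICE_PER_MILLION = 2.00
OUTPUT_PRICE_PER_MILLION = 8.00


def token_cost(input_tokens: int, output_tokens: int) -> float:
    """Spend = input tokens x input price + output tokens x output price."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# One hypothetical request that reads 3,000 tokens and writes 500 tokens.
cost_per_request = token_cost(3_000, 500)
print(f"Cost of one request: ${cost_per_request:.4f}")
```

Note that output tokens are priced separately from input tokens in this sketch, which is why the formula has two terms rather than one.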

But perhaps more importantly, you have to consider the direct and proportional relationship between AI usage and marginal costs, which breaks historical IT spending assumptions.

Let me put it this way: in traditional IT, once the big investment has been made, onboarding new customers or new employees to a given IT service was basically “free”, as the amount of “new IT hardware” to serve that user was negligible.

With AI services, that is no longer the case. Each user of those services impacts your tokenomics; you are suddenly processing more tokens and generating others, both of which are automatically metered and incur a clear, non-zero additional cost.
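A rough sketch of what that proportionality looks like, assuming every user behaves the same way. All figures (cost per request, requests per user) are hypothetical and chosen only to show the shape of the curve.

```python
# All figures are hypothetical, chosen only to show the shape of the curve.
COST_PER_REQUEST = 0.01            # dollars, metered by tokens
REQUESTS_PER_USER_PER_MONTH = 400


def monthly_ai_spend(users: int) -> float:
    """Token-metered spend grows in direct proportion to the number of users."""
    return users * REQUESTS_PER_USER_PER_MONTH * COST_PER_REQUEST


for users in (100, 1_000, 10_000):
    print(f"{users:>6} users -> ${monthly_ai_spend(users):,.2f} per month")
```

Ten times the users means ten times the bill. There is no point at which the extra user becomes “free”, which is the assumption traditional IT budgeting relied on.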

This completely transforms how you think of IT spending.

With AI, IT spending is a large, ever-growing part of your cost structure.

In the short-term impact of AI section, we’ll discuss the implications for the company's organizational structure and, in particular, for software spending.

Implementation types

Here, we classify the different angles from which one can view any AI implementation.

Iteration vs repetition.

While AI has “cracked the code” for iterative workflows, workflows where humans and AIs collaborate on the task, like coding, creative work, financial modeling, or PowerPoint work, AIs still struggle considerably with automation.

But funnily enough, that’s the framing everyone gives AI: as a tool for automation. Why, you ask? Simply because collaboration doesn’t sell as much (the human stays), while automation neatly translates to layoffs, disruption, and revenues for incumbents.

But this, my dear executive, is nothing but garbage of the utmost quality. AIs are nowhere near reliable enough for anyone to suggest that without getting laughed out of the room (if the room knows what AI can and cannot do).

As a matter of fact, these reliability issues are hidden in plain sight. While working with an investment banking client, I wanted to look for the best way to convey this reality.

It didn’t take long.

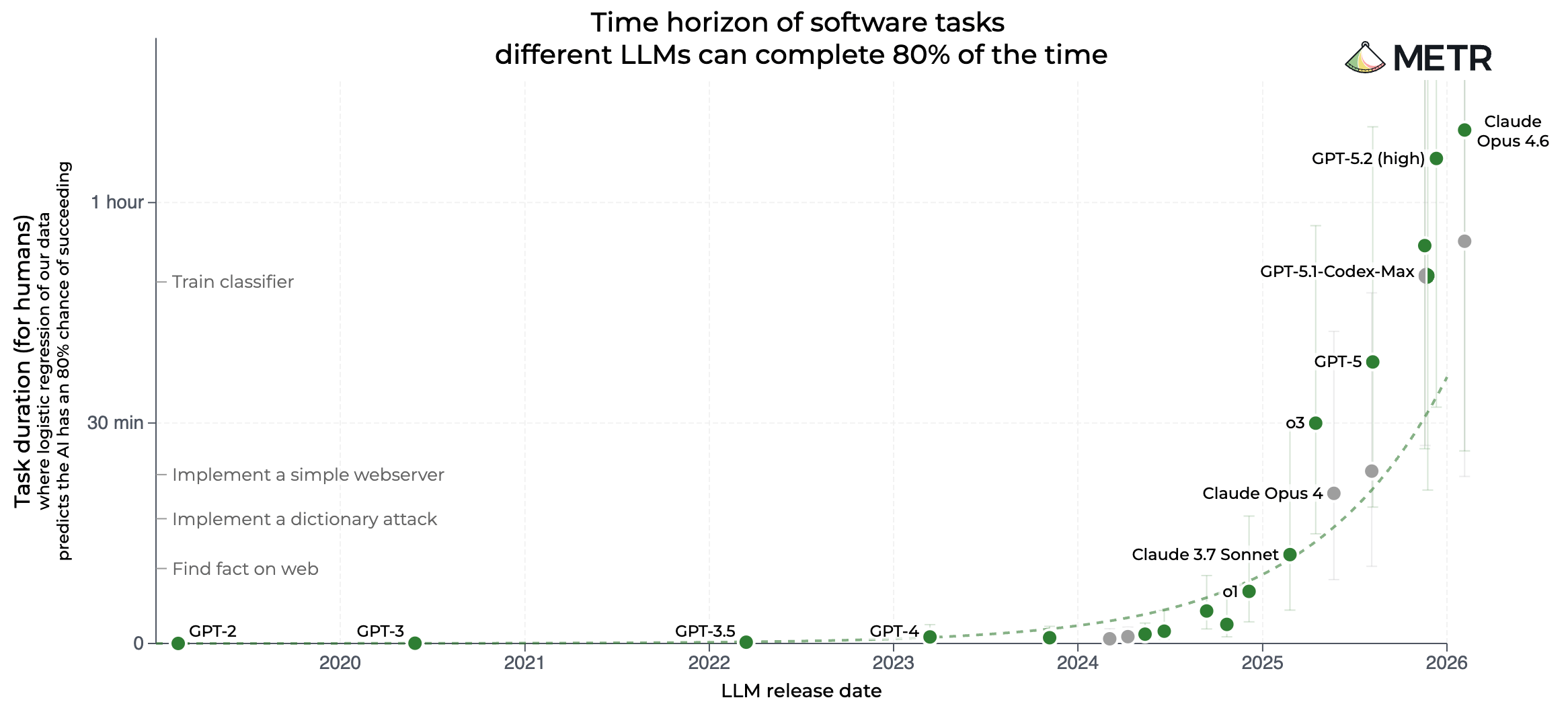

One of the most popular benchmarks in AI right now is the one from METR, which measures long-horizon task accuracy. In plain English, it measures the length of the longest task, in human hours, that an AI can complete by itself with 50% accuracy.

This is an extremely popular graph because it allegedly shows AI’s exponential improvements, letting AI Labs puff out their chests about how fast “the world is changing”.

Pretty incredible, right? Well, now take this chart, head to your closest CFO, and tell them straight to the face that you are going to automate their {insert company department} with “super AI agents” that fail 50% of the time.

But wait, an AI that can solve work that takes humans 12 hours is more than you would need in most cases. Thus, if we increase accuracy, we may see a drop in duration, but we can still have an agent that works for several hours with insane accuracy, correct?

METR does that for you, increasing the threshold to 80% accuracy, which sounds better. But when you do that, this happens:

Looks identical and super exponential, right? Problem solved!

Well, not quite. Look at the y-axis: now the threshold is no longer 12 hours; it’s 1 hour and 10 minutes, a ~90% decrease in “long-horizoness” in exchange for those extra 30 percentage points of accuracy.

But here’s the thing: unlike the average 20-year-old San Francisco tech bro, you probably know that even 80% is completely unacceptable accuracy for the enterprise.

There’s a reason companies ask cloud service providers for 99.999% uptime (the famous five nines of reliability). As a reference, that’s around 5 minutes of downtime per year.
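The five-nines figure is easy to check with back-of-the-envelope arithmetic. This is a minimal sketch that assumes a 365-day year; `downtime_minutes_per_year` is an illustrative helper name, not part of any library.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def downtime_minutes_per_year(uptime_percent: float) -> float:
    """Minutes per year a service may be down at a given uptime percentage."""
    return MINUTES_PER_YEAR * (1 - uptime_percent / 100)


for uptime in (99.9, 99.99, 99.999):
    minutes = downtime_minutes_per_year(uptime)
    print(f"{uptime}% uptime -> {minutes:.1f} minutes of downtime per year")
```

At 99.999%, the result is a little over 5 minutes per year, which is where the “around 5 minutes” figure comes from.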

One could say agents don’t require as much uptime as your cloud service provider. However, I recommend you do the math.

Enterprise automation means an AI that performs the same task repeatedly. Sometimes thousands of times per day. Say it has 80% accuracy. Sounds pretty good.

But if you have that agent execute a task 10 times in a row, the probability that all 10 are successful is… 10.7%. If you increase that number to 50 times (an average AI agent in an enterprise has to execute a task thousands of times), that percentage drops to… 0.0014%. The odds are roughly 1 in 70 thousand.

There’s a reason companies demand four or five nines of reliability: an agent with 99.99% accuracy has a 99.5% chance of completing a task correctly 50 times in a row. Now that’s deployable, not whatever the guys in the graph are plotting.
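If you want to do the math yourself, here is a minimal sketch. It assumes every run is independent and has the same accuracy, which is a simplification (real failures tend to cluster), and `streak_success_probability` is an illustrative name.

```python
def streak_success_probability(accuracy: float, runs: int) -> float:
    """Chance that `runs` independent attempts all succeed.

    Assumes each attempt succeeds with the same probability `accuracy`
    and that attempts do not influence one another.
    """
    return accuracy ** runs


for accuracy, runs in [(0.80, 10), (0.80, 50), (0.9999, 50)]:
    p = streak_success_probability(accuracy, runs)
    print(f"accuracy {accuracy:.2%}, {runs} runs in a row -> {p:.4%}")
```

The three cases reproduce the figures above: about 10.7%, about 0.0014%, and about 99.5%. The takeaway is that per-task accuracy compounds, so small gaps from 100% become enormous once the task repeats.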

And worse, in AI, you also pay for the mistakes, as you’re metered by the token and charged independently of the outcome.

When we labeled both graphs together, the investment banking executive audibly laughed. Like, it’s so evident how full of shit this industry is when it comes to presenting its accolades.

But let’s now focus on one of the biggest misunderstandings, by far, in enterprise AI. Or as I call it: the agent fallacy, because most “agents” aren’t really agents at all.

Agentic vs workflow.

If I had to choose, this is the most important lesson of the entire presentation: how to understand agentic vs non-agentic implementations so that you can sniff out bullshit from consultants (or your own employees) when you see it, because it literally separates success from failure in most implementations.
