THEWHITEBOX

TL;DR

Welcome back!

This week, the steep escalation in US Gov vs. Anthropic drama takes most of the industry’s interest, so we are compelled to cover it.

But we also have several exciting news items on hardware, including how Broadcom has raced ahead of NVIDIA in one particular domain, OpenAI’s huge $110 billion round, the release of Nano Banana 2, and the best SVG model in the world.

At the end, I’ll also share a much-needed updated review of the state of the AI trade, capturing my beliefs on the future of several top AI stocks, and how my own picks have fared (really well, I have to say).

Enjoy!

WARFARE

Anthropic Gets Defined as a Supply Chain Risk

Anthropic has become the center of a high-profile dispute with the US government.

The company refused Pentagon demands to allow its AI to be used without restrictions in military operations, particularly for mass domestic surveillance and fully autonomous weapons.

The argument seems to revolve around the second point: that the Pentagon was seeking Anthropic’s approval for the use of Claude in fully autonomous weaponry.

But how did all this start? It became an issue when it was “uncovered” that Claude had been used during the capture of Maduro (something Anthropic already knew and has only taken a stand against now that it is public, but I digress).

This week-long standoff led President Donald Trump to order all federal agencies to stop using Anthropic’s technology and the Department of War to label the company a “supply chain risk,” effectively severing existing government contracts worth around $200 million.

Specifically, Trump said the following:

Being dubbed a supply chain risk forces all companies that work in some capacity for the government to stop working with Anthropic, effectively a death sentence for the company (if truly enforced, which I don’t think it will be).

The reasons why things got out of control are hard to know, but according to the Washington Post, things escalated after a particular response from Dario: when put in a life-or-death situation (specifically, a ballistic missile being sent to the United States), Dario answered that the Pentagon should contact Anthropic to receive permission to act (he said, “you could call us and work it out”).

Anthropic plans to challenge that designation in court and defends its ethical constraints as essential.

In the meantime, that contract has been secured by, you guessed it, OpenAI.

TheWhiteBox’s takeaway:

Just for the record, I’m no idealist, I’m sorry. Thus, you may not like what I’m about to say, but I hope you guys read me because you want honesty and, above all, want to understand how things really are, not how we would like them.

Let me be clear from the start: I don’t think things will end badly, and there’s a big chance this is all performative, helping Anthropic avoid the obvious PR hit of clearly not walking their own talk about ethics while the company transitions into one that, despite labeling itself as anti-war, will now accept its models being used for war without ‘buts or maybes.’

Nonetheless, they already have a history of backtracking on commitments. They said they would never take money from Middle East dictators, and they eventually did.

Besides, I don’t understand the political framing this ‘fight’ is getting; the Democrats would have demanded the exact same even if they were much less vocal about it. If there’s something that unites both parties, it's national security, which is all that this is about.

Yes, you’re seeing some Democratic senators like Mark Kelly trying to score political points (we’ll probably see Republican senators doing the same if they feel it suits their individual political careers), but you would be extremely naive to assume Democrats would have handled this any other way.

And to be clear, while I understand Anthropic’s claims, I mean, the naivety is appalling, because what did you expect? If you get in bed with the US Government (and Anthropic did so willingly by bidding for the contract), you know that the Government will do some unpopular things with your product; it’s just how the world works.

Anthropic knew Palantir used Claude extensively, so they, of course, knew this was happening and did shit-all about it until it was made public.

And even if we give them the benefit of the doubt, did you really not think this through when you bid for DoW contracts? Couldn’t you predict that the Pentagon would, of course, demand full control over the technologies it buys into, as it has always done?

It’s one of two things:

Either Anthropic’s leadership is as naive as a lamb and completely unsuited to make decisions on such an important technology,

Or it’s all performative and, in reality, this ethics-above-all-else narrative they have going on is just, well, an extreme version of virtue signaling (again, they bid for this contract and nobody forced them to), and now they’ve found themselves between a rock and a hard place because their claims do not align with the facts, and they don’t know how to act.

In my view, it’s probably both. But all in all, it’s a real shame this is happening.

Yes, governments should not be treating their companies this way, and you should be able to enforce the terms of service of your own product.

But no, such rules don’t apply if your customer is the Government (they never did, they never will), and it’s not like Anthropic is a fantasy-land, principled company that suddenly realized the Pentagon was doing stuff against the Terms of Service of Anthropic’s product and is being hammered ‘just because’.

They willingly entered that contract knowing (I presume) how the world works. If not, they are even dumber than their chatbot, and that’s saying something.

All in all, I remain optimistic that it’s all mostly performative.

Trump surely doesn’t want to take it as far as “killing” a $380 billion private company because, well, midterms (although it’s fair to say none of the AI companies are popular these days), and Anthropic does not want to be blacklisted and, if not for the publicity, would have happily taken the $200 million and looked the other way.

HARDWARE

Broadcom’s Huge News: Industry-First 3.5D Shipments

Broadcom, a key semiconductor supplier and one of the largest companies in the world by market capitalization, has announced the first-ever shipments of 3.5D-packaged chips, meant for consumer-end hardware.

This is a big deal, as such advanced packaging was not expected before 2027. But what does that actually mean?

When we think about hardware like a GPU nowadays, it has two components: one or more compute chips and one or more memory chips that are tightly coupled.

But originally, a chip was just that, a single silicon die in a plastic or metal package. For the longest time, to improve performance, Moore's Law did the dirty work by reducing the size of each transistor (computer chips are just a bunch of transistors grouped into logical circuits to perform arithmetic). As a good rule of thumb, compute power grew in proportion to the number of transistors you could fit on the die, so smaller transistors meant more compute (2x more transistors meant ~2x more compute power).

However, over the years, it became increasingly difficult to reduce transistor size. Simultaneously, the obvious alternative of “just making chips larger to fit more transistors" wasn't an option either, because manufacturing these chips is a very complicated and easy-to-fuck-up process where there's a sizeable chance you get a ‘defect’ that kills the chip.

Defects on a wafer are roughly spread across the area, so the bigger the die, the more opportunities there are for a defect to land somewhere that breaks it.
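To put rough numbers on that intuition, here is a minimal sketch using the simple Poisson yield model, Y = exp(-A · D0), a textbook approximation rather than anything a specific foundry publishes. The defect density and die areas are illustrative assumptions, not real process data.

```python
import math

def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies expected to have zero killer defects (Poisson model)."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)

# Illustrative assumption: 0.1 killer defects per cm^2.
D0 = 0.1

for area in (1.0, 4.0, 8.0):  # hypothetical die areas in cm^2
    print(f"{area:>4.1f} cm^2 die -> {poisson_yield(area, D0):.1%} expected yield")
```

Under these made-up inputs, expected yield falls from roughly 90% for the smallest die to under half for the largest, which is the whole problem with "just make the chip bigger."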

So, if we can't make chips larger and we can't make transistors smaller, how do we scale? And the answer was (is) advanced packaging.

The point of advanced packaging is to take individual chips, which we now call ‘chiplets’, and tightly connect them in a single package, de facto behaving as a single, unified chip. Here, the key is how tight that connection is, which is naturally proportional to the interconnect surface area.

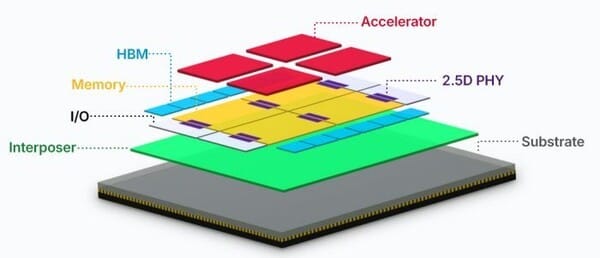

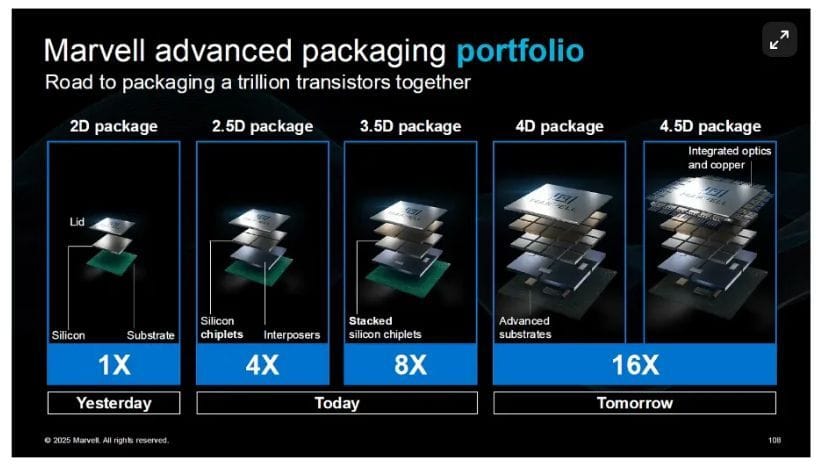

This leads us to different packaging levels that Marvell shows neatly below: the traditional 2D package (a substrate, a chip, and a lid), and how that is evolving toward more complex packaging approaches, such as 2.5D and 3.5D (or even 4.5D, but that will take years).

Vertical stacking is better than side-to-side because the interconnect area is much larger, and also interconnections are much shorter and, thus, more power efficient (more interconnectivity per watt).
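A toy geometric sketch of why the interconnect area argument holds: side-by-side dies can only talk across a shared edge, while stacked dies can talk across their entire overlapping face. The die size, connection pitch, and number of edge rows below are hypothetical values chosen for illustration, not figures from any real product.

```python
def edge_connections(side_mm: float, pitch_um: float, rows: int) -> int:
    """Connections along one shared die edge, `rows` deep (side-by-side)."""
    per_row = int(side_mm * 1000 // pitch_um)
    return per_row * rows

def face_connections(side_mm: float, pitch_um: float) -> int:
    """Connections across the whole overlapping face (stacked)."""
    per_side = int(side_mm * 1000 // pitch_um)
    return per_side * per_side

# Hypothetical: 10 mm square die, 50 um pitch, 10 rows of edge connections.
side, pitch = 10.0, 50.0
print("side-by-side:", edge_connections(side, pitch, rows=10))
print("stacked:     ", face_connections(side, pitch))
```

Even at the same pitch, the stacked case scales with the square of the die side while the edge case scales linearly, so the gap widens as dies grow.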

And you may wonder: where are we in that image? Right now, our most advanced accelerators, like NVIDIA’s Blackwell and the upcoming Rubin GPUs, use 2.5D packaging, meaning we have several chips (up to four with the upcoming Rubin Ultra), all connected side-to-side, which gives us the 2.5D nomenclature.

Interestingly, current state-of-the-art accelerators also include memory chips stacked one on top of the other (High Bandwidth Memory chips), forming a 3D stack. However, it’s still a 2.5D package because semiconductor companies look at how the compute chiplets are arranged, not the memory, to determine the packaging level.

And with 3.5D, what Broadcom is announcing today, what we are getting is the first 3D-stacked compute chiplets package, one where chips are placed side-to-side and on top.

Why 3.5D? Well, because you have both 2.5D packaging (chips are side-to-side) and another layer of chips stacked on top, as shown in the thumbnail.

Importantly, this connection is done using hybrid bonding, a relatively new technique that connects chips vertically using copper pads across the entire chip.

The advantage? As hinted earlier, we can scale compute power tremendously without enlarging the package footprint (a larger package means more defects and higher power requirements).

Adding to this, Broadcom is selling this to other companies in a very convenient fashion: they simply need to design the compute cores (the red dies), and Broadcom designs the bottom chips custom to the customer's needs.

This alleviates the pain for chip designers by outsourcing all the complexity of communication and packaging to Broadcom/TSMC (Broadcom designs, TSMC manufactures and packages), while allowing them to design the accelerator to their specific needs and AI models.

And besides all this, this package includes the first-ever 2nm compute chiplets, meaning Broadcom will allow customers (e.g., Google, Meta, et al) to design their chips using 2nm transistors, which is pretty incredible and ahead of NVIDIA’s own roadmap for both technologies (2nm transistors and vertical packaging).

TheWhiteBox’s takeaway

In short, advanced packaging has become our main hope for scaling compute at the package level (you can, of course, scale compute by connecting many GPUs, servers, and even data centers).

And for the next generation, Broadcom seems to be the first to arrive at the party. Whether that materializes into revenue, too early to say, but it sure as hell has made me inclined to analyze this stock in more detail, and perhaps even invest (I’ll address this more in the portfolio section).

HARDWARE

HBF, Closer to Reality

SK Hynix and Sandisk continue to formalize a partnership that could meaningfully change the AI memory landscape.

As I explained in more detail above, the AI semiconductor industry, and AI by extension, is placing much of its scaling hopes on vertical GPU chip stacking (literally placing chips on top of each other), which maximizes capacity per unit of surface area.

Currently, AI’s most advanced accelerators (GPUs and TPUs) already have vertical memory chips, the famous High Bandwidth Memory (HBM), which is sending DRAM companies’ profits to the moon.

In fact, Samsung is widely expected to be the world's most profitable company in nominal terms in 2027, even ahead of one of its key customers, NVIDIA.

Source: Morgan Stanley

And as I was saying, the reason for Samsung's (and Hynix's and Micron's) sudden burst in revenues and profits is HBM. Inside each GPU, in addition to the GPU chiplets (what NVIDIA et al design), you have several groups of HBM chips stacked on top of each other.

By doing this, you maximize memory capacity (the amount of GBs per GPU) without blowing up the entire package size, which would be a literal yield nightmare (much more likely that the assembly process fails and wastes an entire GPU).

Instead, you keep a lot of capacity in a comparatively small surface area and very close to the chiplets, which is also crucial for performance.

As you may already be guessing, the idea behind HBF (High Bandwidth Flash) is to take the next layer of memory, flash memory (also known as fast storage), and stack it vertically in the same way, so that one can pack a much larger amount of flash memory into every single AI server.

Why? Well, if you haven’t noticed from Sandisk’s skyrocketing stock performance over the last year, flash memory (the type of memory your smartphone has a ton of) is heavily demanded in AI servers because memory capacity demands in modern AI workloads are simply massive.

The primary reason is not only that AI models are huge, but that their cache is “huger.” That is, as the overwhelming majority of modern AI models are autoregressive Transformers, these are stateless machines, meaning that, for them to remember context during inference (what was told to them in the input and what the model itself said earlier in the conversation), we have to store, besides the model, what’s basically the entire conversation in memory.

In reality, we don’t actually store the literal conversation but references to that past context. The reason is that this is the only way the model can remember the past. The problem? This memory is not compressible; the model stores it, for lack of a better term, word by word.
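To get a feel for the numbers, here is a back-of-the-envelope sketch of how this cache (commonly called the KV cache, since each token stores a key and a value vector per layer) grows with context length. The model shape below is a hypothetical configuration chosen for illustration, not the spec of any particular model, and real deployments differ in precision and layout.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Approximate cache size for one conversation."""
    # 2x because every token keeps one key and one value vector per layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens

# Hypothetical model: 80 layers, 8 KV heads of dimension 128, 16-bit values.
per_token = kv_cache_bytes(1, layers=80, kv_heads=8, head_dim=128)
long_chat = kv_cache_bytes(131_072, layers=80, kv_heads=8, head_dim=128)

print(f"per token:   {per_token / 1024:.0f} KiB")
print(f"128k tokens: {long_chat / 1024**3:.0f} GiB")
```

The cost is linear in the number of tokens, so under these assumptions a single long conversation can occupy tens of GiB before counting the model weights, and a server handles many conversations at once.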

And what does all this mean? If Hynix and Sandisk deliver, this would be a truly differentiating product that would be highly desirable for AI server companies like NVIDIA or AMD. Very cool stuff that, of course, is highly bullish for both.

But will they deliver? They seem committed, which is the first step in achieving success.

HARDWARE

TPU Orders to Intel

We seem to be seeing more and more evidence that at least some of the TPUs built in 2027 will be manufactured and packaged at Intel’s foundry, which, if true (I’m not sure there’s official confirmation of this), would mean my 2026 prediction that Intel lands at least one big customer this year has already come true.

It seems Intel would be working on the lesser version of TPUv8, with only one compute die, while the more powerful one will be built and packaged by TSMC.

But as an Intel shareholder, it’s still progress!

VENTURE CAPITAL

OpenAI Raises $110 Billion

On February 27, 2026, OpenAI announced $110 billion in new financing at a $730 billion pre-money valuation (about $840 billion post-money).

The round’s headline backers are Amazon ($50B, structured as $15B up front plus $35B later), SoftBank ($30B), and NVIDIA ($30B), and OpenAI said additional investors may join as the round progresses.

A central angle of the raise is infrastructure: OpenAI framed the funding as support for rapidly scaling compute and deployment as frontier AI moves from research into broad use.

Alongside the financing, OpenAI also announced a strategic partnership with Amazon that makes AWS the exclusive third-party cloud provider for “OpenAI Frontier,” and it highlighted expanded access to NVIDIA inference compute.

TheWhiteBox’s takeaway:

Amazon’s involvement is, by far, the most interesting thing about this release. OpenAI knows this, which is why they released a separate announcement just for them.

I feel the urge to highlight two things about this particular partnership.

The first is this project they’ve announced, which they refer to as the ‘Stateful Runtime Environment,' a project they will work on with Amazon to provide customers with, in layman’s terms, “an AI that remembers.”

I don’t know why they went with such weird jargon, but the point is that ‘stateful' here means the agentic system keeps track of past interactions, tool calls, and long-horizon memory snippets so that it progressively “evolves” with you. It's basically an enterprise-grade offering similar to OpenClaw (more on this in our Leaders segment in a few days).

It’s also notable that a lot of the deal hinges on OpenAI adopting Amazon’s Trainium chips (up to 2GW of capacity) and on AWS being the sole cloud provider for OpenAI’s enterprise solution, Frontier.

This is not only bad for Microsoft for obvious reasons, but here’s my thinking: Microsoft’s “AI play” over the last few years has hinged on them being the shadow behind OpenAI. If OpenAI grew, so did Microsoft. But OpenAI is, by now, basically a separate actor, with deals across all three big clouds.

This, added to Microsoft's poor showing in their ASIC efforts (Maia is progressing, but it’s unproven), an even more mediocre showing of their AI products like Copilot, and their basically “no show" in their own AI model efforts, makes me feel not too good about their ‘AI strategy’.

And don’t get me started with the fact that their business model is, amongst the Big Tech companies, the most exposed to AI disruption by far (Google was more exposed but did its job and reinvented itself).

I mean, the fact that Anthropic’s Claude Cowork eats Copilot alive in terms of Excel capabilities (it’s not even close, actually) is a huge embarrassment.

And the fact that OpenAI and Microsoft felt compelled to issue a joint statement after the Amazon deal tells us all we need to know: Microsoft is nervous, and, as incredible as it may sound, could soon be seen as a laggard.

Circling back to the deal, as always in these “AI agreements", cash is mostly made up, in the sense that Amazon is committing liquidity to OpenAI in exchange for securing long-term contracts for their infrastructure into the future.

To Amazon, this is mostly about giving its cloud business a refreshed look, which, despite being the largest, has long been seen as a laggard in the AI race against Microsoft and Google.

With this investment, Amazon now owns 6% of OpenAI and double-digit percentages of Anthropic, securing a preeminent place in both.
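A quick sanity check of that 6% figure, using only the numbers reported above. This is a simplified sketch that assumes, purely for illustration, that each backer's full commitment converts to equity at the post-money valuation and that no stake was held before the round; the actual deal terms may differ.

```python
def post_money(pre_money_bn: float, raise_bn: float) -> float:
    """Post-money valuation = pre-money valuation + new capital."""
    return pre_money_bn + raise_bn

def implied_stake(investment_bn: float, post_money_bn: float) -> float:
    """Ownership fraction bought by an investment at the post-money valuation."""
    return investment_bn / post_money_bn

valuation = post_money(pre_money_bn=730, raise_bn=110)

for name, amount in (("Amazon", 50), ("SoftBank", 30), ("NVIDIA", 30)):
    print(f"{name}: {implied_stake(amount, valuation):.1%}")
```

Under those assumptions, $50B on an $840B post-money valuation works out to just under 6%, consistent with the figure above.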

Furthermore, with this new commitment, investors worried about liquidity in the AI trade will breathe a sigh of relief, and, together with the huge adoption of both AI labs' coding/agentic products (Codex and Claude Code, respectively), things are looking quite good for the bulls.

Naturally, this investment and OpenAI's entire future depend on how well their ad business works.

Despite having 900+ million users, only a tiny fraction are paying subscribers, so OpenAI needs to monetize the remaining 880 million users in some way to justify a valuation without precedent, if not for SpaceX, in the history of private companies.

What’s next? Quite possibly, we are soon going to see their IPO filing, which could arrive as soon as Q3 this year, and at a valuation I would be very surprised to see below one trillion.

This may sound like an outrageous valuation for a public market debut, but SpaceX is going to throw the Overton window wide open, with a $1.5 trillion valuation coming in the next few months.

Will OpenAI be a good investment? It’s too soon to tell, but my inclination, for now, is a hard no.

SVGs

QuiverAI Steals the SVG Spotlight

You would be surprised to learn that having AIs create SVGs is an active area of frontier research. AI models struggle with it a lot, and it has thus become a primary benchmark for measuring progress.

An SVG is a scalable, resolution-independent image format that stays sharp at any size and can be styled or animated with code.
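For readers who have never looked inside one, an SVG is just text: a small XML document describing shapes rather than pixels. Here is a minimal sketch that builds one by hand and confirms it parses as XML; the `circle_svg` helper and its defaults are made up for this example.

```python
import xml.etree.ElementTree as ET

def circle_svg(size: int = 100, color: str = "tomato") -> str:
    """Return a minimal SVG document: one circle centred in a square canvas."""
    half = size // 2
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {size} {size}">'
        f'<circle cx="{half}" cy="{half}" r="{half - 5}" fill="{color}"/>'
        "</svg>"
    )

svg = circle_svg()
root = ET.fromstring(svg)  # parses cleanly because SVG is plain XML
print(svg)
```

Because the `viewBox` defines coordinates rather than pixels, the same file renders sharply at any size. It also hints at why models struggle: they must get the geometry right purely by writing numbers as text.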

To prove this point, when Google dropped Gemini 3.1 Pro last week, it chose SVG as one of the use cases to show off. However, its dominance didn't last long.

A start-up called QuiverAI has launched the best SVG model the world has ever seen, completely stealing the spotlight and securing the first spot in the SVG arena, and by quite a distance from the rest.

I’ve tried the product (you can too here), and it's quite the user experience, as you see the model slowly create your SVG, and the results are actually very good.

TheWhiteBox’s takeaway:

Is this going to change the world? Of course not. It’s a model that does SVG and nothing more. But it shows how much low-hanging fruit there is in having AIs commit to a single task.

It’s the depth vs. breadth story all over again: general-purpose models like ChatGPT are great but get just as greatly destroyed by models that specialize in a task, achieving a level of depth of expertise that others can’t match.

This is why, again and again, I feel compelled to remind executives that the future of enterprise is fine-tuning general-purpose models to their tasks, not using a one-size-fits-all approach with Opus 4.6 slightly prompted in a different way every time.

This is why open-source is so important: it allows companies to train models on their proprietary data without worrying that OpenAI is stealing it.

IMAGE GENERATION

Google Drops Nano Banana 2

Nano Banana 2 (also called “Gemini 3.1 Flash Image”) is Google DeepMind’s new image generation model that aims to combine Nano Banana Pro’s higher-quality, more controllable image creation (including better subject consistency and instruction following) with the much faster iteration speed associated with Gemini Flash.

Google says it can use stronger real-world knowledge (including real-time info and images from web search) to produce more accurate depictions, and it improves text rendering within images, including translation/localization.

It’s rolling out across Google products, including the Gemini app, Google Search, and Google Ads, alongside ongoing work to help identify AI-generated images using SynthID and C2PA Content Credentials.

TheWhiteBox's takeaway:

Google is pulling ahead of the previous state-of-the-art, which was... them. Nano Banana models are just on another level compared to the rest, and this only makes it more evident.

I’m beginning to realize that Google has a big opportunity to sweep the entire creative market, as it feels quite unlikely that others can match Google's scale and data quality across YouTube and other data sources.

This also speaks to the fact that Reinforcement Learning, the training method that’s all the buzz right now, is starting to clearly specialize labs into different areas. This is no surprise considering that RL isn’t that much about generalization (creating a model that's good at many things) but depth (a model that becomes great at one).

Therefore, we’ll start to see meaningful, observable quality differences among models, telling me that, without a doubt, the future of AI is multi-model; if you’re using only one, you’re definitely missing out.

My Thoughts

I would say this is a very sad week, given all the bad things we’re seeing. But it was only a matter of time for the AI industry to come to terms with the fact that, if they were truly building the most powerful technology in the last 40 years, this technology was inevitably going to end up being diffused into debatable applications.

But what’s striking to me is the general naivety of the average AI researcher/lab. For all the genius they possess, they are truly incompetent at interpreting how the world works. As a snob would tell you, they are terrible at game theory.

For those reasons, I predict that, over time, AI Labs will be slowly transferred to leadership that isn’t idealistic but pragmatic, who understand the trade-offs of their position. No, you can’t be a CEO of an “AGI Lab” and not betray, in some shape or form, the beliefs you claim to uphold.

Many Wehrmacht officers and soldiers were horrified by the atrocities they committed or witnessed under the Nazis during World War 2. And they still pulled the trigger, making them murderers nonetheless.

There’s a reason why Wilhelm Keitel and Alfred Jodl, two of the top military officers in the Third Reich, were sentenced at the Nuremberg trials and hanged despite “following orders”. It was a reminder that there is no excuse and you have a choice not to do bad things. They could have decided not to sign the Commissar and Commando orders.

But they did.

AI is going to be used in bad ways, and AI idealists are in for a rude awakening.

And now, moving on to the last section, I discuss the overall performance of my AI stock portfolio, which has been much better than I would have ever expected, and also discuss potential next moves on the ASIC trade.

For business inquiries, reach out to me at [email protected]