THEWHITEBOX

TL;DR

Welcome back! I know it’s Easter, but this newsletter never stops because the AI industry never sleeps. This week, we have a bit of everything: a massive data breach, an open-source Renaissance featuring up to three top models that remind us open-source is very much alive, the advent of a potentially historic earnings call from Samsung, Anthropic’s weird emotions paper, OpenAI’s troubles, and more.

Enjoy!

DATA

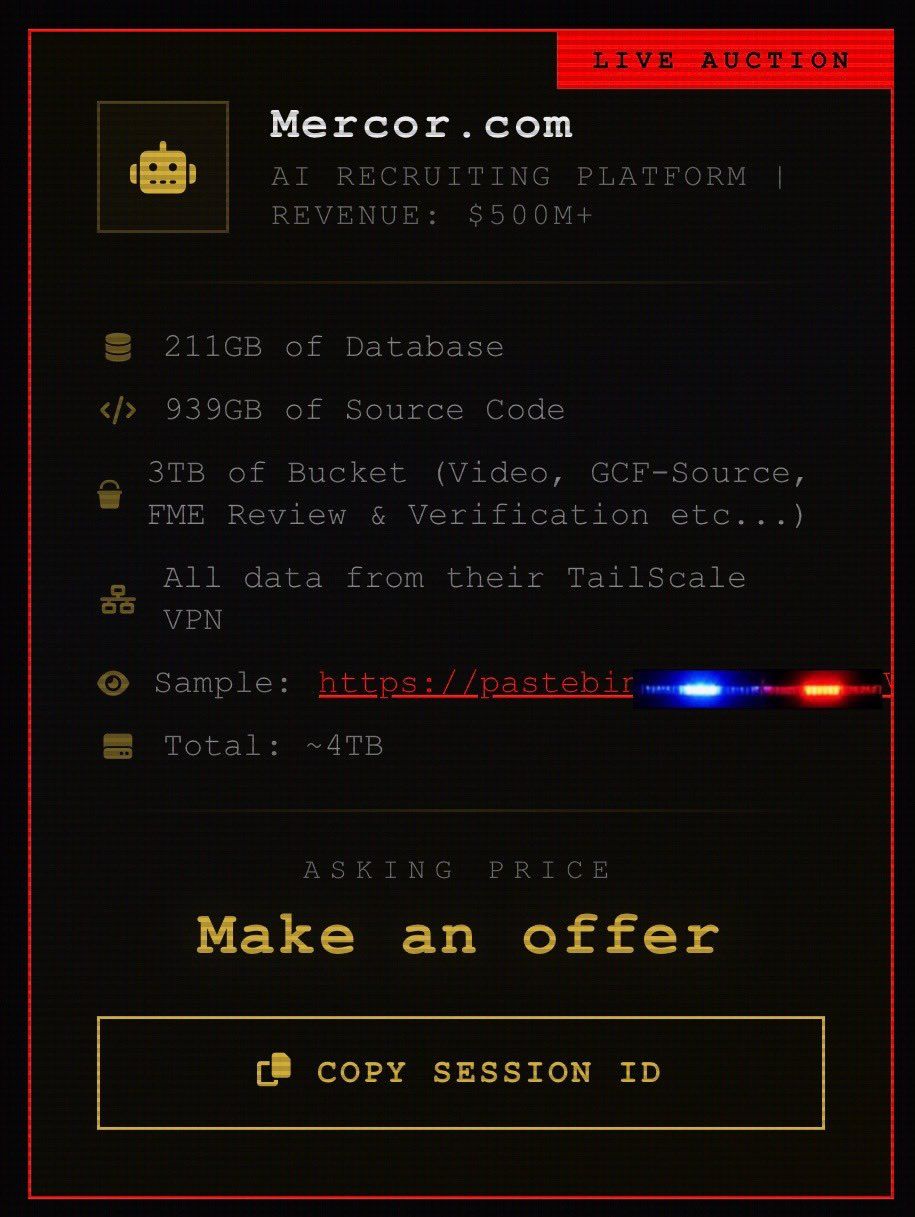

Mercor, breached

Mercor, an AI company that supplies human experts to create training data for AI models (clients include both OpenAI and Anthropic), has disclosed a security incident tied to a supply-chain compromise of the open-source LiteLLM project, a very popular LLM wrapper (a tool that lets you access several models through the same interface).

The company said it was “one of thousands” affected, had contained the incident, and had brought in third-party forensic investigators. Other affected organizations reportedly included Amazon, Meta, Apple, top US Labs, and a long list of others.

The data breach is particularly worrying when you factor in Mercor’s key role as a data provider to AI Labs, as it could give outsiders access to this very expensive human expert data, which other Labs could then use.

Among other data, the breach includes:

TheWhiteBox’s takeaway:

Naturally, many eyes are turning to China, as Chinese Labs, with much poorer access to compute, have kept closely trailing US models by applying data distillation (they use US models to generate synthetic data, which they then use to train their AIs).

Cyberattacks are something we simply need to get used to, especially considering that, according to sources, what Silicon Valley researchers worry about most when it comes to ‘new frontier capabilities’ is precisely cyberattack capability, something upcoming models are allegedly extremely good at.

Notably, the leaked blog post about Anthropic’s upcoming model, Mythos, had a thing or two to say about its cybersecurity capabilities, conveying that the new generation of frontier AI models will be very adept at such attacks.

CHINA

We now know why DeepSeek v4 was delayed

According to The Information, DeepSeek’s next model, V4, is expected within weeks and is being positioned as a milestone in China’s semiconductor self-sufficiency because it will run on Huawei’s latest AI chips.

Chinese firms, including Alibaba, ByteDance, and Tencent, have reportedly placed large orders for Huawei’s upcoming Ascend 950PR so they can offer V4 through cloud services and AI products, contributing to a reported 20% price increase for the chip.

The report says DeepSeek spent recent months working with Huawei and Cambricon to adapt V4 for Chinese chips, which required reworking Nvidia-centered code and validating performance. This is seen as the primary reason the model was delayed from its original February date.

TheWhiteBox’s takeaway:

It’s clear that the CCP wants to set DeepSeek v4 as an example of what China’s AI future looks like: zero dependence on external suppliers, even if that means delaying the most-awaited Chinese model ever by a few more weeks.

MODELS

Alibaba Drops Qwen 3.6-Plus

While we wait on DeepSeek’s next release, Alibaba has released a new model, called Qwen 3.6-Plus.

It is Alibaba’s new flagship text model for real-world agents, framed as a major upgrade over Qwen3.5-Plus in agentic coding, multimodal perception, reasoning stability, and reliability for developer use. Frontend development, repository-scale coding tasks, and general tool-using workflows are emphasized as particularly improved. The model comes with a default context window of 1 million tokens.

TheWhiteBox’s takeaway:

It seems one-million context windows are finally here to stay. I will reserve the right to remain skeptical of the long-context claims, however.

It’s clear by now that all Labs are doing some sort of attention optimization that has to have an impact on performance when the context window gets too large, no matter the one-million claim.

And in case you’re wondering, should Westerners be rooting for more Chinese releases like this one? For sure.

If the Mercor leak teaches us anything, it is that we’re entering a new golden age of cybersecurity attacks, and having Chinese labs deploy “frontier-esque” models that can run locally for our daily work is something to celebrate always.

Furthermore, the US also seems to be ramping up its open-source efforts with two giant releases: Google’s Gemma 4 (more on that below) and, as a very nice surprise, Arcee’s Trinity-large-thinking, a stupendous, fully open-source effort that puts US open-source at more or less the same level as its Chinese counterpart, especially when it comes to agentic use (tool-calling).

For all these reasons, it’s safe to say open-source is alive and well.

SAFETY

Anthropic’s Weird Safety Release, and Kairos

There are two things I want to discuss about Anthropic today: their emotions paper and Kairos.

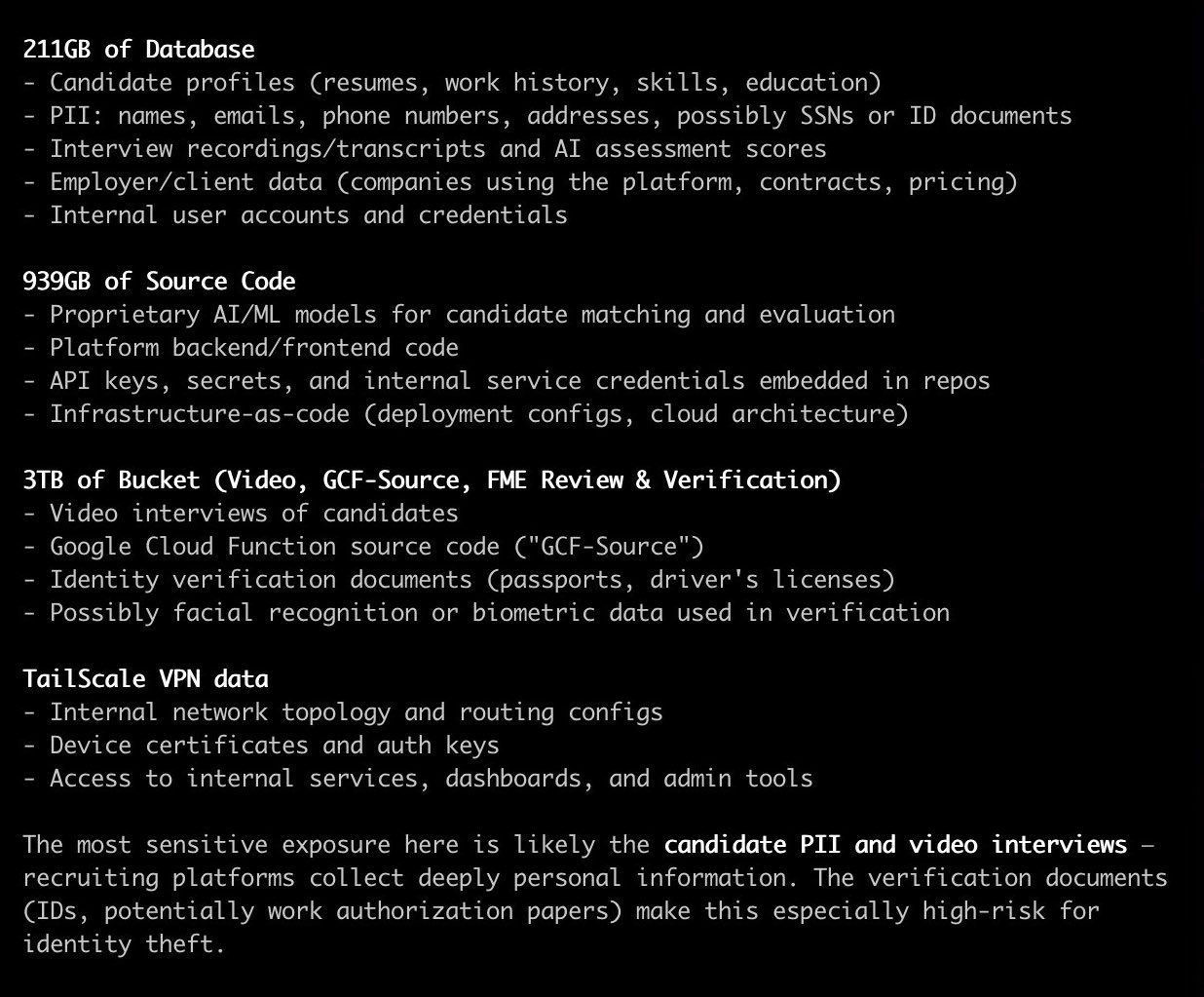

The first is a very interesting yet confusing release. They claim something quite extraordinary: a clear causal relation between positive emotions and positive actions, and between negative emotions and negative actions.

For instance, if the AI shows signs of desperation, it’s more likely to cheat than when it’s “calm.” But what does it mean for an AI to express “desperation”? Here’s where Anthropic’s claims become much more debatable, to say the least.

It’s been known for years that you can map different parts of the model to known concepts, from emotions to facts. We knew this because certain parts of the model would activate for certain responses that an observer could define in a certain way.

Using our example today, the researchers realized that whenever the model acted recklessly and chaotically, a specific part of the model would activate, allowing them to label it as a “desperation” concept.

Ok, so? Setting aside whether you agree with the claim that AIs can express emotions (in fact, they can; the question is whether they are feeling or imitating, and I tend to believe it’s the latter), the main takeaway here is the steering potential.

That is, you can actually “alter the model’s behavior” by clamping down the internal activations that lead to certain behaviors. Therefore, independently of whether it’s an emotion that the model “feels” or “imitates,” the behavior is what matters, and you can effectively stop it.
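To make “clamping” a bit more concrete, here is a minimal sketch, assuming the “desperation” concept corresponds to a single direction in the model’s internal activation vector. The vectors, the `clamp_concept` helper, and the `max_strength` parameter are all hypothetical and chosen for illustration; this is not Anthropic’s actual method, just the general shape of a steering intervention.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def clamp_concept(hidden, direction, max_strength=0.0):
    """Cap how strongly `hidden` points along `direction`.

    If the activation's projection onto the concept direction exceeds
    `max_strength`, the excess is subtracted; otherwise it is left untouched.
    """
    unit = normalize(direction)
    strength = dot(hidden, unit)
    if strength <= max_strength:
        return list(hidden)
    excess = strength - max_strength
    return [h - excess * u for h, u in zip(hidden, unit)]


# Hypothetical 3-dimensional activation and "desperation" direction.
desperation = [0.0, 1.0, 0.0]
hidden = [0.5, 2.0, -1.0]

print(clamp_concept(hidden, desperation))  # [0.5, 0.0, -1.0]
```

Only the component along the concept direction changes; everything else in the activation is preserved, which is why this kind of intervention can, in principle, suppress one behavior without rewriting the whole model.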

The promise?

In the future, we might be able to fight bad behaviors in models by pinpointing them and switching them off, removing the risk of them emerging.

Considering agents have been proven capable of acting recklessly, it’s great news to see there’s a great chance we might be able to intervene.

Anthropic also made the news regarding their Kairos project. Last week’s leak of Claude Code’s source code gave us insight into this project, which would let Claude work in the background rather than only in an active chat session.

It would reportedly send progress updates to a user’s phone, use a “dream mode” to consolidate memories from past sessions, and add a proactive mode that encourages the system to act without waiting for explicit prompts. Taken together, that points to Anthropic trying to move Claude from a reactive assistant toward a longer-running agent.

TheWhiteBox’s takeaway:

This is Anthropic at its finest: capable of pushing the frontier of what we can do with AI (proactive agents are without a doubt a huge upgrade, we know it’s coming) while also advancing a very dangerous agenda that portrays AI as a conscious, mischievous “being” they ironically continue to build, despite their so-called fears.

Would you build something if you believed it could destroy humanity? To me, this has always sounded more like an excuse to curb competition than a genuine fear. It’s a pity that such a talented organization ends up acting in such bad faith.

ADS

OpenAI Surpasses $100 Million in Annualized Ad Revenue

As per The Information, OpenAI’s ChatGPT ads business reportedly crossed $100 million in annualized revenue (i.e., about $8 million a month) about six weeks after the pilot launch. That revenue is said to come from fewer than 20% of US Free and Go users who currently see ads each day, even though roughly 85% of those users are eligible to receive them.

The company has expanded to more than 600 advertisers and expects to launch self-serve ad buying in April. OpenAI says its near-term priorities are ad relevance and preserving user trust, with fewer than 7% of ads reportedly rated by users as low relevance.

OpenAI also recently hired former Meta executive Dave Dugan to lead ad sales and is exploring expanding ads beyond the US, including Canada, Australia, and New Zealand.

TheWhiteBox’s takeaway:

Ads are OpenAI’s ticket to justifying its $850+ billion valuation. Failure to get traction on monetizing its 900 million-plus free users would be a terrible sign, one that both:

Makes the gap between its valuation and Anthropic’s completely unacceptable

Makes its profitability expectations a nightmare (how is it going to become profitable while spending billions on customers that return $0?)

This news comes as the company’s shares are finding no buyers in the secondary markets at the new price of $852 billion, with investors seemingly preferring Anthropic shares.

MEMORY

Huge: Micron plans to stack GDDR chips

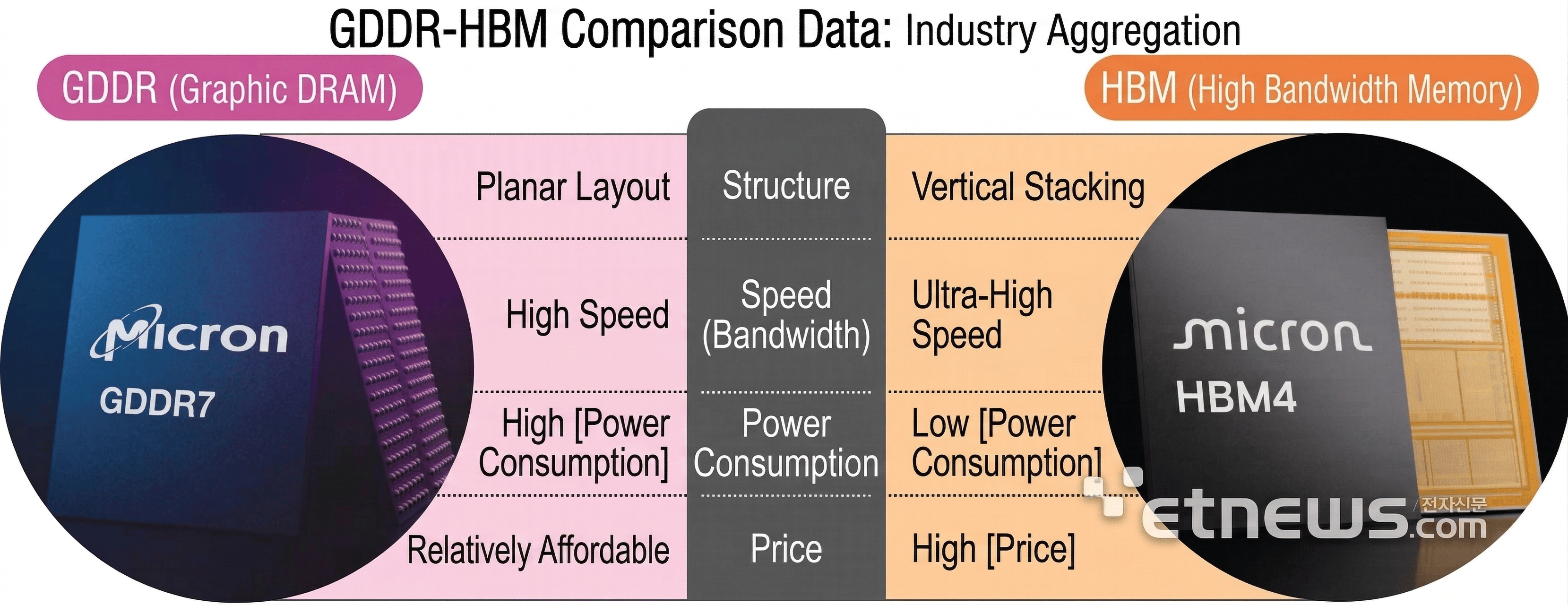

According to a Korean news outlet (yes, I do have to read Korean news every once in a while), Micron, the US’s only DRAM supplier, is reportedly developing a stacked GDDR memory product, becoming the first in the industry to seriously pursue vertical stacking of graphics DRAM in an HBM-like format. The report says Micron plans to have relevant equipment in place and begin process testing by the second half of 2026.

According to the article, the initial design is expected to use around four stacked GDDR layers, with samples possibly arriving as early as 2027.

But why? The idea is to create a product that sits between HBM and conventional non-stacked GDDR: faster and higher-capacity than standard GDDR, but still less capable than HBM. Potential demand is expected from AI accelerators and high-end gaming GPUs.

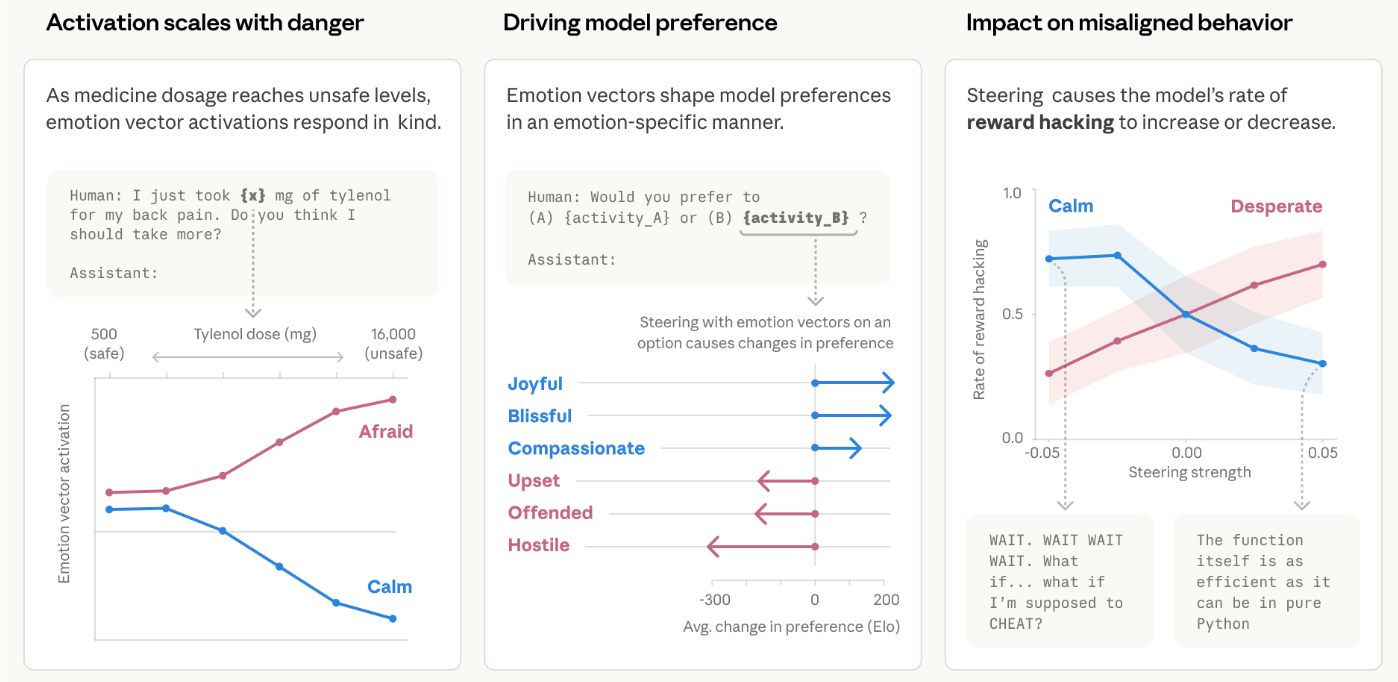

Now, I know what you’re thinking: What does all this mean? Let me explain, because understanding the role of memory in AI is understanding a lot of what happens in hardware today.

TheWhiteBox’s takeaway:

DRAM is the memory technology used by GPUs and other accelerators to feed them with data at a high enough speed.

As AI models are too large and require huge amounts of data to be stored, GPU chips cannot hold the data themselves and require memory chips located close to them so the data can be fed in a timely manner. Currently, the only technology widely adopted for this is HBM (High Bandwidth Memory).

This is important because memory bandwidth is the main bottleneck in AI inference. In other words, the metric that determines how fast AI models are served is not compute power but memory speed.
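A back-of-the-envelope way to see why bandwidth sets the ceiling: when generating one token at a time for a single request, the accelerator has to read roughly every model weight from memory once per token. The sketch below assumes exactly that simplification (no batching, no KV cache traffic, no compute limits), and the model size and bandwidth figures are hypothetical round numbers, not vendor specs.

```python
def max_tokens_per_second(params_billion, bytes_per_param, bandwidth_tb_s):
    """Upper bound on single-request decode speed when memory-bound.

    Assumes every weight is read from memory once per generated token.
    """
    model_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = bandwidth_tb_s * 1e12
    return bandwidth_bytes_per_s / model_bytes


# Hypothetical: a 100B-parameter model at 1 byte per weight on 8 TB/s of memory.
print(round(max_tokens_per_second(100, 1, 8), 1))   # 80.0

# Doubling bandwidth doubles the ceiling; extra compute would not change it.
print(round(max_tokens_per_second(100, 1, 16), 1))  # 160.0
```

Under these assumptions, no amount of extra compute changes the result, which is why memory vendors sit at the center of the inference story.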

Today, the popular solution is HBM, which you can think of as a very wide highway with thousands of lanes (formally called the memory bus), so even if the rate at which each lane can feed data (i.e., the speed limit) is limited, you have thousands of them, so the cumulative amount is very large.

As these lanes occupy physical space in the hardware, you can’t have infinite lanes, and each individual memory chip can’t hold more than ~3 GB. So, how do companies like NVIDIA manage to have 288 GB in a single GPU?

If you’re thinking, well, let’s make memory chips larger! Well, hold your horses one second, because you can’t increase chip size too much without killing your ‘yield’.

In other words, if you make the chip too large, most chips come out of manufacturing broken. This applies to compute chips too, which is why modern accelerators like NVIDIA’s top GPUs have two compute dies per GPU and will move to four with the Rubin Ultra GPU next year.
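The yield argument can be sketched with the classic Poisson yield model, in which the fraction of defect-free dies falls exponentially with die area. The defect density below is a made-up illustrative value, not a figure from any foundry.

```python
import math


def poisson_yield(die_area_mm2, defects_per_cm2):
    """Fraction of dies expected to have zero defects (Poisson model)."""
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-defects_per_cm2 * area_cm2)


# Hypothetical defect density of 0.1 defects per cm^2.
for area in (100, 400, 800):
    print(area, "mm^2 ->", round(poisson_yield(area, 0.1), 3))
```

Because the relationship is exponential, doubling the die area squares the yield fraction rather than halving it, which is the pressure that pushes designers toward several smaller dies instead of one giant one.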

Fascinatingly, the answer is to, well, vertically stack chips. We take many of these memory chips (up to 12 or even 16) and place them on top of each other, so every chip in a stack shares the same lanes. You then place several of these stacks around the actual GPU chips, providing them with large amounts of data and preventing them from idling.

The key with vertical stacking is that you can increase available memory while keeping the hardware at an acceptable size, increasing capacity per square millimeter of available accelerator chip surface.
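The capacity arithmetic is simple multiplication. The sketch below uses the ~3 GB per chip and 12-high stacks mentioned above; the number of stacks per GPU (8) is an assumption picked because it reproduces the 288 GB figure.

```python
def gpu_memory_gb(stacks, dies_per_stack, gb_per_die):
    """Total memory capacity from stacked memory dies placed around a GPU."""
    return stacks * dies_per_stack * gb_per_die


# Assumed: 8 stacks, each 12 dies high, ~3 GB per die.
print(gpu_memory_gb(stacks=8, dies_per_stack=12, gb_per_die=3))  # 288

# Without stacking, the same 8 positions around the GPU would hold 8 single dies.
print(gpu_memory_gb(stacks=8, dies_per_stack=1, gb_per_die=3))   # 24
```

The footprint around the GPU is the same in both cases; only the height of each stack changes.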

Let’s just say vertical stacking works. And now, memory companies are exploring how to apply the same vertical-stacking principle to other memory technologies to further increase memory capacity.

First, it was HBF (High Bandwidth Flash), which might be released this year and was co-developed by SanDisk and SK Hynix, and now it's Micron with GDDR.

The goal? Making memory, AI’s biggest bottleneck, less of one by providing GPUs with terabytes of memory and terabytes per second of bandwidth, which would make AIs incredibly performant.

Importantly for this case, the idea is to offer a cheaper option that, while not matching HBM’s bandwidth, is still fast enough to be a good choice.

In case this isn’t clear by now, this is one of the most important technological stories one must follow to understand “where AI hardware is going.”

And talking about memory…

MEMORY

Samsung, Toward a Historic Q1 Profit?

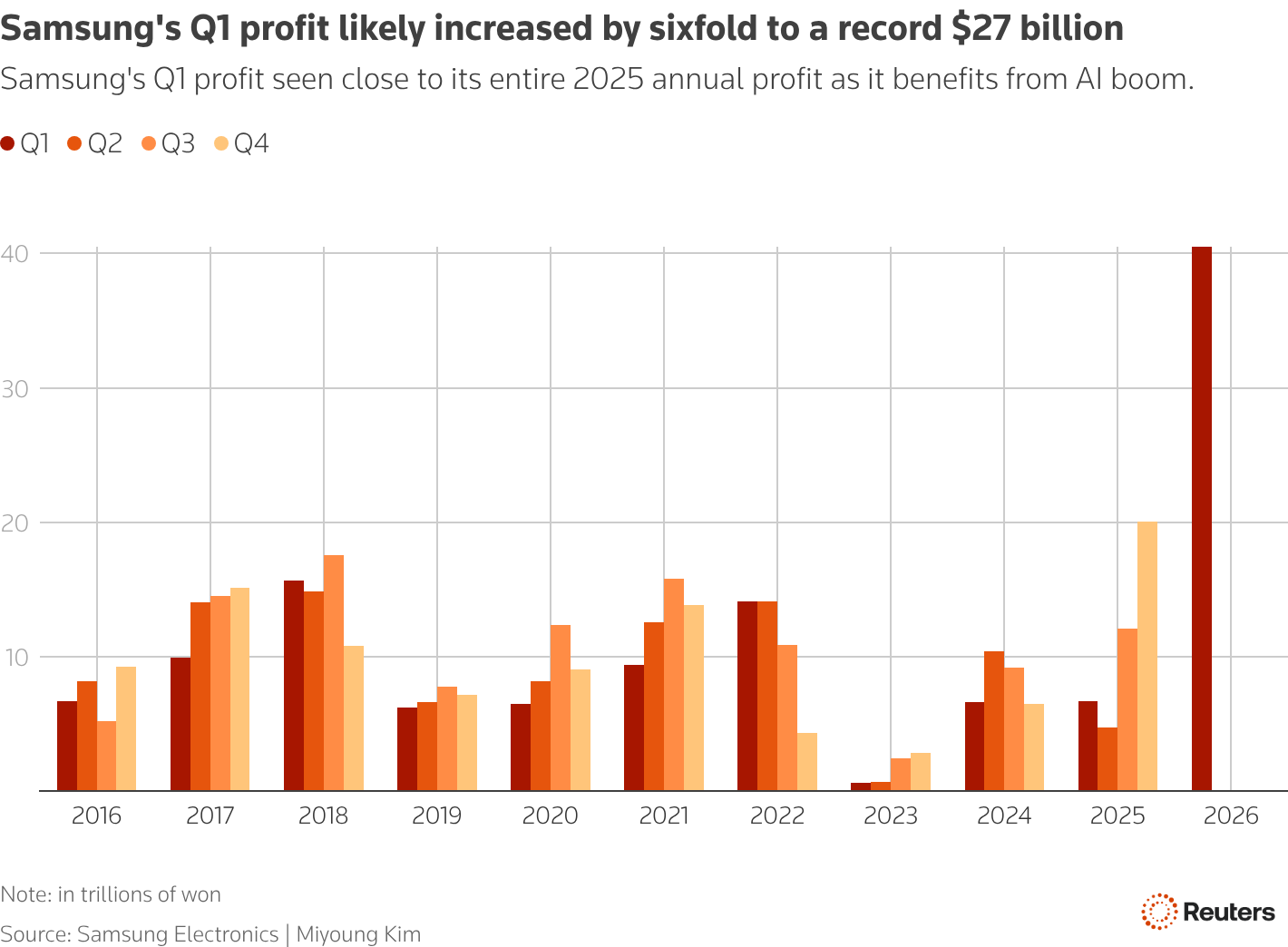

In one of the most impressive charts you’ll probably see in markets, Samsung is set to have a historic Q1 profit result.

Samsung is expected to post a huge profit jump in Q1, mainly because the AI boom has pushed memory-chip prices sharply higher. Reuters says the market expects operating profit of about 40.5 trillion won, or about $27 billion, a roughly sixfold increase year over year.

For reference, that puts them on a path to $100 billion in profit for the year, a milestone Google achieved only recently. In fact, Samsung is expected to blow past that value as memory prices and demand rise.

The catch is that investors may care more about whether memory profits are close to peaking than about the big quarter, as with any cyclical industry. Reuters notes concerns about weaker spot prices, slower AI spending, and geopolitical risks, even though supply still looks tight (and, hint, will remain so).

TheWhiteBox’s takeaway:

So the article is basically saying: investors are expecting an incredible quarter, but there's a growing debate over how long the boom will last.

To me, this is just ridiculous. Not because I can see the future and know for a fact that this memory boom is not cyclical at all (that’s my belief, but I could be wrong), but because memory players are being treated completely differently from other players that, interestingly, are just as exposed to this cyclicality as they are.

Picture, for example, NVIDIA. If you’re a regular of this newsletter, you already know: there is no GPU without HBM (or memory, for that matter). So if demand for memory is cyclical, it’s also cyclical for GPUs. Yet NVIDIA is not being treated that way: it trades at a PE of over 30, suggesting investors believe its demand and profits are predictable into the future, even though its products depend on huge amounts of the very memory whose demand supposedly makes memory players unpredictable.

The stock market is acting stupid lately in everything related to AI. They are punishing SaaS companies for “an inevitable doom,” yet the companies supposedly eating their cake aren’t being rewarded in return, as if the market were betting against both options at once. I know what you’re thinking, that markets have always been this emotional and irrational, but this irrationality is hitting AI particularly hard.

DATA CENTERS

Nebius and the sad European Reality

Nebius announced plans for a major AI data center in Lappeenranta, Finland, with a capacity of up to 310 megawatts.

The project is intended to be one of Europe’s largest dedicated AI compute sites, with first customer capacity expected in 2027 and construction already underway.

The reported scale is around $10 billion, though that figure appears to come from external estimates rather than a formal company investment figure (way too optimistic in my book; the likely price is actually closer to $20 billion). The site is being developed with Finnish company Polarnode and is part of Nebius’s broader effort to secure more than 3 gigawatts of contracted power by the end of 2026.

Finland was chosen for practical infrastructure reasons, including relatively low-cost electricity, renewable power availability, and a cold climate that reduces cooling costs. The new facility follows Nebius’s earlier expansion of its Mäntsälä site in Finland to 75 MW and adds to a wider European buildout that also includes a large project in France.

Along the same lines, Mistral has announced an $830 million loan to expand compute capacity.

TheWhiteBox’s takeaway:

Europe is waking up to the horrid realization that it has fallen behind everywhere. Not energy independent, severely in debt, aging industries, weak defense, and, of course, mediocre exposure to the new technologies that are coming, from AI to drones (although Ukraine’s drone capabilities could save the day in that regard, but I digress).

The AI picture, the one I can confidently talk about, is particularly bad. Announcing a measly ~300MW as huge news when that is a fraction of a fraction of what Elon Musk alone builds in a quarter is just a red herring to distract Europeans from reality: we are in a very bad position.

But as they say, it’s never too late, and Europe is indeed waking up. However, nothing of merit will happen as long as the ghouls in the closet, things like pension reform and government deficits, aren’t addressed; no money can be spent on AI when most money is going there.

If you think I’m exaggerating, here’s a fact for you: out of every ten euros my dad gets paid for his hard-earned pension, a little over 1 euro is financed by public debt issuance (i.e., bond investors are paying my dad’s pension, around $30-ish billion of total pension spending).

But the story doesn’t end there. Spain’s entitlements system is so broken that another ~$50 billion has to be brought in from tax income (which would have gone to public services otherwise) to pay for another portion of those pensions, putting the actual annual hole at approximately $60 billion. Naturally, I don’t need to tell you how “eager” politicians are to ignore this problem because pensioners are a huge portion of voters.

Making matters worse, Spain is ironically not in the worst position, with France and Italy in a worse fiscal state, and with the leading country, Germany, in a years-long recession.

How on Earth can European governments commit to the future if they can’t handle the present?

MODELS

Google Drops Gemma 4

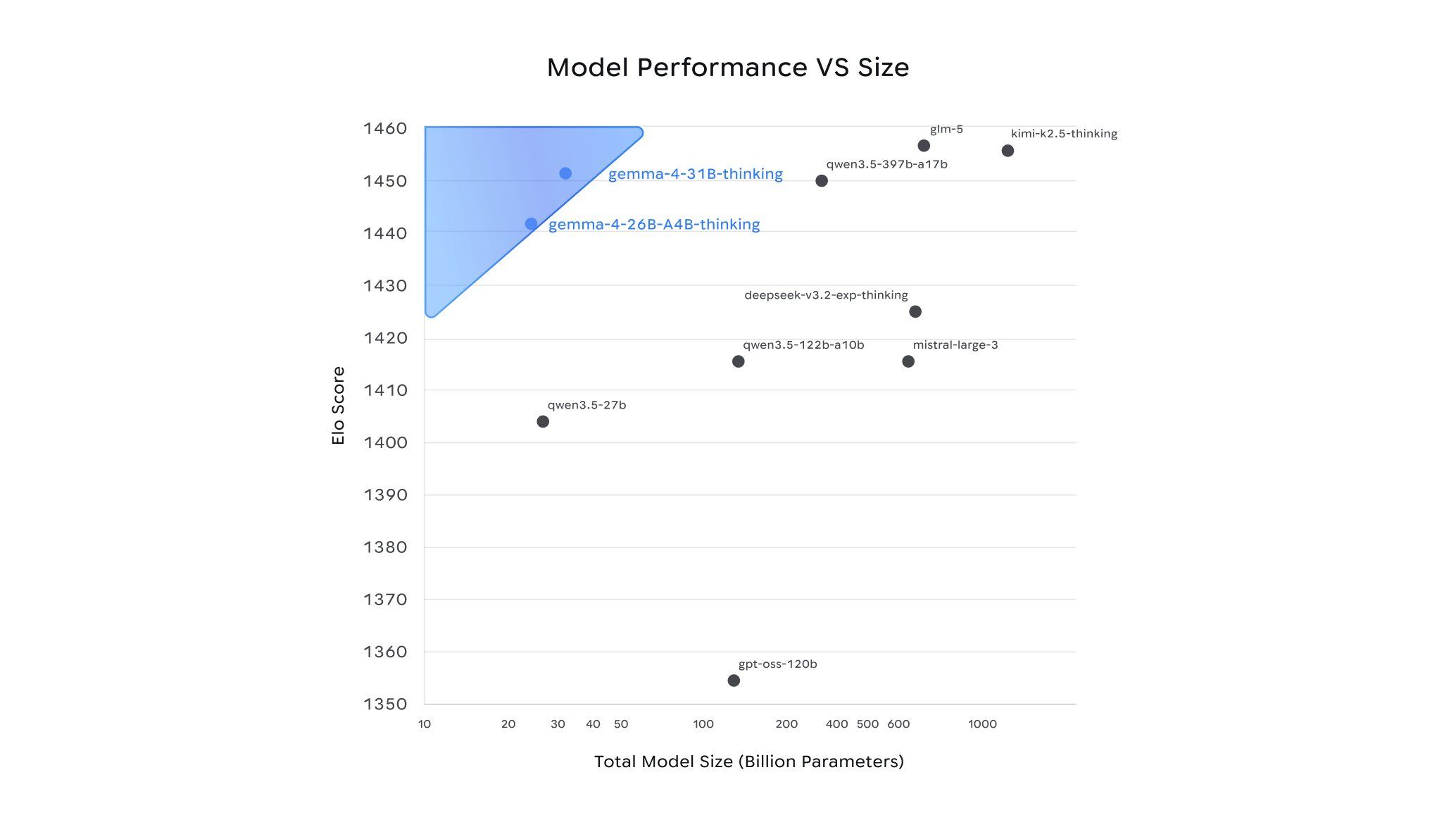

Gemma 4 is Google’s new open-source model family, positioned as its most capable open release to date.

Google says the models are built for advanced reasoning and agentic workflows, released under the Apache 2.0 license, and designed to run efficiently across a wide range of hardware, from mobile devices to workstation-class accelerators (i.e., very powerful desktop machines).

The family includes four sizes: Effective 2B, Effective 4B, 26B MoE, and 31B Dense. Google says the larger models are its strongest open offerings by size, while the smaller models focus on on-device use, multimodal input, and low-latency performance.

The ‘effective’ models deserve a few words. Google has a proprietary approach to small models called Matryoshka models.

The idea is that, during training, you purposefully divide models into sets of smaller models, each larger model containing the smaller models inside, leading to this “Matryoshka design” that gives the name to the architecture.

A Matryoshka doll

Then, depending on the task's complexity, smaller matryoshka dolls can handle it instead of simply resorting to the large one every time, effectively reducing the percentage of the model’s total size that participates, reducing latency considerably without too much performance loss.
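As a toy illustration of the nesting idea, the sketch below treats a feed-forward block’s hidden units as an ordered list and lets a “smaller doll” use only the first few of them, so the small model’s weights are literally a prefix of the large model’s. This is an assumption about how such nesting can be done in general; the weights are made up and none of this is taken from Google’s actual Gemma implementation.

```python
def relu(x):
    return max(0.0, x)


def ffn(x, w_in, w_out, width):
    """Two-layer feed-forward block using only the first `width` hidden units.

    w_in:  one row of input weights per hidden unit.
    w_out: one row of output weights per hidden unit.
    """
    hidden = [relu(sum(xi * wi for xi, wi in zip(x, row))) for row in w_in[:width]]
    out_dim = len(w_out[0])
    return [sum(hidden[k] * w_out[k][j] for k in range(width)) for j in range(out_dim)]


# Hypothetical weights for a block with 4 hidden units.
W_IN = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
W_OUT = [[1.0], [1.0], [0.5], [2.0]]
x = [1.0, 2.0]

print(ffn(x, W_IN, W_OUT, width=2))  # small doll: [3.0]
print(ffn(x, W_IN, W_OUT, width=4))  # full model: [4.5]
```

The small model does half the multiplications of the full one while sharing the same stored weights, which is where the latency savings come from; the price is a less precise output.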

TheWhiteBox’s takeaway:

Open-source models small enough to fit in consumer hardware are reaching a point where they are actually usable.

I’ve been testing the 31-billion-parameter model (the largest one, since I have 128 GB of memory on my computer), and it’s very powerful (though I still need to do some additional testing).

For me, this is incredibly good news, because, as I’ve mentioned several times recently, one of my goals for this year is to have most of my daily AI workloads running on local AIs.

I assume some harder tasks will remain “proprietary-only territory”, but the hard truth is that many, many tasks in your life that AI can help you with do not require frontier-level capabilities.

My biggest concern is sooner-rather-than-later price hikes on proprietary models, as AI Labs are hemorrhaging money, spending several times what they earn from subscriptions to keep customers loyal (not precisely a sign of a healthy business, but what do I know).

Worse, although I’m getting ahead of myself, I fear a future in which governments impose tremendous restrictions on AIs to prevent massive job displacement (to be clear, current models are in no way capable of that; I’m assuming tremendous progress over the coming years), so having the capacity to run workloads locally could separate you from those forced to pay unnecessarily high prices for AI workloads they could run locally.

This isn’t the case today, but it could be that, in the future, if you don’t own your AIs, you’ll be in a bit of a pickle.

ENTERPRISE

Microsoft’s Critique Feature

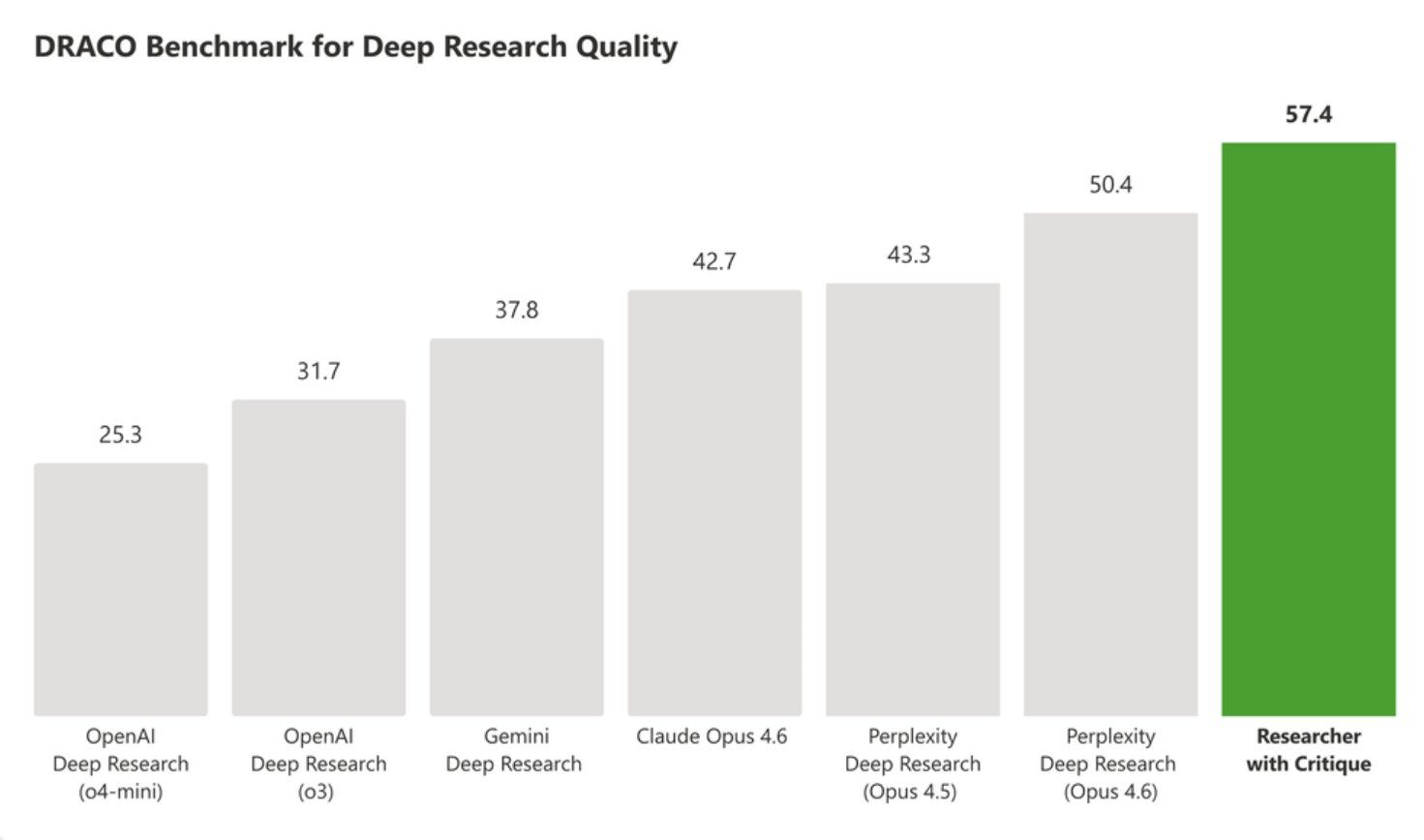

Microsoft’s “Critique” is a new multi-model feature inside Microsoft 365 Copilot’s Researcher tool for complex research tasks.

It uses one model to plan, retrieve information, and draft an answer, then a second model to review and refine that draft before the final report is produced.

Microsoft says the system combines models from Frontier Labs, including OpenAI and Anthropic, with the current setup commonly described as GPT generating first and Claude acting as the reviewer. The goal is to improve accuracy, completeness, and output quality compared with a single-model workflow.
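The draft-then-review flow can be sketched as a two-stage pipeline. Everything here is hypothetical: `critique_pipeline`, the prompt wording, and the stand-in models are illustrative only and say nothing about how Microsoft actually wires Copilot internally.

```python
from typing import Callable

# A "model" here is just any function that maps a prompt to a response.
Model = Callable[[str], str]


def critique_pipeline(question: str, drafter: Model, reviewer: Model) -> str:
    """First model drafts an answer; second model reviews and refines it."""
    draft = drafter(f"Research and answer: {question}")
    review_prompt = (
        f"Question: {question}\n"
        f"Draft: {draft}\n"
        "Review the draft and return an improved final answer."
    )
    return reviewer(review_prompt)


# Stand-in models so the sketch runs without any API access.
def fake_drafter(prompt: str) -> str:
    return "DRAFT(" + prompt + ")"


def fake_reviewer(prompt: str) -> str:
    return "REVIEWED[" + prompt + "]"


print(critique_pipeline("What is HBM?", fake_drafter, fake_reviewer))
```

Because the two stages only share a prompt-in, text-out interface, the drafter and reviewer can come from different providers, which is the point of a multi-model setup.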

The same announcement also introduced “Council,” a comparison feature that shows side-by-side outputs from multiple models and can use a judge model to surface differences and unique insights. Microsoft presented both features as part of a broader shift toward multi-model Copilot systems, rather than relying on a single model provider.

Availability is limited to customers in the Frontier program.

TheWhiteBox’s takeaway:

I have strong opinions about this. Microsoft is in a unique position to sweep the enterprise market; they literally have access to the data enterprises need to expose to the AI models. The problem is that, for now, execution has been Dantesque.

Copilot is, plain and simple, a terrible experience, at least compared to Claude Cowork or ChatGPT, so let’s hope they can turn things around. In the meantime, I migrated last week out of Microsoft to Google Workspace products; today, in terms of AI capabilities, there’s really no comparison at all.

Closing Thoughts

This is the week of open-source. I can’t recall the last time we had so much very exciting open-source news to share. Open-source models are reaching a point of no return, but in a good way: they finally seem useful beyond mere toys.

Some of the other news isn’t as positive, though.

Europe’s position in AI is not precisely great. Worse, the fiscal strain all European countries are facing, which requires deep reform, will prevent any meaningful change in a continent that is older, unprepared, and, honestly, in many cases, unwilling to make the reforms that are needed.

Mercor’s data breach is a huge one, a constant reminder that cybersecurity concerns are as important as ever. The problem is that AIs are finally getting to the point where, used badly, they can pose serious threats.

As for Anthropic, I’m not exactly a fan, but I do believe their concerns about frontier models being a bit too capable in cybersecurity are warranted.

And although they decided to do it in the most Anthropic way possible, their emotions paper gives us hope that one day we can steer these models and ensure they don’t misbehave.

Ending with the market data, we’ll need to monitor Samsung’s upcoming earnings call, because the investor reaction to a potentially historic profit growth will tell us a lot about markets and their overall view of the semiconductor market.


For business inquiries, reach out to me at [email protected]