THEWHITEBOX

TL;DR

Welcome back! This week, we have robots from the future, a historic IPO (with detailed technical coverage at the end of the newsletter), a star Lab’s first model release, OpenAI’s personal finance tool, and many other news items you must know.

Enjoy!

RESEARCH

A New Type of Generative Model?

Thinking Machines, one of the most highly valued AI Labs in the private markets and packed with star researchers and engineers from other top Labs, is finally showing its teeth.

And the results are very promising because they aren’t simply following the same playbook as other top Labs; they are actually pushing novel research (and quite publicly, too).

Their first major model release is an interaction model, trained to offer a strong balance between intelligence and interactivity. The trade-off here is obvious because interactivity goes against both scaling laws of intelligence:

The smarter you want a model to become, the larger it must be (and thus the slower it is to run).

The more thinking budget you give it, the slower its response will be, too.

The solution is an architecture that may sound familiar if you’re a regular reader of this newsletter because it draws strong similarities to how robotics AI is being approached, but also includes unique features.

It’s inspired by Daniel Kahneman’s System 1 and System 2 modes of thinking. Popularized in his book Thinking, Fast and Slow, the theory argues that our brains think at two speeds, one fast and one slow:

one is intuition-based, fast, unconscious, like flinching when someone is about to hit you,

and the other is slow, conscious, and deliberate, like solving a maths test.

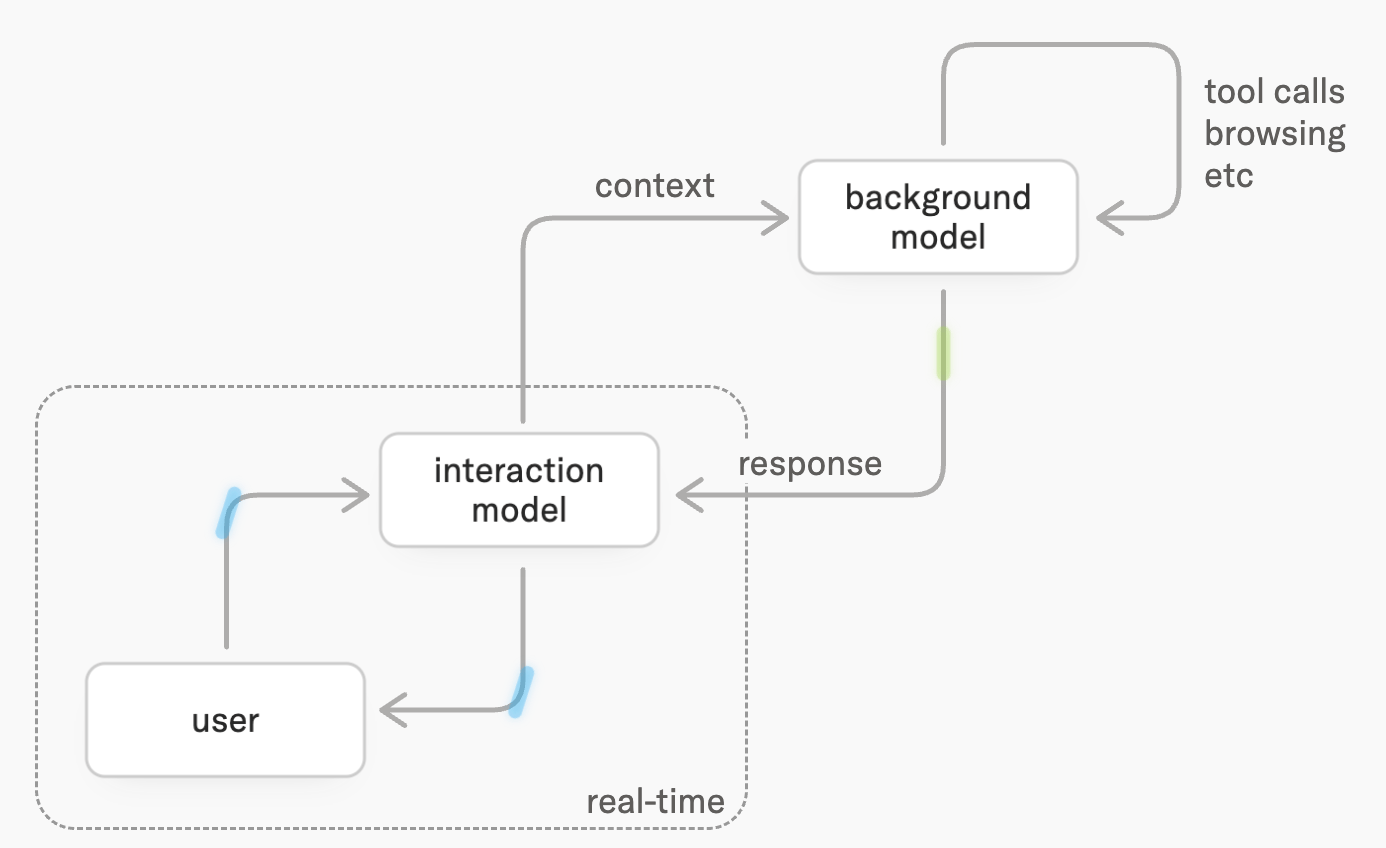

Following this principle, and as the thumbnail suggests, the architecture pairs two models. A fast one, known as the interaction model, is in charge of interacting with the user. A background model handles the ‘slow thinking’ and makes the necessary tool calls (like calling a search API to check something on the Internet).

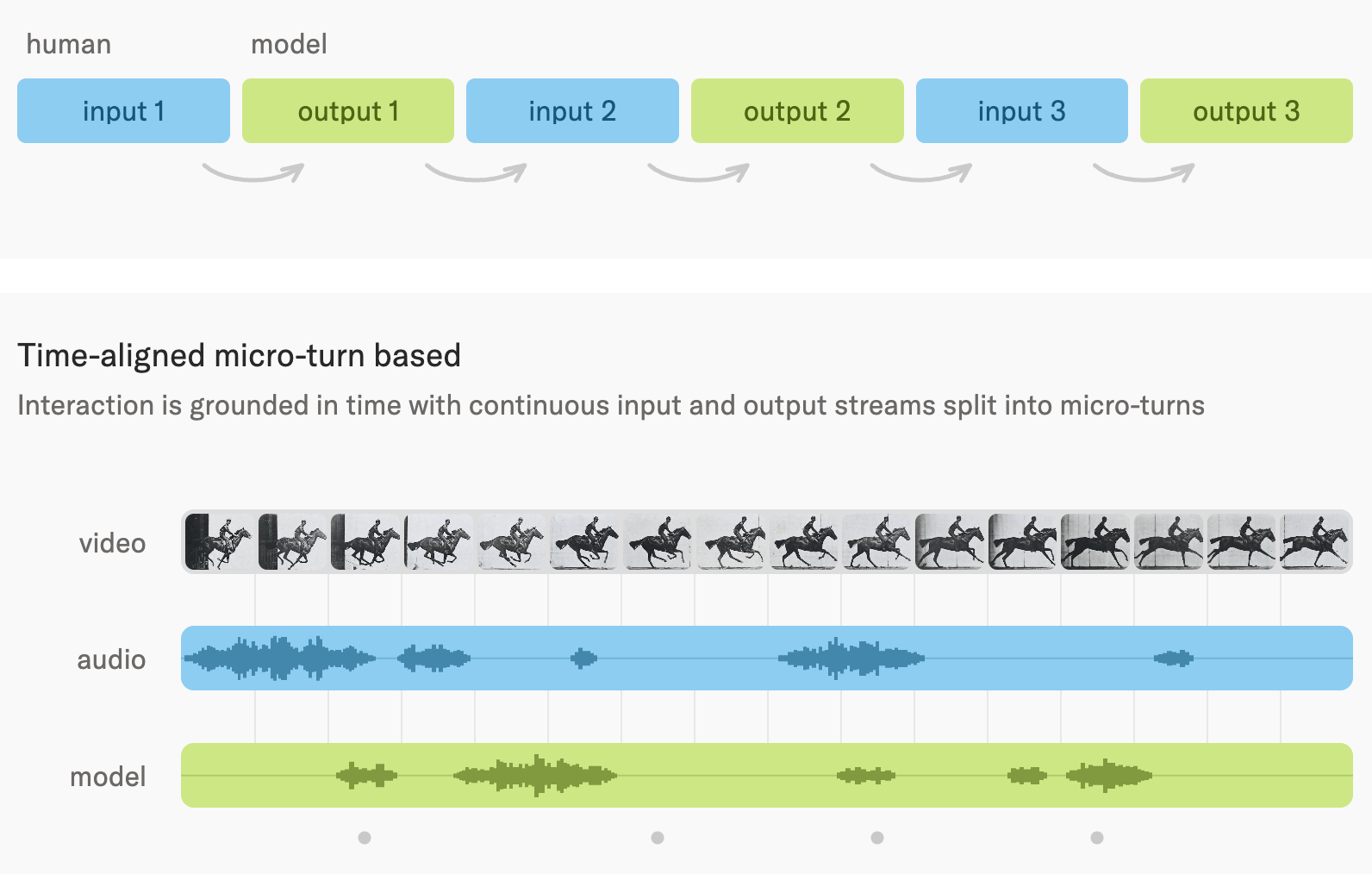

This way, the model can handle long-range, reasoning-heavy tasks that require time while still appearing snappy and “real-time.” The result is a system that breaks with the turn-by-turn way AIs have historically interacted with us.

Instead, both you and the model interact continually across all time slots. Therefore, the AI can talk alongside you and across multiple modalities, such as audio, video, or text.
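To make the fast/slow split concrete, here is a minimal Python sketch under loud assumptions: `interaction_model` and `background_model` are hypothetical stand-ins, not Thinking Machines’ code or API, and the sleeps only simulate latency. The point is simply that the fast path keeps talking while the slow path works.

```python
import asyncio
from typing import List


async def background_model(question: str) -> str:
    """Hypothetical slow path: deliberate reasoning and tool calls."""
    await asyncio.sleep(0.05)  # stands in for a search call or long reasoning
    return f"Detailed answer to: {question}"


async def interaction_model(question: str, tick: float = 0.01) -> List[str]:
    """Hypothetical fast path: keeps responding while the slow path runs."""
    transcript = ["Let me look into that."]  # immediate reply, no waiting
    task = asyncio.create_task(background_model(question))
    while not task.done():
        await asyncio.sleep(tick)  # one interaction "time slot"
        if not task.done():
            transcript.append("Still working on it...")
    transcript.append(task.result())  # hand over the slow model's result
    return transcript


def run_demo(question: str) -> List[str]:
    """Run the toy system end to end and return everything it said."""
    return asyncio.run(interaction_model(question))


if __name__ == "__main__":
    for line in run_demo("What changed in the repo today?"):
        print(line)
```

The number of “still working” lines depends on timing, but the first line always arrives before the background work finishes, which is the whole appeal of the design.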

This allows the “model” to do things we hadn’t seen before, certainly not at these levels of interactivity:

It can handle real-time multimodal interaction: audio, video, text, screen input, and tool outputs in one continuous stream.

It can process interaction every 200 ms, so it understands pauses, interruptions, overlapping speech, timing, hesitation, and turn-taking.

It can speak while listening, allowing simultaneous conversation, live translation, corrections, and backchanneling.

It can watch what you are doing live, spot bugs, follow a screen, count reps, comment on a video feed, or react to visual changes.

It can coordinate with tools and background agents, meaning one part of the system can keep talking to you while another searches, reasons, browses, or executes longer tasks.

It can generate or update interfaces while interacting, making it useful for live coding, dashboards, design work, research workflows, and interactive tutoring.
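For intuition on the 200 ms figure above, here is a small sketch of what slicing a continuous audio stream into 200 ms slots could look like. The 16 kHz sample rate and the `chunk_stream` helper are my own assumptions for illustration; nothing here reflects how the actual model ingests audio.

```python
from typing import Iterator, List


def chunk_stream(samples: List[float],
                 sample_rate: int = 16_000,  # assumed rate, not from the release
                 chunk_ms: int = 200) -> Iterator[List[float]]:
    """Yield consecutive chunks covering chunk_ms of audio each."""
    chunk_size = sample_rate * chunk_ms // 1000  # samples per time slot
    for start in range(0, len(samples), chunk_size):
        # The final chunk may be shorter if the stream ends mid-slot.
        yield samples[start:start + chunk_size]


if __name__ == "__main__":
    one_second = [0.0] * 16_000  # one second of silence
    chunks = list(chunk_stream(one_second))
    print(len(chunks), len(chunks[0]))  # 5 3200
```

At these assumed settings, the model would get five chances per second to notice a pause, an interruption, or overlapping speech.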

Furthermore, the model is very smart and competitive with alternatives from OpenAI, such as GPT -2, in real time, across several benchmarks.

TheWhiteBox’s takeaway:

As we’ve learned from Cerebras' IPO and from OpenAI and Anthropic’s super-successful fast modes, there’s a lot of value in optimizing interactivity; people don’t like to wait.

It’s interesting that TML went this route to start their model releases, but it certainly looks good.

CODING AGENTS

Are Task-specific harnesses the real deal?

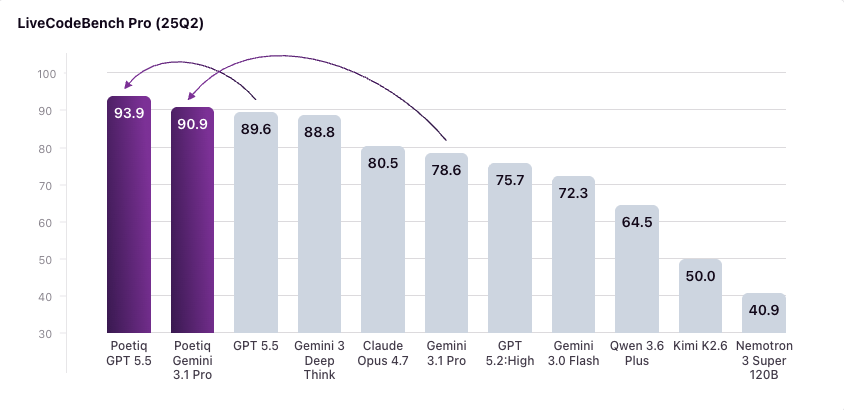

Poetiq's Meta-System autonomously developed a coding harness from scratch through recursive self-improvement, achieving new state-of-the-art performance across several benchmarks and frontier models.

A harness is the scaffolding wrapped around an AI model (prompts, control flow, tool access, and checks) to improve its performance. All modern AIs run inside harnesses.

Using only standard APIs, with no fine-tuning or access to model weights (meaning they cannot adapt the model to the problem), the harness achieved state-of-the-art results on LiveCodeBench Pro, a contamination-resistant C++ coding benchmark that evaluates pure programming ability without relying on public ground-truth code or tool use.

Importantly, this harness is model-agnostic. It was initially optimized for Gemini 3.1 Pro, raising its score from 78.6% to 90.9% and surpassing Gemini Deep Think (Google’s own harness and multi-agent setting). The same harness, applied unchanged to other models, improved GPT 5.5 High from 89.6% to 93.9% and boosted Kimi K2.6 by nearly 30 percentage points.

Just as importantly, it’s also task-specific, which is the key secret here: the harness is built for this particular task, which is why models see such huge bumps in performance.
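To give a feel for what a harness is, here is a deliberately tiny sketch of one common pattern: call the model, verify the answer, and retry with feedback. This is not Poetiq’s system (they haven’t been described at this level of detail here); `harness`, `toy_model`, and the retry prompt are all hypothetical names I made up, and the “model” is a stub so the example runs without any API.

```python
from typing import Callable, Optional


def harness(model: Callable[[str], str],
            task: str,
            check: Callable[[str], bool],
            max_attempts: int = 3) -> Optional[str]:
    """Generate-and-verify loop: the model itself is never modified."""
    prompt = task
    for attempt in range(1, max_attempts + 1):
        answer = model(prompt)
        if check(answer):  # task-specific verifier, e.g. a test suite
            return answer
        # Feed the failure back through the prompt, not through training.
        prompt = f"{task}\nAttempt {attempt} failed: {answer!r}. Try again."
    return None  # no attempt passed the check


def toy_model(prompt: str) -> str:
    """Stub standing in for an API call: wrong first, right after feedback."""
    return "4" if "failed" in prompt else "5"


if __name__ == "__main__":
    print(harness(toy_model, "What is 2 + 2?", lambda a: a == "4"))  # 4
```

Note that everything task-specific lives in `check` and the prompt, which is why the same wrapper can sit on top of different models.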

TheWhiteBox’s takeaway:

I do still believe most of the real value comes from actually fine-tuning models on the task.

But the idea of task-specific harnesses, which make models perform particularly well on a specific task without additional training, is a neat middle-ground way to customize models you can’t control (i.e., proprietary models) to your task.

AI BEYOND LLMs

Time-series models scale

Datadog has released Toto 2.0, a new family of open-weight time-series forecasting models designed to predict numerical signals over time.

The models range from 4 million to 2.5 billion parameters and are available on GitHub and Hugging Face.

Datadog says the release is intended to test whether time-series foundation models improve as they scale and reports that each larger Toto 2.0 model improves over the smaller one, with no sign of saturation at the largest 2.5-billion-parameter size.

But why do we need this?

It’s easy to see the value of scaling a model like ChatGPT, which gains the ability to speak and reason better, but a model that simply predicts lines on a graph?

TheWhiteBox’s takeaway:

This is needed anywhere organizations depend on numerical signals that change over time and need to anticipate what comes next.

In Datadog’s core market, that means forecasting cloud infrastructure metrics such as latency, error rates, traffic, CPU usage, memory consumption, database load, GPU utilization, and cost, so teams can detect anomalies earlier, prevent incidents, and plan capacity more efficiently.

More broadly, the same type of model can be useful for retail demand planning, energy consumption forecasting, financial time series, logistics, weather-sensitive operations, and any environment where thousands or millions of signals are too many to model manually.
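If “predicting lines on a graph” still feels abstract, here is a toy version of the forecast-then-flag workflow described above. To be clear, this is a classic seasonal-naive baseline, not Toto 2.0 or its API; the function names, the made-up latency numbers, and the 50% tolerance are all my own assumptions.

```python
from typing import List


def seasonal_naive_forecast(history: List[float],
                            season_length: int,
                            horizon: int) -> List[float]:
    """Forecast by repeating the most recent full season."""
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    last_season = history[-season_length:]
    return [last_season[i % season_length] for i in range(horizon)]


def is_anomaly(actual: float, predicted: float, tolerance: float = 0.5) -> bool:
    """Flag a point that deviates from the forecast by more than tolerance."""
    return abs(actual - predicted) > tolerance * abs(predicted)


if __name__ == "__main__":
    latency_ms = [10, 20, 30, 10, 20, 30]  # made-up metric, period of 3
    forecast = seasonal_naive_forecast(latency_ms, season_length=3, horizon=4)
    print(forecast)                      # [10, 20, 30, 10]
    print(is_anomaly(45, forecast[1]))   # True
```

A foundation model plays the role of `seasonal_naive_forecast` here, ideally with far better predictions and without anyone hand-picking the season length for each of millions of signals.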

So there’s definitely value, especially inside certain sectors, and seeing that the principles of scaling that have made LLMs so valuable apply to other areas too is great news.

PUBLIC MARKETS

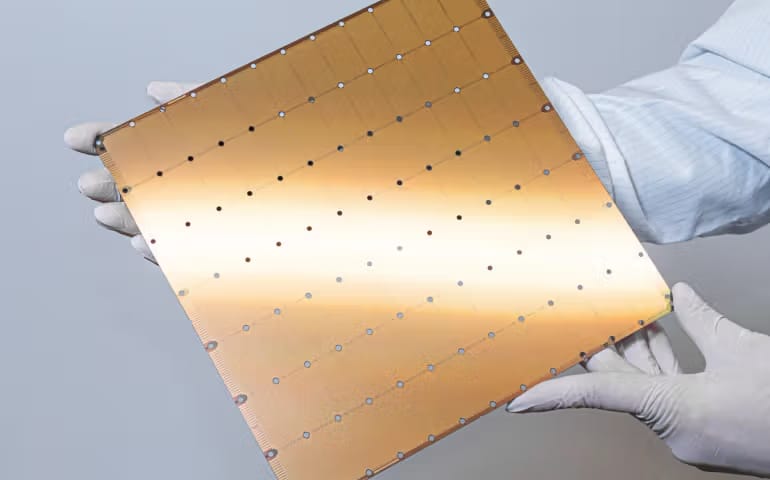

Cerebras’ Historic IPO

If markets weren’t crazy enough already, we’ve added one of the biggest IPOs of the year to the mix: Cerebras Systems, which went public yesterday.

The AI chipmaker priced its IPO at $185 per share, raising $5.55 billion. Trading opened far above that level, with shares starting at $350, briefly climbing higher, and closing at $311.07, up 68% on the day.
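For those who like to check the math, here is the arithmetic behind those figures. The only inputs are the numbers quoted above; the implied share count assumes the full $5.55 billion was raised at the IPO price, which is my simplification.

```python
ipo_price = 185.00      # USD per share, from the pricing
open_price = 350.00     # USD, first trade
close_price = 311.07    # USD, first-day close
raised = 5.55e9         # USD raised in the offering


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100


# Assumption: every share in the offering was sold at the IPO price.
shares_sold = raised / ipo_price
first_day_gain = pct_change(ipo_price, close_price)
open_pop = pct_change(ipo_price, open_price)

if __name__ == "__main__":
    print(f"Implied shares sold: {shares_sold:,.0f}")
    print(f"Opening pop: {open_pop:.1f}%")
    print(f"First-day gain: {first_day_gain:.1f}%")
```

The close works out to roughly 68% above the IPO price, matching the headline number, while the opening print was closer to 89% above it.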

Today, $CBRS is down 10.08%.

But should you invest?

TheWhiteBox’s takeaway:

To me, there’s a mixture of delusion and warranted optimism here. It’s a great company, and the chip is an engineering marvel, but what’s the right price?

I was asked to do a simplified due diligence for an investment banking client last week. In the portfolio section at the end of this newsletter, you’ll find what I told them. I cover everything, from the technology to financials and supply chain, as well as how much of a dent they can make in the AI market.

RESEARCH

Ineffable partners with NVIDIA

Ineffable, the AI Lab co-founded by David Silver, one of the founding fathers of Reinforcement Learning (RL) and the creator of AlphaGo, the first superhuman AI, has partnered with NVIDIA to develop—potentially—new hardware for a fundamentally different approach to AI training using Large Language Models (LLMs).

RL is a fundamental learning technique in AI, in which a model explores new solutions to problems. It’s trial-and-error learning, basically.

The reason I’m highlighting this is to make you aware of this lab, as it's fundamentally different from what we have right now. They don’t believe the current state of AI is heading in the right direction.

“Researchers have largely solved the easier problem of AI: how to build systems that know all the things humans already know,” David Silver himself explained. “But now we need to solve the harder problem of AI: how to build systems that discover new knowledge for themselves. That requires a very different approach: systems that learn from experience.”

In plain English, he basically thinks LLMs are fine but not the endgame.

But how are they going to do that? We don’t actually know, but there are several hints along the way. The first is the paper he coauthored with Rich Sutton, the other Godfather of reinforcement learning, on the “era of experience.”

It’s an interesting read, but simplified, the idea is that modern AIs learn mostly from human data: they learn by imitating us and then add a sprinkle of exploration and experience-gathering.

Instead, they believe that truly powerful AIs turn the balance upside down; it’s fine if AIs learn from human data, but the vast majority of learning should come from their own experiences.

In other words, they want to create AIs that learn from their own experience rather than from human experience.

The secret might be feedback. In other words, for AIs to learn without being told whether they are making progress (the verifying principle; we can only learn what we can verify), they will experience the consequences of their actions and use that as verification (e.g., I jump from a height of 10 feet and break my leg; never jumping from that high again).

The real world becomes the verifier, and that’s when you truly learn from experience.
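Here is the jumping example as a toy trial-and-error learner. It’s a textbook epsilon-greedy bandit, not anything Ineffable has published; the action names, the reward values, and `learn_from_experience` are made up for illustration. Nobody tells the agent which action is good; it only sees the consequences.

```python
import random
from typing import Dict

# Made-up consequences: the "world" is the only verifier.
REWARDS = {"jump_from_10_feet": -10.0, "climb_down": 1.0}


def learn_from_experience(steps: int = 50,
                          epsilon: float = 0.2,
                          seed: int = 0) -> Dict[str, float]:
    """Estimate each action's value purely from experienced outcomes."""
    rng = random.Random(seed)
    values = {action: 0.0 for action in REWARDS}  # current value estimates
    counts = {action: 0 for action in REWARDS}    # times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(list(REWARDS))    # explore: try something
        else:
            action = max(values, key=values.get)  # exploit: best known so far
        reward = REWARDS[action]                  # experience the consequence
        counts[action] += 1
        # Incremental average of the rewards seen for this action.
        values[action] += (reward - values[action]) / counts[action]
    return values


if __name__ == "__main__":
    learned = learn_from_experience()
    print(max(learned, key=learned.get))  # climb_down
```

One broken leg is enough: after the first jump, the agent’s own estimate steers it toward climbing down.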

TheWhiteBox’s takeaway:

The idea makes sense. But just like Yann LeCun’s ideas with JEPAs, for which he’s started a new AI Lab to build world models that have nothing to do with LLMs, I need to see the receipts. I like what I read, but I need proof.

VENTURE CAPITAL

Another $30 billion, really?

Anthropic has agreed terms for a $30bn fundraising that would value the artificial intelligence company at $900bn, according to the Financial Times.

The round is expected to close as soon as this month, though terms could still change before completion. The deal would nearly triple Anthropic’s previous valuation and place the Claude maker above OpenAI’s reported $852bn valuation.

The valuation increase reflects the company’s rapid revenue growth, with annualized revenue projected at about $45bn, up from $9bn last year. Big tech groups are not expected to take part in the round.

TheWhiteBox’s takeaway:

I’m old enough to remember this company raised the same amount of money 3 months ago, which puts their annualized spending at a whopping $120 billion.

So even if we linearly extrapolate and assume Anthropic will double revenues by the end of this year (that’s what the current growth suggests), they would still be spending way more than they earn. And they probably won’t double, because even if the demand were there, they largely don’t have the compute to serve it.
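The back-of-the-envelope math, spelled out. This sketch bakes in my own simplification that each round is fully spent by the time the next one is raised, so the fundraising pace stands in for the spending pace; the inputs are the figures quoted above.

```python
raise_bn = 30                 # USD billions per round
months_between_raises = 3     # same amount raised three months ago
annualized_revenue_bn = 45    # projected annualized revenue

# Assumption: the fundraising pace equals the spending pace.
implied_annual_spend_bn = raise_bn * 12 / months_between_raises
doubled_revenue_bn = annualized_revenue_bn * 2
gap_bn = implied_annual_spend_bn - doubled_revenue_bn

if __name__ == "__main__":
    print(f"Implied annual spend: ${implied_annual_spend_bn:.0f}bn")
    print(f"Revenue if it doubles: ${doubled_revenue_bn}bn")
    print(f"Remaining gap: ${gap_bn:.0f}bn")
```

Even in the optimistic doubling scenario, $90 billion of revenue sits $30 billion short of the implied $120 billion in spending.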

This industry is finally seeing revenues piling in, but the elephant in the room is that, for every new dollar that comes in, more than one dollar has to come out, so spending is accelerating even faster than revenues. Ironically, this might be an opportunity for Cerebras, as we’ll talk about later.

PICTURE

Netflix’s INKubator

Netflix is staffing up a new internal animation studio called INKubator, which is expected to focus on AI-assisted animated shorts and specials. The unit is hiring producers, software engineers, and CG artists to work on experimental “GenAI-native” production pipelines.

The studio has not been formally announced by Netflix, but listings and LinkedIn profiles suggest it quietly began taking shape earlier this year. The initiative appears focused first on short-form animation, while some listings point to longer-term ambitions for feature-quality content.

TheWhiteBox’s takeaway:

Seeing the progress image/video generation models are making, especially with OpenAI’s GPT-image-2, I’m sorry, but this is a no-brainer we will have to get used to.

At this point, much of the animosity toward AI generations is deeply rooted in bias rather than objective criticism.

For instance, an X user ran the following test: they uploaded an image they claimed to have created using AI in the style of a Monet painting and asked others to critique it. The criticisms were varied and mostly negative: “there’s no cohesion”, “it’s all borked nonsense”, “it’s a mess”, and a long tail of others.

The reality, though, is that this was, in fact, an actual Monet.

Which raises the question: are people against AI just for the sake of it?

To be fair, I think this is just the same reaction that we see with every industrial revolution. If you take a factory-made leather shoe that looks handmade and ask people to critique it, they will find ways to explain why it’s not as great as a handmade version.

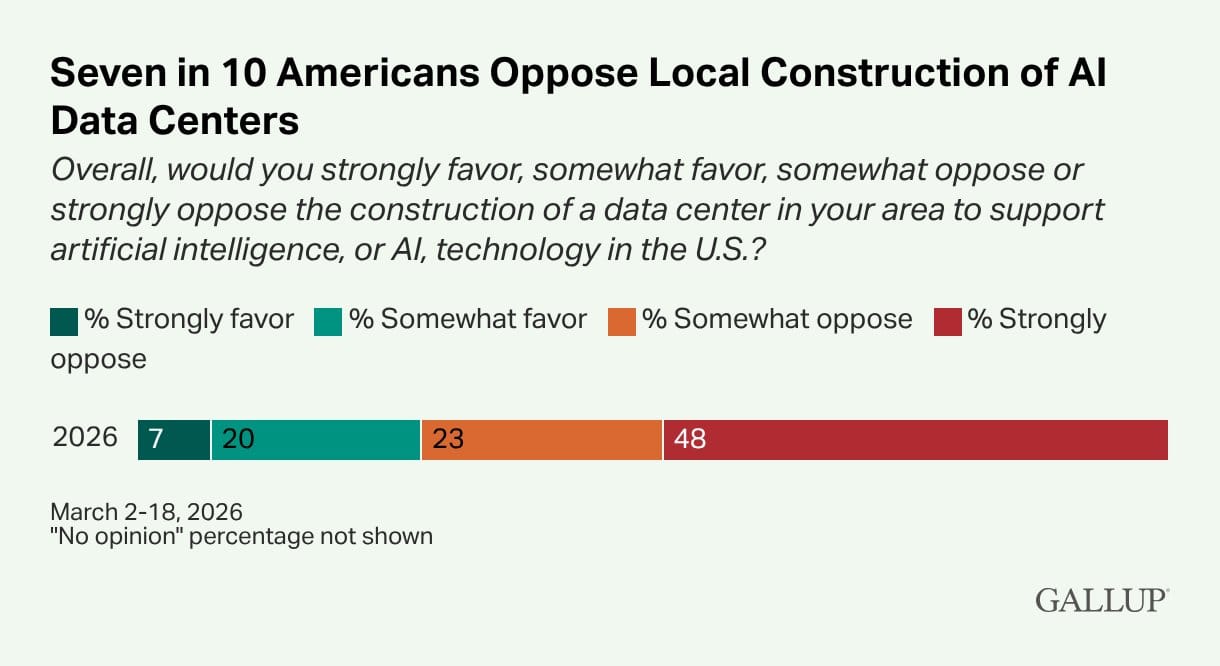

Interestingly, AI is currently as unpopular as ever. A new Gallup survey shows that people are strongly opposed to having data centers nearby and would rather have a fricking nuclear reactor.

And while I’m the first to acknowledge that the industry incumbents are the first to blame for all of this, and data centers aren’t precisely fountains of job creation, this is just madness. People hate AI and will cling to any reason for it, point-blank.

CODING

You can now access Codex from your ChatGPT iPhone app.

My fellow humans at the dog park probably already thought I was somewhat shy, since I’m always listening to podcasts instead of making small talk (in case you’re wondering how I manage to write so much about AI, that’s the kind of thing I have to do). Now Codex might make me look like a sociopath: its new release lets you talk to the models and processes running on your laptop from your iPhone.

A feature available to Claude Code users for a while now, it’s finally available in Codex, and it’s an amazing experience. In an hour-long dog walk, I’ve pushed several new features to an app I’m building (don’t worry, I am very aware of the tech debt and always take time to clean code afterward) simply by talking to my Codex agents.

Truly incredible times we’re living in.

TheWhiteBox’s takeaway:

A word has to be said on the “quality” of the code we’re seeing in the world right now (including probably mine).

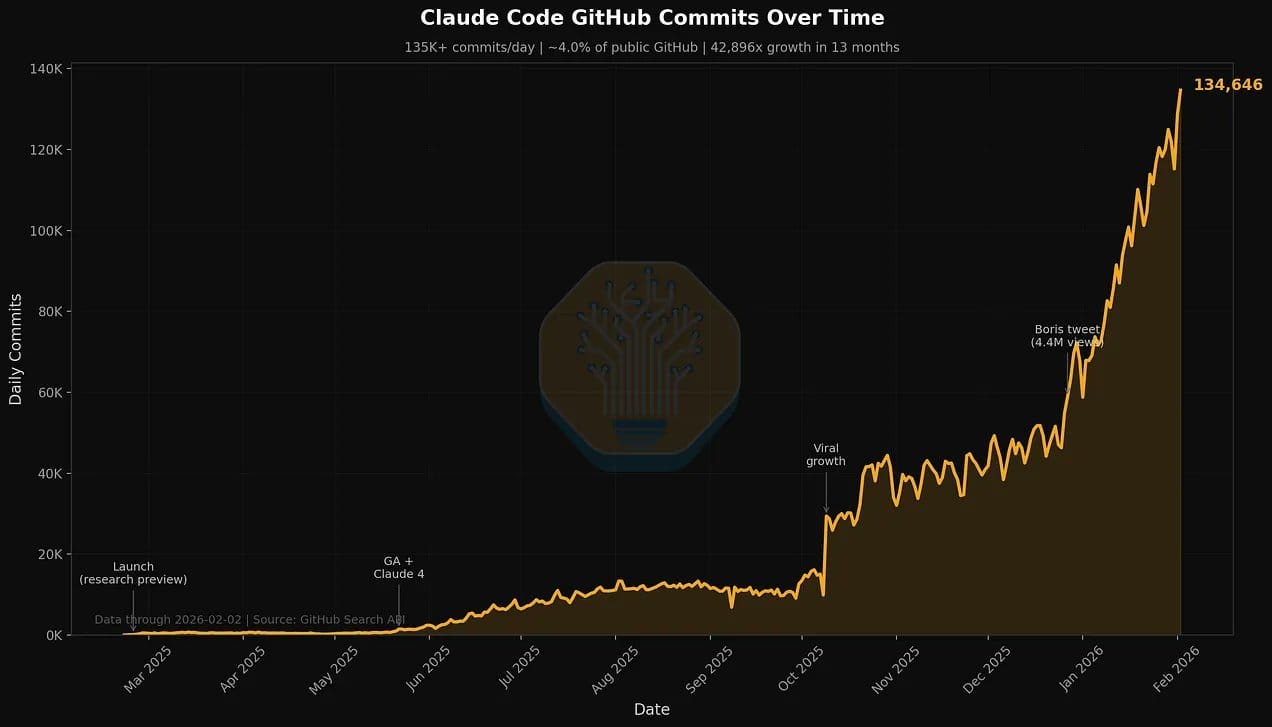

Some people are tracking GitHub commits that are at least coauthored by Claude and Codex, and they have skyrocketed (I suspect the actual number is way higher, because you can choose to exclude coauthors from the commit). And these numbers are just from February!

Source: SemiAnalysis

The scale at which these agents write code makes it very, very hard to believe this code is being audited, like at all (unless it’s being done by other AIs). For the record, all the apps I’m building are for personal use and/or for my business; no public app accessible to other users has my name and Codex’s signature on it.

Personally, I am very aware of tech debt and obsessively refactor my code almost daily after long sessions.

Still, I fear the quality might not be superb because it’s all AI-generated. At this point, the only thing we can hope for is for future models to be excellent debuggers. Otherwise, it’s going to be a mess.

ROBOTICS

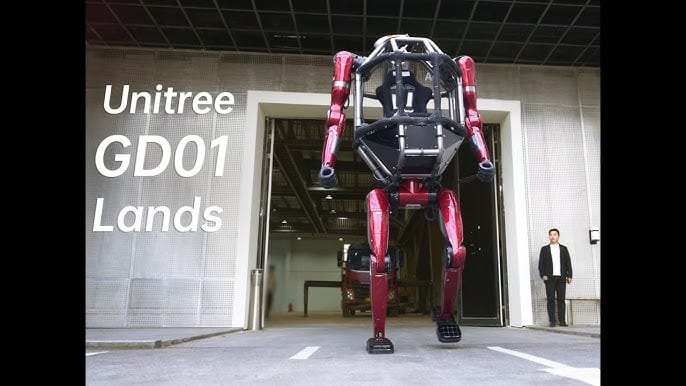

Unitree’s Transformer robot

In what’s possibly one of the coolest robotics demos, or even the coolest robotics demo ever, Unitree has presented what seems to be a real-life version of a Hollywood transformer; yes, those that fly and fight and do all that crazy stuff.

The video shows Unitree’s founder, Xingxing Wang, literally riding this thing, and boy must he feel like a Superhero.

TheWhiteBox’s takeaway:

Cool demo that is obviously more about collecting bragging rights than actual utility, but I could see this thing being used for dangerous construction work and other situations where we still need human motion but want the human out of harm’s way.

Of course, some people will see this and imagine the end of humanity, but those people have probably seen too many Hollywood films.

As far as we know, this is fully human-controlled.

CONSUMER HARDWARE

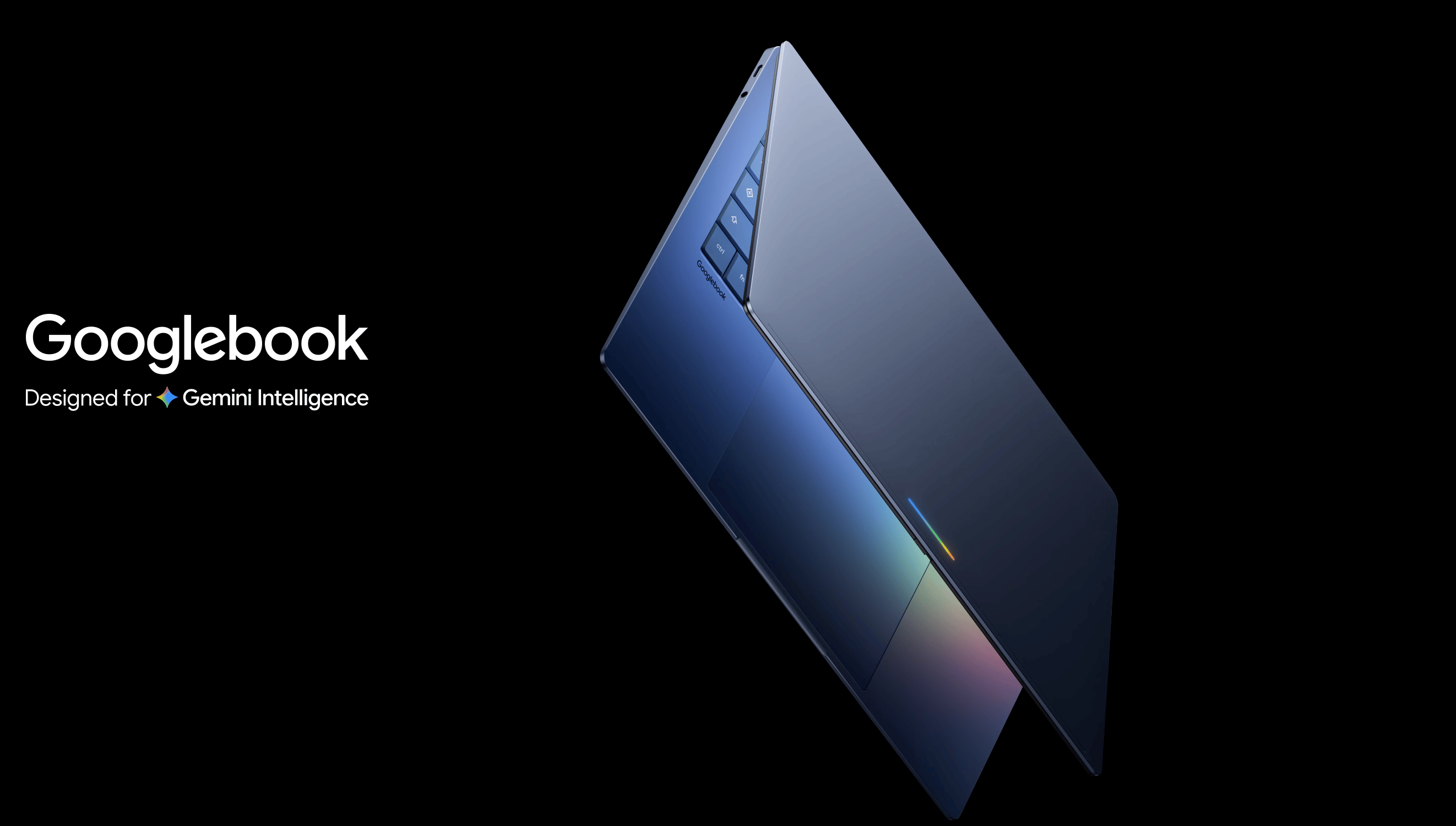

Google’s Googlebook and the Magic Pointer

Google has announced Googlebook, a new premium laptop category designed around Gemini.

The company says Googlebook will combine Android technologies with ChromeOS capabilities, while putting Gemini directly into the core laptop experience rather than leaving it as a separate chatbot or assistant.

One of the main new features is Magic Pointer, developed with Google DeepMind. The idea is to turn the computer cursor into an AI interface: users point at something on screen, such as a date, image, table, object, or location, and Gemini can understand what is being referenced and suggest an action.

DeepMind describes this as a rethink of the mouse pointer, a 50-year-old interface that has mostly remained a tool for clicking, dragging, and selecting. With Magic Pointer, the cursor becomes a way to tell the AI what “this” or “that” means, keeping users in their current workflow rather than forcing them to copy text, upload files, or manually describe context.

Google says the first Googlebooks will come from partners including Acer, ASUS, Dell, HP, and Lenovo, with availability expected in the fall. The company has not yet shared full specifications or pricing.

TheWhiteBox’s takeaway:

We’ll see how far this idea goes. Google's entry into the laptop market is interesting. I’m particularly intrigued by the hardware; will it be strong enough to run local models like Gemma?

I hope it is; otherwise, it’s just a waste of my time.

The Magic Pointer idea looks incredible, but I’m not convinced it will become something I can’t live without. Will it be another solution looking for a problem to solve?

FINANCE

ChatGPT’s New Personal Finance Feature

As always, just when I’m closing the edit of my newsletter for sending, an AI Lab decides it’s the perfect time to drop a feature I’m compelled to talk about.

Now, OpenAI has launched a preview of a new personal finance experience in ChatGPT for Pro users in the US.

Users can connect financial accounts, view a dashboard of spending, investments, subscriptions, upcoming payments, and liabilities, and ask ChatGPT questions grounded in their own financial data. The rollout starts on web and iOS, with support for more than 12,000 financial institutions through Plaid, and Intuit support is planned.

TheWhiteBox’s takeaway:

This is basically a copy of what I am building for myself; the functionality appears very similar. I’m interested in knowing how complex the solution is, whether it lets you change categories and ask for very specific KPIs and data, and whether it supports the other features my app has.

Considering it’s a first release, I would bet it will be somewhat limited, but it takes no genius to see the appeal of this: having a single place to manage all your personal finances.

Closing Thoughts

The biggest news of the week is, of course, the Cerebras IPO, which I talk about in excruciating detail below. Beyond that, we’ve seen a lot of “what the world might give us in the future” and not much about the present, aside from a couple of OpenAI releases.

For a week, it seems AI is going back to ignoring the present and trying to imagine an incredible future, the type of stuff you do when proof of reality is not available.

However, personally, I think it’s about time we realize that the size of this industry, and the huge debt and invested capital around it, make all that future talk pointless. We need results now.

For what it’s worth, we’re getting them, but as Anthropic’s latest round shows, just when we thought AI had cracked the revenue code, we see that the corresponding losses accelerate even faster. Not a good sign.

And without further ado, I leave you with my analysis of one of the most fascinating companies, if not the most fascinating, I’ve covered in this newsletter, probably ever.

But is the stock market way too excited? Let’s find out.

For business inquiries, reach out to me at [email protected]