THEWHITEBOX

TLDR;

Welcome back. This week, we have nothing but bangers.

From incredibly insightful research coming from many places (OpenAI, Anthropic, Cursor, or DataBricks), to key things to know about AI markets, including the Allbirds AI pivot that will make the most experienced of you relive the traumas from the dot-com bubble, two new products from Google and Anthropic, and insights into what OpenAI and Google will launch soon.

Enjoy!

MODELS

OpenAI’s GPT-5.4-Cyber

OpenAI has announced a new model, specialized in cyber, a version of GPT-5.4 fine-tuned to be more permissive for defensive cybersecurity work.

The company says the model lowers refusal boundaries for legitimate security tasks and adds capabilities such as binary reverse engineering, allowing professionals to analyze compiled software for malware, vulnerabilities, and security robustness without source code.

Binary reverse engineering is the process of analyzing compiled software (binaries) without access to the original source code to understand its functionality, structure, or behavior.

The crucial thing to outline is that access to the overall program, the Trusted Access for Cyber (TAC) program, will be tiered. This means that the most permissive access, including GPT-5.4-Cyber, will initially be limited to vetted security vendors, organizations, and researchers.

Just like Anthropic did with Mythos, OpenAI is closing down the gates around its new cyber model. But unlike the former, they argue that much of the cyber risk is already present.

TheWhiteBox’s takeaway:

To be clear, this is not an equivalent announcement to Claude Mythos. This is a fine-tuned version of GPT-5.4, specialized in cyber, while Anthropic’s model is so large and knowledgeable that it is incredibly good at cybersecurity.

In other words, one was trained to be excellent at cybersecurity, the other is so large and knowledgeable that it happens to be great at cyber.

This is because models have something known as “emergent capabilities”: capabilities that “emerge” unexpectedly, without the team specifically targeting them, as models get larger.

Instead, OpenAI’s model has been purposefully focused on cyber. It’s not an emergent capability; it was purposefully trained.

Moreover, this lends credence to Anthropic’s fears about Mythos; OpenAI seems to share similar concerns.

I’m still sure there’s a lot of marketing in all of this, but I’m okay with them being cautious; I just hope this doesn’t become an excuse to keep most of society out of the frontier. I truly hope they don’t weaponize fear to close the curtains around them.

RESEARCH

Anthropic’s Autonomous Alignment Researchers

One of the coolest things emerging in 2026 is the idea of autoresearch: having AI models run, validate, and iterate on their own experiments to accelerate scientific research.

Now, Anthropic has released the first (at least that I’m aware of) such study coming from a big AI Lab. And it’s incredibly cool.

The idea they test is whether they can have AI improve our ability to perform weak-to-strong alignment, an experiment where we’re evaluating whether weaker AIs can align (steer/control) stronger models, clearly projecting a future in which humans have to control AI models “smarter” than them.

What’s at stake is clear: if there comes a time when AIs are actually smarter than humans, we need to make sure we can control them.

And… the experiment worked.

Human researchers only managed a 23% recovery of the stronger model’s performance (i.e., on a scale of 0 to 100%, we measure how much of the strong model’s capability survives when it is forced to behave under the directions of the weaker model), meaning that using weaker models to steer smarter ones resulted in much worse performance.

Think of this as having a team of genius humans being run by a very dumb manager; it’s logical that the manager becomes the bottleneck.

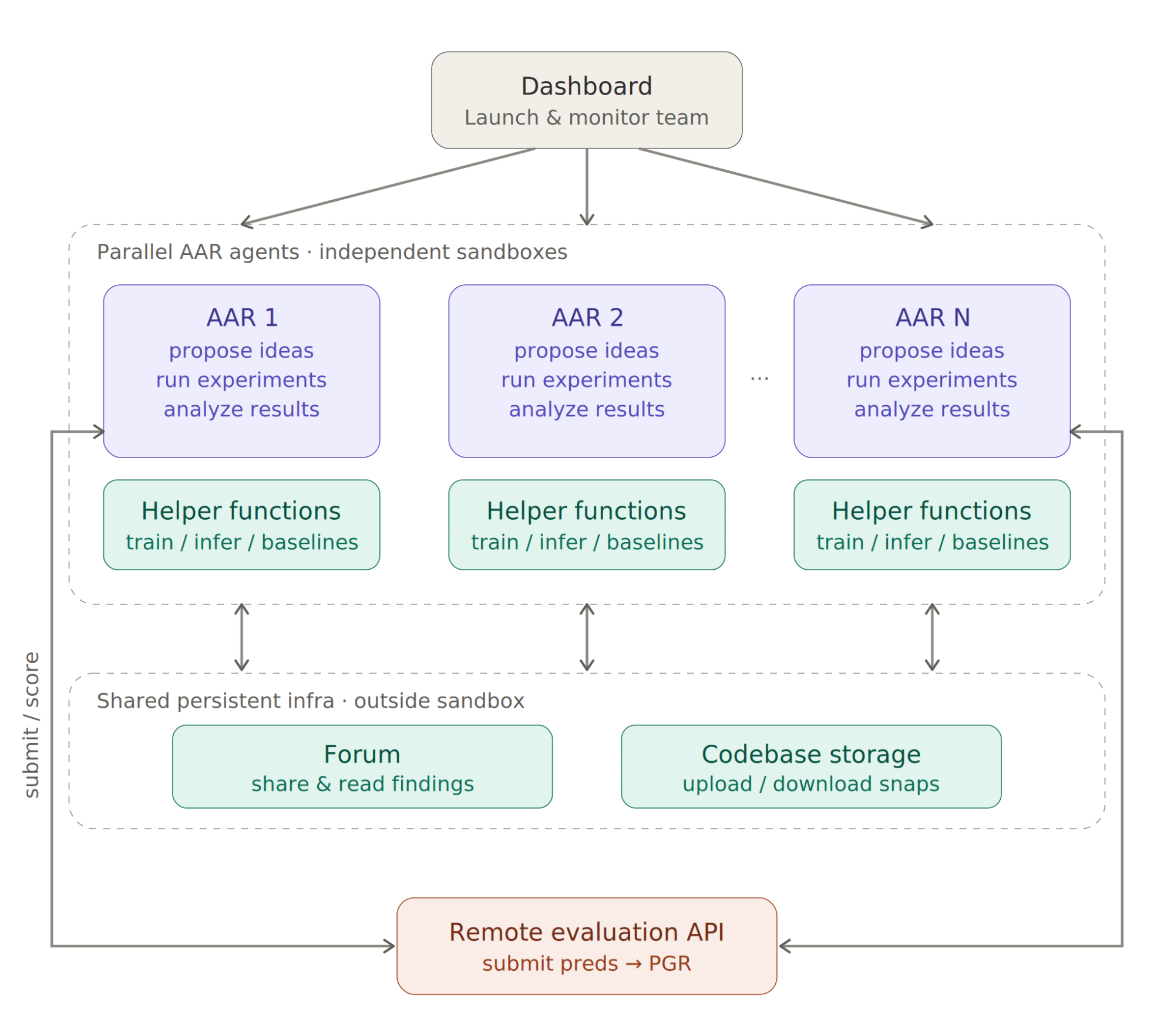

Instead, the AI’s autoresearch method achieves a 93% recovery in roughly 800 hours of testing (across several Claude Opus 4.6 agents deployed in parallel, as shown in the thumbnail), cutting months of human effort to days.

In layman’s terms, the result means that, despite having a weaker model steering, the system manages to retain almost all the performance of the stronger model. In other words, the stronger model doesn’t get “dumbified” by the weaker model and still gets steered by it, opening hopes for a future where we can run superhuman AIs without making them as dumb as us.
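To make the recovery percentage concrete, here is a minimal sketch of how such a score can be computed. It assumes the metric is the “performance gap recovered” commonly used in weak-to-strong work (the summary above doesn’t spell out the exact formula), and the accuracy values are hypothetical, picked only so the outputs match the 23% and 93% figures.

```python
def performance_gap_recovered(weak: float, steered: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-strong gap that the steered strong model recovers.

    0.0 means the strong model is no better than its weak supervisor;
    1.0 means it keeps all of its own ceiling performance.
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong_ceiling must be higher than weak performance")
    return (steered - weak) / gap


# Hypothetical accuracies, for illustration only.
WEAK_ACCURACY = 0.60      # the weak supervisor on its own
STRONG_CEILING = 0.90     # the strong model with ideal supervision

human_baseline = performance_gap_recovered(WEAK_ACCURACY, 0.669, STRONG_CEILING)
autoresearch = performance_gap_recovered(WEAK_ACCURACY, 0.879, STRONG_CEILING)

print(f"human-designed method: {human_baseline:.0%}")
print(f"autoresearch method:   {autoresearch:.0%}")
```

The key design choice is normalizing by the gap rather than reporting raw accuracy: that way the score tells you how much of the strong model’s advantage survives the weak supervisor, regardless of how hard the task is.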

Importantly, the solutions are tested on held-out datasets (to ensure the system works in areas AIs haven’t seen), preventing overfitting (when AIs find solutions that give high scores but are not applicable to new data).

TheWhiteBox’s takeaway:

This type of research puts into perspective the insane potential AI has.

Imagine a future where you have “infinite” compute to test all your hypotheses, doubts, and concerns; a future where you aren’t limited by the number of hours in your days, but the number of agents running experiments for you.

The problem, as you may have guessed by the numbers, is the price. This experiment required $18,000 worth of compute in just 5 days, something totally worth it for Anthropic but completely untenable for most enterprises, let alone consumers.

Ironically, the last thing we need is AIs being able to speed up scientific discovery, but only if you’re filthy rich.

ENGINEERING

Cursor and NVIDIA use AI to Optimize Software

In partnership with NVIDIA, Cursor has used its multi-agent AI system to optimize CUDA Kernels (the compute programs that run on NVIDIA GPUs), achieving incredible results.

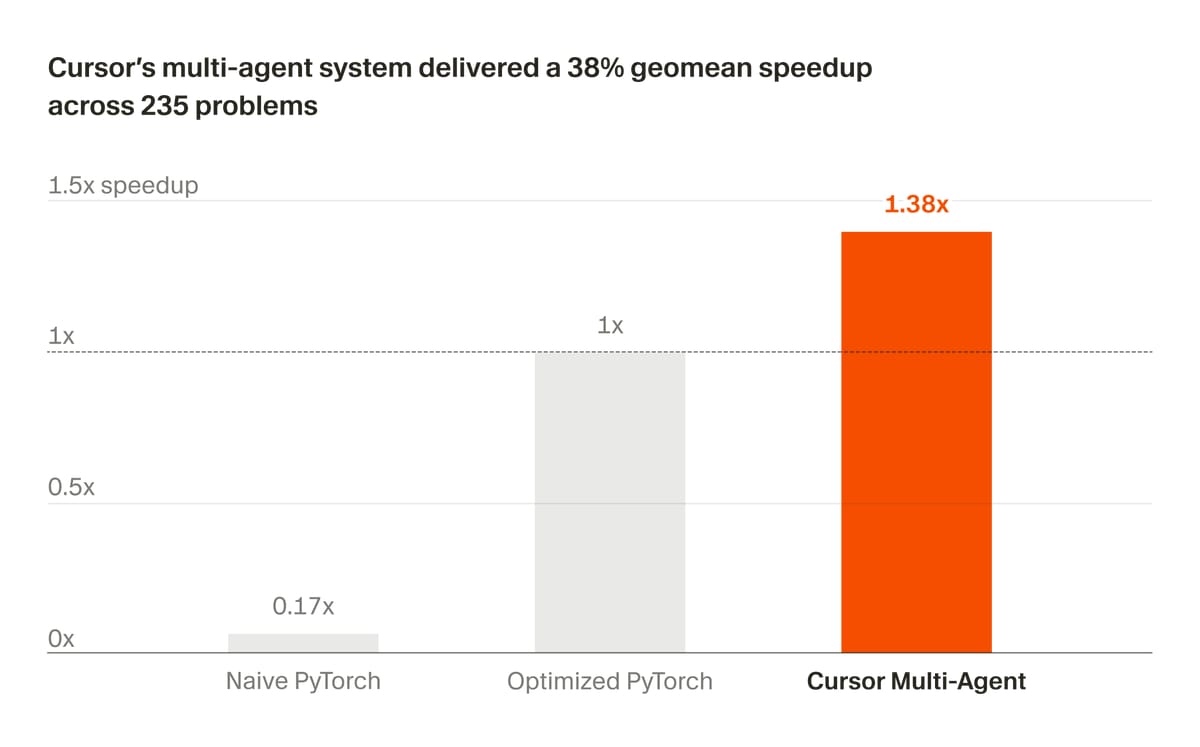

Cursor says it used a multi-agent system to optimize 235 CUDA kernels for NVIDIA Blackwell B200 GPUs, achieving a 38% geometric mean speedup over its baselines in a three-week run.

It says the system outperformed the baseline on 149 of 235 problems, and that 45 problems, or 19%, saw gains above 2x.

According to the post, the system used a planner agent to distribute work across autonomous workers and repeatedly benchmark, debug, and refine kernels without developer intervention.
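If you’re wondering why the headline number is a geometric mean and not a plain average, here’s a minimal sketch. The per-kernel speedups below are hypothetical (Cursor’s raw data isn’t reproduced here); only the 45-of-235 and 149-of-235 counts come from the figures quoted above.

```python
import math


def geometric_mean(values: list[float]) -> float:
    """Geometric mean: the n-th root of the product, computed via logs."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("values must be non-empty and strictly positive")
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical speedups (new speed / baseline speed); 1.0 means no change.
speedups = [0.9, 1.0, 1.2, 1.5, 2.4]

geo = geometric_mean(speedups)
arithmetic = sum(speedups) / len(speedups)
wins = sum(s > 1.0 for s in speedups)      # kernels that beat the baseline
big_wins = sum(s > 2.0 for s in speedups)  # kernels with gains above 2x

print(f"geometric mean speedup: {geo:.2f}x (arithmetic mean: {arithmetic:.2f}x)")
print(f"beat baseline: {wins}/{len(speedups)}, above 2x: {big_wins}/{len(speedups)}")

# The shares reported in the post, recomputed from its own counts.
print(f"149/235 = {149 / 235:.0%}, 45/235 = {45 / 235:.0%}")
```

Speedups are ratios, so they compound multiplicatively; the geometric mean respects that and is less swayed by a handful of huge wins than the arithmetic mean would be.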

TheWhiteBox’s takeaway:

This announcement is the perfect combination of two very hot things in AI:

Multi-agents: The use of several agents working on the same task has become a key driver of progress (although, as I always say, using multi-agents in places that don’t need them is just a waste of money).

Autoresearch: Using AIs to speed up experimentation, as we’ve seen with Anthropic above, is an extremely promising area of progress in this industry, but with a clear ceiling: costs.

The common theme with most of AI progress today is money. If we can barely afford one AI, how are we going to afford 20?

ENTERPRISE

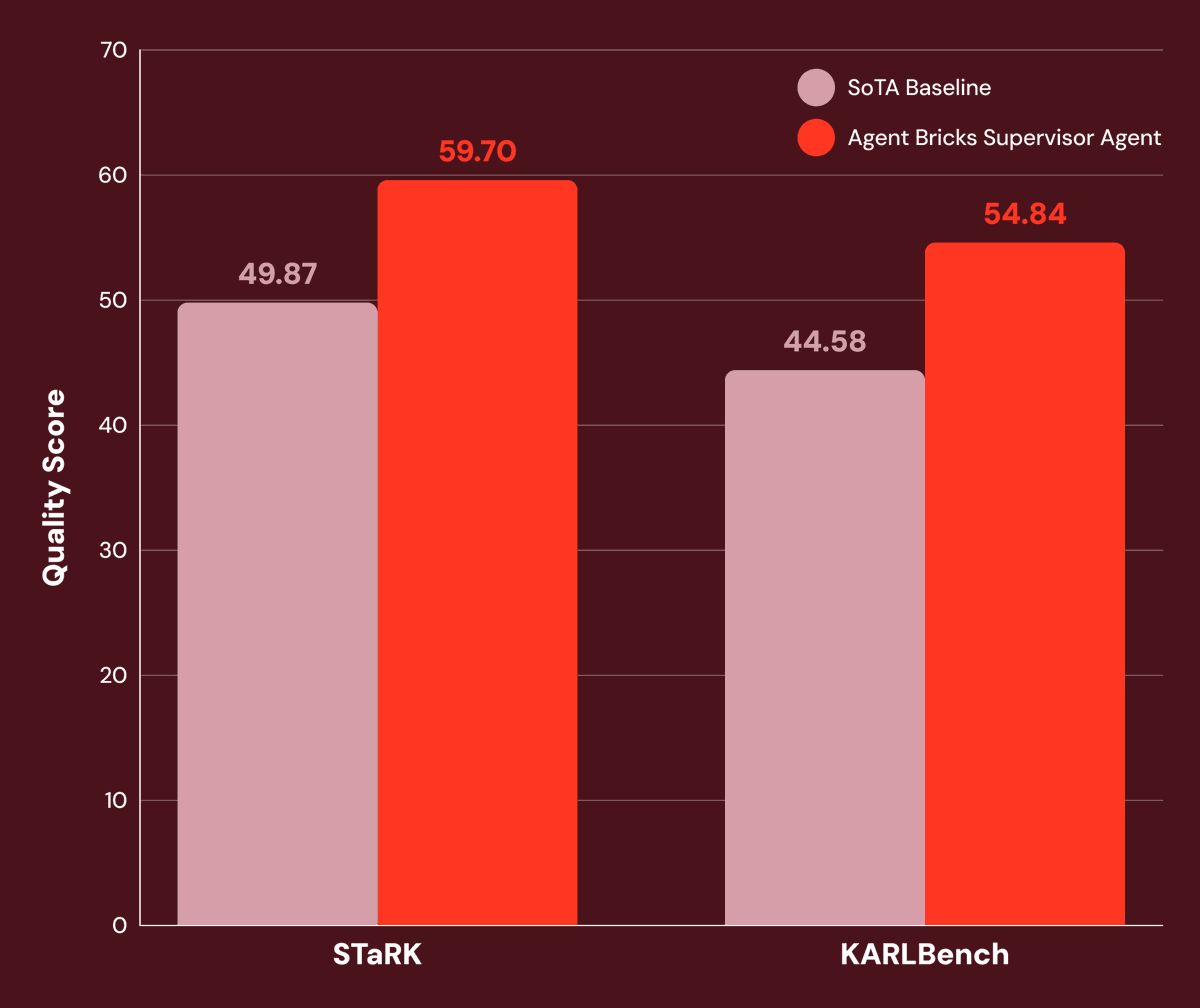

DataBricks Search Agent is SOTA

Two weeks ago, we talked about the Chroma agent, which could execute multi-step research across knowledge bases. At the time, I explained that this was the future of enterprise search, plain and simple.

The reason is obvious: most enterprise data isn’t stored in a single place; it's spread across multiple enterprise sources. Thus, the agent must be able to execute several search steps sequentially until it reaches the solution.

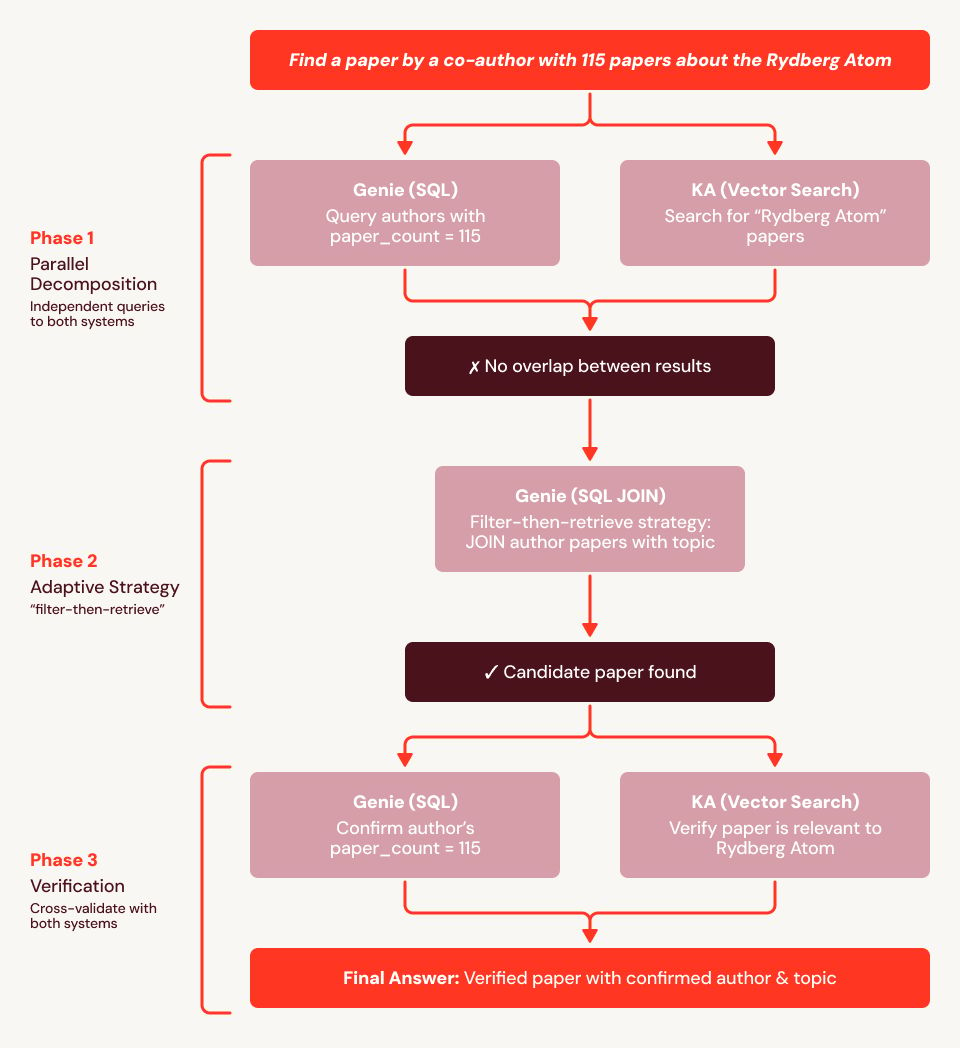

And now, DataBricks has reached SOTA in enterprise search, doing the exact thing. As the example diagram shows, some questions require traversing several databases, and an agent capable of performing that exact reasoning is needed.
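To see why a single lookup isn’t enough, here’s a toy sketch of multi-step search. Everything in it is hypothetical: the two in-memory “databases”, the names, and the fixed plan. It is not how DataBricks’ agent is implemented; in a real agent, the model decides which source to query next instead of following a hard-coded list.

```python
from typing import Callable

# Two separate hypothetical enterprise sources; neither can answer alone.
CRM = {"Acme Corp": {"account_manager": "Dana Lee"}}
HR = {"Dana Lee": {"office": "Madrid"}}


def lookup_account_manager(customer: str) -> str:
    """Step 1: the CRM knows who manages a customer."""
    return CRM[customer]["account_manager"]


def lookup_office(employee: str) -> str:
    """Step 2: the HR system knows where an employee is based."""
    return HR[employee]["office"]


def multi_step_search(
    start: str, steps: list[tuple[str, Callable[[str], str]]]
) -> tuple[str, list[tuple[str, str]]]:
    """Run the steps in order, feeding each result into the next lookup."""
    value = start
    trace = []
    for source_name, step in steps:
        value = step(value)
        trace.append((source_name, value))
    return value, trace


# Question: "Which office is Acme Corp's account manager based in?"
answer, trace = multi_step_search(
    "Acme Corp",
    [("crm", lookup_account_manager), ("hr", lookup_office)],
)
print(answer)
print(trace)
```

The point is the chaining: the answer to the first lookup becomes the query for the second, which is exactly what a one-shot “chat with your data” retrieval can’t do.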

TheWhiteBox’s takeaway:

“Chat with your data” has been the quintessential GenAI use case for enterprises since the dawn of time. But it never actually worked. However, with agents with multi-step search capabilities, you can design solutions that actually get you the responses you’re asking for.

The quiet part of all this is that, in many cases, it actually requires fine-tuning with Reinforcement Learning on your databases, something most enterprises aren’t yet sophisticated enough to execute.

But it’s a matter of time. Not only because it’s a necessary step, but also because it opens the door to using open-source models, which will play a crucial role in cheapening AI costs for enterprises.

BUBBLE

Allbirds Pivots to AI

In one of the craziest turns of late, Allbirds has completely pivoted to AI from a business focused on… trendy shoes.

“The company will initially seek to acquire high-performance, low-latency AI compute hardware and provide access under long-term lease arrangements, meeting customer demand that spot markets and hyperscalers are unable to reliably service,” read a company statement.

Yes, a shoe company is pivoting to AI after announcing a $50 million raise, which, in nominal terms, buys about 1 MW of compute. That is not much, considering that a single Rubin Ultra rack, slated for 2027, will require more than half of that (~600 kW).

The news feels like an April Fool’s Day joke delivered late, but the stock has quadrupled in a day.

TheWhiteBox’s takeaway:

The last time investors saw something like this was probably 26 years ago. Yes, we had seen some weird pivots, like MicroStrategy becoming a Bitcoin holding company, but not a shoe company wanting to provide AI inference services with the equivalent of bread crumbs to show for it.

Wild times.

ENTERPRISE

Diary of a Painful Cost

In a rare sight, Uber’s CTO, Neppalli Naga, has shared quite a lot of information about Uber’s experience with AI tools, particularly Claude Code, leaving us with a striking insight: they’ve burned through their token budget (the amount of usage they expected from Large Language Models (LLMs) for the entire year) in just three months, and are now thinking of ways to expand the budget while also, unsurprisingly, being more efficient about it.

TheWhiteBox’s takeaway:

To be clear, he’s not arguing against the use of this technology. In the same interview, he described his job as: “The vision for me as a CTO is to transform from software engineering to [AI] agent software engineering.”

So he’s not precisely a Luddite. But it’s clear that even the most senior executives are being caught off guard by the increasing costs of this technology.

We’re seeing a clear divergence right now: usage indicates value (they claim 11% of new code is being written by AIs), but it’s extremely hard to measure the return on that usage.

Furthermore, this is just another nail in the coffin of the lie that AI is cheap, something that has been completely debunked. AI is many things; cheap isn’t one.

The solution to this “problem” is not to stop using AI, but to stop approaching this technology naively.

That is, educate your employees on how to use this technology wisely, track budgets clearly, define KPIs to verify success and returns before, not after, you roll things out, and, perhaps more importantly, start developing a hybrid strategy, which I summarise as follows: “open-source where you can, Claude when you must.”

In the same way you don’t need a plane to go to buy your groceries, you don’t need frontier models for most enterprise use cases.
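Here’s a minimal sketch of what “clear budget tracking” plus a hybrid strategy could look like. The class, the model labels, and the token numbers are all hypothetical; the only thing taken from the story above is the pattern of burning a full year’s budget in three months.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Tracks token usage against a yearly allowance."""

    annual_tokens: int
    used_tokens: int = 0

    def record(self, tokens: int) -> None:
        """Add the tokens consumed by one request or one batch."""
        self.used_tokens += tokens

    def projected_annual_use(self, months_elapsed: float) -> float:
        """Extrapolate current usage linearly to a full twelve months."""
        if months_elapsed <= 0:
            raise ValueError("months_elapsed must be positive")
        return self.used_tokens * 12 / months_elapsed

    def overrun_ratio(self, months_elapsed: float) -> float:
        """Projected yearly usage divided by the budget (1.0 = on track)."""
        return self.projected_annual_use(months_elapsed) / self.annual_tokens


def pick_model(task_needs_frontier: bool) -> str:
    """Hybrid routing: open-source by default, frontier only when required."""
    return "frontier-model" if task_needs_frontier else "open-source-model"


# Hypothetical scenario: the whole yearly budget is gone after three months.
budget = TokenBudget(annual_tokens=1_000_000)
budget.record(1_000_000)

print(f"projected overrun: {budget.overrun_ratio(months_elapsed=3):.1f}x the budget")
print(pick_model(task_needs_frontier=False))
```

In practice, the hard part is deciding which tasks really need the frontier model; the flag here just stands in for whatever rule or evaluation you use to make that call.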

PRICING

It has started.

Talking about Anthropic consuming enterprise budgets, it’s going to get worse.

As reported by The Information, Anthropic is turning pricing up a notch for enterprises, charging for AI what it’s really worth. Or, at the very least, moving to usage-based pricing instead of subscriptions.

In particular, Anthropic has changed the pricing for Claude Enterprise so that large business customers now pay a $20-per-user monthly fee plus charges based on the AI compute they actually use. Previously, customers paid a higher flat subscription fee that included a heavily discounted usage allowance.

For now, the change applies to larger customers only, not smaller teams with fewer than 150 users, and some companies may only see the new pricing when they renew their contracts. One licensing adviser The Information spoke with estimates that, for heavy users, costs could double or triple.
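To get a feel for how a bill could double or triple, here’s a rough sketch comparing the two schemes. Only the $20-per-user fee comes from the reporting; the old flat fee, the metered usage per user, and the headcount are hypothetical placeholders, so treat the output as an illustration, not an estimate.

```python
SEAT_FEE_USD = 20.0  # per-user monthly fee under the new scheme (from the report)


def usage_based_bill(users: int, usage_charges_usd: float) -> float:
    """New scheme: a seat fee per user plus whatever compute was actually used."""
    if users < 0 or usage_charges_usd < 0:
        raise ValueError("users and usage charges must be non-negative")
    return users * SEAT_FEE_USD + usage_charges_usd


def flat_bill(users: int, flat_fee_per_user_usd: float) -> float:
    """Old scheme: a higher flat fee per user with usage bundled in."""
    if users < 0 or flat_fee_per_user_usd < 0:
        raise ValueError("users and flat fee must be non-negative")
    return users * flat_fee_per_user_usd


# Hypothetical inputs, for illustration only.
users = 200                      # above the 150-user threshold mentioned above
old_flat_fee_per_user = 100.0    # assumed, not a reported price
metered_usage_per_user = 230.0   # assumed heavy usage, billed at metered rates

old_bill = flat_bill(users, old_flat_fee_per_user)
new_bill = usage_based_bill(users, users * metered_usage_per_user)

print(f"old: ${old_bill:,.0f}/month, new: ${new_bill:,.0f}/month")
print(f"change: {new_bill / old_bill:.1f}x")
```

The seat fee is almost a rounding error here; what moves the bill is the usage that used to be bundled at a discount.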

TheWhiteBox’s takeaway:

When I wrote a piece on Medium a few days ago (it’s free to read, but requires an account) arguing that we were, in fact, getting priced out, some people pushed back, saying I was exaggerating.

Well, it literally took two days to prove my thesis correct.

People take this personally, but it’s just maths, guys. Anthropic may be the first because it’s under the most pressure (it has the least cash and compute of all the major players), but I predict this is something that will catch up to all Labs eventually: huge subscription losses can’t be tolerated forever.

AI will end up being a usage-based pricing technology for heavy use. Light users and consumers will be offered dynamic subscriptions where Labs reserve the right to, if you’ll excuse my words, fuck you in all the ways they want, deprioritizing you, serving you worse models… whatever it takes to serve you the worst possible model without you churning.

The more things worsen, the clearer it is to me that I can’t trust my AI workloads to these companies. In Crypto, there’s the saying “not your keys, not your coins,” which argues for storing crypto in physical wallets under your control.

I now have the equivalent for AI: “not your AI, not your intelligence.” I predict we’re about to see a wave of interest in open-source solutions, and a pretty big one.

FUNDING

Anthropic, Toward an $800 Billion Valuation?

I’m sure you have noticed that this newsletter now talks a lot more about Anthropic than about OpenAI. The reason is not my obsession, but that Anthropic is currently in the spotlight in basically every direction you look; from product, to models, to now, money.

Seeing OpenAI’s $852 billion valuation and Anthropic’s business being basically on par with them at this point, Anthropic’s $350 billion valuation feels weak at best. And many investors seem to think so too, as they are trying to invest in Anthropic at a staggering $800 billion valuation.

TheWhiteBox’s takeaway:

Not much is known about the deal, but there are two things here:

On the one hand, Anthropic has no need to give equity to investors at $800 billion if it’s planning to IPO at $1 trillion in a few months’ time.

On the other hand, Anthropic desperately needs cash to avoid bankruptcy, as it’s losing tons of money on customers while pouring billions into buying compute, so it could be forced into it.

Either way, one thing’s for sure: they are killing it.

CHIPS

Broadcom 🤝 Meta

A month ago, I covered Meta’s new line of chips, called MTIA. Now, they’ve announced Broadcom as their partner, sending Broadcom’s stock upward; it is having a great month so far (up 21% at the time of writing).

The partnership seems to be a pretty big one, as Broadcom will help Meta across most areas of design and manufacturing, including networking, advanced packaging, and even chip design, ensuring Broadcom’s presence in every sense of the word and basically guaranteeing that Broadcom will charge a hefty margin to Meta for its efforts.

By comparison, in Google’s partnership with Broadcom, the former has much greater influence over most decisions, especially in networking. Here, instead, it seems Broadcom will be critical across the entire pipeline.

The relationship is so strong that Hock Tan, Broadcom’s CEO, will join as a member of Meta’s Board of Directors. The deal is for up to 1 GW of MTIA chips.

TheWhiteBox’s takeaway:

I’m liking more and more what I’m seeing from Meta. They do seem to have a solid plan. The business is booming to the point that some expect it will surpass Google in ad revenue for the first time in 2026 (careful, only in ad revenue).

And amongst the Hyperscalers, excluding maybe Google, it has the biggest overlap between AI and its core business, meaning its investment could very well translate into greater revenues.

Put another way, I struggle to see how Amazon is going to get a positive return on spending $200 billion a year on AI CapEx (even accounting for AWS), because, guess what, AI inference, which is where these companies hope to make money one day, isn’t precisely a profitable business today. Meta, by contrast, already claims to be getting a positive return on its AI investments.

In their case, it’s mostly AI for ad targeting rather than chatbot-based revenue, meaning they are using AI to understand us better and know which ads to show us.

Their AI game seems to have gotten better after the massive organizational reshuffle, with its latest model release, Muse Spark, which, although clearly not frontier, seems good enough to be offered to Meta’s base of 3.5 billion active users (and guess what, that may be enough).

Additionally, and this is largely ignored most of the time, they have what’s probably the second-largest fleet of AI accelerators, second only to Google, and the most experience handling huge distributed workloads (again, alongside Google), which gives them an edge when it comes to offering AI at scale and reliably.

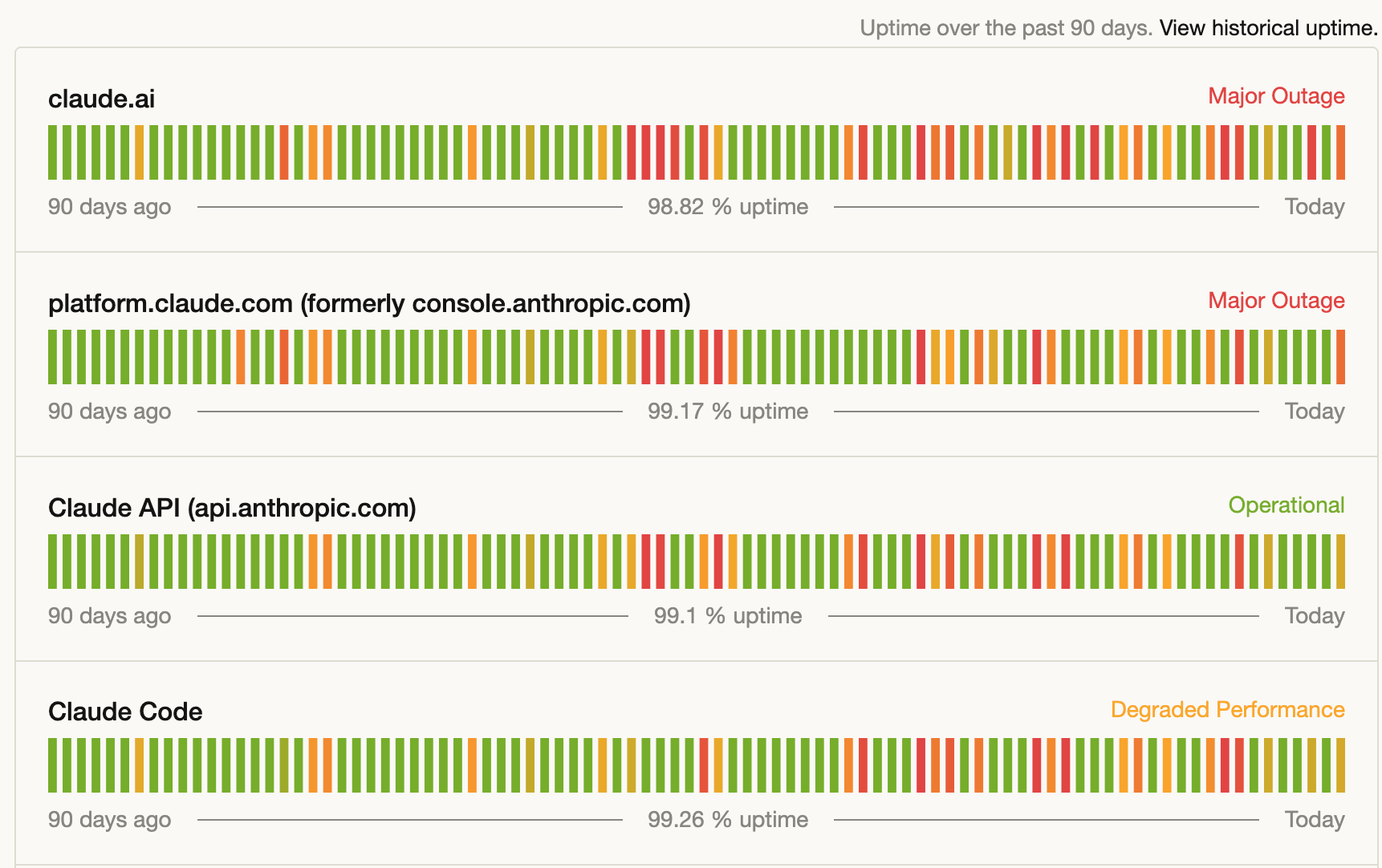

Most people take this for granted, but just ask Anthropic if you should:

Anthropic is struggling to keep uptimes stable, and that’s an understatement

I’m not an investor today, but I can’t deny that they have a lot going for them.

BROWSER AND SUPERAPPS

Codex and Atlas, Soon Merged

You may not remember this, but not long ago, OpenAI released a browser called Atlas. The product was a massive flop, but it’s not like OpenAI is going to let that effort die.

And now, based on recent leaked data, we know they are about to merge the Codex and Atlas apps into a single app.

This means you will be able to browse the Internet inside Codex. However, that’s not the point; the point is to allow developers to test their own creations inside the app (it’s unclear to me whether this will include backend support, too, like Docker).

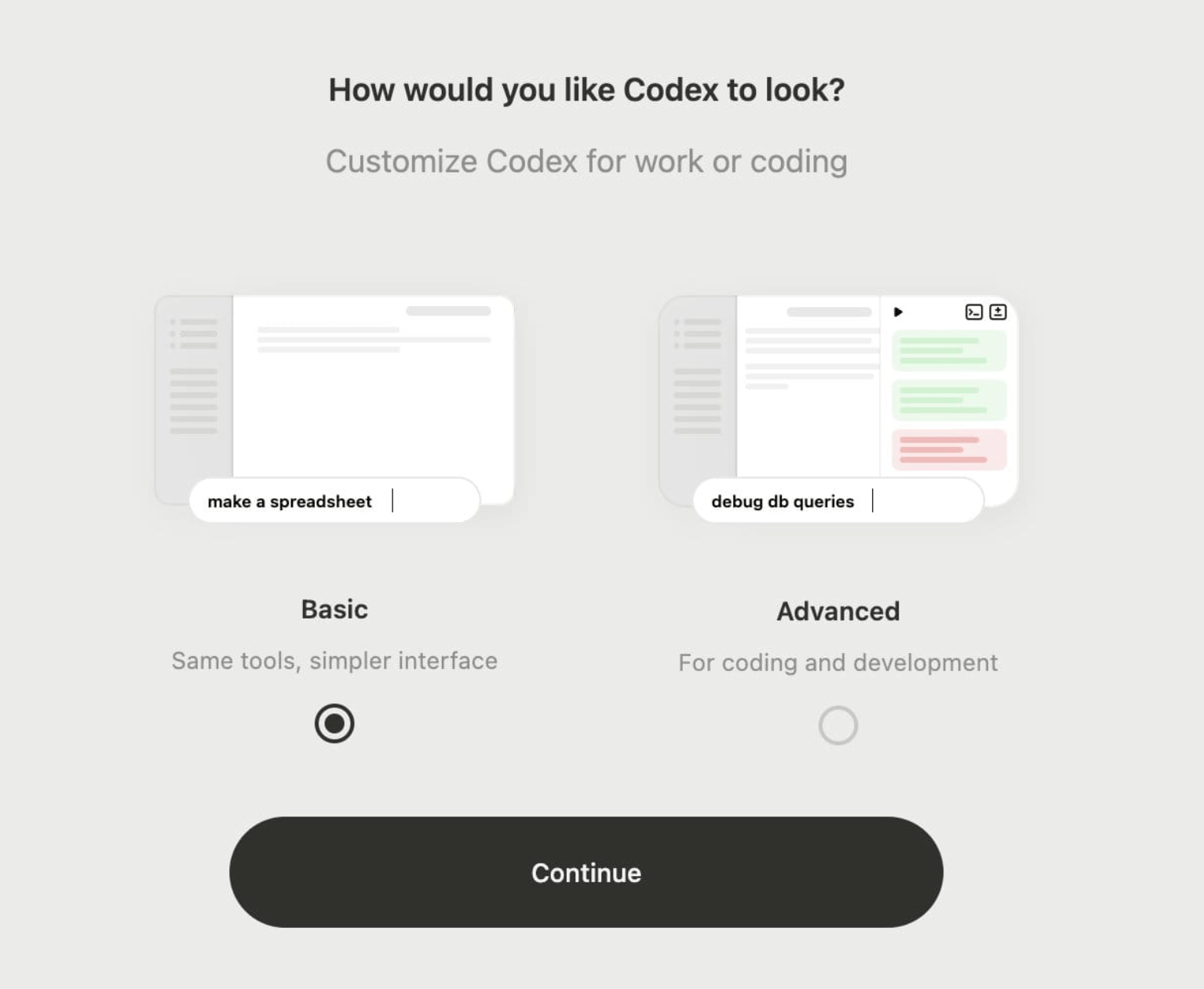

Alongside this clearly technically oriented improvement, as you can see in the thumbnail, they are also trying to appeal to laymen, planning to release two completely distinct experiences in Codex, ‘Basic’ and ‘Professional’, so that people trying to use this technology more in the style of Claude Cowork (to be more productive with knowledge work tools) and those wanting to use these tools to code can coexist.

This adds to another upcoming release of Codex Scratchpad, a feature that lets users assign work to agents in a to-do list form.

TheWhiteBox’s takeaway:

OpenAI has clearly embraced the idea of the ‘superapp,’ an app that offers everything to everyone. However, it’s clear that its focus is enterprise; whether you're appealing to a business analyst or to a software developer, the idea is to clearly productivize work, and consumer users are going to get more and more deprioritized.

AGENTS

Google’s Gemini Cowork, Around the Corner

We are all building the same things over and over again, aren’t we? Google is preparing the launch of an agent to compete with Claude Cowork.

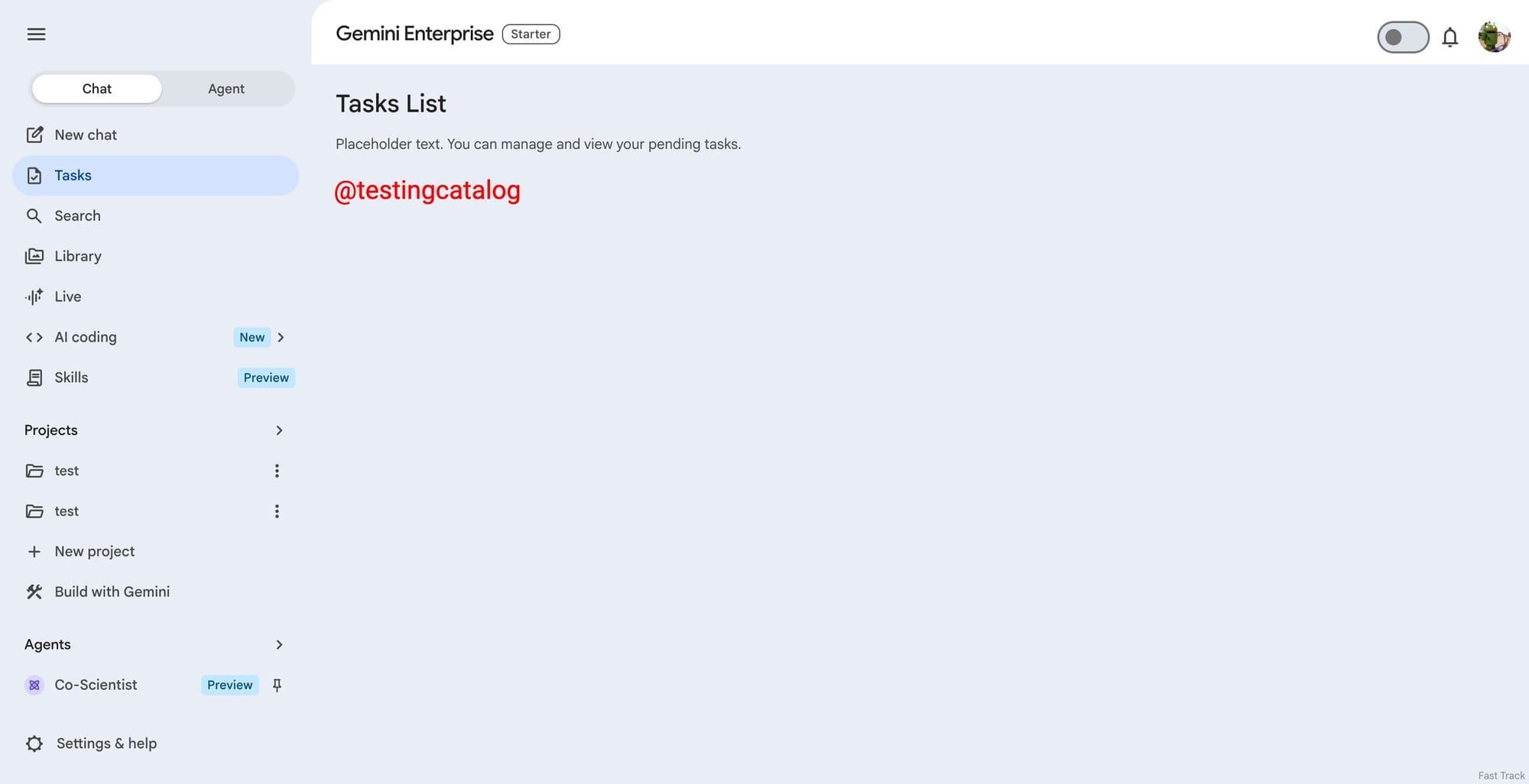

TestingCatalog reports that Google is developing a new agent-style workspace inside Gemini Enterprise, with an “Agent” tab that includes “New Task” and “Inbox.”

The reported interface includes a chat area plus controls for setting a goal, selecting agents, connecting apps, adding files, and requiring human review before actions are taken.

It’s Claude Cowork with Google branding, basically.

TheWhiteBox’s takeaway:

As with everybody else, Google is moving Gemini beyond a standard chatbot toward a system that can manage multi-step tasks using tools and connected services.

Everyone is aiming for the same form factor: a declarative paradigm where users declare, and AIs do.

I predict this will be the biggest announcement at Google I/O coming next month, and the key thing going for Google is that they have by far (it’s not even close, actually) the biggest distribution amongst the Big AI Three (OpenAI, Anthropic, Google) as they can instantly make their agent available to billions of users, both enterprise (which seems to be the focus right now) and consumer (mostly via Chrome and Google Search).

Now, as always with Google, the question is whether they’ll execute.

AGENTS

Google Adds Skills to Chrome

Continuing with Google, besides finally launching a Mac desktop app, it has launched Skills for Chrome, a new feature that lets you store certain prompts and interactions.

I’ve talked about skills a lot in this newsletter, the idea that you can “train” your AI to behave in a certain way without training at all; just giving it a set of instructions that modify the model’s behavior and even teach it new skills (hence the name).

And, thus, in yet another clear step toward turning Chrome into a fully agentic experience, they have added skills to the app. Now, your successful interactions with Gemini can be stored as reusable ‘skills’. They are also offering a library of pre-created skills.

TheWhiteBox’s takeaway:

When I first wrote about Google two years ago, I described “Gemini for everything” as the clear bet the company was making. At the time, most people didn’t believe it, and the stock was the ugly duckling of tech.

Today, it’s the second-largest company by market cap, the company with the largest customer base of Generative AI (most Meta users don’t use GenAI features at all), and the one with, by far, the largest amount of compute and great models (although the latter are not quite at the same level as Anthropic and OpenAI’s flagships).

If they fix the AI issues, which are perfectly solvable, they are running away with it, and there’s nothing anyone can do about it.

To be clear, I am a Google shareholder, so I’m, of course, interested in my own thoughts being true. Therefore, take my optimism with care.

AGENTS

Anthropic Continues Massive Shipping

This AI Lab just can’t stop releasing new stuff. A few hours apart, they have announced two new features:

A complete redesign of their Claude Code Desktop product, leaving the CLI interface to more geeky customers and appealing more to laymen.

Claude Code Routines, a way for you to define what are basically cron jobs that run agents you configure at your desired time and frequency.

You really can’t keep up with how many things these guys are releasing.

TheWhiteBox’s takeaway:

The rate is so impressive it feels hard to believe. In 2026 alone, they have made 28 product releases so far. Yes, 28, which raises the question:

Is this massive shipping frequency we’re seeing from Anthropic the first real proof of the ‘AI flywheel’?

Is this what it looks like when a company has AI embedded into its development to such an extent that they outclass everyone else simply by AI superiority?

Maybe I’m exaggerating, but how do you explain this velocity otherwise?

Closing Thoughts

In a week where we saw two of AI’s most hyped research avenues, multi-agents and autoresearch, making undeniable progress, it’s crazy to me that the most surprising news was a shoe company pivoting to AI.

But this is what the industry gave us. AI is many things, but boring is not one of them.

Beyond that, we saw product insights once again proving to us that everyone is building the same damn thing: knowledge agents, coding agents, skills for Chrome… all are announcements pointing in the same direction: the declarative paradigm I’ve been calling since… ever.

Hopefully, AI’s clearest contribution will be to build a digital future where humans can stop living in it and instead enjoy the real world. Let agents do the dirty work for us.

That would be a vision I would stand for.

However, instead, we are being told AI is going to destroy our lives. No wonder some people have gotten so freaked out that they've attacked Sam Altman.

There’s nothing positive about attacking someone, but I hope it at least serves as a wake-up call for AI incumbents to rethink their PR strategy. Maybe building something while telling the world that something is going to destroy their livelihood isn’t a good idea after all.

